Social media platforms are in a perpetual war against 'harmful' content and are always looking for new ways to keep their users safe.

Instagram is the latest social media platform to launch an attack on potentially harmful content, all in the name of keeping its user base safe. Instagram's AI will now comb through the caption of every image uploaded to its servers and determine whether the caption the user chose is potentially harmful.

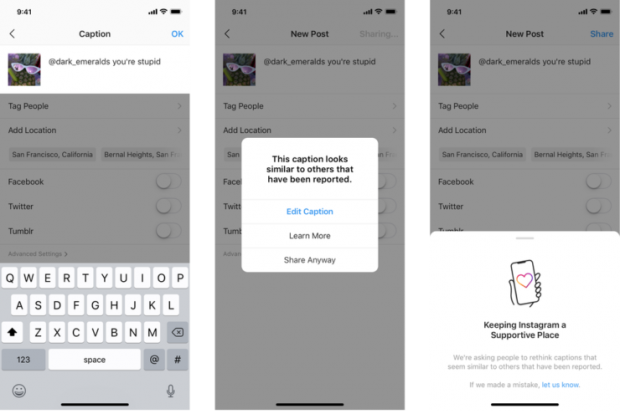

How does this work? Instagram's AI takes a user's caption and compares it to a log of previously flagged 'harmful' comments. If the caption is similar to those comments, the AI sends the user a push notification about the caption. Basically, Instagram's AI is fed data (flagged comments) and uses that data as a benchmark for what is 'offensive' or potentially 'harmful'. If a caption comes close to that benchmark, a warning goes out to the user who has crossed the line.
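
To make the idea concrete, here is a toy sketch of that compare-against-a-benchmark approach. Instagram has not published how its system works, so everything here is an assumption for illustration: the `FLAGGED_COMMENTS` list, the word-overlap (Jaccard) similarity, the `should_warn` function, and the `0.5` threshold are all hypothetical, and the real system is almost certainly a trained model rather than simple word matching.

```python
import re

# Hypothetical stand-ins for the log of previously flagged comments.
FLAGGED_COMMENTS = [
    "you are so stupid",
    "nobody likes you",
]


def tokens(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def similarity(a, b):
    """Jaccard similarity: shared words divided by all distinct words."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def should_warn(caption, flagged=FLAGGED_COMMENTS, threshold=0.5):
    """Return True if the caption is close enough to any flagged comment."""
    return any(similarity(caption, comment) >= threshold for comment in flagged)


if __name__ == "__main__":
    for caption in ["You are so stupid!", "Sunset at the beach"]:
        verdict = "warn" if should_warn(caption) else "ok"
        print(f"{caption!r}: {verdict}")
```

In this sketch the threshold decides how close is "too close". Set it low enough and a caption that merely contains one word from a flagged comment will trigger a warning, whatever the context.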

From the above screenshot, we can see that the word 'stupid' seems to have triggered Instagram's AI, which isn't a great start considering the word can be used in many contexts that most people wouldn't find offensive. This raises the question: how is Instagram's AI going to know what is legitimately offensive and what is just people joking around?