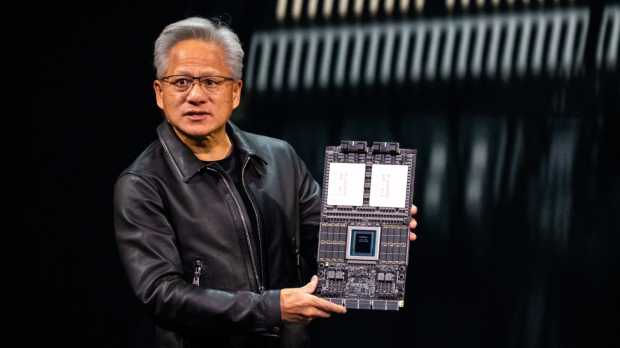

NVIDIA is reportedly running into manufacturing challenges with its next-generation Rubin Ultra GPU and is now considering a significant architectural revision. The standard Rubin, built for large-scale AI training, is on track to begin mass shipments this summer. Even before that rollout, reports about the more advanced Rubin Ultra are starting to surface, and its current roadmap may be hitting some real technological walls.

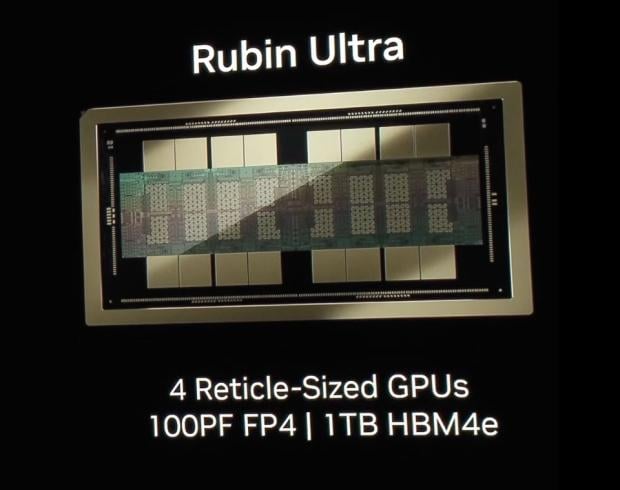

To understand the problem, it helps to know what Rubin Ultra was originally planned to be. The standard Rubin uses a dual-die architecture, meaning two silicon chips packaged together into one unit. Rubin Ultra was meant to take that further with a four-die setup, essentially doubling the base design into a much larger package. However, those ambitions may have pushed TSMC's advanced packaging technology past its practical limits.

Reports from Taiwan's Commercial Times suggest that NVIDIA may scale back the Rubin Ultra to a dual-die design, similar to the standard Rubin. The original design reportedly included 16 HBM4 memory stacks, around 1 TB of memory capacity, and CoWoS-L packaging.

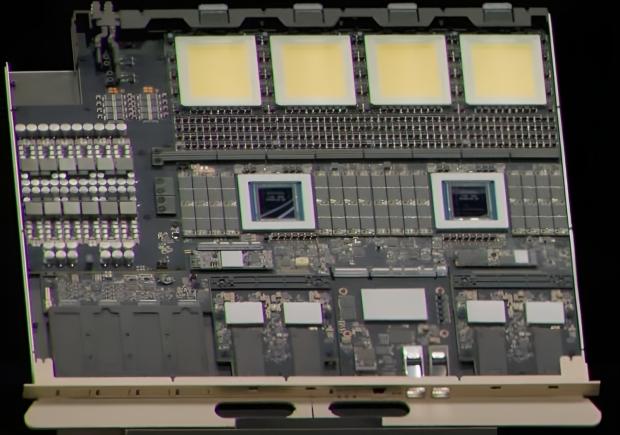

In a typical CoWoS package, TSMC combines multiple dies and HBM memory stacks into one unified structure. For Rubin Ultra, NVIDIA planned to use CoWoS-L, but scaling it up to a four-die configuration is reportedly causing warping issues, with thermal and structural stresses bending the package in multiple directions. That warping reportedly prevents the compute dies from maintaining proper contact with the underlying substrate.

To work around this, NVIDIA is expected to shift back to a dual-die configuration while preserving overall compute performance through board-level design instead. Rather than cramming four dies into a single package, Rubin Ultra would use a 2+2 arrangement: two dual-die packages placed side by side on a board. The practical upside is that, on paper, total compute performance and HBM capacity would remain the same, but the system would be significantly easier to manufacture and scale in a data center environment.
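The "same on paper" claim comes down to simple arithmetic, which the rough Python sketch below illustrates. It uses the reported figures of four dies, 16 HBM stacks, and roughly 1 TB of memory. The even split of 8 stacks per dual-die package and the resulting 64 GB per stack are assumptions made for illustration, not figures from the report, and the `Package` class and `totals` helper are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Package:
    """One GPU package: compute dies plus attached HBM stacks (illustrative only)."""
    dies: int
    hbm_stacks: int
    hbm_gb_per_stack: int

    @property
    def hbm_gb(self) -> int:
        return self.hbm_stacks * self.hbm_gb_per_stack


# Assumption: ~1 TB is treated as 1024 GB spread evenly over 16 stacks.
GB_PER_STACK = 1024 // 16  # 64 GB per stack

# Originally reported plan: one package with four dies and 16 stacks.
original = [Package(dies=4, hbm_stacks=16, hbm_gb_per_stack=GB_PER_STACK)]

# Reported revision: a 2+2 arrangement. The even 8-stack split is an assumption.
revised = [Package(dies=2, hbm_stacks=8, hbm_gb_per_stack=GB_PER_STACK)] * 2


def totals(packages):
    """Return (total dies, total HBM stacks, total HBM capacity in GB)."""
    return (
        sum(p.dies for p in packages),
        sum(p.hbm_stacks for p in packages),
        sum(p.hbm_gb for p in packages),
    )


print("original:", totals(original))
print("revised: ", totals(revised))
```

Under these assumptions both layouts add up to the same die count, stack count, and memory capacity. What changes is the size of each individual package, which is the part that reportedly runs into warping.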

There is another option on the table. NVIDIA could move toward TSMC's CoPoS packaging, which stands for Chip-on-Panel-on-Substrate, a newer approach designed to support larger AI accelerator designs. The catch is that CoPoS isn't expected to reach mass production until late 2028 at the earliest, making it unlikely to have any impact on Rubin Ultra's 2027 target.

Despite the design revision, Rubin Ultra's final performance specifications are expected to remain unchanged. Questions around thermal management and physical rack-level integration remain open as NVIDIA continues pushing the boundaries of what AI GPU hardware can look like.