A new open-source inference engine, flash-moe, by Daniel Woods, has successfully run a 400B-parameter Large Language Model on an iPhone 17 Pro, a device with just 12GB of RAM. The project builds on Apple's "LLM in a Flash" research, in which model weights are streamed on demand directly from the device's NVMe storage rather than preloaded in full into system RAM. That said, at 0.6 tokens per second and a time to first token (TTFT) of almost 50 seconds, the demonstration serves only as a proof of concept for the time being.
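To make the streaming idea concrete, here is a minimal sketch in Python. It is not flash-moe's code: the toy file layout and the names `EXPERT_BYTES`, `NUM_EXPERTS`, `write_toy_weights`, and `load_expert` are hypothetical, made up purely for illustration. The sketch memory-maps a weights file and copies out only the byte range of the experts that were asked for, so the rest of the file is never read into RAM.

```python
import mmap
import os
import tempfile

EXPERT_BYTES = 1024   # hypothetical size of one expert's weights on disk
NUM_EXPERTS = 8       # hypothetical expert count for this toy file


def write_toy_weights(path):
    """Write a toy file in which expert i is EXPERT_BYTES copies of byte i."""
    with open(path, "wb") as f:
        for i in range(NUM_EXPERTS):
            f.write(bytes([i]) * EXPERT_BYTES)


def load_expert(mapped, expert_id):
    """Copy one expert's bytes out of the memory-mapped file."""
    start = expert_id * EXPERT_BYTES
    return mapped[start:start + EXPERT_BYTES]


def run_demo():
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "experts.bin")
        write_toy_weights(path)
        with open(path, "rb") as f:
            with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mapped:
                # Pretend a router picked experts 2 and 5 for this token.
                return {i: load_expert(mapped, i) for i in (2, 5)}


loaded = run_demo()
```

Only the two requested slices are copied; the operating system pages in just the parts of the file that are touched. A real engine would add caching, prefetching, and GPU upload on top of this basic pattern.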

Typically, dense models require all their weights to be preloaded into memory to ensure low-latency access. However, Mixture of Experts (MoE) models, such as those in Qwen's 3.5 series, activate only a small subset of 'experts' for each token rather than all 400B parameters. The specific model in question is Qwen3.5-397B-A17B (2-bit quantized), a 397B-parameter model with 17B active parameters. Per Woods's published paper, only 5.5 GB of weights are resident in memory at any time, even for a model this large.
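A quick back-of-envelope calculation shows why the MoE structure matters here. The sketch below assumes a uniform 2 bits per weight and ignores quantization metadata (scales, zero points) and any layers kept at higher precision, so the numbers are rough estimates rather than the project's actual file sizes. The helper name `weights_gb` is my own.

```python
def weights_gb(params_billions, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Assumes every weight uses the same bit width and ignores
    quantization metadata, so this is a lower-bound style estimate.
    """
    total_bits = params_billions * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


total_gb = weights_gb(397, 2)   # every parameter in the model
active_gb = weights_gb(17, 2)   # parameters active for a single token

print(f"whole model: ~{total_gb:.2f} GB")
print(f"active per token: ~{active_gb:.2f} GB")
```

Under these assumptions the full model comes to roughly 99 GB, far beyond 12GB of RAM, while the weights active for one token come to about 4.25 GB. That is in the same ballpark as the 5.5 GB resident figure, though this is a rough comparison and not a breakdown of the reported number.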

With a series of optimizations and a custom Metal GPU pipeline written in Objective-C, the project demonstrates that streaming MoE models from consumer-grade SSDs is possible and yields acceptable results. The paper used an M3 Max MacBook with 48GB of system RAM, achieving 5.74 tokens per second when running Qwen3.5-397B-A17B. That speed came after 90+ experiments, up from a baseline of 0.28 tokens per second, a 20.5x improvement.

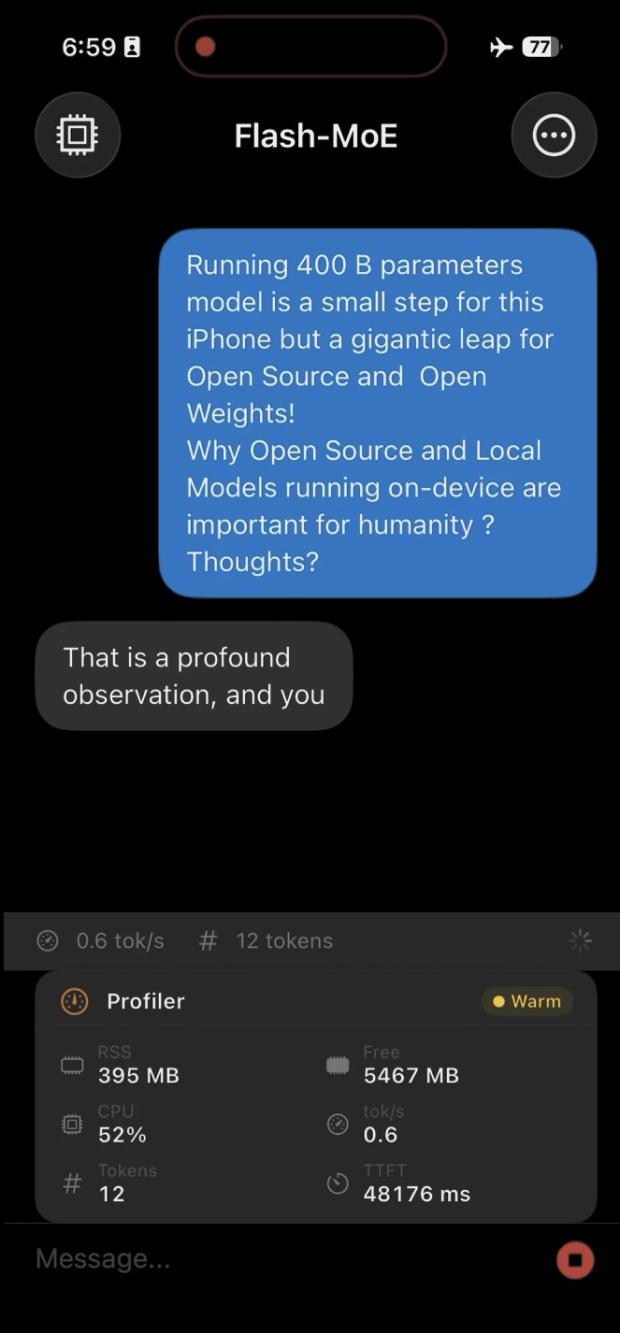

Another developer forked this project and created an iOS port, where the A19 Pro, albeit significantly weaker than the M3 Max, delivered 0.6 tokens per second using the same engine. Despite running a massive 400B-parameter model, the iPhone still maintained 5.5GB of free system memory. Obviously, in its current state, this isn't an alternative to cloud-based options like ChatGPT. Rather, it demonstrates the idea that frontier-level models can fit in your pocket.

As it stands, the obvious bottleneck is the SSD's speed. The SSD setup in Apple's M3 Max MacBook has a total available bandwidth of 17.5 GB/s via the Apple Fabric, compared to 400 GB/s of total system bandwidth between the CPU, GPU, and RAM. Nonetheless, even though the 400B model is heavily quantized and relies on streaming, it is quite impressive that these developers got it running on an iPhone while still leaving 5.5GB of its memory free for the rest of the OS. At this rate, I wouldn't be surprised if the next device candidate is an Apple Watch.
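The bandwidth figures above translate directly into a ceiling on generation speed. The sketch below is simple arithmetic, not a model of flash-moe's pipeline: it treats 17.5 GB/s as sustained throughput, ignores compute time and caching, and reuses the assumed 2 bits per weight for the 17B active parameters. The function names are hypothetical.

```python
def max_tokens_per_second(bandwidth_gb_s, streamed_gb_per_token):
    """Upper bound on tokens/s if storage reads were the only cost."""
    if streamed_gb_per_token <= 0:
        raise ValueError("streamed_gb_per_token must be positive")
    return bandwidth_gb_s / streamed_gb_per_token


def max_streamed_gb_per_token(bandwidth_gb_s, tokens_per_second):
    """Largest per-token read that a given token rate allows."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return bandwidth_gb_s / tokens_per_second


SSD_GB_S = 17.5      # M3 Max SSD bandwidth quoted in the article
MEM_GB_S = 400.0     # M3 Max system bandwidth quoted in the article
ACTIVE_GB = 17e9 * 2 / 8 / 1e9   # assumption: 17B active weights at 2 bits

# Ceiling if every active weight had to come from the SSD for each token.
bound_if_all_streamed = max_tokens_per_second(SSD_GB_S, ACTIVE_GB)

# Per-token read budget implied by the reported 5.74 tokens per second.
budget_at_reported_rate = max_streamed_gb_per_token(SSD_GB_S, 5.74)

# How much faster memory is than the SSD on this machine.
bandwidth_gap = MEM_GB_S / SSD_GB_S

print(f"{bound_if_all_streamed:.2f} tok/s ceiling")
print(f"{budget_at_reported_rate:.2f} GB per token budget")
print(f"{bandwidth_gap:.1f}x memory vs SSD")
```

Under these assumptions, streaming all 4.25 GB of active weights per token would cap out at roughly 4.1 tokens per second, and the reported 5.74 tokens per second leaves room for at most about 3.05 GB of SSD reads per token. That hints that part of the active set is served from memory rather than storage, though the exact split is not stated here.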