NVIDIA is reportedly acquiring hundreds of thousands of LPDDR-based SOCAMM memory modules for use in its future AI products, with demand for next-gen SOCAMM 2 memory expected to boom in the years ahead.

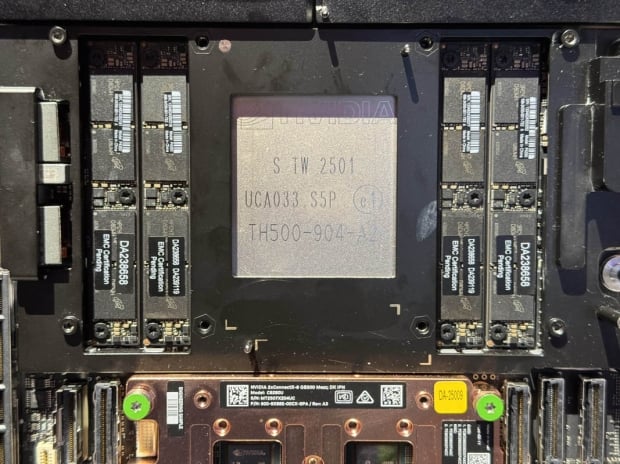

At its GTC (GPU Technology Conference) event earlier this year, the company showcased SOCAMM memory, highlighting its superior performance and lower power consumption for AI products. NVIDIA's new GB300 AI platform uses SOCAMM memory developed by Micron. SOCAMM is very different from the HBM and LPDDR5X memory used in current AI products, including servers and mobile platforms.

SOCAMM memory is based on LPDDR DRAM, which is traditionally used in mobile and low-power devices. Unlike HBM and LPDDR5X memory, however, SOCAMM is upgradable: it is not soldered onto the PCB, and can be secured with just three screws.

According to a new report from Korean media outlet ETNews, NVIDIA is set to acquire between 600,000 and 800,000 units of the new SOCAMM memory this year, with production of the new memory standard ramping up for deployment across NVIDIA's family of AI products.

NVIDIA's new GB300 AI server platform is one of the first uses of SOCAMM memory, and the company is set to move to the new memory standard for its future AI products. Even at up to 800,000 units, the volume of LPDDR-based SOCAMM memory is lower than the amount of HBM memory that NVIDIA's memory partners will supply for its products in 2025, but there are plans to scale this up big time with next-gen SOCAMM 2 memory in 2026 and beyond.

SOCAMM uses a custom form factor that is not just compact and modular, but also far more power efficient than RDIMM memory. We should also expect SOCAMM to deliver more bandwidth than RDIMM, LPDDR5X, and LPCAMM memory.

SOCAMM modules offer around 150GB/sec to 250GB/sec of memory bandwidth and are swappable, making SOCAMM a great option for AI PCs and AI servers, as they can be upgraded with ease. SOCAMM memory is expected to become the new standard for low-power AI devices. Micron is the current manufacturer of SOCAMM memory for NVIDIA, but Samsung and SK hynix are reportedly in discussions with NVIDIA to make the new SOCAMM modules for the company, too.