Introduction

Seagate's 600 Pro is marketed as a light enterprise solid state storage solution. Because of its enterprise pedigree, a 600 Series Pro SSD is a drive that has superior endurance and better heavy duty write performance than typical consumer based SSDs. In addition, the 600 Series Pro has on-drive power loss protection via integrated tantalum capacitors.

Seagate has tapped Link A Media's (LAMD) venerable LM87800 controller to power their third generation SSDs. Seagate collaborated with LAMD to develop the LM87800, and the controller's enterprise pedigree makes it a logical choice for the 600 Pro.

Because the 600 Pro is designed to handle enterprise workloads, utilization of high quality flash is essential. Seagate utilizes 19nm Toshiba toggle mode flash in BGA packages on their third generation SSDs. Utilization of premium flash and a healthy dose of over-provisioning produces a drive with superior write endurance, i.e. enterprise class endurance.

Seagate doesn't make the components utilized in the 600 Series Pro, but nevertheless, the Seagate 600 Series SSDs are powered by Seagate. What makes the 600 Series tick is Seagate's in-house custom firmware. Writing their own firmware allows Seagate to tune their third generation SSDs' performance for the intended market, whether it be consumer or enterprise.

An enterprise focused SSD typically makes for a superior enthusiast class SSD. Problem is, typically enterprise class SSDs are priced out of the enthusiast market. We consider Seagate's 600 Pro a hybrid of sorts because it's one of the only true enterprise class SSDs that's not priced out of the enthusiast market.

Until now, it's been hard-to-impossible for us to visually quantify in our reviews what advantages over-provisioning and enterprise tuned firmware really have to offer an enthusiast/professional. We know that endurance can be increased dramatically by heavy over-provisioning; we know this because we can see that for ourselves by taking a look at the TBW a drive is warrantied for. We can now show you exactly how much better a drive that is heavily over-provisioned will perform in the most demanding environments.

Our senior storage editor, Chris Ramseyer, recently introduced our readers to a new benchmark created by Futuremark. PCMark 8's command-line-executed consistency test, really for the first time, allows us to see pronounced differences in real-world performance between SSDs. Futuremark's consistency test spits out over 3500 lines of data. This data, when properly analyzed, can shed new light on performance, even allowing us to see exactly what over-provisioning brings to the table.

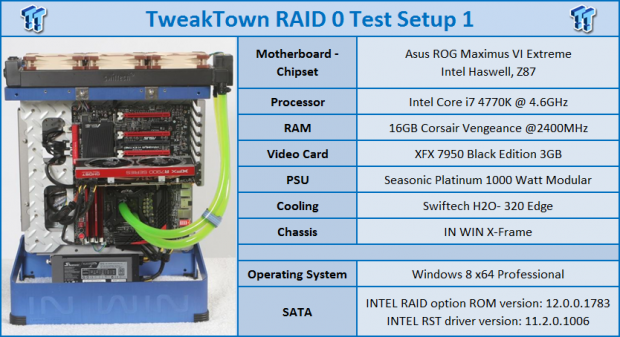

We already showed you what kind of drive scaling you can expect from native Intel 6Gb/s ports in our recent six drive Seagate 600 Pro 200GB RAID Report; today we are going to focus on how a two drive Seagate 600 Pro 200GB array stacks up against the competition. We will be utilizing our Test Setup 1 bench featuring the superior performance of our ASUS Maximus VI Extreme board. All our two drive array RAID Reports, with the exception of mSATA, are run on our ASUS board.

Specifications and Pricing, Drive Details & Test System Setup

Specifications and Pricing

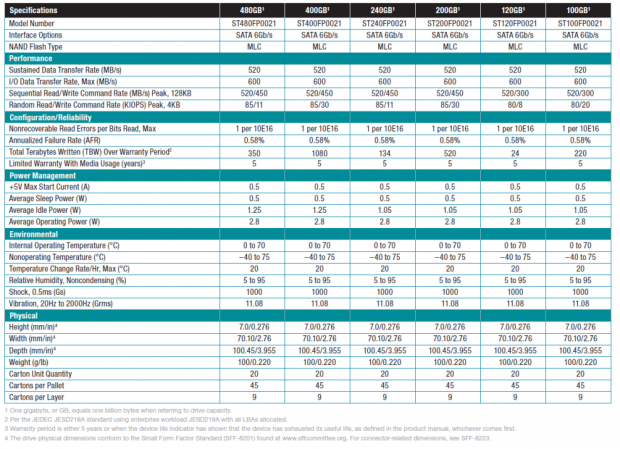

Seagate's 600 Pro SATA III SSD is available in 6 capacity sizes: 100GB, 120GB, 200GB, 240GB, 400GB, and 480GB. The 100GB, 200GB, and 400GB variants have far greater endurance ratings than the 120GB, 240GB, and 480GB variants. Specifications list the 200GB 600 Series Pro SSD as capable of 520MB/s sequential reads and 450MB/s sequential writes. Random read/write speed is listed at 85,000/30,000 IOPS. The write IOPS listed is what the drive is capable of in a steady state.

Seagate's 600 Series Pro is available in a 2.5in x 7mm z-height form factor. At the time of this report, a 200GB Seagate 600 Pro retails for north of $300, making it a sizable investment, but an investment that in my opinion is certainly money well spent.

For most of us, nothing is more valuable than the data we store on our system's non-volatile storage platforms. The hardware, no matter how expensive, is almost never worth what our data is, so paying a little more for the superior data performance and protection afforded by a Seagate 600 Pro makes owning one a great investment.

This SSD is engineered to provide you constant, consistent enthusiast/light enterprise performance for the long haul. Seagate warranties the 600 Pro for an industry-leading 5 years and a whopping 520 TBW (Terabytes Written).
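To put the warranty figures in perspective, here is a minimal sketch that converts the TBW rating into average daily writes. It assumes the 520 TBW figure applies to the 200GB model we are testing and that writes are spread evenly over the 5-year term; the function names are our own, not anything Seagate publishes.

```python
# Warranty figures quoted in this report. Applying the 520 TBW rating to the
# 200GB model is an assumption made for illustration.
TBW_TERABYTES = 520
WARRANTY_YEARS = 5
CAPACITY_GB = 200


def writes_per_day_gb(tbw_tb: float, years: float) -> float:
    """Average host writes per day (GB) that would use up the TBW rating
    exactly at the end of the warranty period (365-day years)."""
    return tbw_tb * 1000 / (years * 365)


def drive_writes_per_day(tbw_tb: float, years: float, capacity_gb: float) -> float:
    """The same figure expressed as full-capacity drive writes per day."""
    return writes_per_day_gb(tbw_tb, years) / capacity_gb


gb_per_day = writes_per_day_gb(TBW_TERABYTES, WARRANTY_YEARS)
dwpd = drive_writes_per_day(TBW_TERABYTES, WARRANTY_YEARS, CAPACITY_GB)
print(f"{gb_per_day:.0f} GB/day, {dwpd:.2f} drive writes per day")
```

Under those assumptions, the rating works out to roughly 285GB of writes every day for five years, or a bit over 1.4 full drive writes per day.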

PRICING: You can find the Seagate 600 Pro (200GB) for sale below. The prices listed are valid at the time of writing but can change at any time. Click the link to see the very latest pricing for the best deal.

United States: The Seagate 600 Pro (200GB) retails for $338.00 at Amazon.

Canada: The Seagate 600 Pro (200GB) retails for CDN$294.99 at Amazon Canada.

Since this is a RAID review, we are going to focus on performance rather than features. For a more in-depth look at the Seagate 600's feature set, I will refer you to Paul Alcorn's extensive review of the Seagate 600 Pro SSD.

Drive Details - Seagate 600 Series Pro 200GB SSD

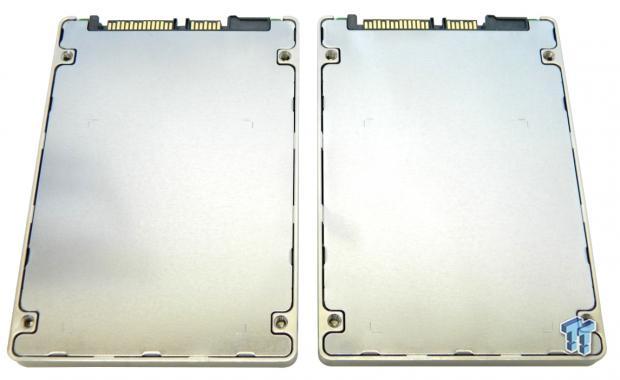

Our drives arrived bare, so we don't have anything to show you as far as packaging goes. The top and sides of the enclosure are formed from a single piece of cast, brushed aluminum that is natural in color. Centered on the top of the 600 Pro's enclosure is a manufacturer's sticker that lists the drive's part number, serial number, shipping firmware, and other relevant information.

The bottom of the drive's enclosure is formed from a piece of sheet metal. The bottom of the enclosure interlocks with the top, making it tamper proof.

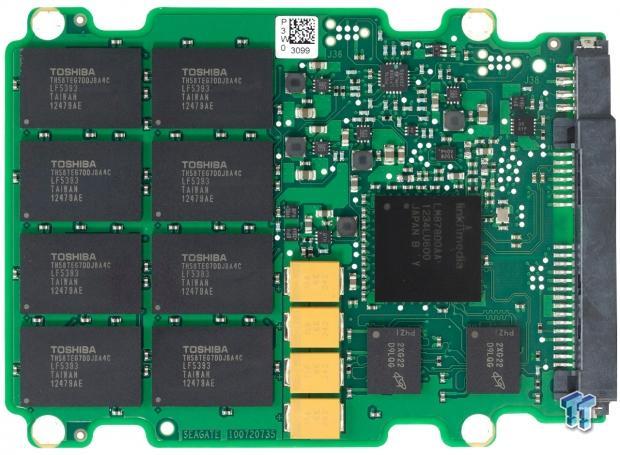

Normally, we disassemble our test subject/s to show you the internals, but not this time. We know from previous experience that opening a Seagate 600 SSD practically destroys the enclosure. Seagate was kind enough to provide us with an internal photo of a 600 Series PCB (pictured above) so we wouldn't have to rip one open to show you what's on the inside.

The drive's NAND array is located entirely on this side of the PCB along with two DRAM chips and a LAMD Amber LM87800 FSP (Flash Storage Processor). The reverse side of the PCB is devoid of components. There are four high quality tantalum capacitors (yellow rectangles) located just above the drive's flash array. Seagate employs a thermal pad to wick heat from the drive's controller into its enclosure.

Desktop Test System

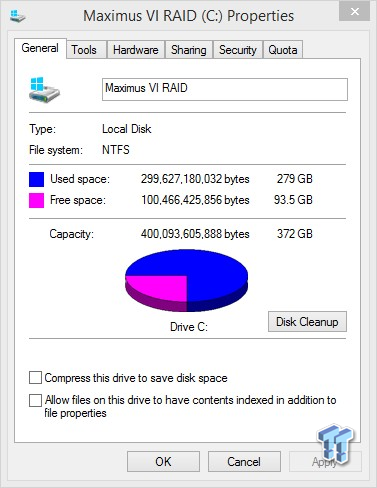

Drive Properties

The majority of our testing will be done with our test drive/array as our boot volume. Our boot volume is 75 percent full for all OS Disk "C" drive testing to mimic a typical consumer OS volume implementation. We're using 64k stripes for all our arrays. Write caching is enabled.
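For readers new to striping, the sketch below shows how a textbook RAID 0 layout with 64k stripes spreads a volume across two drives. This is the generic round-robin scheme, not a description of how Intel's RAID driver lays data out on disk; the `locate` helper is our own illustrative name.

```python
STRIPE_SIZE = 64 * 1024  # 64k stripe, as used for the arrays in this report
NUM_DRIVES = 2           # two-drive RAID 0 array


def locate(logical_offset: int,
           stripe_size: int = STRIPE_SIZE,
           num_drives: int = NUM_DRIVES) -> tuple[int, int]:
    """Map a logical byte offset on the array to (drive index, byte offset
    on that drive), assuming a plain round-robin RAID 0 layout."""
    stripe_index = logical_offset // stripe_size
    drive = stripe_index % num_drives
    # Each drive holds every num_drives-th stripe, packed back to back.
    drive_offset = (stripe_index // num_drives) * stripe_size \
        + logical_offset % stripe_size
    return drive, drive_offset


# A 128k transfer starting at offset 0 touches one stripe on each drive,
# so both drives can work on it at the same time.
for offset in (0, 64 * 1024, 128 * 1024):
    print(offset, locate(offset))
```

Anything larger than a single stripe gets split across both drives, which is where the sequential scaling of an array comes from.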

All of our testing includes charting the performance of a single drive as well as a RAID 0 array of our test subjects. We are utilizing Windows 8.1 64-bit for all of our testing.

Synthetic Benchmarks - ATTO, Anvil Storage Utilities, CrystalDiskMark & AS SSD

ATTO

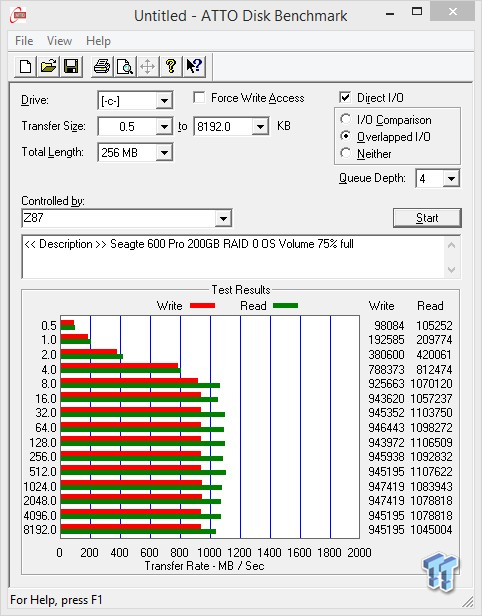

Version and / or Patch Used: 2.47

ATTO is a timeless benchmark used to provide manufacturers with data used for marketing storage products.

Transfers quickly ramp up reaching full performance by 8k transfers. Read and write transfers are well balanced, strong, and consistent throughout.

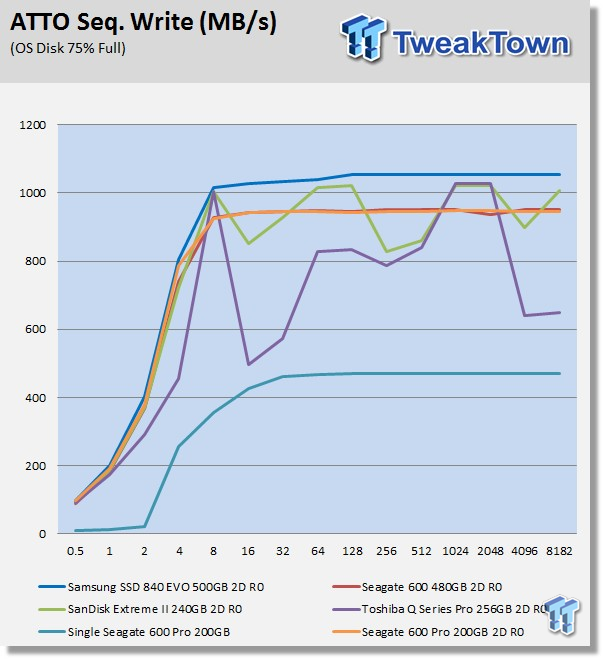

Sequential Write

Not surprisingly, our 600 Pro array's write performance curve is almost identical to the larger 600 Series array on our chart.

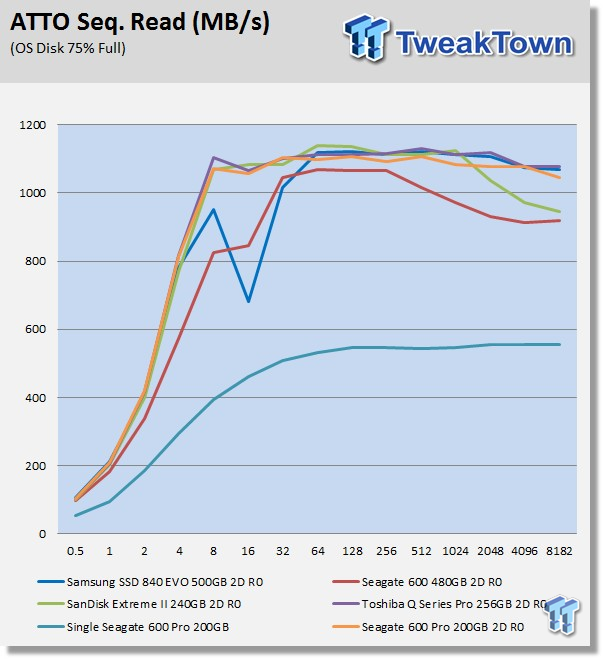

Sequential Read

It is a bit surprising to see our 600 Pro array perform so much better than our larger 600 series array. This difference in performance is more than likely attributable to the difference in firmware. One is tuned for consumer; the other, for enterprise.

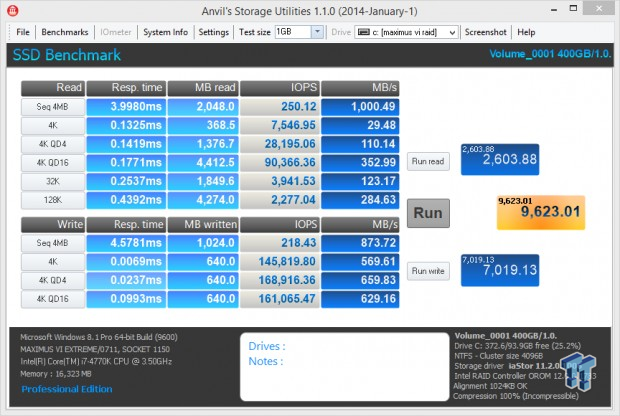

Anvil Storage Utilities

Version and / or Patch Used: RC6

So what is Anvil Storage Utilities? First of all, it's a storage benchmark for SSDs and HDDs where you can check and monitor your performance. The Standard Storage Benchmark performs a series of tests; you can run a full test or just the read or the write test, or you can run a single test, i.e. 4k QD16.

Near hyper-class scoring! Notice the 4k write speed. This is what we love about our Maximus VI Extreme board. No other board is going to net results like that.

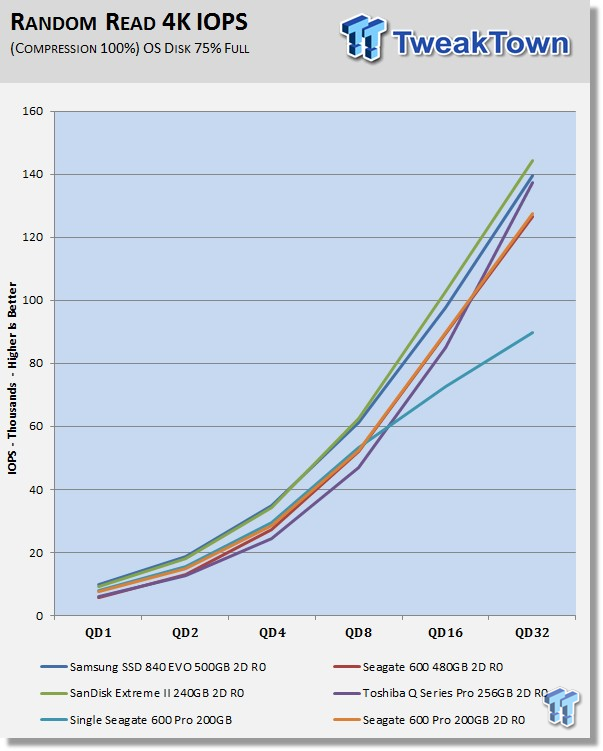

Read IOPS through Queue Depth Scale

Unlike what we saw with ATTO, this time read performance is practically identical to our larger Seagate 600 Series array.

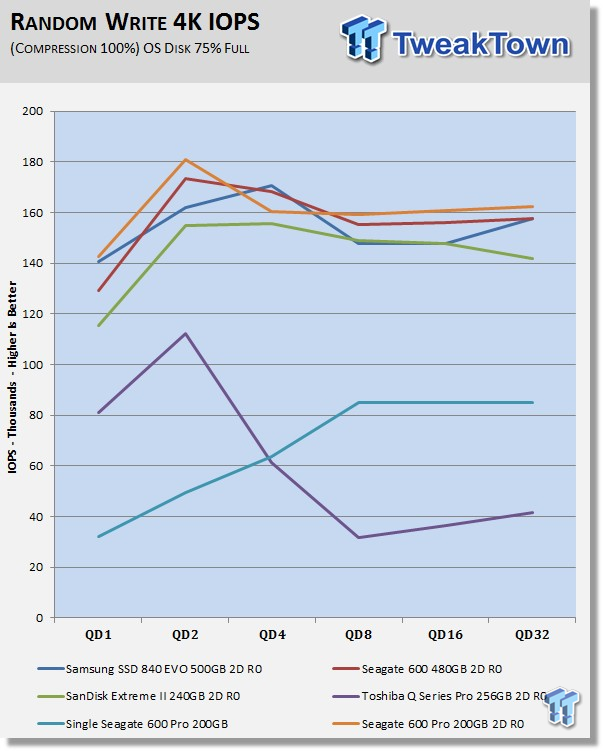

Write IOPS through Queue Scale

Our 600 Pro array puts up our best write performance to date in this test, edging out our larger 600 Series.

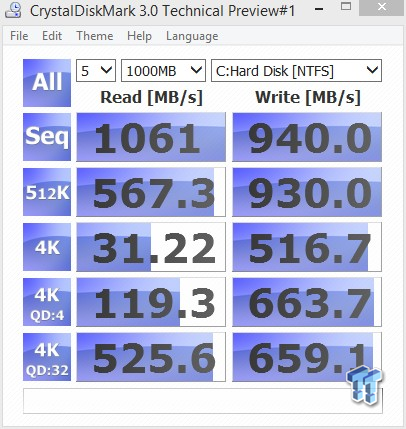

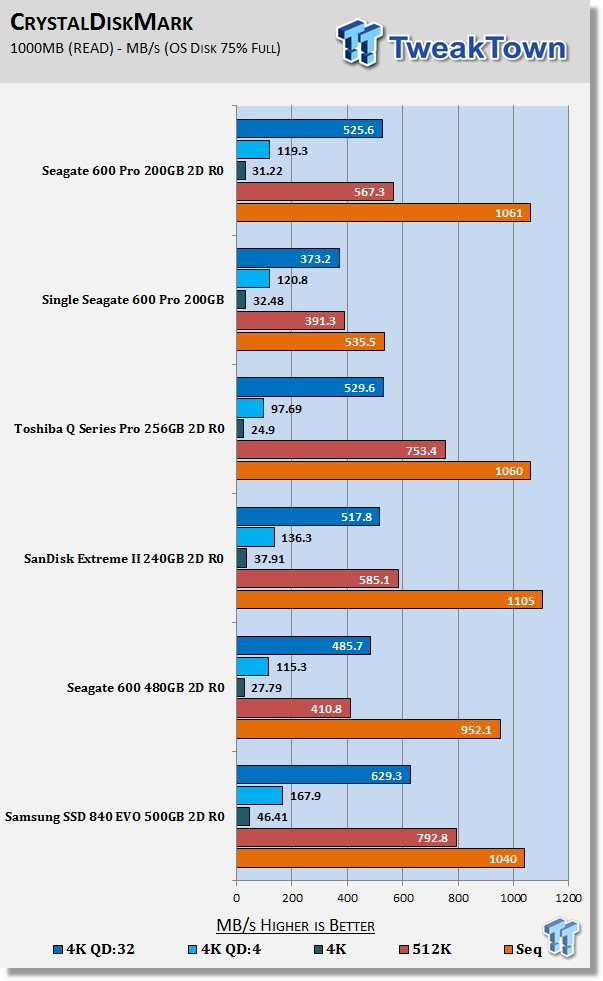

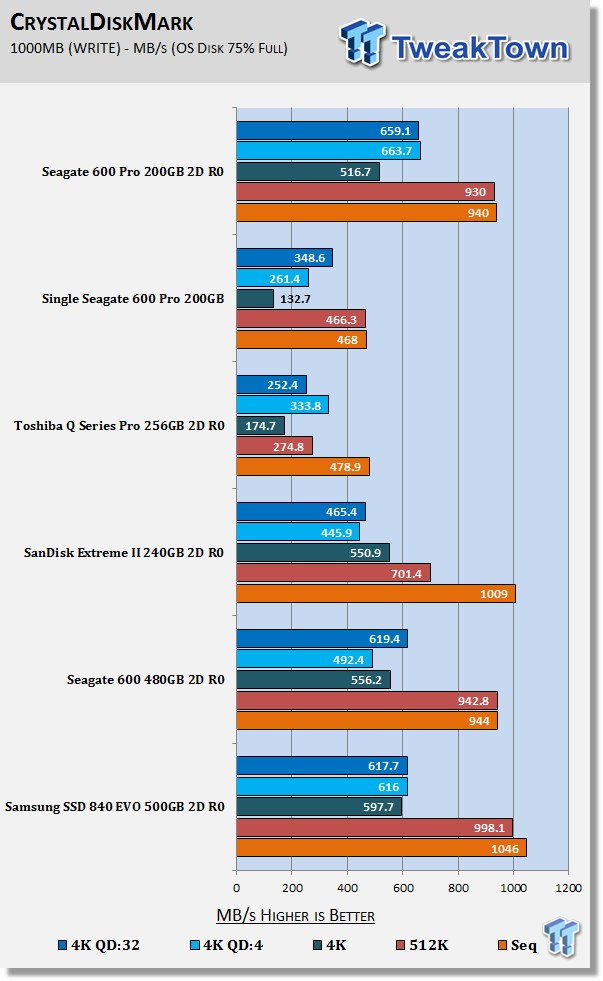

CrystalDiskMark

Version and / or Patch Used: 3.0 Technical Preview

CrystalDiskMark is disk benchmark software that allows us to benchmark 4k and 4k queue depths with accuracy.

Note: Crystal Disk Mark 3.0 Technical Preview was used for these tests since it offers the ability to measure native command queuing at 4 and 32.

Performance looks good across the board. The write performance is especially good.

Our 600 Pro array has excellent read performance. Again, we see the 600 Pro outperforming the standard 600 Series by a good margin.

It looks like Seagate has tuned the Pro series for exceptional write performance at high queue depths. Enterprise workloads, unlike consumer workloads, hit high queue depths frequently. Our 600 Pro array has by far the best QD:4 and QD:32 write performance of any array on our chart.

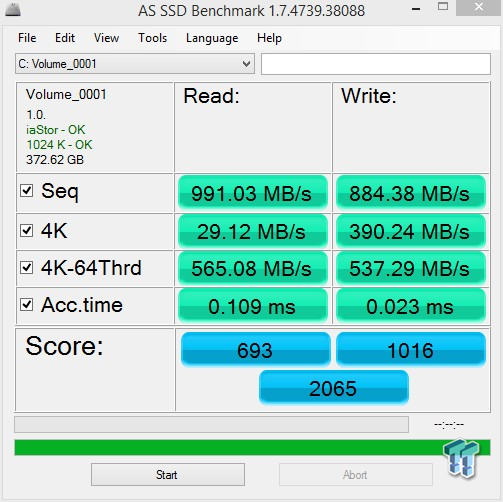

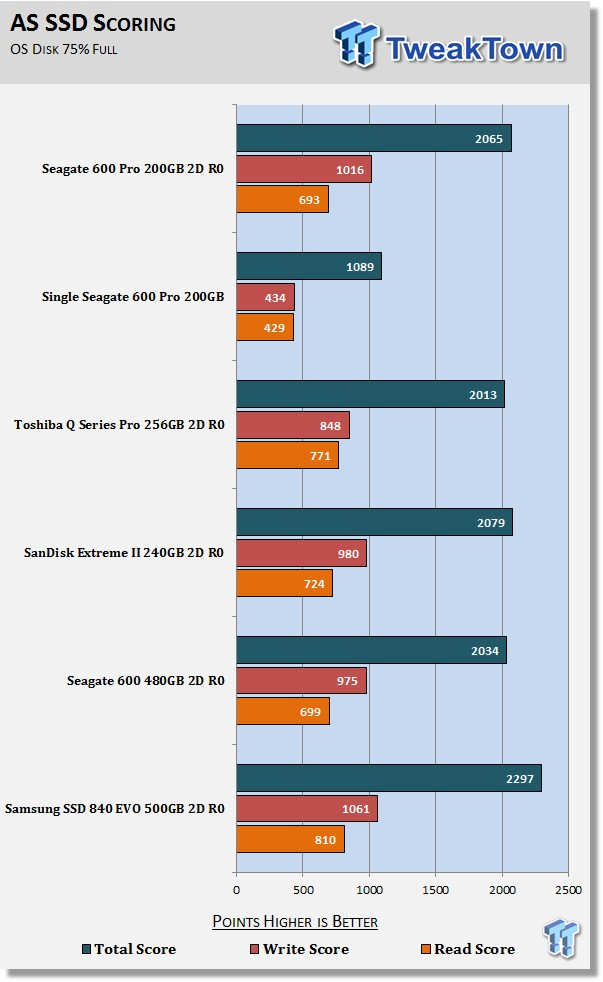

AS SSD

Version and / or Patch Used: 1.7.4739.38088

AS SSD determines the performance of Solid State Drives (SSD). The tool contains four synthetic and three practical tests. The synthetic tests are to determine the sequential and random read and write performance of the SSD. These tests are carried out without the use of the operating system caches.

Samsung's venerable 840 EVO owns this test. Our 600 Pro array puts up a nice performance, and once again, it edges out our larger 600 Series array.

OS Volume - Trace-Based Benchmarks - PCMark Vantage, PCMark 7 & PCMark 8

Light Usage Model:

We are going to categorize these tests as indicative of a light workload. Typical laptop usage models fit perfectly into this category. If you utilize your computer for light workloads like browsing the web, checking emails, and office related tasks, then this category of results is most relevant for your needs.

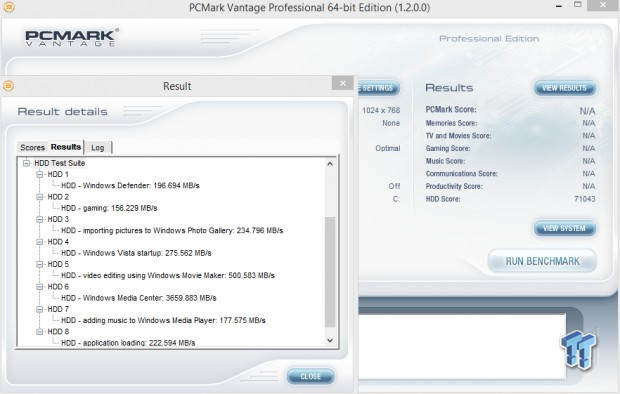

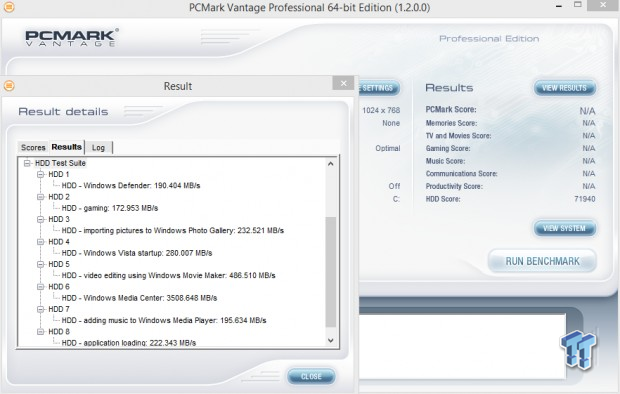

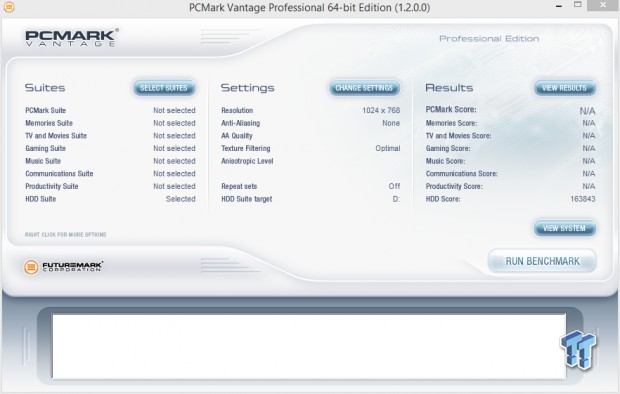

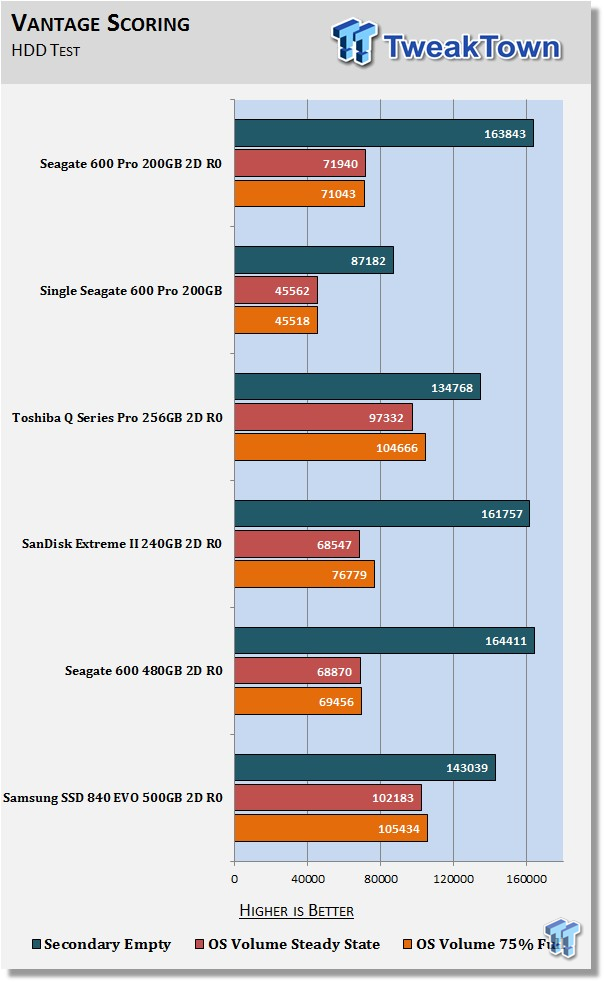

PCMark Vantage - Hard Disk Tests

Version and / or Patch Used: 1.2.0.0

The reason we like PCMark Vantage is because the recorded traces are played back without system stops. What we see is the raw performance of the drive. This allows us to see a marked difference between scoring that other trace-based benchmarks do not exhibit. An example of a marked difference in scoring on the same drive would be empty versus filled versus steady state.

We run Vantage three ways. The first run is with the OS drive/array 75 percent full to simulate a lightly used OS volume filled with data to an amount we feel is common for most users. The second run is with the OS volume written into a "Steady State" utilizing SNIA's guidelines (Rev 1.1). Steady state testing simulates a drive/array's performance similar to that of a drive/array that has been subjected to consumer workloads for extensive amounts of time. The third run is a Vantage HDD test with the test drive/array attached as an empty, lightly used secondary device.

OS Volume 75% full - Lightly Used

OS Volume 75% full - Steady State

Secondary Volume Empty - Lightly Used

As you can see, there's a big difference between an empty drive/array, one that's 75 percent full/used, and one that's in a steady state.

The important scores to pay attention to are "OS Volume Steady State" and "OS Volume 75% full." These two categories are most important because they are indicative of typical consumer user states.

When a drive/array is in a steady state, it means garbage collection is running at the same time it's reading/writing. There's a huge difference in performance between a single drive and a two-drive array. Our Seagate 600 Pro array manages to outperform our Extreme II and 600 Series arrays. Our Q series Pro and EVO arrays really outperform the rest in this round of testing.

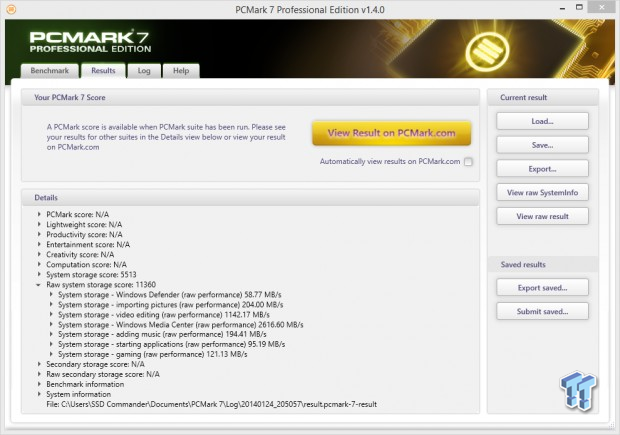

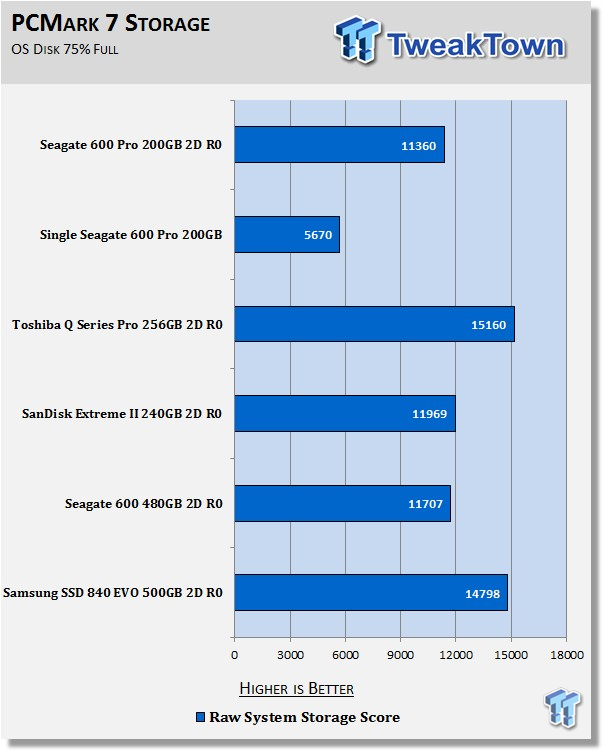

PCMark 7 - System Storage

Version and / or Patch Used: 1.4.00

We will look to the Raw system storage scoring for RAID 0 evaluations because it's done without system stops and therefore allows us to see significant scoring differences between drives/arrays.

OS Volume 75% full - Lightly Used

In a light usage scenario, our Seagate 600 Pro array is handily outperformed by the Q series Pro and the EVO arrays. Enthusiasts, however, typically do not fall into a light usage model category. The 600 Pro was designed for superior performance in heavy usage model scenarios.

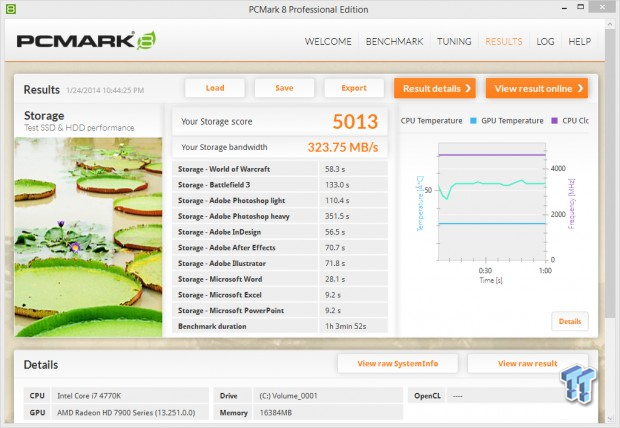

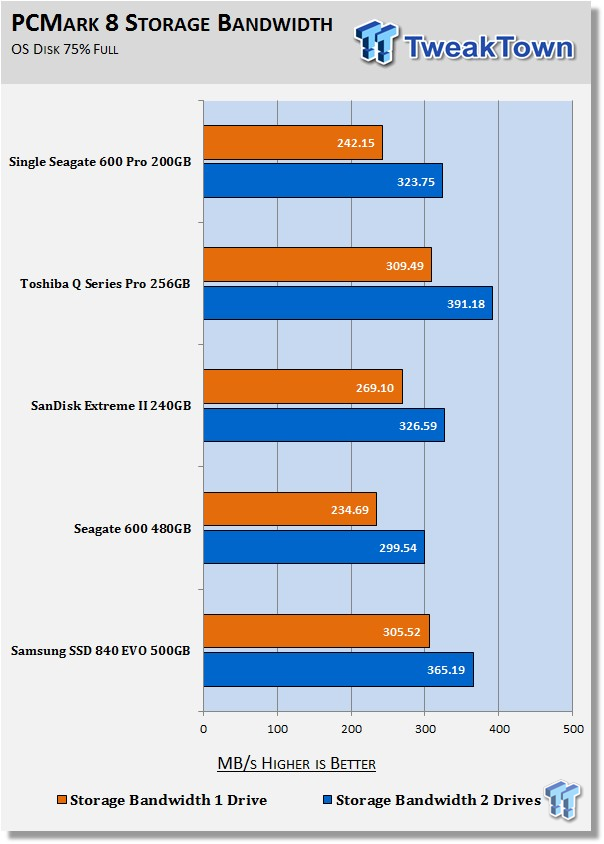

PCMark 8 - Storage Bandwidth

Version and / or Patch Used: 1.2.157

We use the PCMark 8 Storage benchmark to test the performance of SSDs, HDDs, and hybrid drives with traces recorded from Adobe Creative Suite, Microsoft Office, and a selection of popular games. You can test the system drive or any other recognized storage device, including local external drives. Unlike synthetic storage tests, the PCMark 8 Storage benchmark highlights real-world performance differences between storage devices.

OS Volume 75% full - Lightly Used

Once again, our 600 Pro array can't catch Toshiba's Q Series Pro or Samsung's EVO. Our 600 Pro array again shows performance that's superior to our larger 600 series array despite the larger array's capacity advantage.

Secondary Volume Benchmarks - Disk Response, Transfer Rates & PCMark 8 Extended

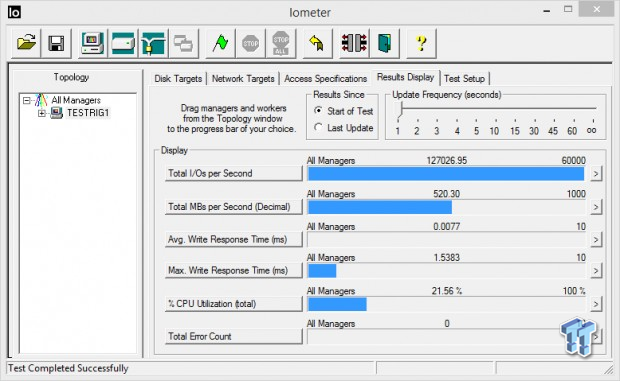

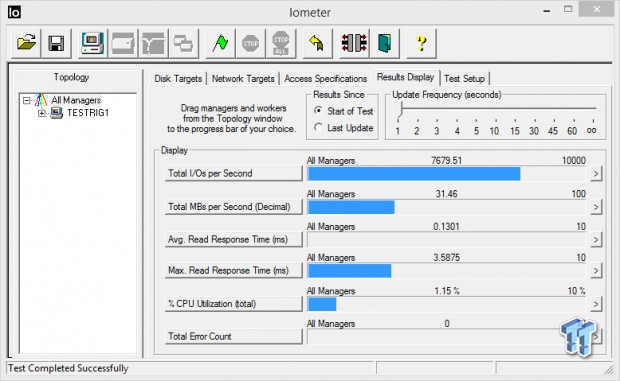

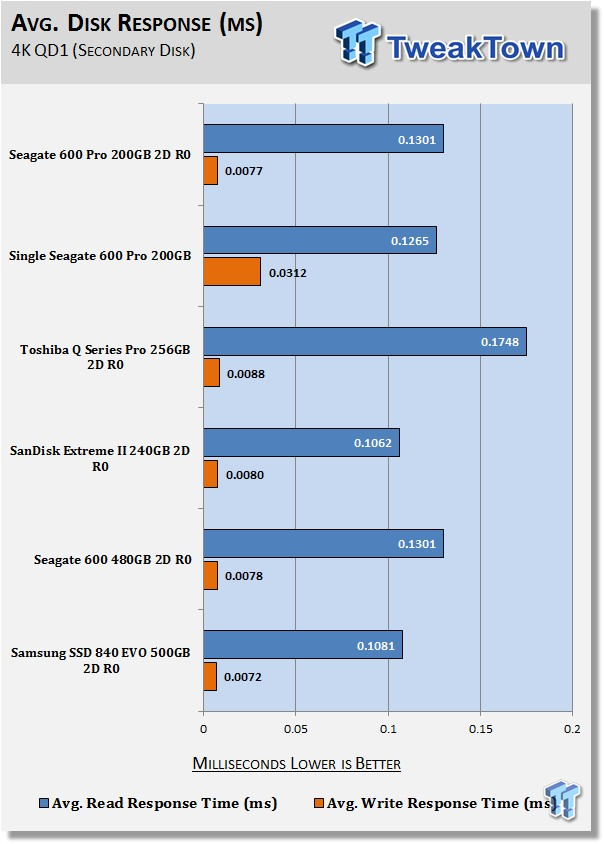

Iometer - Disk Response

Version and / or Patch Used: 1.1.0

We use Iometer to measure disk response times. Disk response times are measured at an industry accepted standard of 4k QD1 for both write and read. Each test is run twice for 30 seconds consecutively, with a 5 second ramp-up before each test. The drive/array is partitioned and attached as a secondary device for this testing.
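Because only one request is in flight at QD1, average response time and IOPS are two views of the same number. A minimal sketch of that relationship follows; the 50 microsecond latency is a made-up placeholder, not a result from our testing, and the function names are our own.

```python
BLOCK_SIZE = 4096  # 4k transfers, as used in our disk response test


def qd1_iops(latency_us: float) -> float:
    """At queue depth 1 each request waits for the previous one to finish,
    so IOPS is simply the reciprocal of the average response time."""
    return 1_000_000 / latency_us


def qd1_throughput_mb_s(latency_us: float, block_size: int = BLOCK_SIZE) -> float:
    """Throughput in decimal MB/s implied by a QD1 response time."""
    return qd1_iops(latency_us) * block_size / 1_000_000


example_latency_us = 50  # hypothetical value for illustration only
print(qd1_iops(example_latency_us), qd1_throughput_mb_s(example_latency_us))
```

This is why small differences in response time matter: at QD1, halving latency doubles the work the drive gets through.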

Write Response

Read Response

Average Disk Response

Write response times benefit most from RAID 0 because of write caching. There is a slight latency increase in read response times for an array versus a single drive. Write response times are excellent, second only to our EVO array. Our test is operating within our EVO array's emulated SLC "Turbo Write" layer, so keep in mind that an EVO array will only have this kind of write response as long as what you are transferring fits within this layer.

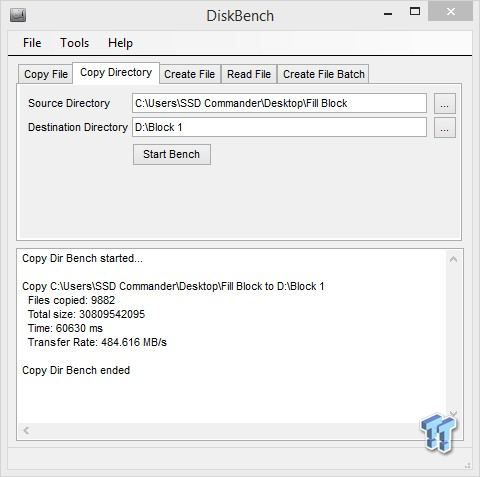

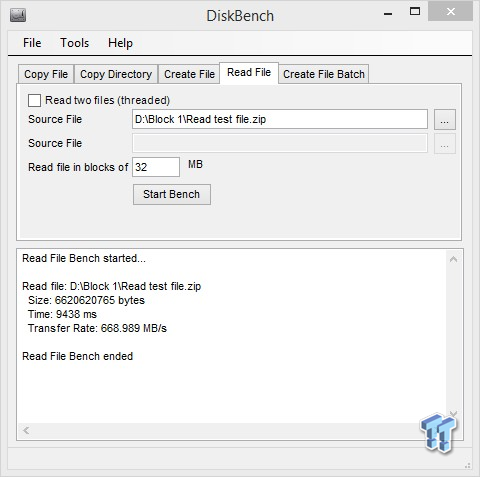

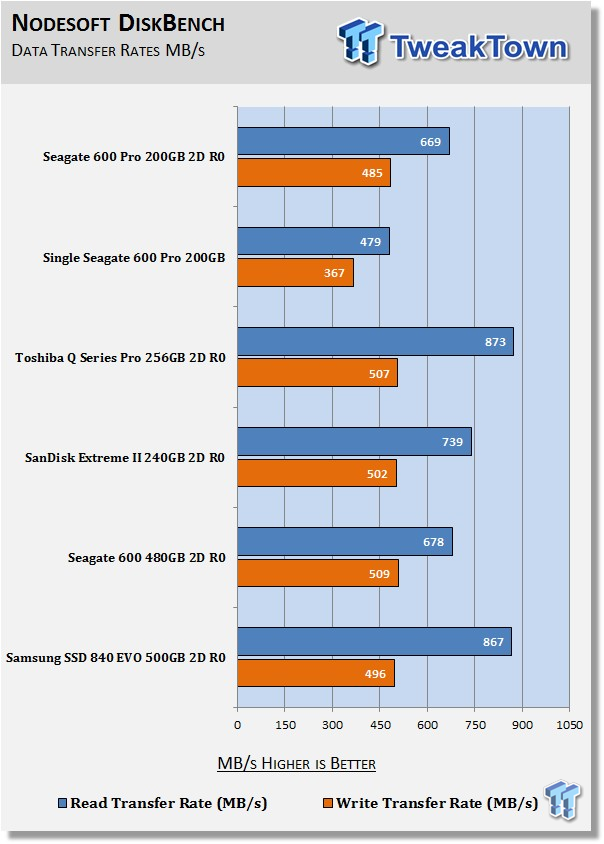

DiskBench - Directory Copy

Version and / or Patch Used: 2.6.2.0

We use DiskBench to time a 28.6GB block (9,882 files in 1,247 folders) of mostly incompressible random data as it's transferred from our OS array to our test drive/array. We then read from a 6GB zip file that's part of our 28.6GB data block to determine the test drive/array's read transfer rate. The system is restarted prior to the read test to clear any cached data, ensuring an accurate test result. This is a pure transfer test; no workload is running simultaneously.

Write Transfer Rate

485 MB/s is a good performance. We expected to see a little better performance, but then again, this is just a transfer test with no workload involved, so this test doesn't exactly fall into the 600 Pro's wheelhouse.

Read Transfer Rate

669 MB/s is one of the slower transfer rates we've achieved when running this test. It's still good, but it's nothing to write home about.

This is a perfect example of a capacity-based performance advantage. Our 600 series array has faster overall transfer rates due to its larger capacity, despite being the slower of the two arrays in a workload setting.

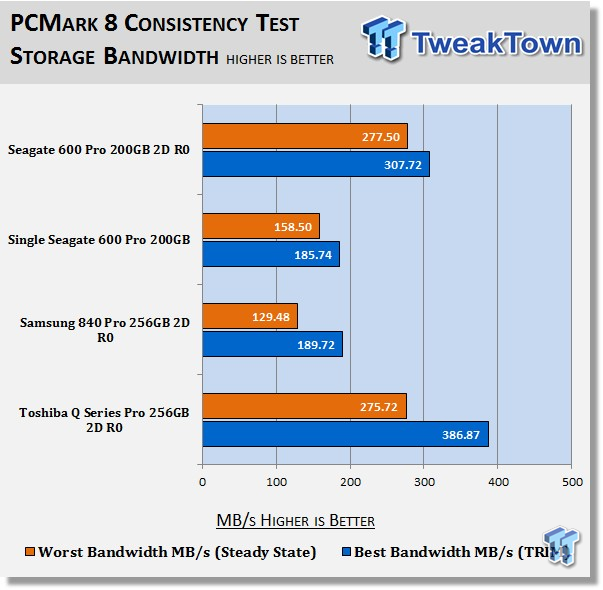

Futuremark PCMark 8 Extended - Consistency Test

Heavy Usage Model:

We consider PCMark 8's consistency test our heavy usage model test. This is the usage model most enthusiasts, gamers, and professionals fall into. If you do a lot of gaming, audio/video processing, rendering, or have workloads of this nature, this test will be most relevant to you.

PCMark 8 has built-in, command-line-executed storage testing. The PCMark 8 Consistency Test measures the performance consistency and degradation tendency of a storage system.

The Storage test workloads are repeated. Between each repetition, the storage system is bombarded with a usage that causes degraded drive performance. In the first part of the test, the cycle continues until a steady degraded level of performance has been reached (Steady State).

In the second part, the recovery of the system is tested by allowing the system to idle and measuring the performance with long intervals (TRIM).

The test reports the performance level at the start, the degraded steady-state, and the recovered state, as well as the number of iterations required to reach the degraded state and the recovered state.

We feel Futuremark's Consistency Test is the best test ever devised to show the true performance of solid state storage in a heavy usage scenario. This test takes on average 13 to 17 hours to complete and writes somewhere between 450GB and 7000GB of test data depending on the drive(s) being tested. If you want to know what an SSD's performance is going to look like after a few months or years of heavy usage, this test will show you.

Here's a breakdown of Futuremark's Consistency Test:

Precondition phase:

1. Write to the drive sequentially through up to the reported capacity with random data.

2. Write the drive through a second time (to take care of overprovisioning).

Degradation phase:

1. Run writes of random size between 8*512 and 2048*512 bytes on random offsets for 10 minutes.

2. Run performance test (one pass only).

3. Perform steps 1 and 2 a total of 8 times, increasing the duration of random writes by 5 minutes on each pass.

Steady state phase:

1. Run writes of random size between 8*512 and 2048*512 bytes on random offsets for 50 minutes.

2. Run performance test (one pass only).

3. Perform steps 1 and 2 a total of 5 times.

Recovery phase:

1. Idle for 5 minutes.

2. Run performance test (one pass only).

3. Perform steps 1 and 2 a total of 5 times.
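The breakdown above can be tallied with a short sketch. It reads each repeat count as the total number of passes in that phase, an interpretation on our part that lines up with the 18 trace iterations and 220 minutes of degradation writes quoted elsewhere in this report. The function and variable names are our own and are not part of Futuremark's tooling.

```python
def consistency_schedule():
    """Return (phase, pass number, minutes) tuples for each performance pass.
    Minutes are random-write time for the degradation and steady state
    phases, and idle time for the recovery phase."""
    schedule = []
    for i in range(8):   # degradation: 10 minutes, plus 5 more each pass
        schedule.append(("degradation", i + 1, 10 + 5 * i))
    for i in range(5):   # steady state: a fixed 50 minutes of writes
        schedule.append(("steady state", i + 1, 50))
    for i in range(5):   # recovery: 5 minutes of idle before each pass
        schedule.append(("recovery", i + 1, 5))
    return schedule


schedule = consistency_schedule()
performance_passes = len(schedule)
degradation_minutes = sum(m for phase, _, m in schedule if phase == "degradation")
steady_minutes = sum(m for phase, _, m in schedule if phase == "steady state")

# Random write sizes are given in 512-byte sectors: 8 to 2048 sectors.
MIN_WRITE_BYTES = 8 * 512
MAX_WRITE_BYTES = 2048 * 512

print(performance_passes, degradation_minutes, steady_minutes)
print(MIN_WRITE_BYTES, MAX_WRITE_BYTES)
```

In other words, the random writes range from 4KiB to 1MiB, and the drive is hammered for hours before the steady state passes are even measured.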

Storage Bandwidth

PCMark 8's Consistency test provides a ton of data output that we can use to judge a drive's performance. Final calculated storage bandwidth results are given 2 ways: Worst Bandwidth and Best Bandwidth. Worst Bandwidth (Steady State) equates to the lowest measured steady state achieved during the test. Best Bandwidth (TRIM) equates to the best bandwidth achieved during the recovery state.
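As a rough illustration of how those two figures fall out of the raw results, the sketch below takes a list of per-pass bandwidth readings and picks the lowest steady state value and the highest recovery value. The numbers are made-up placeholders, not data from our testing, and the `summarize` helper is our own; we are not reproducing Futuremark's actual scoring code.

```python
def summarize(results):
    """Given (phase, bandwidth in MB/s) pairs, return the pair
    (worst steady state bandwidth, best recovery bandwidth)."""
    steady = [bw for phase, bw in results if phase == "steady state"]
    recovery = [bw for phase, bw in results if phase == "recovery"]
    return min(steady), max(recovery)


# Hypothetical readings for illustration only.
sample_results = [
    ("degradation", 310.0),
    ("steady state", 250.0),
    ("steady state", 245.0),
    ("steady state", 248.0),
    ("recovery", 290.0),
    ("recovery", 305.0),
]

worst_bandwidth, best_bandwidth = summarize(sample_results)
print(worst_bandwidth, best_bandwidth)
```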

We are going to consider steady state bandwidth (the orange bar) as our test that carries the most weight in ranking a drive's performance. The reason we consider steady state performance (Worst Bandwidth) more important than TRIM (Best Bandwidth) is that when you are running a heavy duty workload, TRIM will not be occurring while that workload is being executed. TRIM performance (the blue bar) is what we consider the second most important consideration when ranking a drive's performance.

Trace-based consistency testing is where SSDs like our 600 Pro excel and SSDs like the 840 Pro crumble. As you can see, our 600 Pro array's enterprise pedigree allows it to outperform the rest of the arrays on our chart in a steady state. Notice how the 600 Pro array has very little performance drop off in a steady state. This is an example of how over-provisioning benefits performance. Our non-overprovisioned Q Series Pro array performs great, but as you can see, without the benefit of over-provisioning, its performance drops off much more significantly in a steady state.

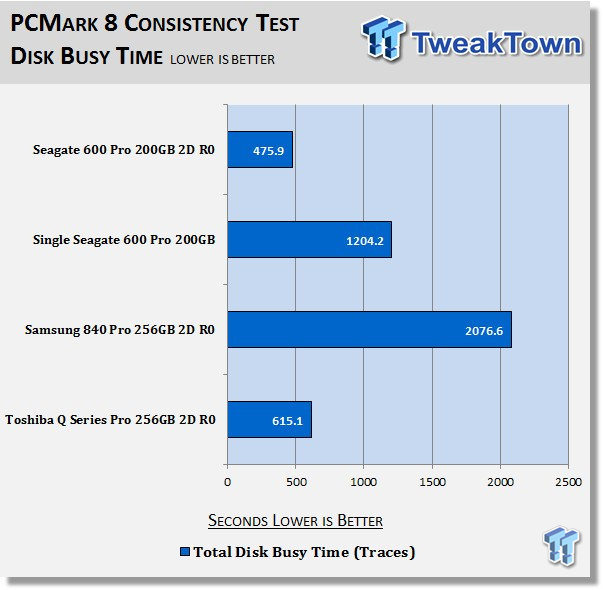

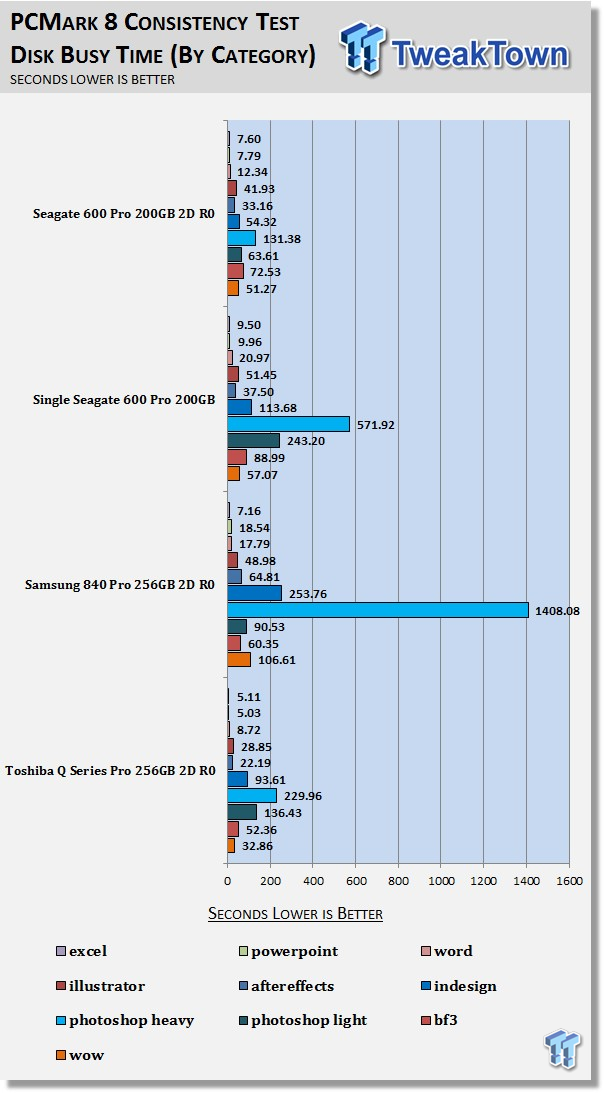

Disk Busy Time

Disk Busy Time is how long the disk is busy working. We measure the total time the disk is busy while replaying all 18 trace iterations.

Our 600 Pro array is able to spend less time working than our Q Series Pro while running the same tasks. Over-provisioning gives our 600 Series Pro the advantage once again. Compare the disk busy time of a single 600 Pro to our two-drive 600 Pro array. Notice the single drive is busy for more than 2.5 times as long as the array. This better-than-linear scaling shows you the benefit of write caching. Our Disk Busy Time by category chart looks out of whack due to the poor performance of our 840 Pro array.

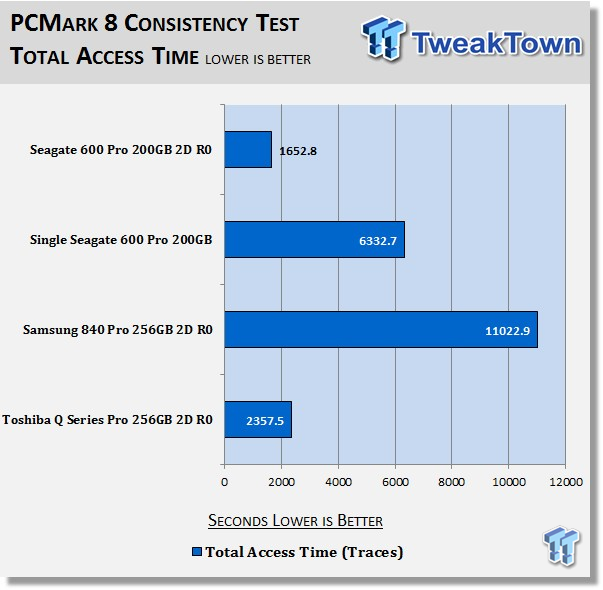

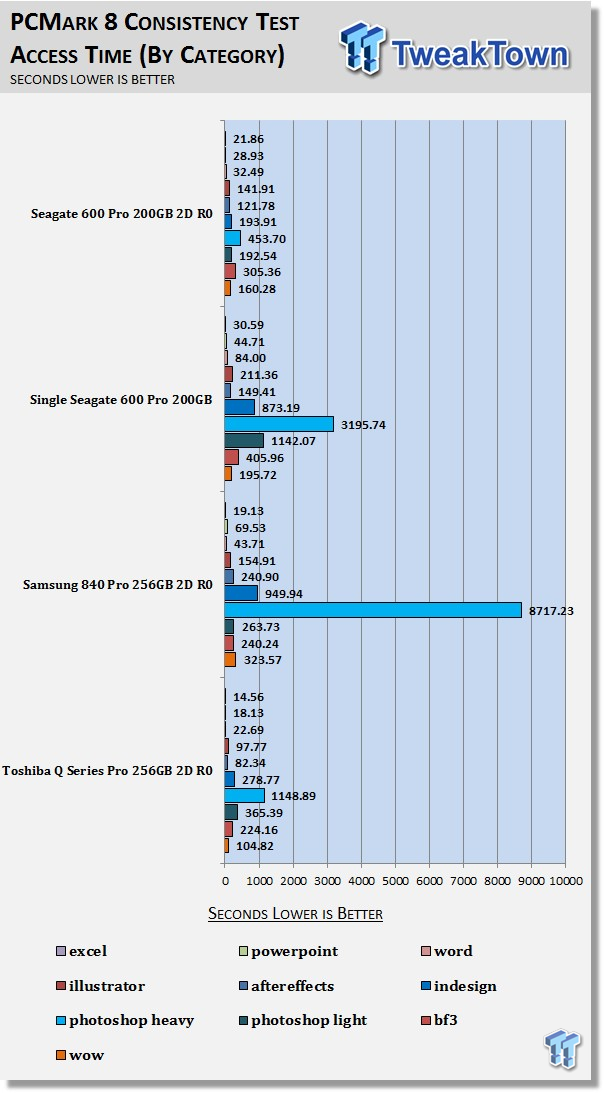

Total Access Time

Access time is the time delay or latency between a request to an electronic system and the access being completed or the requested data returned. Access time is how long it takes to get data back from the disk. We measure the total time the disk is being accessed when replaying all 18 trace iterations.

Coming as no surprise, our 600 Pro array easily wins this test, too. We're starting to see why a drive designed for an enterprise workload provides superior performance in a steady state. Clearly, the 840 Pro was not designed to excel when bombarded with heavy duty random workloads.

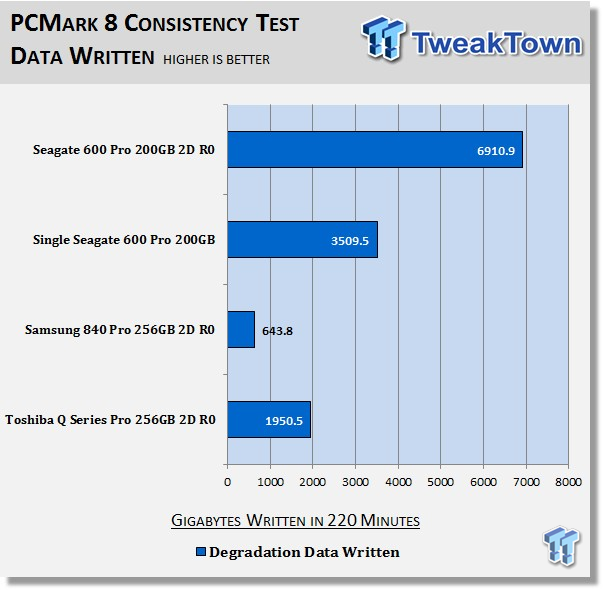

Data Written

We measure the total amount of random data that the drives are capable of writing during the degradation phases of the consistency test. The total combined time that degradation data is written to the drive is 220 minutes. This can be very telling. The better the drive can process a continuous stream of random data, the more data will be written.

In my opinion, nothing I've ever seen more clearly shows the benefit of over-provisioning than this chart does. In the same amount of time, our 600 Pro array was able to write over three times more random data than our Q Series Pro array despite their almost identical steady state storage bandwidth. Even a single 600 Pro can write almost twice the amount of data as the Q series Pro array.

The 600 Pro is over-provisioned 27 percent, so a large part of its NAND array is dedicated to on-the-fly garbage collection resulting in random write steady state performance that's vastly superior to drives with little or no over-provisioning. Our 840 Pro array is again gasping for air, unable to write even one-tenth the amount of random data in a steady state that our 600 Pro array can.

Final Thoughts

Solid state storage is the most important performance component found in a modern system today. Without it, you do not even have a performance system.

We will be updating our benchmarking regimen and report format over the next few months. Some of the benchmarks we've been using have already been, or will be, replaced with new benchmarks that drill down deeper into the meaning of real-world performance. We eliminated a couple of benchmarks in this report that we no longer find to be relevant and added a benchmark that we find to be more relevant than any we've ever seen.

As if there wasn't already enough to love about Seagate's 600 Pro SSD, our new PCMark 8 consistency testing sheds even more light on why we consider this SSD one of the best ever made. Seagate's 600 Pro 200GB has been designed with enterprise workloads in mind. We've always known that an SSD that's designed to perform well in a demanding enterprise setting is an SSD that does have superior performance to a typical consumer-oriented SSD utilized in a demanding enthusiast/professional setting. However, until now, we haven't really had a test that clearly shows things like the advantages of over-provisioning and low latency in a steady state.

Until now, drives like the 840 Pro have dominated our charts, but as we now clearly see, drives that have been optimized for testing that falls into a synthetic or light usage scenario don't always come up looking like stars when we blast them with a heavy duty workload in a steady state. We can even see that some drives, once they hit a steady state, will not recover much by way of TRIM.

Futuremark's Extended Consistency test is really the gold standard of benchmarks for determining how well an SSD is going to perform for the long haul when subjected to the heavy duty workloads that most of our readers will subject their NV storage to. This test also clearly shows you the tremendous advantage RAID 0 brings to the table when heavy duty processing is what's for dinner.

For now, suffice it to say we consider Seagate's 600 Series Pro one of the top three SATA based SSDs ever made. Up next, we have another drive that falls into our top three SATA based SSDs ever made. We will be doing a six-drive RAID report of this drive that will include our new gold standard consistency testing. To sweeten the pot even more, five of you will have a chance to win one of your very own, so stay tuned for that.

RAIDing two or more drives together provides you with storage that takes performance to the next level and is something I recommend you try. Once you go RAID, there's no going back.

