Introduction

AMD's long awaited R600 DX10 GPU finally arrives

It has been a long time coming but today AMD is finally set to release its massively anticipated GPU, codenamed R600 XT, to the world under the official retail name of ATI Radeon HD 2900 XT. It is a hugely important part for AMD right now, which recently posted massive losses. The company is counting on all these new models, led by the high-end 512MB GDDR-3 DX10 part with its 512-bit memory interface, to kick ass and help raise revenue against the current range from the green GeForce team, which is selling like super hot cakes.

The new R600 range of graphics processing units was set to see a release on March 30 (R600 XTX) but due to production issues and indecisiveness, it got delayed and delayed. It was beginning to look like AMD would let down its loyal fan base; some even began suggesting the R600 was vaporware. That would have shaken up the industry immensely and, thankfully for all, it did not happen. AMD is finally able to introduce some competition to Nvidia's GeForce lineup of cards with its new series of DX10 and Windows Vista ready products.

Eventually the folks at AMD got their act together, made some clear-cut decisions and got production under control and underway. The delays were probably due to indecisiveness between using GDDR-3 or GDDR-4 and the associated cost vs. performance concerns. It was eventually leaked out to the world that the R600 XTX (the highest end model) would be reserved for system integrators due to its size and heat related issues - you may or may not see this GPU in OEM systems from companies like Dell and HP. That model will measure a staggering 12 inches long and probably will not be suitable for every computer case or configuration. It was deemed unacceptable for the consumer retail space and hence was scrapped from all retail plans.

Today AMD is launching the enthusiast HD 2900 series with the HD 2900 XT, the performance HD 2600 series including the HD 2600 XT and HD 2600 PRO, and the value HD 2400 series including the HD 2400 XT and HD 2400 PRO. The HD 2600 and 2400 series have had issues of their own and you will need to wait a little longer (until July 1st) before being able to buy these models on shop shelves. The HD 2900 XT will be available at most of your favorite online resellers as of today. Quantity is "not too bad" but a little on the short side, with most of AMD's partners only getting between 400 and 600 units, which is not that much considering the huge number of ATI fans out there. You may want to get in quick and place your order if you are interested - some AIB companies are not sure when their next shipment will arrive.

Our focus today is solely on the HD 2900 XT 512MB GDDR-3 graphics card - it is the first GPU with a fast 512-bit memory interface but what does this mean for performance? While it is AMD's top model right now, it is actually priced aggressively at around the US$350 - US$399 mark in the United States, which puts it price wise up against Nvidia's GeForce 8800 GTS 640MB. After taking a look at the GPU and the card from PowerColor as well as some new Ruby DX10 screenshots, we will move on to the benchmarks and compare the red hot flaming Radeon monster against Nvidia's GeForce 8800 GTX along with the former ATI GPU king, the Radeon X1950 XTX.

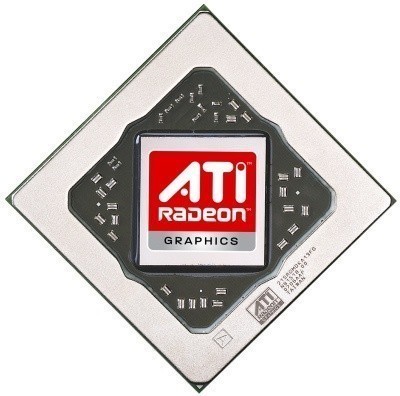

HD 2900 XT GPU

Radeon HD 2900 XT GPU

R600 is AMD's first range of top to bottom DirectX 10 graphics cards with fully certified support for Microsoft's Windows Vista operating system. While DX10 GPU support might not be very important right at this moment, soon it will be a requirement to experience the best graphics potential from current games, which are awaiting DX10 patches, and upcoming games such as Crysis, Alan Wake and Unreal Tournament 3. Sadly it is basically impossible for us to provide comparative DX10 benchmark numbers between AMD and Nvidia graphics cards at the moment - AMD gave the press a DX10 benchmark demo of Call of Juarez but it does not work properly on Nvidia graphics cards at this stage. 3DMark07 is not too far away and DX10 games and patches will come out soon (starting from June onwards) but until that time, we are unable to test this new API's performance.

The R600 range is also the first from the major GPU players to introduce a smaller 65nm manufacturing process, while the top model still manages to pack in a massive 700 million transistors. Keep in mind that the high-end HD 2900 XT uses 80nm process technology from TSMC; it is the HD 2600 and 2400 series that use the more power efficient and cooler operating 65nm process. It is said that AMD will launch the faster R650 (possibly with two models, HD 2950 XT and XTX) in Q3 of this year, also based on the improved 65nm process, which will allow AMD to ramp up clock speeds and fight harder against Nvidia. You were thinking it and you are right - it will probably only be three or four months before your brand new Radeon graphics card has been replaced with a faster model but that is just the way things seem to be now, like it or not.

It is also the first with a 512-bit memory interface (compared to the GeForce 8800 GTX with 384-bit and 86.4GB/s of bandwidth), which increases memory bandwidth (stock speeds push out 106GB/s) without having to have the memory frequency tuned up as high or relying on more expensive GDDR-4 memory. For instance, the memory clock speed of the HD 2900 XT is just 828MHz (or 1656MHz DDR) whereas the 8800 GTX operates at 1800MHz DDR with its narrower interface. Using 320 processing units at a core clock speed of 742MHz with two FLOPs per unit per clock, it is able to process data at 475 GigaFLOPS.
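The bandwidth and GigaFLOPS figures quoted here follow from simple arithmetic. Below is a minimal sketch in Python, assuming peak bandwidth is the bus width in bytes multiplied by the effective (DDR) data rate, and peak throughput is processing units times core clock times FLOPs per unit per clock. The function names are our own, chosen purely for illustration, and these are theoretical peaks rather than measured results.

```python
def memory_bandwidth_gbs(bus_width_bits: int, effective_mhz: float) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8          # bus width in bytes
    return bytes_per_transfer * effective_mhz * 1e6 / 1e9


def peak_gflops(units: int, core_mhz: float, flops_per_unit: int) -> float:
    """Theoretical peak shader throughput in GigaFLOPS."""
    return units * core_mhz * 1e6 * flops_per_unit / 1e9


# Figures taken from the text: bus width, effective DDR rate, unit count, core clock.
hd2900xt_bw = memory_bandwidth_gbs(512, 1656)    # roughly 106 GB/s
gtx8800_bw = memory_bandwidth_gbs(384, 1800)     # roughly 86.4 GB/s
hd2900xt_gflops = peak_gflops(320, 742, 2)       # roughly 475 GigaFLOPS

print(f"HD 2900 XT bandwidth: {hd2900xt_bw:.1f} GB/s")
print(f"8800 GTX bandwidth:   {gtx8800_bw:.1f} GB/s")
print(f"HD 2900 XT compute:   {hd2900xt_gflops:.0f} GFLOPS")
```

The wider bus is what lets the HD 2900 XT come out ahead on bandwidth despite its lower memory clock.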

It is enough to make the new HD 2900 XT up to 150% faster than the old Radeon X1950 XTX in some ultra-high-resolution gaming tests, according to AMD press documents. Crossfire dual-graphics support is not going anywhere and of course, it is fully supported by the HD 2900 XT.

AMD may well have added "HD" into the product name since it is not only able to process video but also audio over HDMI. Details are unclear at this stage but this card will be able to output up to 5.1 surround sound through the DVI port using a special DVI to HDMI adapter, which is also a first - previously if you used a DVI to HDMI connector on older graphics cards, it would just carry video signals. AMD has not been very forthcoming with info about how the sound part works but apparently there is an audio chip on the card that is able to achieve the feat. AMD has done this to gain Microsoft Windows Vista Premium Logo certification, as requirements for HDMI systems state that you cannot split the audio output - e.g. HDMI carrying video and audio and optical or whatever else also carrying the signal to your speakers.

That finishes the summary on the GPU itself - let us move on to the actual card now.

HD 2900 XT Graphics Card

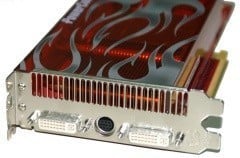

PowerColor HD 2900 XT Graphics Card

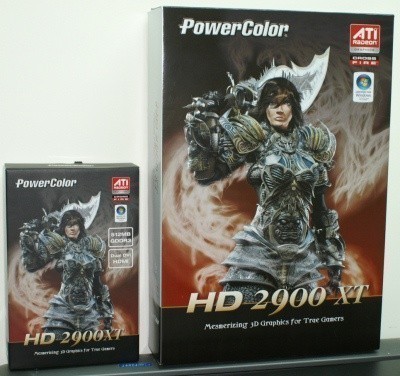

Due to limited availability as well as the fact press in different regions are getting priority over others, we tested an actual retail graphics card from PowerColor. It has the same clock speeds as all other reference cards floating around - 742MHz core clock and 512MB of GDDR-3 memory clocked at 828MHz or 1656MHz DDR.

The PowerColor PCI Express x16 card looks just the same as reference cards. Later on you will see more expensive water cooled HD 2900 XT models from the usual suspects along with overclocked models in the following weeks. We did not get time to perform any overclocking tests but reports are floating around that the core is good to at least 800 - 850MHz and the GDDR-3 memory more than likely has room to increase. You may even see some companies produce HD 2900 XT OC models which use 1GB of faster GDDR-4 memory operating at over 2000MHz DDR or they will use special cooling to get the most out of the default setup.

As far as size goes, the HD 2900 XT is a little longer than the Radeon X1950 XTX but a good deal shorter than the GeForce 8800 GTX, as you can see from the shot above with the PowerColor HD 2900 XT sitting in the middle of the group. Both of the other cards take up two slots and the HD 2900 XT is no different.

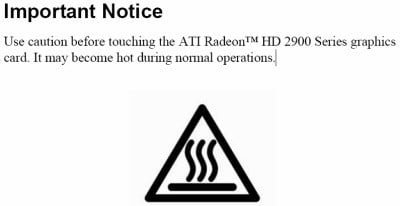

In 2D mode (non-gaming in Windows), the clock speeds are automatically throttled back to 506MHz on the core and 1026MHz DDR on the memory. This is done to reduce power consumption and also to reduce temperatures, which seems to be pretty important for the HD 2900 XT. Check out this warning from the user manual supplied by AMD:

Ouch! Using some AMD monitoring software, we noted idle temperatures of between 65 - 70-degrees Celsius from the core die and that is just sitting in Windows. At full load halfway through a 3DMark06 benchmark run, we noted a maximum temperature of 84-degrees Celsius from the core die - in other words, very hot! The air exhausting through the back vents on the card was not warm, but hot. I could not keep my hand in the way of the airflow for very long - it would make a good hairdryer if the airflow was a little stronger but people might look at you strangely if you dry your hair this way, not to mention you probably do not want to be waving your dripping wet hair around the back of your PC! Suffice to say, we suggest against trying. To be honest, I was not expecting the card to be so hot - at one time I almost burnt my fingers!

The card is said to be good to 100-degrees Celsius on the core itself but these temperatures are crazy and we tend to think that OC might be limited considering it is already running this hot - at stock speeds. Once AMD moves to 65nm process technology, this should cure some heat related issues while also allowing for higher clock speeds. Nevertheless, the flame design on the cooler is rather appropriate - the flaming hot monster indeed.

HD 2900 XT Graphics Card Continued

PowerColor HD 2900 XT Graphics Card Continued

The good news is that noise from the cooler (which is heavier, with more heat pipe action) has been improved over the previous Radeon X1950 XTX, which at full fan speed produced a kind of high pitched whine. That annoying sound is gone and the HD 2900 XT is noticeably quieter than the previous high-end model. When in 2D mode, the HD 2900 XT was hardly audible - the reference Intel CPU cooler from Cooler Master was outputting more sound than the graphics card when sitting in Windows. At first, we even had to check if the fan was actually working while in 2D mode. You can hear when 3D mode kicks in, but that was with the case open and in a completely silent room, not a realistic environment when you have the sound turned up playing games or music.

As far as power goes, AMD recommends a minimum 750-watt power supply unit for a single card but we only used a (high-quality) 500-watt PSU for our testing and did not note any stability problems - and we were using an Intel Core 2 Quad processor operating at 3GHz, which is no pussycat. The recommended power supply is one with two 2x3-pin PCI Express power connectors. However, if you want to use OverDrive, AMD's built-in overclocking software in Catalyst Control Center, you will need one 2x3-pin and one 2x4-pin connector. This can be overcome by using a 2x4-pin to 2x3-pin connector, as shown below. Other Radeon overclocking tools such as ATI Tool may not even require the 2x4-pin connector but that remains to be seen.

As far as other connectors go, you of course have the mandatory Crossfire connectors for dual-graphics support. On the back in the I/O area are two dual-link DVI connectors along with the S-video like port that allows for HDTV-out through component cable and so forth. As mentioned earlier, most companies should end up including a special DVI to HDMI connector that is able to output not only video but also audio.

Since we tested the PowerColor HD 2900 XT before it was ready to be shipped out to customers, the retail package was not yet available, but we did get a chance to see the stylish box design. We are not exactly sure what will come in the package but you can most likely expect the usual array of cables and we have been told you will get the 2x4-pin to 2x3-pin connector and special HDMI adapter.

As we have already reported in the news, one of the major selling points of the R600 cards will be the fact that AMD has teamed up with Valve Software again to include a voucher for some upcoming games. When the games are ready (and it should not be too far away), you will be able to login to your Steam account and download the full versions of Half-Life 2: Episode 2, Team Fortress 2 (l33t!) and Portal. If other companies choose to also include another full version game, the package is starting to look quite impressive!

Before we move on to the benchmarks, we want to show you a technology showcase of what the R600 GPU is capable of, courtesy of our friend Ruby, now all new and improved.

New and Improved Ruby - DX10 Screenshots

When, in the past, ATI and Nvidia released brand new graphics cards, they were usually accompanied by a tech demo showcasing what the product is capable of doing. We are pleased to see AMD is continuing that tradition.

The famous Ruby babe is back with the launch of the R600 GPU and now she is rendered in DX10 and looking better than ever.

Impressed?

Benchmarks - Test System Setup

Test System Setup

Processor: Intel Core 2 Quad QX6700 at 3GHz (10 x 300MHz FSB)

Motherboard: ASUS P5WDG2 WS PRO (Intel 975X)

Memory: 4 x 512MB Samsung DDR2-800

Hard Disk: Western Digital 80GB 7200 RPM SATA

Power Supply Unit: Seventeam 500-watt

Monitor: Dell 3007WFP 30" LCD

Operating System: Microsoft Windows XP Professional SP2

Drivers: Intel INF 8.3.0.1011, ATI Catalyst 7.4 (for X1950 XTX), ATI 8.361 (for HD 2900 XT), ForceWare 158.22 (for 8800 GTX) and DX9c

UPDATE - We are aware there are newer drivers in the wild (8.37 and 8.38) but these are BETA drivers and NOT the final shipping driver. They are said to improve performance but are not stable. All retail cards will be shipping with 8.361 as we tested.

We performed all testing of the graphics on the system above with the impressive Dell 30-inch LCD monitor. It is a high powered system with the quad-core QX6700 processor overclocked to 3GHz (10 x 300MHz FSB) to eliminate any chance of the CPU being the bottleneck of the system. AMD recommends at least a 750-watt power supply but we only used a 500-watt model and had no issues, but your mileage may vary.

All cards are running at their stock speeds; we did not change anything except disabling vertical sync. We are comparing the Radeon HD 2900 XT 512MB against the older Radeon X1950 XTX 512MB and Nvidia's GeForce 8800 GTX 768MB using the latest drivers for each card. While the HD 2900 XT 512MB is priced more closely to the GeForce 8800 GTS 640MB, we wanted to see what AMD's new part can do against the more expensive 8800 GTX - in fact, at retail the 8800 GTX is at least US$150 more than the HD 2900 XT 512MB. If AMD's new flaming beast can keep up even a little with the GTX, it will be impressive!

We tested with the latest available testing driver from AMD which is 8.361 - and this is the shipping driver for Radeon HD 2900 XT. There is a new driver floating around but that is not entirely stable yet. We would not be surprised if AMD releases its Catalyst 7.5 monthly driver soon and that should probably see some performance improvements and fixes of known bugs. The driver that we are using now is not perfect and AMD has noted some known issues. For example, in some of our tests, the older Radeon X1950 XTX is actually faster than the HD 2900 XT - this is a driver issue and we hope AMD fixes the problems very soon.

As mentioned, we did not get time to run any overclocking tests but we did manage to run at three resolutions - 1280 x 1024 (standard LCD), 1920 x 1200 (24" LCD) and 2560 x 1600 (30" LCD). We also ran a couple of tests with AA and AF enabled at the maximum possible at 1920 x 1200 to put as much strain on the cards as possible. We have also started to use Supreme Commander to expand our lineup of benchmark tests and boy is it intensive. All games were tested with graphics quality settings of "high" or "highest" and patched to their latest versions for best support.
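To give a rough sense of how much harder each step up in resolution is, here is a minimal Python sketch that compares the number of pixels rendered per frame at the three tested resolutions. It assumes pixel count is a reasonable first-order proxy for GPU load, which ignores CPU limits, driver behavior and other per-game factors; the helper name is our own.

```python
# The three resolutions used in our testing (width, height).
RESOLUTIONS = [(1280, 1024), (1920, 1200), (2560, 1600)]


def pixel_count(width: int, height: int) -> int:
    """Number of pixels rendered per frame at a given resolution."""
    return width * height


baseline = pixel_count(*RESOLUTIONS[0])  # 1280 x 1024 as the reference point

for width, height in RESOLUTIONS:
    pixels = pixel_count(width, height)
    print(f"{width} x {height}: {pixels:,} pixels "
          f"({pixels / baseline:.2f}x the 1280 x 1024 load)")
```

By this measure the 30-inch panel at 2560 x 1600 asks the cards to draw a little over three times as many pixels per frame as 1280 x 1024.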

Does AMD's ATI Radeon HD 2900 XT have what it takes to put a smile back on its shareholders' faces? Let us not waste any more time and get cracking!

Benchmarks - 3DMark05

3DMark05

Version and / or Patch Used: Build 130

Developer Homepage: http://www.futuremark.com

Product Homepage: http://www.futuremark.com/products/3dmark05/

3DMark05 is now the second latest version in the popular 3DMark "Gamers Benchmark" series. It includes a complete set of DX9 benchmarks which test Shader Model 2.0 and above.

For more information on the 3DMark05 benchmark, we recommend you read our preview here.

Straight off the bat we can see that the GeForce 8800 GTX manages to take the lead in every test but the new HD 2900 XT managed to put up quite a good fight considering the price difference.

As you would expect, the R600 has a big lead over its older brother, the Radeon X1950 XTX. Is it enough to warrant an upgrade though? Let us check out some more tests and find out.

Benchmarks - 3DMark06

3DMark06

Version and / or Patch Used: Build 110

Developer Homepage: http://www.futuremark.com

Product Homepage: http://www.futuremark.com/products/3dmark06/

3DMark06 is the very latest version of the "Gamers Benchmark" from FutureMark. The newest version of 3DMark expands on the tests in 3DMark05 by adding graphical effects using Shader Model 3.0 and HDR (High Dynamic Range lighting) which will push even the best DX9 graphics cards to the extremes.

3DMark06 also focuses on not just the GPU but the CPU using the Ageia PhysX software physics library to effectively test single and dual-core processors.

Under the more intensive 3DMark06 benchmark we see the same type of picture painted.

Benchmarks - Supreme Commander

Supreme Commander

Version and / or Patch Used: 3223

Timedemo or Level Used: Built-in Test

Developer Homepage: http://www.gaspowered.com

Product Homepage: http://www.supremecommander.com

In the 37th Century, you are the Supreme Commander of three races, with a single goal in mind - to end the 1000 year Infinite War and become the reigning power supreme. For a thousand years, three opposing forces have waged war for what they believe is true. There can be no room for compromise: their way is the only way. Dubbed The Infinite War, this devastating conflict has taken its toll on a once-peaceful galaxy and has only served to deepen the hatred between the factions.

Interestingly enough in our first real-world game test, and a very intensive one at that, we see that the HD 2900 XT is able to edge ahead of the 8800 GTX when we record minimum FPS, which is important to take into consideration. We are not sure why this might be but Supreme Commander is still a relatively new game and upcoming Nvidia ForceWare drivers might fix the issue or it could just be the way things are.

Once we take average frame rates into consideration, we see the green team take the lead back. At these high-quality settings with 1280 x 1024 resolution the 8800 GTX and HD 2900 XT are offering a playable gaming experience, but only just. If you want to play this game with the settings turned up, it is clear that you will need a faster Crossfire or SLI dual-graphics solution.

Benchmarks - Prey

Prey

Version and / or Patch Used: 1.3

Timedemo or Level Used: HardwareOC Custom Timedemo

Developer Homepage: http://www.humanhead.com

Product Homepage: http://www.prey.com

Prey is one of the newest games to be added to our benchmark lineup. It is based on the Doom 3 engine, offers stunning graphics surpassing what we have seen in Quake 4, and puts quite a lot of strain on our test systems.

In Prey we are using the "Highest Quality" option but with the 512MB graphics boost option disabled as we have had issues with it in the past.

Again Nvidia is in front with its more expensive GeForce 8800 GTX offering the smoothest gaming experience. Keep in mind though that the HD 2900 XT never drops below 60 FPS which is what we consider the minimum average frame rate you should be looking at for a good game play experience. The Radeon X1950 XTX is really only good for solid game play at 1280 x 1024 and then it starts to go downhill after that.

Benchmarks - Company of Heroes

Company of Heroes

Version and / or Patch Used: 1.5

Timedemo or Level Used: Built-in Test

Developer Homepage: http://www.relic.com

Product Homepage: http://www.companyofheroesgame.com

Company of Heroes, or COH as we are calling it, is one of the latest World War II games to be released and also one of the newest in our lineup of benchmarks. It is a super realistic real-time strategy (RTS) with plenty of cinematic detail and great effects. Because of its detail, it will help stress out even the most impressive computer systems with the best graphics cards - especially when you turn up all the detail. We use the built-in test to measure the frame rates.

COH is a little less intensive than Supreme Commander but at the two lower resolutions we are seeing the cheaper HD 2900 XT take the lead. It is only at 2560 x 1600 that the green team is able to snatch the lead back from the red guys.

Again though, when we look at average frame rates the GeForce 8800 GTX is quite a way ahead at 1280 x 1024 and 1920 x 1200. It is interesting to note that at the highest resolution, the HD 2900 XT is only a little behind the GTX.

It seems like when you set the resolution this high, certain games are able to take better advantage of the HD 2900 XT's massive memory bandwidth of 106GB/s compared to 86.4GB/s of the GeForce 8800 GTX.

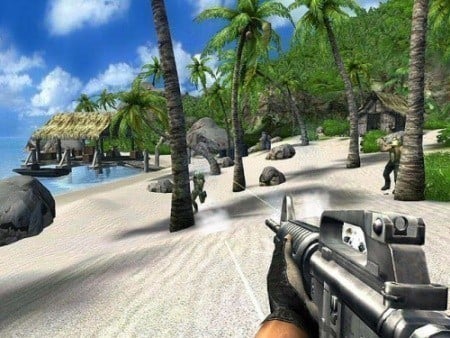

Benchmarks - Far Cry

Far Cry

Version and / or Patch Used: 1.4 with HDR

Timedemo or Level Used: Ubisoft Volcano

Developer Homepage: http://www.crytek.com

Product Homepage: http://www.farcrygame.com

While Far Cry is now one of our older benchmarking games, it is still able to put pressure on most computer systems as it is able to utilize all parts of the system. Utilizing PS2.0 technology, with the latest versions supporting Shader Model 3.0 with DX9c and offering an exceptional visual experience, there is no denying that even some of the faster graphics cards get a bit of a workout.

Some say Far Cry is CPU limited but we laugh in the face of danger - well, not literally but anyway... We maxed out Far Cry as much as we could. Using the latest patch and running at 2560 x 1600 with Ultra detail level, Shader Model 3.0, 8 x AF and 4 x AA with High Dynamic Range lighting enabled is bound to stress out a GPU, even if the game is quite old.

We can see that the GeForce 8800 GTX has the lead at each resolution but again, pay careful attention to these results. AMD's HD 2900 XT offers a playable gaming experience up to 1920 x 1200 whereas the older Radeon X1950 XTX is only good for up to 1280 x 1024 at these extreme quality settings. When we max things out to 2560 x 1600, there is little difference between the GTX and XT, which tends to tell us that the extra memory bandwidth of the R600 is being thoroughly utilized.

Benchmarks - Serious Sam 2

Serious Sam 2

Version and / or Patch Used: 2.070

Timedemo or Level Used: Greendale

Developer Homepage: http://www.croteam.com

Product Homepage: http://www.serioussam2.com

Picking up where Serious Sam: Second Encounter left off, Sam has rocketed off towards the conquered planet of Sirius, the new home of the notorious Mental. While en route, the Great Wizards Council of the nearly eradicated Sirian civilization telepathically contacts Sam to aid him in his quest to destroy Mental and help restore Sirius. He is then sent on a quest to find the fragments of a mystical medallion scattered throughout the galaxy that will bestow Sam with the power to defeat Mental.

While Serious Sam 2 is not one of the most intensive games in our benchmark suite, we can stress the system by enabling HDR.

Like Far Cry, we decided to max out Serious Sam 2 since it is an older game. Never doubt these older games though, when you turn everything up to the maximum, they are still able to give even the most powerful GPUs a run for their money, as you can see.

This is one of the driver issues we saw with the HD 2900 XT - after a driver fix, this should all be sorted out. Being an older game and not as popular, sometimes they are not given as much priority as other games.

Benchmarks - High Quality AA and AF

High Quality AA and AF

Our high quality tests let us separate the men from the boys and the ladies from the girls. If the cards were not struggling before they will start to now.

In this test we enabled AA and AF to see how things scale. Nvidia's GeForce 8800 GTX has a good lead over the new HD 2900 XT but the old Radeon X1950 XTX is a long way behind AMD's newest GPU.

In our final test, we see the real power of the more expensive GeForce 8800 GTX. Keep in mind the quality settings are set to maximum and with 4 x AA and 16 x AF at 1920 x 1200 the GPUs are pushed almost to their limits.

GeForce 8800 GTX is able to offer a playable gaming experience while the HD 2900 XT sees some driver issues again with these particular settings. Even if the driver was working properly, our numbers would suggest that the XT would be sitting around 50 - 55 average FPS which is not quite high enough for a solid gaming experience.

Final Thoughts

AMD's new series of R600 DX10 graphics cards is finally here and we have had plenty of time to examine it, but we are left with a tough decision as to whether we should recommend the HD 2900 XT or not. It impressed us considering the price tag and the extras such as sound output through DVI to HDMI adapters and the Valve software bundle but it leaves us wondering if AMD could have done a little better...

AMD lost its chance to take the GPU performance crown here when the planned R600 XTX with 1GB GDDR-4 fell through the cracks and did not see the light of day, at least in the channel. It is quite clear that the 512-bit memory interface of the HD 2900 XT was a fantastic idea but it also more than likely contributed to the manufacturing difficulties (delays) which let Nvidia get in front with earlier released products. Nevertheless, AMD is able to mount cheaper GDDR-3 memory onto the PCB which does not need to operate as fast as the competitor's products, since it is able to push through more data at slower clock speeds, equaling lower costs. You can only imagine if the R600 XTX had seen the light of day with a higher core clock and 1GB of GDDR-4 memory at higher clocks - it would probably have come close to giving the new GeForce 8800 Ultra a good run for its money. It still might but only time will tell.

AMD's Radeon HD 2900 XT is going to cost between US$350 and US$399, closer to the latter in most places. That makes it at least US$150 cheaper than most GeForce 8800 GTX 768MB cards. Sure, Nvidia's 8800 GTX green champ won most of our tests in the 1280 x 1024 to 1920 x 1200 range but not by as big a margin as we expected. What is interesting are our results at the 2560 x 1600 resolution - both products have their share of driver issues (which need to be sorted out quickly!) but from our testing at this point in time, the HD 2900 XT makes a really good showing at the 2560 x 1600 resolution. In games like Prey, Company of Heroes and Far Cry, the more expensive 8800 GTX really only has a small lead over the XT. In real-world terms, both offer about the same level of game play experience in terms of smoothness and we put that down to the massive 106GB/s of memory bandwidth from the R600, which is able to be properly utilized at this ultra-high resolution. With some overclocking of the core and memory, the HD 2900 XT could probably match or better the more expensive 8800 GTX and that is with 256MB less memory. This in turn tells us that the engineers of the R600, while slow and indecisive, were smart and produced a GPU which is very efficient - but at the right settings.

We expect factory overclocked HD 2900 XT cards to start selling in less than one month from now. AIB partners currently have the option of ordering 1GB GDDR-4 models with faster clock speeds but it is unclear whether this product will be called HD 2900 XT 1GB GDDR-4 or HD 2900 XTX - you may end up seeing these types of cards appear in early June (around Computex Taipei show time). If we saw a product like this with a slightly faster core clock and obviously much faster memory clock (2000 - 2100MHz DDR vs. 1656MHz DDR), we think it would compete very nicely against the GeForce 8800 GTX as far as price vs. performance goes. Sadly we did not have a GeForce 8800 GTS 640MB handy for testing but matching up with our previous testing on similar test beds, the HD 2900 XT will beat it quite considerably, by around the 20% mark in 3DMark06, for example. This is rather interesting since the HD 2900 XT is in the same price range as the GeForce 8800 GTS 640MB - we will test against this card shortly!

Summing it up, we are happy to see a new high-end Radeon graphics card from AMD - it literally is the red hot flaming monster but it manages to offer a good amount of performance and impressive feature set with full DX10 and Shader Model 4.0, Crossfire and Windows Vista support and a host of others which we did not even have enough time to cover in full today, such as improved anti-aliasing and UVD. It was a long time coming but it is able to offer very good bang for buck against the equivalent from Nvidia - GeForce 8800 GTS.

It is also something for the green team to think about if AMD comes out with a faster version of R600 XT, either with faster GDDR-4 memory (and more of it) or with faster clock speeds using 65nm process technology, later in the year. There are interesting times ahead in the GPU business. For right now the Radeon HD 2900 XT offers very solid performance for the price, but we will be more interested in what is coming in the following weeks as overclocked versions emerge and shake things up even more.

PRICING: You can find products similar to this one for sale below.

United

States: Find other tech and computer products like this

over at Amazon.com

United

States: Find other tech and computer products like this

over at Amazon.com

United

Kingdom: Find other tech and computer products like this

over at Amazon.co.uk

United

Kingdom: Find other tech and computer products like this

over at Amazon.co.uk

Australia:

Find other tech and computer products like this over at Amazon.com.au

Australia:

Find other tech and computer products like this over at Amazon.com.au

Canada:

Find other tech and computer products like this over at Amazon.ca

Canada:

Find other tech and computer products like this over at Amazon.ca

Deutschland:

Finde andere Technik- und Computerprodukte wie dieses auf Amazon.de

Deutschland:

Finde andere Technik- und Computerprodukte wie dieses auf Amazon.de

What's in Cameron's PC?

- CPU: Intel Core i7 13700KF

- MOTHERBOARD: ASRock Z790 Taichi

- RAM: TEAM DDR5-7200 32GB

- GPU: Inno3D iChill GeForce RTX 4090

- SSD: Samsung 990 Pro 2TB

- OS: Windows 11 Pro

- COOLER: Corsair iCUE H150i ELITE CAPELLIX XT

- CASE: Corsair iCUE 5000X RGB

- PSU: Corsair HX1000i

- KEYBOARD: Corsair K70 RGB

- MOUSE: Corsair M55 Pro RGB

- MONITOR: Samsung Odyssey OLED G8 34-inch Ultrawide

Newsletter Subscription

We openly invite the companies who provide us with review samples, or who are mentioned or discussed, to express their opinion. If any company representative wishes to respond, we will publish the response here. Please contact us if you wish to respond.
