AMD has already confirmed that refreshed variants of its Instinct MI300 series AI and HPC processors will arrive in the second half of this year, with a tweaked Instinct MI350X featuring ultra-fast HBM3E memory.

AI GPU competitor NVIDIA uses HBM3 memory on its current Hopper H100 AI GPU, while its newly announced H200 AI GPU moves to ultra-fast HBM3E memory, making it the world's first AI GPU with HBM3E. The next-gen Blackwell B200 AI GPU ships with HBM3E memory as standard.

Market research firm TrendForce recently teased AMD's new Instinct MI350X. The firm says the new Instinct MI350X will feature chiplets made on TSMC's newer 4nm process node, which is an enhanced version of TSMC's 5nm-class process node. The TSMC N4 process node will allow AMD to choose between increasing performance and lowering power consumption on its tweaked Instinct MI350X relative to the MI300 series AI GPUs.

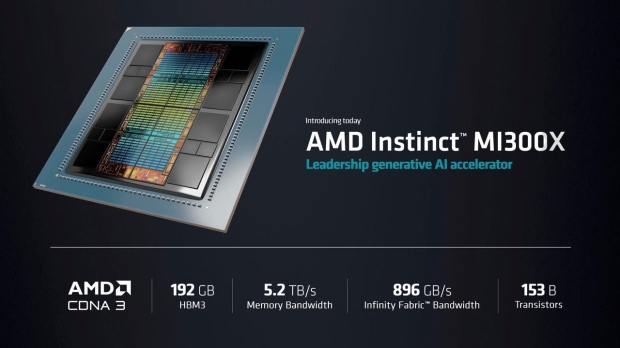

AMD's flagship Instinct MI300X AI GPU features 192GB of HBM3 memory with 12-Hi HBM stacks, while the Instinct MI300A features 128GB of HBM3 on 8-Hi HBM stacks.
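The capacity figures above follow from simple stack arithmetic. The sketch below is only illustrative: the article gives the stack heights (12-Hi and 8-Hi) and the totals, while the eight stacks per GPU and 2GB (16Gb) per DRAM die are assumptions added here to show how the totals could be reached.

```python
# Rough HBM capacity arithmetic for the MI300X and MI300A.
# ASSUMPTIONS (not stated in the article): 8 HBM3 stacks per GPU, 2GB (16Gb) per DRAM die.
STACKS_PER_GPU = 8
GB_PER_DIE = 2


def hbm_capacity_gb(stack_height, stacks=STACKS_PER_GPU, gb_per_die=GB_PER_DIE):
    """Total HBM capacity in GB: dies per stack x number of stacks x GB per die."""
    return stack_height * stacks * gb_per_die


mi300x_gb = hbm_capacity_gb(12)  # 12-Hi stacks
mi300a_gb = hbm_capacity_gb(8)   # 8-Hi stacks

print(f"MI300X: {mi300x_gb}GB, MI300A: {mi300a_gb}GB")
```

Under those assumptions, the taller 12-Hi stacks account for the whole capacity gap between the two parts, since the stack count stays the same.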

TrendForce noted that "the extension of export controls now includes not only the previously restricted AI chips from NVIDIA and AMD, such as the NVIDIA A100/H100, AMD MI250/300 series, NVIDIA A800, H800, L40, L40S, and RTX4090, but also their next-generation successors like NVIDIA's H200, B100, B200, GB200, and AMD's MI350 series. In response, HPC manufacturers have quickly developed products that comply with the new TPP and PD standards, such as NVIDIA's adjusted H20/L20/L2, which remain eligible for export."