One of our more popular articles was 'How much VRAM do you really need at 1080p, 1440p and 4K?', and I promised a follow-up where I would crank up the in-game AA. Here we are, a few days later, with that very article, pushing our video card much closer to the wall.

I swapped the NVIDIA GeForce GTX 980 Ti for the Titan X so that I wouldn't run out of VRAM as usage climbed, thanks to the Titan X sporting 12GB of GDDR5. This was the only change in hardware, but I did get some more games into the tests.

We have now added Far Cry 4 and The Witcher 3 to our VRAM consumption tests, as some of you requested. Far Cry 4 is quite taxing on our video cards when it comes to VRAM usage.

Note: We stuffed up our BF4 results in the previous article, where it had AA applied. We have run this again without AA and updated the results in this article.

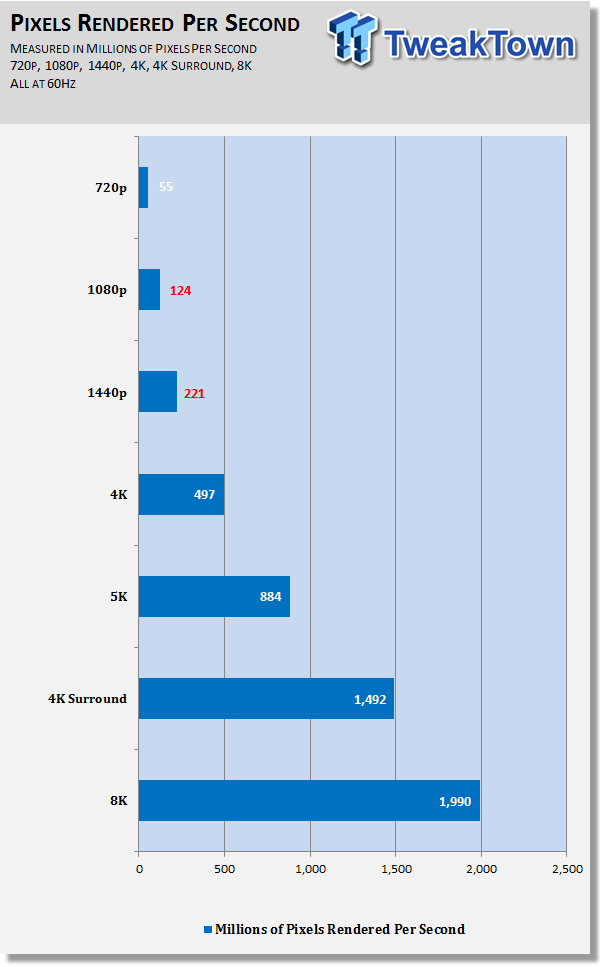

Instead of writing about how many pixels are being rendered, we've put them into a chart so you can better understand just how many pixels we're driving here today. Right now, the 'next-gen' consoles are rendering games at around 720p - 900p. If they were running at 60Hz (or 60FPS), which most of the time they aren't (it's usually 30FPS or so), they would be rendering around 55 million pixels per second at 720p.

Jumping up to 1080p, that number climbs to 124 million while 1440p has it jump to 221 million. At 4K, the pixels rendered per second at 60Hz start to get serious, with 497 million, but 4K Surround has this catapult to 1.49 billion. 8K, which is in the not-too-distant future, sees 1.99 billion pixels being rendered per second.
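The figures above come down to simple arithmetic: width x height x refresh rate. Here is a minimal Python sketch of that calculation. The resolution table and the function name are our own illustration, and we assume 4K Surround means three 4K panels side by side.

```python
# Pixels rendered per second = width * height * refresh rate.
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
    "4K Surround": (3 * 3840, 2160),  # assumption: three 4K panels side by side
    "8K": (7680, 4320),
}


def pixels_per_second(width, height, hz=60):
    """Return how many pixels are drawn each second at the given refresh rate."""
    return width * height * hz


for name, (w, h) in RESOLUTIONS.items():
    rate = pixels_per_second(w, h)
    print(f"{name}: {rate / 1e6:,.1f} million pixels per second")
```

Dropping `hz` to 30 halves every figure, which is closer to what the consoles are doing most of the time.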

- CPU: Intel Core i7 5820K processor w/Corsair H110 cooler

- Motherboard: GIGABYTE X99 Gaming G1 Wi-Fi

- RAM: 16GB Corsair Vengeance 2666MHz DDR4

- Storage: 240GB SanDisk Extreme II and 480GB SanDisk Extreme II

- Chassis: Lian Li T60 Pit Stop

- PSU: Corsair AX1200i digital PSU

- Software: Windows 7 Ultimate x64

How we ran our tests

We ran all of the normal tests from our video card reviews and recorded the average VRAM usage in megabytes (so 1400MB = 1.4GB) over each entire run. Battlefield 4 is a little different, as we run a 5-minute real-time multiplayer match and average the FPS for our benchmarks. For this, we ran it as normal and recorded VRAM consumption.
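If you want to do the same averaging on your own logs, a rough sketch in Python could look like the following. The sample readings are hypothetical placeholders, not our measured data, and the function names are our own. How you capture the readings is up to whichever monitoring tool you use.

```python
from statistics import mean


def average_vram_mb(samples_mb):
    """Average a list of VRAM readings (in MB) taken over a benchmark run."""
    if not samples_mb:
        raise ValueError("need at least one VRAM sample")
    return mean(samples_mb)


def mb_to_gb(mb):
    """Decimal conversion, matching the 1400MB = 1.4GB convention used here."""
    return mb / 1000


# Hypothetical readings logged during one run (not measured data).
samples = [1350, 1400, 1450]
avg = average_vram_mb(samples)
print(f"{avg:.0f}MB = {mb_to_gb(avg):.1f}GB")
```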

So how did we go? Well, let's see.

Heaven - 4K Surround

Far Cry 4

The Witcher 3

Battlefield 4

Metro: Last Light

Middle-earth: Shadow of Mordor

Grand Theft Auto V

Tomb Raider

Results

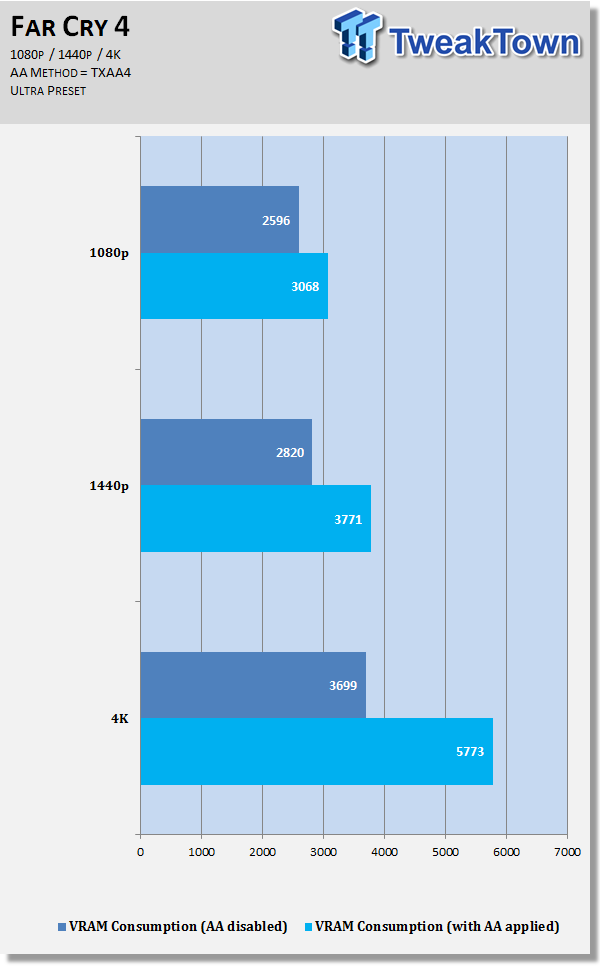

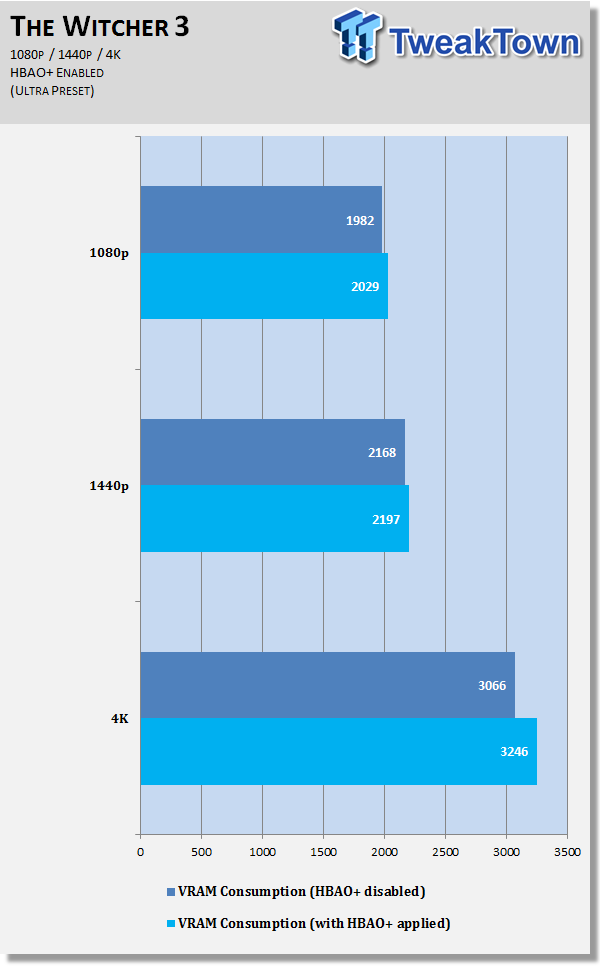

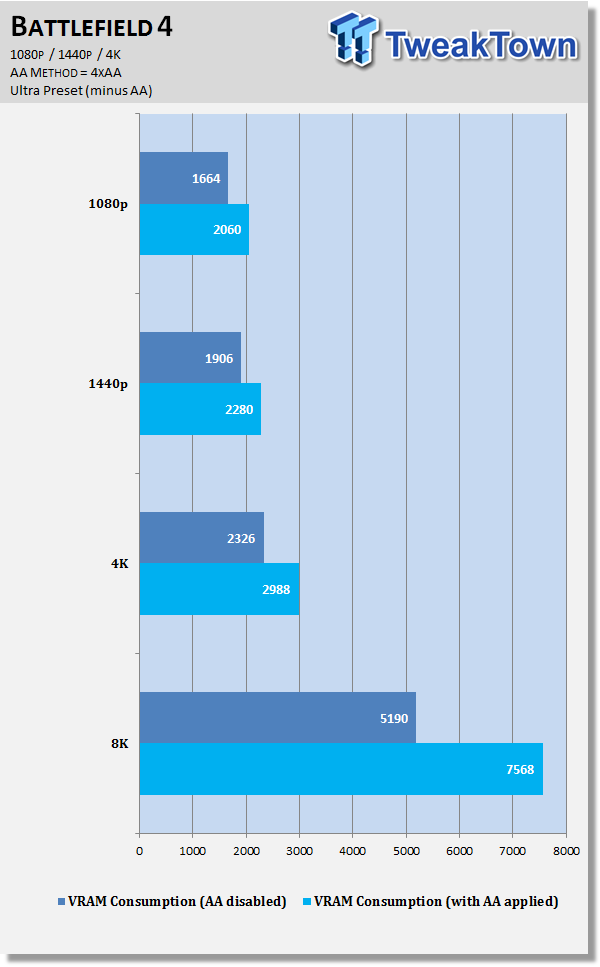

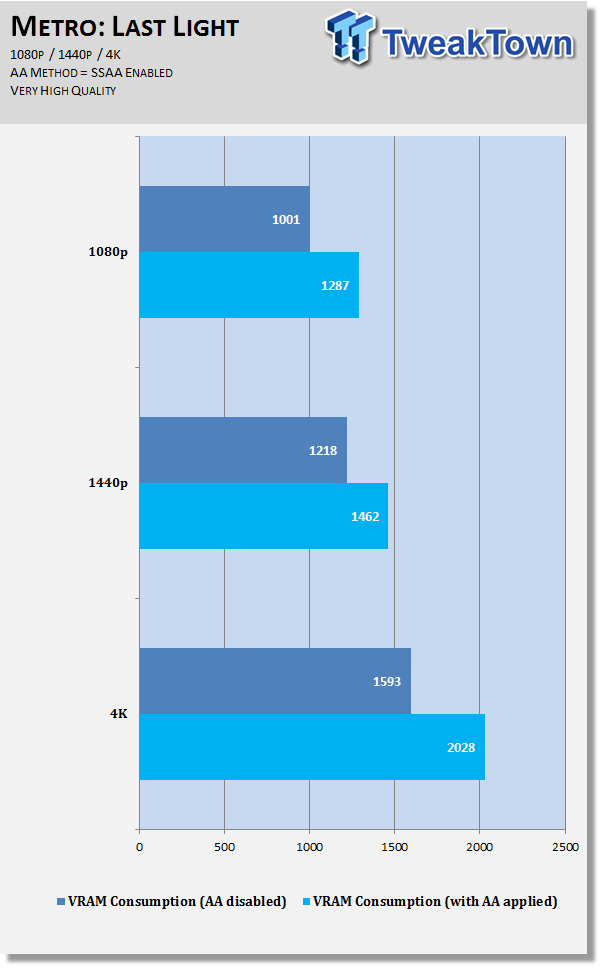

1080p: Far Cry 4 actually doesn't perform too badly at all at 1080p, but with AA enabled you're going to need 3GB+. When it comes to The Witcher 3, HBAO+ doesn't do too much at all to VRAM consumption. Battlefield 4 consumes another 20% VRAM or so at 1080p with AA enabled while Metro: Last Light uses another 30% or so - up to 1.3GB of VRAM.

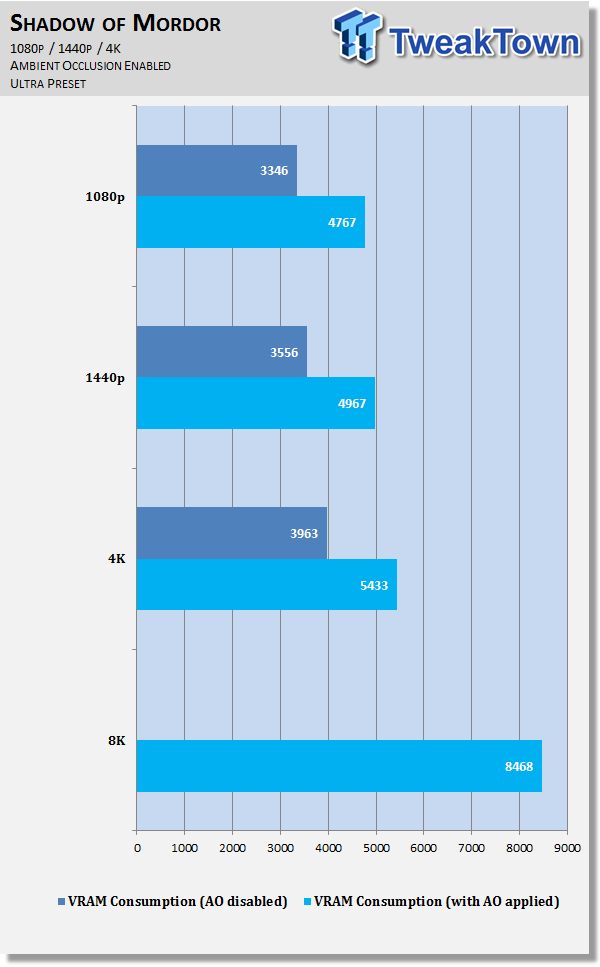

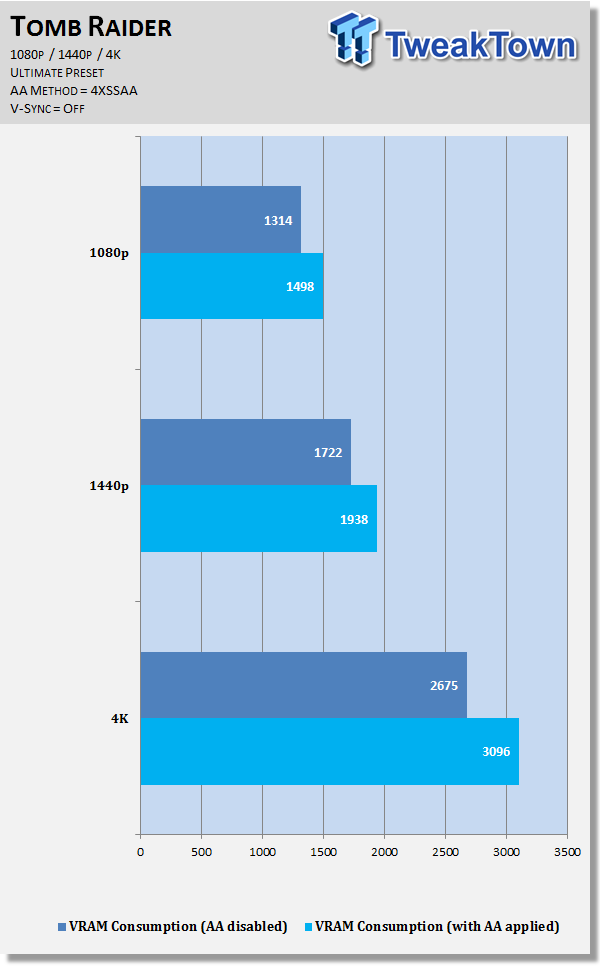

Shadow of Mordor really loves its VRAM, with 4.7GB of VRAM being used at just 1080p with AA enabled, up from the 3.3GB used without AA. GTA V doesn't use much more VRAM with AA enabled at 1080p, while Tomb Raider uses another 180MB of VRAM for a total of 1.5GB at 1080p.

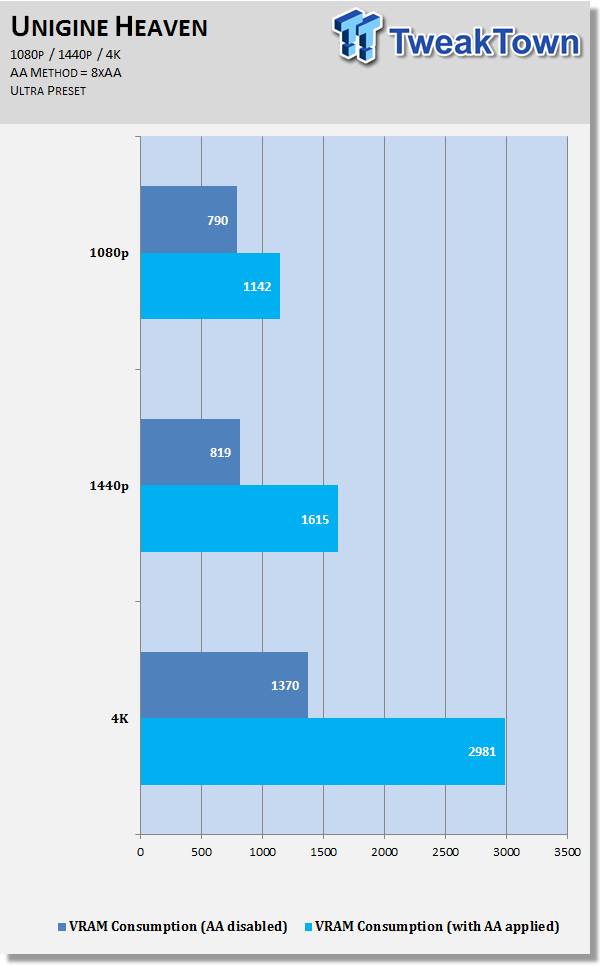

1440p: 2560x1440 is where the VRAM begins to get stressed out, starting with Heaven using 1.6GB compared to 819MB - roughly a 100% increase in VRAM consumption. But Far Cry 4 is where the fun starts, with 3.7GB used, up from 2.8GB without AA. The Witcher 3 again doesn't consume much more at all, and you won't notice any difference between 2168MB and 2197MB being used.
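To put those 1440p jumps on the same footing, here is a quick Python sketch that turns the before and after figures into percentage increases. It treats the rounded GB values quoted above as MB x 1000, which is an assumption on our part, so the results are approximate.

```python
def percent_increase(before_mb, after_mb):
    """Percentage growth in VRAM usage going from before_mb to after_mb."""
    return (after_mb - before_mb) / before_mb * 100


# (without AA, with AA) in MB; rounded GB figures are treated as MB x 1000.
results_1440p = {
    "Heaven": (819, 1600),
    "Far Cry 4": (2800, 3700),
    "The Witcher 3": (2168, 2197),
}

for game, (before, after) in results_1440p.items():
    print(f"{game}: +{percent_increase(before, after):.1f}%")
```

With the rounded 1.6GB figure, Heaven works out a little under double, while The Witcher 3 moves by barely more than one percent.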

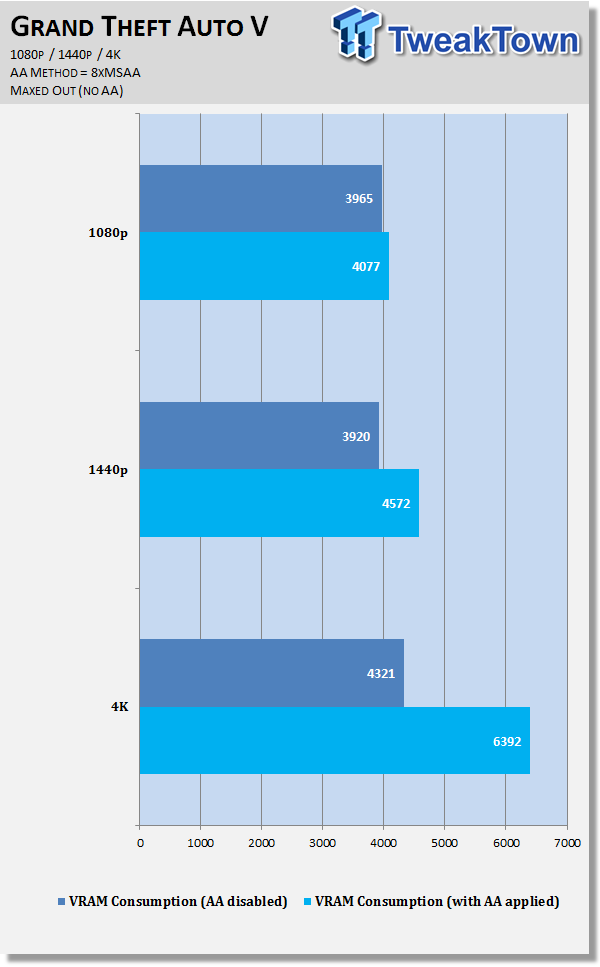

Battlefield 4 jumps up from 1.9GB to nearly 2.3GB at 1440p with AA applied, while Metro: Last Light uses another 200MB+ of VRAM at 1440p with AA enabled. Shadow of Mordor uses just under 5GB of VRAM at 1440p with AO enabled. Grand Theft Auto V uses much more VRAM at 1440p with AA enabled, jumping up from 3.9GB to nearly 4.6GB of VRAM.

4K: This is why you're here once again, to see 4K results with AA enabled. We start with Heaven, which leaps from 1.3GB to a huge 3GB of VRAM consumption with AA enabled. Far Cry 4 uses a huge 5.7GB at 4K with AA enabled, a large jump from the 3.7GB without AA enabled.

Ambient Occlusion enabled in The Witcher 3 sees the game using another 200MB of VRAM, while Battlefield 4 uses nearly 3GB of VRAM with AA enabled versus 2.3GB without AA. Metro: Last Light consumes just over 2GB of VRAM with AA enabled, versus the 1.6GB used without AA.

Shadow of Mordor really enjoys the VRAM at 4K with AO enabled, jumping from 3.9GB to 5.4GB while GTA V absolutely leaps up from its 4.3GB to a huge 6.4GB of VRAM consumption with AA enabled at 4K.

8K: Bonus round! I ran Shadow of Mordor at 4K with 200% super sampling, which renders the game at 7680x4320, or 8K. At 8K, Shadow of Mordor consumes a mammoth, VRAM-busting 8.4GB of VRAM.

Battlefield 4, on the other hand, I could run with AA both enabled and disabled. At 7680x4320 without AA, Battlefield 4 was using 5.2GB of VRAM, while enabling 4xAA saw this skyrocket to a huge 7.5GB of VRAM.
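For anyone wondering how 200% super sampling at 4K lands on 8K, the scale applies to each axis, so the pixel count quadruples. A small Python sketch of that math follows. The function name is our own, and the per-axis behaviour is inferred from the 3840x2160 to 7680x4320 result above.

```python
def supersampled_resolution(width, height, scale_percent):
    """Scale each axis by scale_percent (assumption: the percentage is per axis)."""
    factor = scale_percent / 100
    return round(width * factor), round(height * factor)


native_w, native_h = 3840, 2160
w, h = supersampled_resolution(native_w, native_h, 200)
pixel_ratio = (w * h) / (native_w * native_h)
print(f"{w}x{h}, {pixel_ratio:.0f}x the pixels of native 4K")
```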

Final Thoughts

Our first article really gave us a great look at just how much VRAM you need in games, where we honestly weren't surprised that you don't really need 4GB of VRAM or more. With AA applied - and some people really do love using AA - those numbers really start to climb above 6GB in some games, like Shadow of Mordor, or GTA V.

We aren't even maxing out the anti-aliasing settings, as you can force the NVIDIA or AMD settings much higher, but that is overkill, isn't it? For some it won't be, so if that's something you want to see us do, please do let us know. For now, we've shown you just how much more VRAM games consume with the maxed-out in-game AA settings (or AO in SoM) enabled.

One of the more interesting tests was quickly running Middle-earth: Shadow of Mordor at 8K, which consumes a gigantic 8.4GB of VRAM. 8K is the future of gaming, and while it is many years away, if today's games are using 8GB of VRAM, what will the games of tomorrow consume?

You might think that VRAM consumption isn't something to worry about, or "I don't need more than 4-6GB" - and while that applies to most of the games today - it will definitely change in the future. 8K will use 8GB+ of VRAM in the future, but by next year I think we'll begin to see video cards with 24GB of VRAM (Titan 2, I'm looking at you).

Last updated: Nov 3, 2020 at 08:12 pm CST