NVIDIA still hasn't signed on with Samsung for its HBM chips, and that is something NVIDIA will need to resolve, because it needs Samsung's silicon interposers and advanced chip packaging.

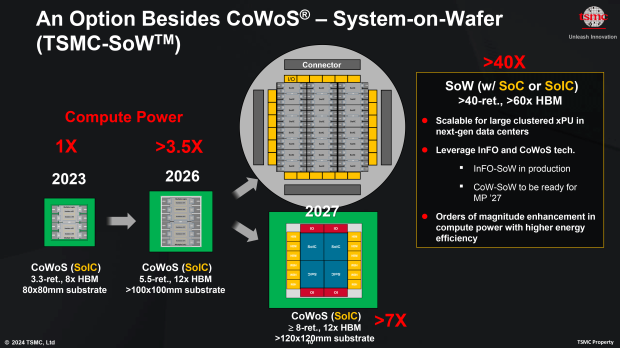

According to a new report from TheElec, the big bottleneck for NVIDIA's current-gen Hopper H100 and H200 AI GPUs is now a shortage of TSMC's CoWoS advanced packaging capacity, with an interposer shortage on the horizon. The interposer is another key part of AI chips: it integrates multiple chips into a single package, including NVIDIA's AI GPU itself, HBM chips, and more.

H100's interposer is 3.3x the size of a lithography mask, and only 9 can be cut from a 12-inch silicon wafer (on the 40nm process). However, more advanced GPUs like NVIDIA's new Blackwell B100 and B200 AI GPUs are even bigger, and by 2026, with Rubin R100 AI GPUs, interposers will need to be 5.5x the size of a mask... by 2027, we're looking at 8x.

TSMC is working on CoWoS-L (local interconnect) to shrink the interposer, but that's not an easy task, and TheElec reports that NVIDIA will eventually (and I'm sure, begrudgingly) need help from South Korean giant Samsung.