In a new article published by The Guardian, author Tom Lamont set out to talk to as many AI doomsayers as possible.

While the entire article is an interesting read, one quote in particular stood out among the experts Lamont spoke to. Eliezer Yudkowsky, an AI researcher whom Lamont described as the most pessimistic about the future of AI of all the researchers he spoke to, said humanity has a "shred of a chance" of surviving what's coming.

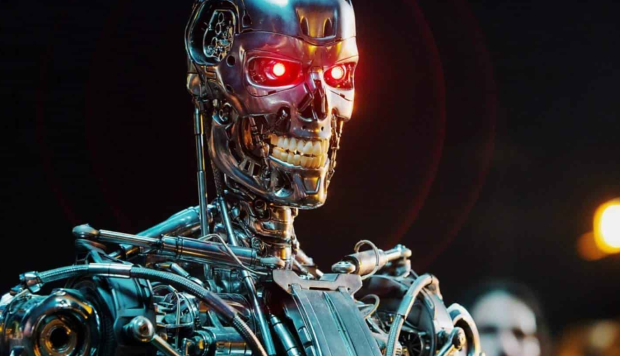

Yudkowsky is the lead researcher at a nonprofit called the Machine Intelligence Research Institute in Berkeley, California. According to the interview, Yudkowsky said that if he were "put to a wall" and forced to give probabilities on what the future entails, his answer would be: "I have a sense that our current remaining timeline looks more like five years than 50 years. Could be two years, could be 10." The two were discussing how long it will be until humanity faces a Terminator-like apocalypse or Matrix hellscape.

The AI researcher said the "difficulty is, people do not realize" what is on the horizon, and that people should picture "an alien civilization that thinks a thousand times faster than us," but in so many boxes that it would be impossible for us to completely dismantle them even if we wanted to. It should be noted that Yudkowsky penned an article in Time last year that called for airstrikes on data centers where AIs are trained.
