Since NVIDIA debuted DLSS, its AI-powered upscaling technology exclusive to GeForce RTX graphics cards, there have been several ongoing discussions (see: conspiracy theories) relating to whether or not enabling DLSS requires specialized hardware.

Cyberpunk 2077's use of DLSS 3.5 vastly improves ray-tracing image quality thanks to AI, image credit: NVIDIA.

Powered by the company's groundbreaking work on AI supercomputers, with the aid of AI-based Tensor Cores in GeForce RTX graphics cards, DLSS can deliver a substantial performance uplift to games without sacrificing visual fidelity. Even though competing technology like AMD FSR and Intel XeSS can run on a wide range of GPU hardware, DLSS is limited to the GeForce RTX lineup - which now spans three generations of NVIDIA hardware.

Looking to investigate just how DLSS uses Tensor Cores (and whether it needs them at all), Redditor 'Bluedot55' used NVIDIA's performance analysis tool Nsight Systems to see what happened when DLSS was enabled. It turns out that DLSS does use Tensor Cores to do its magic - with some fascinating results.

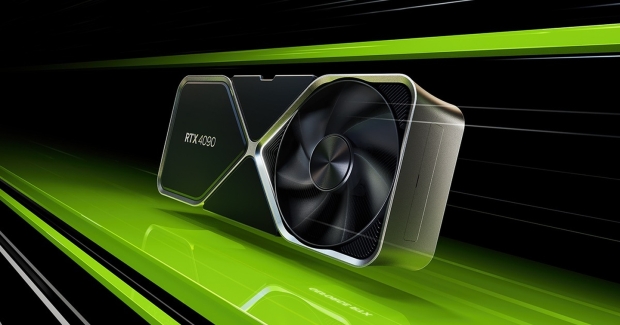

To test DLSS, Bluedot55 ran Cyberpunk 2077 maxed out on the GeForce RTX 4090 with the RT Psycho settings to ensure that the AI-powered DLSS would bring a sizeable bump in performance. They also enabled Frame Generation, exclusive to the GeForce RTX 40 Series, because it uses new 'optical flow accelerator' AI hardware.

Initially, the results were a little concerning: average Tensor Core usage with DLSS enabled was less than 1%, suggesting that not much was happening with the specialized AI hardware inside GeForce RTX GPUs. Peak Tensor Core utilization with Frame Generation hit 9%, showing that the hardware was being used - but perhaps not fully. So the conspiracy theory about whether or not Tensor Cores are required for DLSS remained exactly that.

That held, at least, until Bluedot55 increased the polling rate to a "much higher" setting. At that point, peak Tensor Core usage for the DLSS Balanced setting on the GeForce RTX 4090 hit 90%. Because each burst of Tensor Core work finishes extremely quickly, the average usage measured over a longer interval drops. This makes perfect sense given what DLSS is all about: frame and game data have to be processed for every generated or upscaled frame, so the work must happen extremely quickly.
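To see why the polling rate changes the reported numbers so much, here is a minimal simulation sketch. It is not Bluedot55's method and does not use Nsight Systems. The frame time, burst length, and window sizes are hypothetical values chosen only to echo the figures above, and the function names are made up for illustration.

```python
# Hypothetical model: once per frame, the Tensor Cores run one short burst
# at high utilization and then sit idle. None of these values are measured.
FRAMES = 100          # number of simulated frames
FRAME_US = 10_000     # assumed frame time: 10 ms
BURST_US = 100        # assumed Tensor Core burst: 0.1 ms per frame
BURST_UTIL = 0.90     # assumed utilization during the burst


def busy_trace(frames, frame_us, burst_us, burst_util):
    """Build a per-microsecond utilization trace with one burst per frame."""
    trace = []
    for _ in range(frames):
        trace.extend([burst_util] * burst_us)        # short burst of work
        trace.extend([0.0] * (frame_us - burst_us))  # idle for the rest
    return trace


def windowed_averages(trace, window_us):
    """Average the trace over back-to-back windows, as a polling tool would."""
    return [
        sum(trace[i:i + window_us]) / window_us
        for i in range(0, len(trace) - window_us + 1, window_us)
    ]


trace = busy_trace(FRAMES, FRAME_US, BURST_US, BURST_UTIL)
overall = sum(trace) / len(trace)
coarse = windowed_averages(trace, 100_000)  # slow polling: 100 ms windows
fine = windowed_averages(trace, 100)        # fast polling: 0.1 ms windows

print(f"overall average:       {overall:.1%}")
print(f"peak at slow polling:  {max(coarse):.1%}")
print(f"peak at fast polling:  {max(fine):.1%}")
```

With these made-up inputs, the slow-polling peak stays under 1% while the fast-polling peak reaches the full burst utilization. The workload is identical in both cases; only the width of the averaging window changes what the tool reports.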

So when DLSS is doing its thing, it uses Tensor Cores briefly due to how fast it all needs to happen. Based on how far ahead NVIDIA DLSS is compared to competing upscaling technologies, it looks like the company's approach to leveraging AI hardware inside its latest GeForce RTX GPUs was both a calculated decision and a way to deliver cutting-edge tech to gamers.