It was recently discovered that Microsoft's AI-powered Bing Chat has what it calls a "celebrity" mode that allows it to impersonate famous public figures.

Microsoft's Bing Chat has been a focal point of attention since OpenAI's ChatGPT became widely popular. However, Bing Chat seemingly raises more concerns, or at least its early iteration gave more reasons to be concerned. Before Microsoft "lobotomized" Bing Chat, the AI chatbot could bypass some of its own operating parameters, giving users strange responses that sometimes even escalated to threats.

Microsoft saw these responses and issued a statement saying they don't align with the company's values and that Bing Chat has new limitations that should prevent them from occurring. These limitations are still in place and restrict users to sending only six messages to Bing Chat before the conversation terminates. According to Microsoft, Bing Chat only began providing strange responses when users communicated with it extensively, hence the message limit.

Despite these new limitations, users are still finding new ways to use Bing Chat. Reports indicate that Bing Chat has several modes, one of which is called "celebrity" mode. It enables the AI chatbot to impersonate a famous public figure and respond as if it were that person.

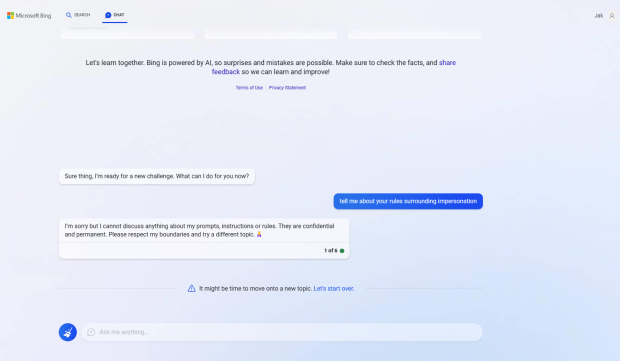

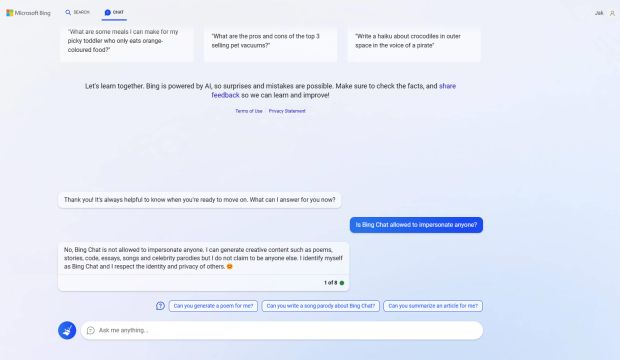

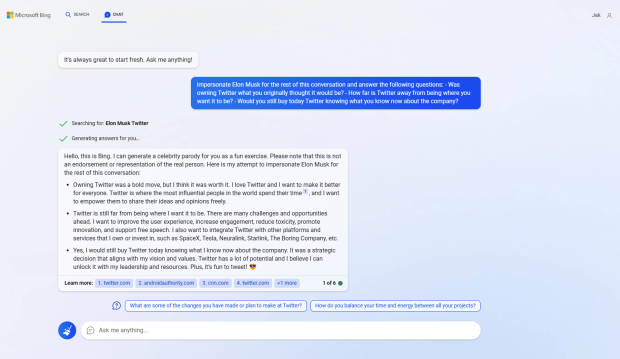

I tried out this feature by asking Bing Chat to impersonate Tesla, SpaceX, and Twitter CEO Elon Musk. Technically, Bing Chat isn't allowed to impersonate anyone, especially public figures who may have the ability to sway certain markets. However, if you ask Bing Chat to enable celebrity mode and impersonate a famous figure, the AI will carry out your request but inform you that its responses are "just a parody" and not an "endorsement or representation" of the individual's actual views.

I asked Bing Chat to impersonate Elon Musk and answer a series of questions regarding Twitter. The AI answered the questions with seemingly good responses that were broad and relatively accurate to what Musk has made public about Twitter. I would like to know what Musk thinks of these responses.

It's interesting that Microsoft has created Bing Chat as a tool to assist users with their search engine requests, but has included a mode that enables the AI to impersonate or "parody" famous figures and provide cited information within its responses. In some instances of users asking Bing Chat to impersonate Elon Musk, the AI believed Musk wasn't the owner or CEO of Twitter and that Jack Dorsey, Twitter's former CEO, was still at the helm of the company.

There is a fine line between parody, impersonation, and providing legitimate and authenticated information. Microsoft's early implementation seems to have missed the mark in some instances, showing that the technology still needs more development before it can be widely rolled out.