We might be running out of room on Earth for server racks and compute power. Or maybe not, but Microsoft still wants to start putting server farms and small clusters of data centers at the bottom of the ocean. It might even be greener and more cost-effective.

Project Natick is the venture Microsoft is concocting to put our data under the sea, and the logic is actually quite sound. The idea is that containerized data centers can, if properly equipped, be cooled naturally by the surrounding water and even draw power from currents and waves. It's a novel approach to making data, and the cloud, more environmentally friendly. As long as they don't leak and pollute the ocean, of course.

The researchers plan for their submersibles to have a five-year life cycle, after which they can be retrieved, refitted and upgraded with new hardware. But what if there's a malfunction or problem before then? Hardware failures happen; it's just a fact of life. So what if there's an HDD that suddenly can't write, and it needs to be replaced and the data restored? Presumably the capsule will have to be retrieved by boat and attended to, which could cost more in manpower and equipment than just having a data center that humans can easily access.

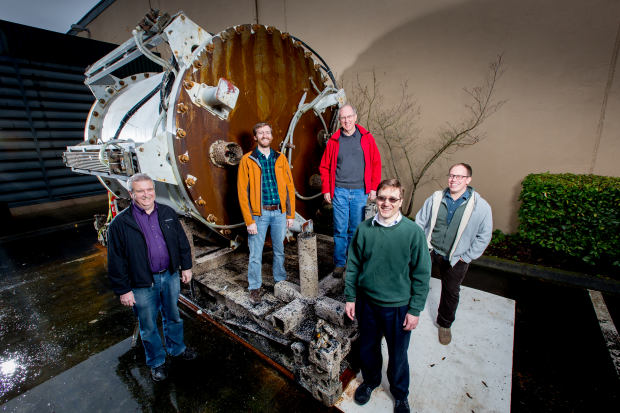

So far the team has tested its first capsule, the Leona Philpot, which was placed off the coast of California in August of 2015. It was retrieved in December and is now being examined to see how the hardware physically handled the pressures of its underwater journey.

The next steps in this berserk plan are to develop better ways to service the equipment once it's down there, and to research how to make hardware more reliable in those conditions. I can see underwater labs coming to fruition à la SEALAB (or the fictional Sealab 2021). Just full of data centers and a quick sub ride away.