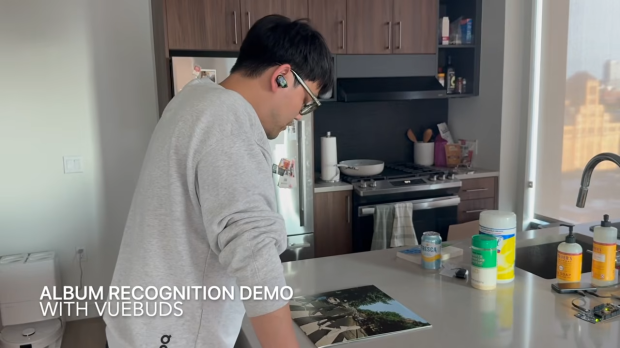

Researchers at the University of Washington have unveiled Vuebuds, a prototype pair of wireless earbuds equipped with tiny cameras that enable real-time visual AI interactions.

Designed to change how people interact with their surroundings, Vuebuds let wearers look at an object and ask a question about it, with an answer delivered through the earbuds in about a second. Smart glasses have struggled to gain mainstream adoption, and Vuebuds aim to offer a more discreet and intuitive alternative.

The system captures still images with low-resolution, black-and-white cameras rather than recording continuous video. This is a deliberate choice that addresses privacy concerns while still providing useful context.

A key feature of Vuebuds is on-device processing: image analysis happens locally instead of in the cloud. This reduces latency and enhances privacy by keeping sensitive data off external servers.

The technology reflects a growing trend toward compact, AI-powered wearables built into everyday devices. By integrating visual intelligence into earbuds, Vuebuds open the door to new use cases, from hands-free product searches while shopping to real-time assistance for visually impaired users.

Although still a prototype, Vuebuds show how AI-driven perception could move beyond screens and glasses, toward a future where everyday audio devices double as intelligent companions that help users understand the world around them. Concepts like this point to ways Apple could improve AirPods. The visual technology in Vuebuds could also find its way into mainstream wearables such as Meta's smart glasses.