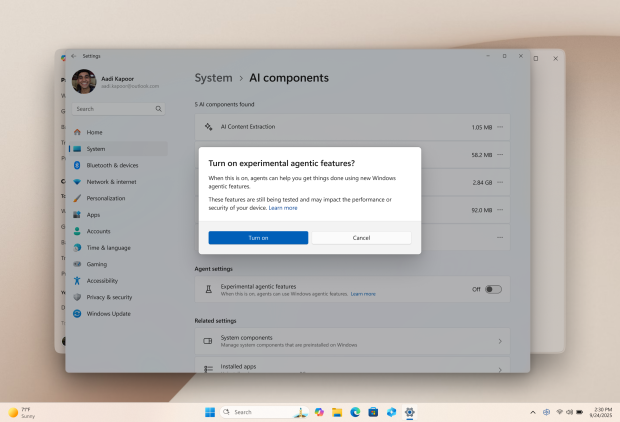

Microsoft has proclaimed on multiple occasions that Windows 11 and Windows in general are transforming into an 'Agentic OS,' and the latest 'Experimental Agentic Features' included in a recent Windows 11 preview build offer a first honest look at a Windows PC becoming an AI PC. The quick summary is that AI Agents will have their own accounts and privileges and run in the background while you're using your PC, leading to a situation where multiple users are logged in to your PC, with you being the only human.

Basically, you'll be able to interact with your PC using natural language, while these AI Agents handle everything from launching office apps and creating charts to browsing, finding a deal, buying a new appliance, and searching through images to find something specific. The agents will run in the background, with Copilot as the primary interface.

Microsoft notes that you'll be able to monitor AI Agents the same way you monitor apps. It also confirms that these agents are prone to hallucinating and can even be tricked into installing malware or sending sensitive data and files to bad actors. That makes you wonder why anyone would enable these 'Experimental Agentic Features' when Microsoft is adamant that they pose a real security risk.

"AI models still face functional limitations in terms of how they behave and occasionally may hallucinate and produce unexpected outputs," Microsoft writes. "Additionally, agentic AI applications introduce novel security risks, such as cross-prompt injection (XPIA), where malicious content embedded in UI elements or documents can override agent instructions, leading to unintended actions like data exfiltration or malware installation."

Microsoft's 'Agentic security and privacy principles' outline the company's goals for these agents. Even though they're autonomous entities, they'll keep detailed logs of their activities, and users will be able to supervise them. In addition, access to sensitive information will be "granular, specific and time-bound" and will only occur in "user-authorized contexts." Also, agents aren't allowed to engage with other agents; only the owner is allowed to do so.

It all reads like the beginning of a sci-fi story where a rogue AI kickstarts the end of civilization by leaking someone's credit card and passport details to a group of hackers. Even the best-case scenario sounds counterproductive. Instead of jumping onto a PC, clicking a button, and typing, your hands are tied as you try to wrangle a team of stoners and guide them through multiple steps just to send an email.