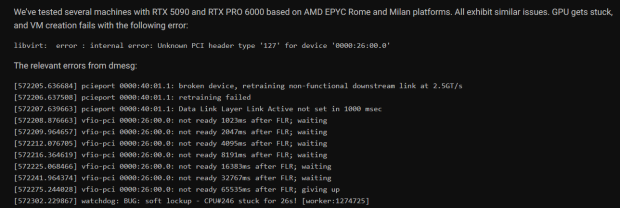

NVIDIA's high-end GeForce RTX 5090 and RTX PRO 6000 cards are hitting a new bug: after a few days of running virtualized workloads they become unresponsive, and it takes a full system reboot to get them back online.

CloudRift, a GPU cloud for developers, reports that both the RTX 5090 and RTX PRO 6000 became completely unresponsive after a "few days" of VM usage. The GPUs can't be accessed again until the host node is rebooted. Thankfully, the problem appears limited to these two cards: the RTX 4090, Hopper H100, and Blackwell B200 aren't affected, for now.

What's happening exactly? The GPU is assigned to a VM using the VFIO device driver, and after a Function Level Reset (FLR), the GPU stops responding entirely. That in turn triggers a kernel "soft lockup", which leaves both the host and guest environments deadlocked. The only way out of that deadlock is to reboot the machine, which isn't an easy thing to do for CloudRift, as it hosts a large volume of guest machines.
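To make that failure mode more concrete, here is a minimal Python sketch of how a Linux host could check whether a passed-through GPU still answers on the PCI bus. Neither CloudRift nor NVIDIA has described their diagnostics this way, so treat it as an illustration under stated assumptions: the function name `probe_pci_device` and the example address are hypothetical, and the check relies on the general PCI behavior that reads from a device that has stopped responding come back as all ones (a vendor ID of `0xFFFF`).

```python
import os
import struct

NVIDIA_VENDOR_ID = 0x10DE  # PCI vendor ID assigned to NVIDIA


def probe_pci_device(bdf, sysfs_root="/sys/bus/pci/devices"):
    """Hypothetical helper: report basic health info for one PCI device.

    bdf is the device address, e.g. "0000:01:00.0" (an example value only).
    """
    dev_dir = os.path.join(sysfs_root, bdf)

    # The first four bytes of PCI config space hold the vendor ID and
    # device ID, both little-endian 16-bit values.
    with open(os.path.join(dev_dir, "config"), "rb") as f:
        header = f.read(4)
    if len(header) < 4:
        raise ValueError(f"short config space read for {bdf}")
    vendor_id, device_id = struct.unpack("<HH", header)

    # "driver" is a symlink to the bound kernel driver (e.g. vfio-pci).
    driver_link = os.path.join(dev_dir, "driver")
    driver = None
    if os.path.islink(driver_link):
        driver = os.path.basename(os.path.realpath(driver_link))

    return {
        "vendor_id": vendor_id,
        "device_id": device_id,
        # All-ones usually means the device no longer answers on the bus.
        "responsive": vendor_id != 0xFFFF,
        "is_nvidia": vendor_id == NVIDIA_VENDOR_ID,
        "driver": driver,
        # The kernel exposes a "reset" file only if it knows a reset method.
        "reset_exposed": os.path.exists(os.path.join(dev_dir, "reset")),
    }
```

Calling `probe_pci_device("0000:01:00.0")` on a healthy host would be expected to show `responsive: True` and, for a passed-through card, `driver: "vfio-pci"`. On a host that is already in the soft-lockup state described above, a probe like this may never return, so it is better suited to periodic checks between VM sessions than to recovery.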

CloudRift isn't the only one affected either: a user on the Proxmox forums reported something similar, witnessing a complete host crash after shutting down a Windows guest. He said that NVIDIA has responded to the problem, confirming that the company has been able to reproduce the issue and is currently working on a fix.

Better yet, CloudRift is offering a $1000 bug bounty to anyone who can fix or mitigate the issue. NVIDIA is at work on its own fix, which shouldn't be too far away, especially given that the bug is having a negative effect on AI workloads.