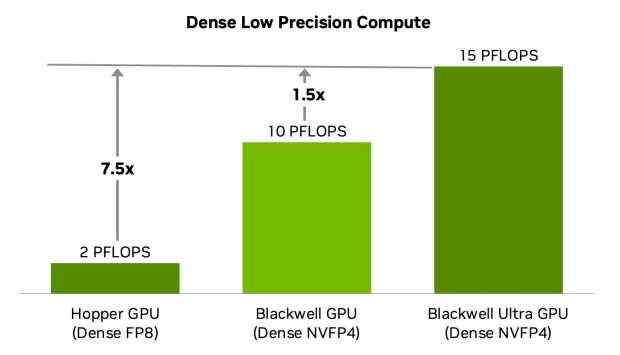

NVIDIA has plenty to detail at Hot Chips 2025, including its new Blackwell Ultra GB300 GPU: the fastest AI chip the company has ever made, and 50% faster than GB200.

The newest entry in the Blackwell AI GPU family, arriving before NVIDIA's next-gen Rubin AI chips debut in 2026, Blackwell Ultra GB300 features two reticle-sized Blackwell GPU dies connected through NVIDIA's in-house NV-HBI high-bandwidth interface, so they appear as a single GPU.

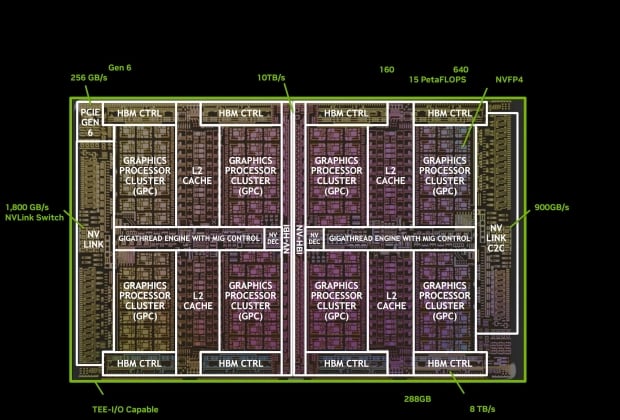

The Blackwell Ultra GPU is made on TSMC's 4NP process node (a 5nm-class node optimized for NVIDIA) and packs 208 billion transistors in total, beating the 185 billion transistors in AMD's new flagship Instinct MI355X AI accelerator. The NV-HBI interface on Blackwell Ultra GB300 provides 10TB/sec of bandwidth between the two GPU dies, letting them function as a single chip.

NVIDIA's new Blackwell Ultra GB300 GPU features 160 SMs, each containing 128 CUDA cores for a total of 20,480 CUDA cores, alongside 5th Gen Tensor Cores with FP8, FP6, and NVFP4 precision compute, 256KB of Tensor Memory (TMEM), and SFUs.
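
The core count quoted above follows from simple multiplication. The short Python sketch below just reproduces that arithmetic; the variable names are illustrative, and the aggregate TMEM figure is derived here on the assumption that the 256KB applies to each SM, rather than being a number NVIDIA quotes.

```python
# Figures quoted in the article for Blackwell Ultra GB300.
SM_COUNT = 160            # streaming multiprocessors per GPU
CUDA_CORES_PER_SM = 128   # CUDA cores in each SM
TMEM_KB_PER_SM = 256      # Tensor Memory, assumed to be per SM

total_cuda_cores = SM_COUNT * CUDA_CORES_PER_SM

# Derived here, not an official figure: TMEM summed across all SMs.
total_tmem_mb = SM_COUNT * TMEM_KB_PER_SM / 1024

print(f"CUDA cores: {total_cuda_cores:,}")        # CUDA cores: 20,480
print(f"Aggregate TMEM: {total_tmem_mb:.0f} MB")  # Aggregate TMEM: 40 MB
```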

The AI work happens inside those 5th Gen Tensor Cores. NVIDIA has made major changes with each Tensor Core generation, and the 5th Gen is no different. Here is how Tensor Cores have evolved across GPU architectures:

- NVIDIA Volta: 8-thread MMA units, FP16 with FP32 accumulation for training.

- NVIDIA Ampere: Full warp-wide MMA, BF16, and TensorFloat-32 formats.

- NVIDIA Hopper: Warp-group MMA across 128 threads, Transformer Engine with FP8 support.

- NVIDIA Blackwell: 2nd Gen Transformer Engine with FP8, FP6, NVFP4 compute, and TMEM.
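
To illustrate why the "FP16 with FP32 accumulation" pattern from the Volta entry matters, here is a minimal software emulation in plain Python. It is only a sketch: the helper names are hypothetical, rounding is emulated with the standard `struct` module, and it says nothing about how Tensor Cores are actually built. It shows a running sum stalling when the accumulator itself is kept in FP16.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

def dot(a, b, round_acc):
    """Dot product with FP16 inputs and an accumulator rounded by round_acc."""
    acc = 0.0
    for x, y in zip(a, b):
        # The product of two FP16 values is exact in Python's double precision.
        product = to_fp16(x) * to_fp16(y)
        acc = round_acc(acc + product)
    return acc

ones = [1.0] * 4096
fp16_acc = dot(ones, ones, to_fp16)  # accumulator kept in FP16
fp32_acc = dot(ones, ones, to_fp32)  # accumulator kept in FP32
print(fp16_acc, fp32_acc)  # 2048.0 4096.0
```

The FP16 accumulator stops growing at 2048 because the next representable half-precision value is 2050, so adding 1 rounds back down. The FP32 accumulator reaches the correct 4096.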

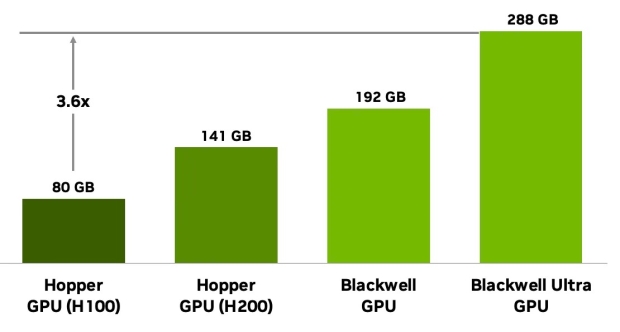

Blackwell Ultra GB300 also gets a big HBM capacity increase, with up to 288GB of HBM3E per GPU compared to 192GB on GB200. That opens the door to NVIDIA supporting multi-trillion-parameter AI models, with the HBM3E arriving in eight 12-Hi stacks behind 16 512-bit memory controllers (an 8192-bit interface) and 8TB/sec of memory bandwidth per GPU. At 288GB, GB300 offers 3.6x the 80GB of HBM on H100 and 50% more HBM than GB200. This allows for:

- Complete model residence: 300B+ parameter models without memory offloading.

- Extended context lengths: Larger KV cache capacity for transformer models.

- Improved compute efficiency: Higher compute-to-memory ratios for diverse workloads.
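
The memory figures above can be checked with a few lines of arithmetic. The Python sketch below reproduces the bus width and capacity ratios from the quoted numbers, then estimates a weights-only footprint for a 300B-parameter model. The `weight_footprint_gb` helper and the choice of bit widths are assumptions made for illustration; the estimate ignores KV cache, activations, and runtime overhead.

```python
# Figures quoted in the article.
HBM_GB = {"H100": 80, "GB200": 192, "GB300": 288}
CONTROLLERS = 16
BITS_PER_CONTROLLER = 512

bus_width_bits = CONTROLLERS * BITS_PER_CONTROLLER   # 8192
ratio_vs_h100 = HBM_GB["GB300"] / HBM_GB["H100"]     # 3.6
ratio_vs_gb200 = HBM_GB["GB300"] / HBM_GB["GB200"]   # 1.5

def weight_footprint_gb(params_billion: float, bits_per_param: int) -> float:
    """Weights-only footprint in GB (1 GB = 1e9 bytes).

    Hypothetical helper: ignores KV cache, activations, and overhead.
    """
    return params_billion * bits_per_param / 8

for bits in (16, 8, 4):
    gb = weight_footprint_gb(300, bits)
    verdict = "fits" if gb <= HBM_GB["GB300"] else "does not fit"
    print(f"300B params at {bits}-bit: {gb:.0f} GB, {verdict} in 288 GB")
```

Under these assumptions, a 300B-parameter model stays resident on a single GPU only at around 4-bit weights, which lines up with the emphasis on low-precision formats such as NVFP4.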

These enhancements aren't just about raw FLOPS. The new Tensor Cores are tightly integrated with 256 KB of Tensor Memory (TMEM) per SM, optimized to keep data close to the compute units. They also support dual-thread-block MMA, where paired SMs cooperate on a single MMA operation, sharing operands and reducing redundant memory traffic.

The result is higher sustained throughput, better memory efficiency, and faster large-batch pre-training, reinforcement learning for post-training, and low-batch, high-interactivity inference.
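
One way to see why pairing SMs can cut redundant memory traffic is a simple operand-count model. The sketch below is an illustration under stated assumptions, not NVIDIA's implementation: it assumes two SMs together compute one output tile of C = A × B, each owning half the rows of A, and the tile shape and function name are hypothetical.

```python
def operand_elements_loaded(m: int, n: int, k: int, paired: bool) -> int:
    """Operand elements fetched by two SMs computing an m x n tile of C = A @ B.

    Simplified model (an assumption, not documented hardware behavior):
    each SM owns m/2 rows of A. Unpaired, each SM also fetches all of B.
    Paired, each SM fetches only half of B and the pair shares it.
    """
    a_per_sm = (m // 2) * k
    b_per_sm = (k * n) // 2 if paired else k * n
    return 2 * (a_per_sm + b_per_sm)

# Hypothetical tile shape chosen only for illustration.
M, N, K = 256, 256, 64
independent = operand_elements_loaded(M, N, K, paired=False)  # 49152
cooperative = operand_elements_loaded(M, N, K, paired=True)   # 32768
print(independent, cooperative, cooperative / independent)
```

In this toy model, cooperation removes the duplicated fetch of B, so the pair loads about one third fewer operand elements for the same output tile.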

Blackwell Ultra GB300 is offered in the following system configurations:

- NVIDIA GB300 NVL72 rack-scale system: This liquid-cooled rack integrates 36 Grace Blackwell Superchips, interconnected through NVLink 5 and NVLink Switching, enabling 1.1 exaFLOPS of dense FP4 compute. The GB300 NVL72 also enables 50x higher AI factory output, combining 10x better responsiveness (TPS per user) and 5x higher throughput per megawatt relative to Hopper platforms. GB300 systems also redefine rack power management: they rely on multiple power-shelf configurations to handle synchronous GPU load ramps, and NVIDIA's power smoothing innovations, including energy storage and burn mechanisms, help stabilize power draw across training workloads.

- NVIDIA HGX and DGX B300 systems: Standardized 8-GPU Blackwell Ultra configurations. NVIDIA HGX B300 and NVIDIA DGX B300 systems continue to support flexible deployment models for AI infrastructure while maintaining full CUDA and NVLink compatibility.
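
The rack-level figures for the GB300 NVL72 can be broken down per GPU with some quick arithmetic. The Python sketch below is a rough check, not an official breakdown: the 72-GPU count is an assumption inferred from the "NVL72" name (36 superchips with two GPUs each), and the variable names are illustrative.

```python
# Figures quoted in the article for GB300 NVL72.
RACK_DENSE_FP4_EXAFLOPS = 1.1
HBM_GB_PER_GPU = 288
TPS_PER_USER_GAIN = 10   # vs. Hopper platforms
THROUGHPUT_PER_MW_GAIN = 5   # vs. Hopper platforms

# Assumption: 72 GPUs per rack, inferred from the "NVL72" name
# (36 superchips x 2 GPUs each); the article does not state this directly.
GPUS_PER_RACK = 72

per_gpu_petaflops = RACK_DENSE_FP4_EXAFLOPS * 1000 / GPUS_PER_RACK
rack_hbm_tb = GPUS_PER_RACK * HBM_GB_PER_GPU / 1000
factory_output_gain = TPS_PER_USER_GAIN * THROUGHPUT_PER_MW_GAIN

print(f"Dense FP4 per GPU: {per_gpu_petaflops:.1f} PFLOPS")
print(f"HBM3E per rack: {rack_hbm_tb:.1f} TB")
print(f"AI factory output gain: {factory_output_gain}x")
```

The 50x claim is the product of the two quoted gains, and under the 72-GPU assumption the rack figure works out to roughly 15 PFLOPS of dense FP4 per GPU.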