When NVIDIA announced the new GeForce RTX 50 Series and RTX Blackwell architecture earlier this year, the company focused heavily on the new architecture's AI capabilities and how new 'neural shaders' and AI rendering tools will drive the next generation of real-time graphics.

One of the technologies the company introduced is RTX Neural Texture Compression, which shifts away from block-compressed textures to neurally compressed textures and cuts texture VRAM usage by up to 7X. In the age of 4K gaming, where high-quality textures sit in VRAM, a proper implementation of this technology will dramatically reduce the VRAM required to run games at 1440p or 4K with high-quality textures.

Neural texture compression is a reality in DirectX 12 thanks to a recent update introducing Cooperative Vectors for rendering. Intel also leverages Cooperative Vectors for its own neural texture compression, as seen in its walking T-Rex demonstration. YouTube channel Compusemble has put together a video of Intel and NVIDIA's AI-powered texture compression demos in action, and the results are impressive.

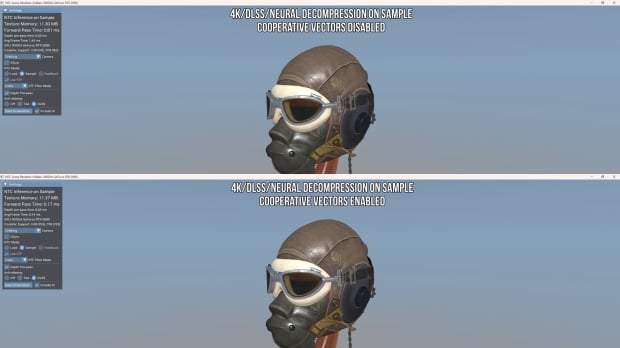

Cooperative Vectors is the key to making this technology a reality. As with other AI rendering tools like DLSS, there's a cost in rendering time to use AI for texture compression. The good news is that in the video, the render time increases only slightly, going from 0.045ms with traditional block compression to 0.111ms with neural texture compression. Even better, the AI approach improves texture quality and detail. Without Cooperative Vectors, the render cost increases dramatically to 5.7ms.
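To put those timings in context, here is a rough sketch that expresses each figure as a share of a single frame. The three render times come from the video; the 60 fps target (a 16.67ms frame budget) and the `budget_share` helper are assumptions added for illustration, not something the demo specifies.

```python
# Render times quoted from the demo video, in milliseconds.
COSTS_MS = {
    "block compression": 0.045,
    "neural, with Cooperative Vectors": 0.111,
    "neural, without Cooperative Vectors": 5.7,
}

# Assumption: a 60 fps target, which gives roughly 16.67 ms per frame.
FRAME_BUDGET_MS = 1000.0 / 60.0


def budget_share(cost_ms, budget_ms=FRAME_BUDGET_MS):
    """Return the cost as a percentage of one frame's time budget."""
    return 100.0 * cost_ms / budget_ms


for label, cost in COSTS_MS.items():
    print(f"{label}: {cost} ms = {budget_share(cost):.2f}% of a 60 fps frame")

# How much Cooperative Vectors reduces the neural decompression cost.
speedup = COSTS_MS["neural, without Cooperative Vectors"] / COSTS_MS[
    "neural, with Cooperative Vectors"
]
print(f"Cooperative Vectors speedup: about {speedup:.0f}x")
```

Under that assumed budget, the neural path with Cooperative Vectors stays well under 1% of a frame, while the path without them would consume roughly a third of it.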

We're now at the proof-of-concept stage for Cooperative Vectors, and with these results, it's likely only a matter of time before we start seeing games implement neural texture compression support. In the NVIDIA demo, the VRAM requirement decreases from 98MB to 11.37MB, a reduction that could breathe new life into capable GPUs currently held back by VRAM limitations - namely, 8GB cards like the GeForce RTX 5060, RTX 5060 Ti 8GB, and Radeon RX 9060 XT 8GB.
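The demo's VRAM figures can be turned into a compression ratio and a percentage saved with a few lines of arithmetic. This is a minimal sketch using only the two numbers quoted above; the `compression_summary` helper is a hypothetical name, and the result describes this one demo rather than what a full game would see.

```python
def compression_summary(before_mb, after_mb):
    """Return (compression ratio, percent of memory saved)."""
    ratio = before_mb / after_mb
    saved_pct = 100.0 * (1.0 - after_mb / before_mb)
    return ratio, saved_pct


# Figures from the NVIDIA demo: 98MB block-compressed, 11.37MB neural.
ratio, saved_pct = compression_summary(98.0, 11.37)
print(f"{ratio:.2f}x smaller, {saved_pct:.1f}% of texture memory saved")
```

For the demo's numbers this works out to roughly an 8.6x reduction, or about 88% of the texture memory saved.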