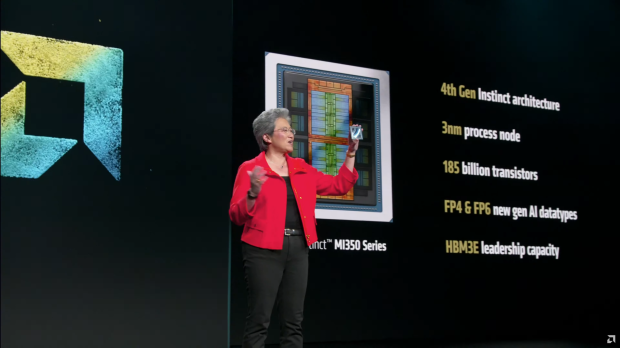

AMD launched its new Instinct MI350 series AI accelerators today, rocking 185 billion transistors, up to 288GB of HBM3E memory, FP4 and FP6 support, and more.

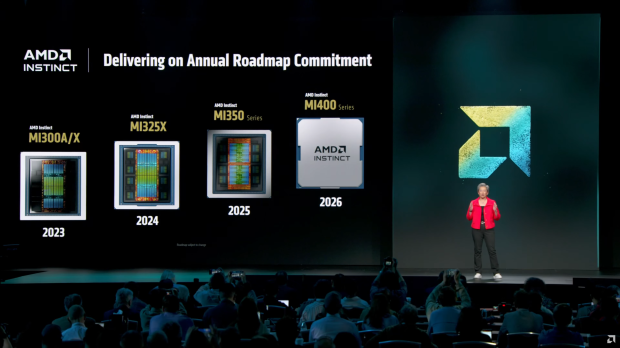

The new Instinct MI350 series AI chips were launched during AMD's huge Advancing AI event, where the company also previewed its upcoming Zen 6-based EPYC "Venice" CPUs, teased its next-next-gen Zen 7-based EPYC "Verano" CPUs, and gave a first look at its Instinct MI400 and even next-next-gen Instinct MI500 series AI accelerators.

AMD's new Instinct MI350 series AI accelerators are based on the company's new CDNA 4 architecture and fabbed on TSMC's 3nm process node. The chip packs 185 billion transistors and comes in two variants, the MI350X and MI355X, offered in both air-cooled and liquid-cooled configurations.

The new Instinct MI350 series features a total of 256 compute units with 64 stream processors each, for 16,384 cores. That is a lower core count than the Instinct MI325 and MI300 series AI chips, which feature 304 compute units and 19,456 stream processors.
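A quick sanity check of the core-count math. The 64 stream processors per compute unit is inferred from the totals themselves (16,384 / 256 and 19,456 / 304), so treat the constant below as a derived value rather than a separately quoted spec; the helper name is purely illustrative.

```python
# Stream processors per compute unit, derived from the quoted totals
# (16,384 / 256 = 19,456 / 304 = 64).
SP_PER_CU = 64


def stream_processors(compute_units: int, sp_per_cu: int = SP_PER_CU) -> int:
    """Return the total stream-processor ("core") count for a GPU."""
    return compute_units * sp_per_cu


mi350_cores = stream_processors(256)  # Instinct MI350 series
mi300_cores = stream_processors(304)  # Instinct MI300 / MI325 series

print(f"MI350: {mi350_cores:,} stream processors")
print(f"MI300/MI325: {mi300_cores:,} stream processors")
print(f"Reduction: {mi300_cores - mi350_cores:,} "
      f"({1 - mi350_cores / mi300_cores:.1%})")
```

The drop works out to 48 fewer compute units, or roughly 16% fewer cores, which AMD offsets with CDNA 4's higher per-CU throughput at low precision.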

The compute units inside the Instinct MI350 series are split across 8 XCDs (accelerator compute dies), each packing 32 compute units. The XCDs are built on TSMC's N3P process node, while the dual I/O dies (IODs) use TSMC's older 6nm process node. The IODs house the 128 HBM3E channels, the Infinity Cache, and the 4th Gen Infinity Fabric Links.
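The chiplet layout above can be modeled in a few lines to show how the headline numbers fit together. The `Mi350Topology` class is a hypothetical illustration, not an AMD tool, and the 8 HBM3E stacks come from AMD's memory figures further down.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mi350Topology:
    """Hypothetical model of the MI350 package, using AMD's quoted counts."""
    xcds: int = 8             # accelerator compute dies on TSMC N3P
    cus_per_xcd: int = 32     # enabled compute units per XCD
    hbm_stacks: int = 8       # HBM3E stacks on the package
    hbm_channels: int = 128   # HBM3E channels routed through the dual IODs

    @property
    def total_cus(self) -> int:
        return self.xcds * self.cus_per_xcd

    @property
    def channels_per_stack(self) -> int:
        return self.hbm_channels // self.hbm_stacks


topo = Mi350Topology()
print(f"Total compute units: {topo.total_cus}")
print(f"HBM3E channels per stack: {topo.channels_per_stack}")
```

Eight XCDs of 32 CUs give the 256 compute units, and 128 channels across 8 stacks works out to 16 channels per stack, which matches the channel count of an HBM3-class stack.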

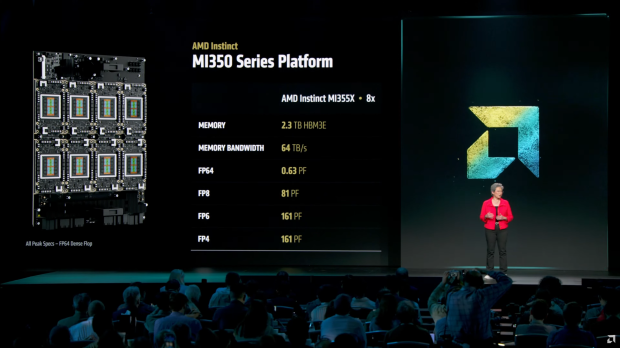

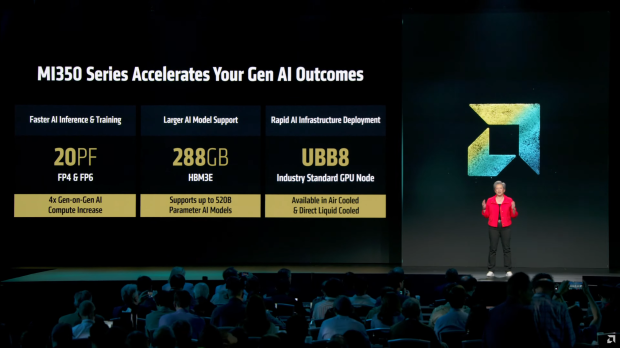

AMD says that its new Instinct MI350 series AI chips deliver 20 PFLOPs of FP4/FP6 compute performance, which it calls a huge 4x uplift over its previous-gen Instinct AI chips. Both variants carry up to 288GB of faster HBM3E memory, backed by 256MB of Infinity Cache.

The HBM3E memory is arranged in 8 stacks of 12-Hi HBM3E, each holding 36GB. The platform also uses UBB8, an industry-standard eight-GPU Universal Baseboard design that AMD says allows for rapid deployment of air- and liquid-cooled nodes.
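The memory figures can be checked with simple arithmetic. The per-die capacity below is not quoted by AMD; it is just what a 36GB, 12-Hi stack implies.

```python
STACKS = 8            # HBM3E stacks per GPU
DIES_PER_STACK = 12   # 12-Hi: twelve DRAM dies per stack
GB_PER_STACK = 36     # capacity per stack quoted by AMD

gb_per_die = GB_PER_STACK / DIES_PER_STACK  # implied DRAM die capacity
total_gb = STACKS * GB_PER_STACK            # total on-package HBM3E

print(f"{gb_per_die:g} GB ({gb_per_die * 8:g} Gb) per DRAM die")
print(f"{total_gb} GB of HBM3E per GPU")
```

Eight 36GB stacks give the 288GB headline figure, and each stack implies 24Gb (3GB) DRAM dies.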

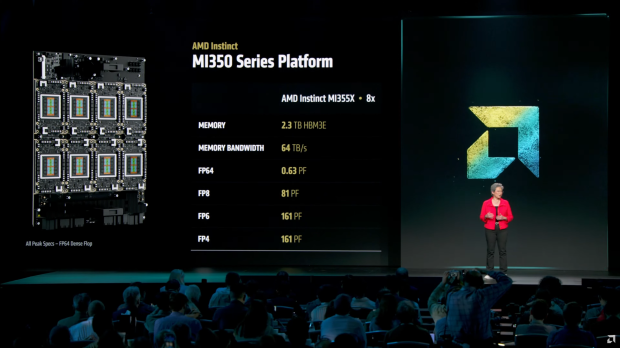

AMD shared some competitive metrics for its new Instinct MI355X, which offers 8 TB/s of aggregate memory bandwidth, 79 TFLOPs of FP64, 5 PFLOPs of FP16, 10 PFLOPs of FP8, and 20 PFLOPs of FP6/FP4 compute.
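One way to read these numbers together is a roofline-style "ridge point": peak compute divided by memory bandwidth gives the arithmetic intensity (FLOPs per byte moved) a workload needs before it stops being limited by HBM bandwidth. This is a sketch using AMD's quoted peaks as-is; the article does not say whether those peaks assume sparsity, so the ratios are only indicative.

```python
# AMD's quoted MI355X peaks in FLOP/s, and aggregate HBM3E bandwidth in bytes/s.
PEAK_FLOPS = {
    "FP64": 79e12,
    "FP16": 5e15,
    "FP8": 10e15,
    "FP6/FP4": 20e15,
}
BANDWIDTH = 8e12  # 8 TB/s


def ridge_point(peak_flops: float, bandwidth: float = BANDWIDTH) -> float:
    """FLOPs per byte a kernel must reach before it becomes compute-bound."""
    return peak_flops / bandwidth


for fmt, peak in PEAK_FLOPS.items():
    print(f"{fmt:>8}: {ridge_point(peak):,.0f} FLOPs/byte")
```

Each halving of precision doubles the peak while bandwidth stays fixed, so low-precision formats need far more reuse per byte (around 2,500 FLOPs/byte at FP4) to hit their headline numbers.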

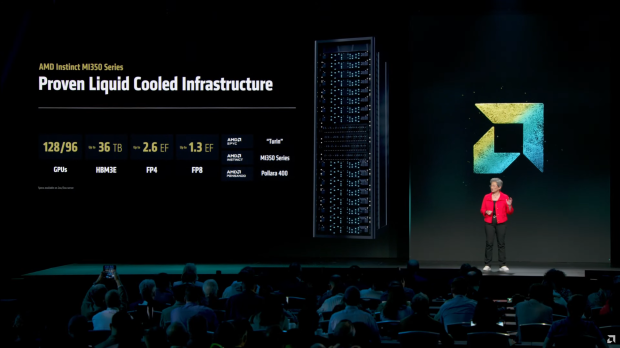

AMD says that a fully liquid-cooled rack will pack 96-128 Instinct MI350 series AI GPUs with up to 36TB of HBM3E memory, 2.6 Exaflops of FP4 compute, and 1.3 Exaflops of FP8 compute, paired with AMD's Zen 5-based EPYC "Turin" CPUs and its new Pensando Pollara 400 AI NIC.
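The rack-level totals follow from the per-GPU MI355X figures. A minimal sketch: the helper below is hypothetical, and it assumes the rack numbers are simple per-GPU multiples with no overhead.

```python
# Per-GPU figures from AMD's MI355X slide.
HBM_GB_PER_GPU = 288      # HBM capacities are binary, so this is 288 GiB
FP4_PFLOPS_PER_GPU = 20
FP8_PFLOPS_PER_GPU = 10


def rack_totals(gpus: int) -> dict:
    """Aggregate memory and compute for a rack of `gpus` accelerators."""
    hbm_gb = gpus * HBM_GB_PER_GPU
    return {
        "hbm_tb_decimal": hbm_gb / 1000,  # 1 TB = 1,000 GB
        "hbm_tb_binary": hbm_gb / 1024,   # 1 TiB = 1,024 GiB
        "fp4_exaflops": gpus * FP4_PFLOPS_PER_GPU / 1000,
        "fp8_exaflops": gpus * FP8_PFLOPS_PER_GPU / 1000,
    }


for gpus in (96, 128):  # AMD's quoted range for liquid-cooled racks
    t = rack_totals(gpus)
    print(f"{gpus} GPUs: {t['hbm_tb_decimal']:.1f} TB "
          f"({t['hbm_tb_binary']:.1f} TiB) HBM3E, "
          f"{t['fp4_exaflops']:.2f} EF FP4, {t['fp8_exaflops']:.2f} EF FP8")
```

AMD's headline rack figures line up with the 128-GPU configuration: 2.56 and 1.28 Exaflops round to 2.6 and 1.3, and 128 x 288GB is exactly 36TB when counted in binary units.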

MI355X vs B200:

- Memory: 1.6x Higher

- Bandwidth: 1.0x (on par)

- FP64: 2.1x Higher

- FP16: 1.1x Higher

- FP8: 1.1x Higher

- FP6: 2.2x Higher

- FP4: 1.1x Higher

MI355X vs GB200:

- Memory: 1.6x Higher

- Bandwidth: 1.0x (on par)

- FP64: 2.0x Higher

- FP16: 1.0x (on par)

- FP8: 1.0x (on par)

- FP6: 2.0x Higher

- FP4: 1.0x (on par)
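To see what AMD's comparison implies about the Nvidia parts, each ratio can be inverted against the MI355X figures. This is back-of-the-envelope arithmetic, not Nvidia's published specifications: AMD's ratios are rounded to one decimal place, so every back-calculated value is approximate.

```python
# MI355X figures as quoted by AMD (memory in GB, bandwidth in TB/s,
# FP64 in TFLOPs, all other formats in PFLOPs).
MI355X = {"Memory": 288, "Bandwidth": 8, "FP64": 79, "FP16": 5,
          "FP8": 10, "FP6": 20, "FP4": 20}

# AMD's rounded "x higher" ratios for MI355X over each Nvidia part.
RATIOS = {
    "B200": {"Memory": 1.6, "Bandwidth": 1.0, "FP64": 2.1, "FP16": 1.1,
             "FP8": 1.1, "FP6": 2.2, "FP4": 1.1},
    "GB200": {"Memory": 1.6, "Bandwidth": 1.0, "FP64": 2.0, "FP16": 1.0,
              "FP8": 1.0, "FP6": 2.0, "FP4": 1.0},
}


def implied_specs(ours: dict, ratios: dict) -> dict:
    """Back out the competitor value that each rounded ratio implies."""
    return {metric: ours[metric] / ratio for metric, ratio in ratios.items()}


for rival, ratios in RATIOS.items():
    implied = implied_specs(MI355X, ratios)
    summary = ", ".join(f"{m} ~{v:.3g}" for m, v in implied.items())
    print(f"{rival}: {summary}")
```

The inversion makes AMD's biggest claimed advantages easy to spot: memory capacity, FP64, and FP6, where the implied Nvidia figure is roughly half (or less) of the MI355X number.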