Huawei has started delivering its new CloudMatrix 384 AI clusters, powered by its Ascend 910C AI chips, to customers in China.

According to a new report from the Financial Times, 10 customers have now added Huawei's new CloudMatrix 384 AI servers to their data centers. We don't know which Chinese companies are using Huawei's new AI servers, but they are reportedly among Huawei's main customers.

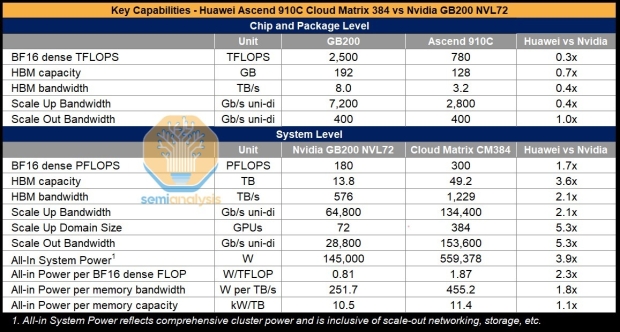

Huawei's new CloudMatrix 384 (CM384) AI cluster is powered by 384 Huawei Ascend 910C AI chips connected in an "all-to-all topology" configuration. Huawei is offsetting the architectural weaknesses of its AI chips by using more than 5x as many of them as NVIDIA uses in its GB200 NVL72 servers. That is why the company is willing to accept higher costs, lower efficiency, poorer scaling, and more.

There's also far more bandwidth on CloudMatrix, with up to 1,229TB/sec (1.2 petabytes per second, which is insane) compared to "just" 576TB/sec on NVIDIA's GB200 NVL72. CloudMatrix might have far bigger pools of super-fast HBM, but it falls behind on performance per watt: across multiple AI workloads, the Ascend 910C-powered CloudMatrix AI server uses around 3.9x MORE power than NVIDIA's bleeding-edge GB200 NVL72 AI server... but China doesn't need to worry about power costs or infrastructure; it can just GO.
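A quick back-of-the-envelope check puts the chip and bandwidth figures side by side. This is a minimal sketch using only the numbers quoted in this article, assuming one GB200 NVL72 holds 72 NVIDIA GPUs and that both bandwidth figures are total HBM bandwidth across the whole system:

```python
# Figures quoted in this article.
# Assumption: one GB200 NVL72 holds 72 GPUs, and both bandwidth numbers
# are total HBM bandwidth for the whole system.
CM384_CHIPS = 384      # Ascend 910C chips in one CloudMatrix 384
NVL72_GPUS = 72        # NVIDIA GPUs in one GB200 NVL72
CM384_BW_TBS = 1229    # aggregate bandwidth, TB/sec
NVL72_BW_TBS = 576     # aggregate bandwidth, TB/sec


def times_larger(a: float, b: float) -> float:
    """Return how many times larger a is than b."""
    return a / b


chip_ratio = times_larger(CM384_CHIPS, NVL72_GPUS)    # about 5.33x
bw_ratio = times_larger(CM384_BW_TBS, NVL72_BW_TBS)   # about 2.13x
bw_per_ascend = CM384_BW_TBS / CM384_CHIPS            # about 3.2 TB/sec per chip
bw_per_nvidia = NVL72_BW_TBS / NVL72_GPUS             # 8.0 TB/sec per GPU

if __name__ == "__main__":
    print(f"Chip count:         {chip_ratio:.2f}x")
    print(f"Total bandwidth:    {bw_ratio:.2f}x")
    print(f"Per-chip bandwidth: {bw_per_ascend:.1f} vs {bw_per_nvidia:.1f} TB/sec")
```

The per-chip numbers show where the lead comes from: each Ascend 910C contributes roughly 40% of the bandwidth of one NVIDIA GPU (about 3.2 vs 8 TB/sec), so CloudMatrix's system-level advantage comes from sheer chip count rather than a stronger chip.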

The power consumption numbers are scary. I can't imagine the cost of running this in a country where electricity is 3x, 5x, or even 10x more expensive, like Australia, where I am. Power is so expensive here that it's a big reason we aren't seeing AI server infrastructure roll-outs.
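To put that worry in rough numbers: the electricity bill scales with both the power draw and the local price of power. This is a minimal sketch, assuming both systems run for the same hours and ignoring cooling overhead, hardware costs, and the performance gap between them; the 3x, 5x, and 10x price multipliers are the hypothetical ones mentioned above:

```python
# Assumption: both systems run the same hours, so energy cost scales
# linearly with power draw and with the electricity price.
POWER_RATIO = 3.9  # CloudMatrix 384 power draw relative to GB200 NVL72


def relative_energy_cost(power_ratio: float, price_ratio: float) -> float:
    """Electricity cost relative to an NVL72 running at the baseline price."""
    return power_ratio * price_ratio


if __name__ == "__main__":
    for price_ratio in (1, 3, 5, 10):
        cost = relative_energy_cost(POWER_RATIO, price_ratio)
        print(f"Electricity {price_ratio}x pricier -> {cost:.1f}x the power bill")
```

Under those assumptions, running CloudMatrix 384 where electricity costs 10x more would mean roughly 39x the power bill of an NVL72 at the baseline price.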