Introduction, Drive Specifications

Most enthusiasts are familiar with Intel's mighty 750 Series NVMe PCIe Gen 3x4 SSDs. We tend to think of the 750 Series in terms of an Add-In-Card (AIC) form factor. However, Intel's 750 Series SSDs, as well as most of its enterprise NVMe SSDs, are also available in a 2.5" form factor. All 2.5" NVMe SSDs share a common connector known as SFF-8639, or U.2. A U.2 connector provides four lanes of PCIe Gen 3.0, theoretically capable of roughly 4GB/s of bandwidth in each direction.

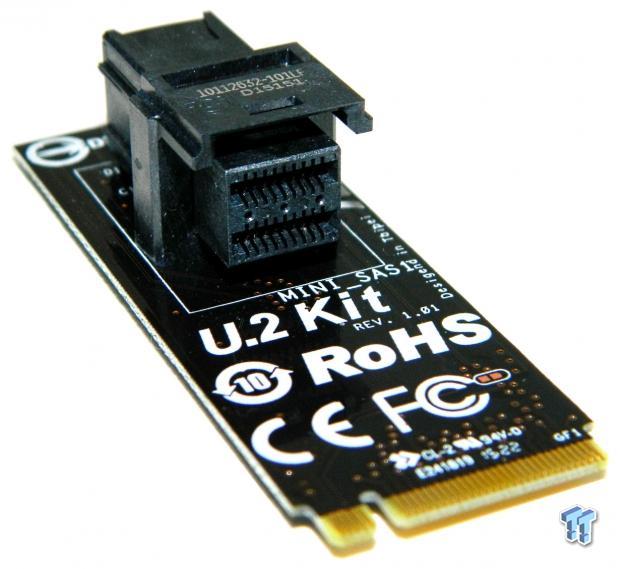

U.2 SSDs are desirable in the enterprise space for a whole host of reasons, the most important of which is that U.2 SSDs are hot-swappable; AIC SSDs are not. In the enterprise space, U.2 SSDs usually interface to custom backplanes. In the consumer space, U.2 SSDs primarily interface with the host via mini-SAS ports and specialized cabling. As it stands right now, there are very few consumer motherboards that have built-in mini-SAS connections, so adapters are typically required.

In gamer/enthusiast circles, U.2 SSDs are more desirable than add-in-PCIe cards because you will not need to give up a PCIe slot. In the case of Intel Z170-based motherboards, you don't need to give up any of the CPU lanes associated with that slot if the drive is routed through the Z170 chipset. So, how exactly do you go about getting a mini-SAS connector routed through the Z170 chipset? Well, it has to go through a built-in M.2 Gen 3x4 slot that is routed through the Z170 (DMI 3.0) chipset via a mini-SAS to M.2 adapter.

There are a variety of mini-SAS to M.2 adapters on the market. One would think that the adapter is just a passive piece of hardware, and it should not matter what brand you use. Well, that line of thinking turns out to be incorrect. We found that we could not use ASUS brand mini-SAS to M.2 adapters with ASRock motherboards. GIGABYTE brand mini-SAS adapters are more universal and will work with some non-GIGABYTE motherboards. However, the design of the GIGABYTE adapter may be undesirable because the vertical position of the mini-SAS connection can conflict with video cards in some cases. We suggest using an adapter that is the same brand as your motherboard.

Intel's Z170 chipset is bandwidth limited to roughly 3.6GB/s of sequential throughput. While DMI 3.0 doesn't deliver enough bandwidth to fully exploit all of the read performance available from multiple NVMe SSDs running as a RAID array, it does allow for a bootable NVMe array. Why would you want a bootable NVMe array? Simple, really: think of RAID 0 as the SLI of storage. If you want the fastest OS disk available, then you want a bootable NVMe RAID 0 array.
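To put the chipset ceiling in context, here is a minimal sketch of the link arithmetic. It assumes DMI 3.0 behaves like a four-lane PCIe 3.0 link (8 GT/s per lane with 128b/130b encoding) and ignores all protocol overhead beyond line encoding, so treat the result as an upper bound rather than a measured figure.

```python
# Rough PCIe 3.0 payload-rate arithmetic. Overhead beyond line encoding
# (packet headers, flow control) is deliberately ignored in this sketch.

GT_PER_S = 8.0          # PCIe 3.0 signalling rate per lane, in GT/s
ENCODING = 128 / 130    # 128b/130b line encoding efficiency
LANES = 4               # assumption: U.2, M.2 x4 and DMI 3.0 are all x4 links


def link_gb_per_s(lanes: int = LANES) -> float:
    """Theoretical one-direction bandwidth in GB/s (decimal gigabytes)."""
    return GT_PER_S * ENCODING * lanes / 8  # 8 bits per byte


theoretical = link_gb_per_s()
observed = 3.6  # approximate chipset ceiling quoted above, in GB/s

print(f"x4 Gen 3 link, theoretical: {theoretical:.2f} GB/s")
print(f"observed ceiling / theoretical: {observed / theoretical:.0%}")
```

Under these assumptions the link tops out just under 4GB/s, so a ceiling of roughly 3.6GB/s is about what one would expect once real-world protocol overhead is taken into account.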

Intel's 750 series SSDs are known for kicking out the highest IOPS of any consumer SSD to date. The 750 Series SSDs are also well known for unmatched performance with sustained mixed random workloads such as our 4K 70/30 steady state mixed workload test.

We believe a bootable two-drive array composed of affordable 400GB Intel 750 U.2 NVMe SSDs should deliver the goods. Let's get into the report so we can show you why we believe that for the ultimate in OS disk performance, RAID 0 is back on top.

Specifications

Intel's 750 Series SSD is available in three capacities: 400GB, 800GB, and 1.2TB. All capacities are available in two form factors: a half-length, half-height AIC with a single-slot Gen 3x4 connector, and a 2.5" x 15mm z-height standard form factor with a U.2 (SFF-8639) compatible connector.

Sequential R/W performance for the 400GB 750 is listed at 2200/900 MB/s. 4K random read performance is listed at up to 430,000 IOPS. 4K random write performance is listed at up to 230,000 IOPS. Both available form factors (AIC & 2.5") carry identical performance ratings.

Enhanced power-loss protection is provided by onboard capacitors. Data protection is enhanced by up to 32GB of the drive's memory dedicated to XOR internal data parity. Endurance is rated at up to 70GB per day or 219 TBW (Terabytes Written). Power consumption is listed at 12W active / 4W idle. The 400GB 750 Series carries an MSRP of $389 (current street pricing is much lower). Intel backs their 750 Series SSDs with a five-year limited warranty.

Drive Details

Intel 750 NVMe 400GB U.2 PCIe Gen 3x4 NVMe SSD

In typical Intel fashion, the drive ships in attractive, protective packaging. The front of the mostly blue box lists the drive model, capacity, and sports a depiction of the drive within. Also depicted is the included mini-SAS to U.2 PCIe connector cable, and the required PCIe connector/adapter that is not included.

The back of the blue colored packaging lists sequential and random 4K performance specifications at each available capacity point. We note that the performance specifications listed are slightly lower than those given originally by Intel.

Contained within the packaging is the drive itself, a PCIe connector cable, and a CD containing warranty information and Intel's proprietary NVMe driver. The included PCIe connector cable is a U.2 to mini-SAS cable that also has an integrated standard SATA power connector to provide additional power to the drive.

The drive itself is a very attractive piece of hardware that exudes quality and craftsmanship. The enclosure is all aluminum alloy, natural in color, with a nice heft to it. The front face of the enclosure is emblazoned with a chrome Intel logo and a trademark swooped manufacturer's label that contains all the particulars of the drive.

The bottom half of the enclosure is a solid cast aluminum passive heat sink.

Here are the components we used to create our bootable NVMe RAID array.

Test System Setup and Drive Properties

Jon's Consumer PCIe SSD Review Test System Specifications

- Motherboard: ASRock OC Formula Z170

- CPU: Intel Core i7 6700K @ 4.7GHz

- Cooler: Swiftech H2O-320 Edge

- Memory: Corsair Vengeance LPX DDR4 16GB 3200MHz

- Video Card: Onboard Video

- Case: IN WIN X-Frame

- Power Supply: Seasonic Platinum 1000 Watt Modular

- OS: Microsoft Windows 10 Professional 64-bit

- Drivers: Intel RAID option ROM version 14.6.0.1029 and Intel RST driver version 14.8.0.1042

We would like to thank ASRock, Crucial, Intel, Corsair, RamCity, IN WIN, and Seasonic for making our test system possible.

Bootable PCIe RAID 0 Settings

We will briefly go over the settings we used for our bootable PCIe RAID array:

SATA mode needs to be set to RAID and RST PCIe storage remapping needs to be enabled. Storage OpROM policy needs to be set to boot UEFI only for a bootable array.

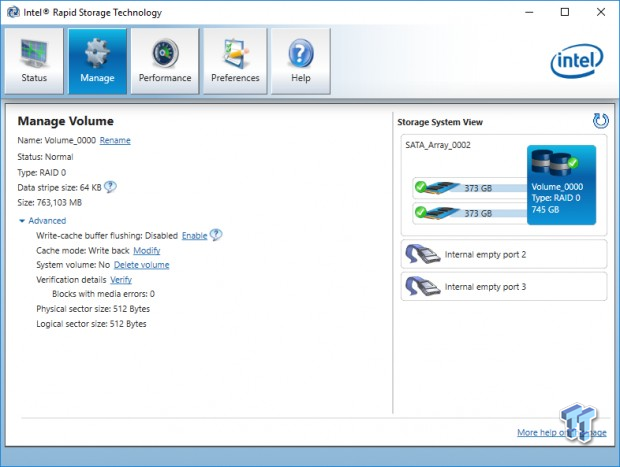

After a restart, Intel's Rapid Storage Technology will show up in advanced options, and you can configure your PCIe array. We used 64K stripes to create our array. We found that a 64K stripe size delivers better overall performance than the default 16K.
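To illustrate what the stripe size controls, here is a minimal sketch of textbook RAID 0 address mapping with a 64K stripe across two drives. It is a generic layout, not Intel RST's actual on-disk format (which this article does not document), and the function names are made up for illustration.

```python
STRIPE_SIZE = 64 * 1024   # 64K stripe, as chosen for the array above
NUM_DRIVES = 2            # two-drive RAID 0


def locate(logical_offset, stripe_size=STRIPE_SIZE, num_drives=NUM_DRIVES):
    """Map a logical byte offset to (drive index, byte offset on that drive)."""
    stripe_number, within_stripe = divmod(logical_offset, stripe_size)
    drive = stripe_number % num_drives      # stripes alternate between drives
    row = stripe_number // num_drives       # how many stripes precede it on that drive
    return drive, row * stripe_size + within_stripe


def drives_touched(offset, length):
    """Set of member drives that a single request of `length` bytes lands on."""
    first = offset // STRIPE_SIZE
    last = (offset + length - 1) // STRIPE_SIZE
    return {stripe % NUM_DRIVES for stripe in range(first, last + 1)}


print(locate(0))                         # first stripe, first drive
print(locate(64 * 1024))                 # second stripe, second drive
print(drives_touched(0, 4 * 1024))       # a small aligned request
print(drives_touched(0, 128 * 1024))     # a request spanning two stripes
```

In this model, an aligned 4K request touches only one drive, while a 128K request is split across both. That is one way to think about why striping helps large transfers far more than single small reads.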

After creating the array, you are ready to load Windows, but you must have a compatible "F6" RST driver ready to load with Windows (we chose the latest version 14.8), or the array will not be recognized. Windows installer must be loaded as UEFI, so Windows is installed on a GPT partition.

Once Windows is loaded, you will need to install the Intel RST control panel to enable RST write-back caching, which is a MUST DO for superior performance.

Drive Properties

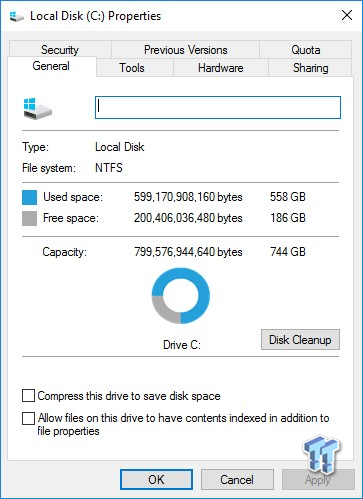

The majority of our testing is performed with our test drive as our boot volume. Our boot volume is 75% full for all OS Disk "C" drive testing to replicate a typical consumer OS volume implementation. We feel that most of you will be utilizing your SSDs for your boot volume and that presenting you with results from an OS volume is more relevant than presenting you with empty secondary volume results.

System settings: C-states and SpeedStep are both disabled in our system's BIOS. The Windows High-Performance power plan is enabled. Windows write caching is enabled, and Windows buffer flushing is disabled. We are utilizing Windows 10 Pro 64-bit for all of our testing except for our MOP (Maxed-Out Performance) benchmarks, where we switch our OS to Windows Server 2012 R2 64-bit.

Synthetic Benchmarks - ATTO & Anvil Storage Utilities

ATTO

Version and / or Patch Used: 2.47

ATTO is a timeless benchmark used to provide manufacturers with data used for marketing storage products.

Sequential read/write transfers max out at 3605/1993 MB/s with our 400GB x 2 array. Keep in mind this is our OS volume 75% full.
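As a rough sanity check, the sketch below compares these ATTO results with twice the rated single-drive figures from the specifications section. The rated numbers are Intel's published ratings rather than our own single-drive measurements, so the ratios are only indicative.

```python
RATED_READ_MBPS = 2200    # single 400GB 750, rated sequential read
RATED_WRITE_MBPS = 900    # single 400GB 750, rated sequential write
ARRAY_READ_MBPS = 3605    # two-drive array, measured with ATTO
ARRAY_WRITE_MBPS = 1993
DRIVES = 2


def scaling(measured, rated_single, drives=DRIVES):
    """Measured array throughput as a fraction of (drives x rated single-drive)."""
    return measured / (rated_single * drives)


print(f"read:  {scaling(ARRAY_READ_MBPS, RATED_READ_MBPS):.0%} of 2 x rated")
print(f"write: {scaling(ARRAY_WRITE_MBPS, RATED_WRITE_MBPS):.0%} of 2 x rated")
```

Reads land at roughly 82% of twice the rated figure, which fits the chipset ceiling of about 3.6GB/s. Writes come in slightly above twice the rated figure, which may reflect RST write-back caching, conservative ratings, or both.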

Sequential Write

Because of RST write-back caching, the small file performance of our 750 array is greatly enhanced in comparison to a single drive. Our 750 array is able to outperform our dual 256GB 950 Pro array. Our dual 512GB 950 Pro array wins this easily, which is no surprise, considering it has a 1200 MB/s sequential write advantage.

Sequential Read

This chart is an excellent illustration of why you want RAID 0 and why you need to enable RST write-back caching. A properly configured array has a much-improved performance curve in comparison to a single drive when write-back caching is enabled.

Anvil Storage Utilities

Version and / or Patch Used: 1.1.0

Anvil's Storage Utilities is a storage benchmark designed to measure the storage performance of SSDs. The Standard Storage Benchmark performs a series of tests; you can run a full test, just the read or write test, or a single test, e.g. 4K QD16.

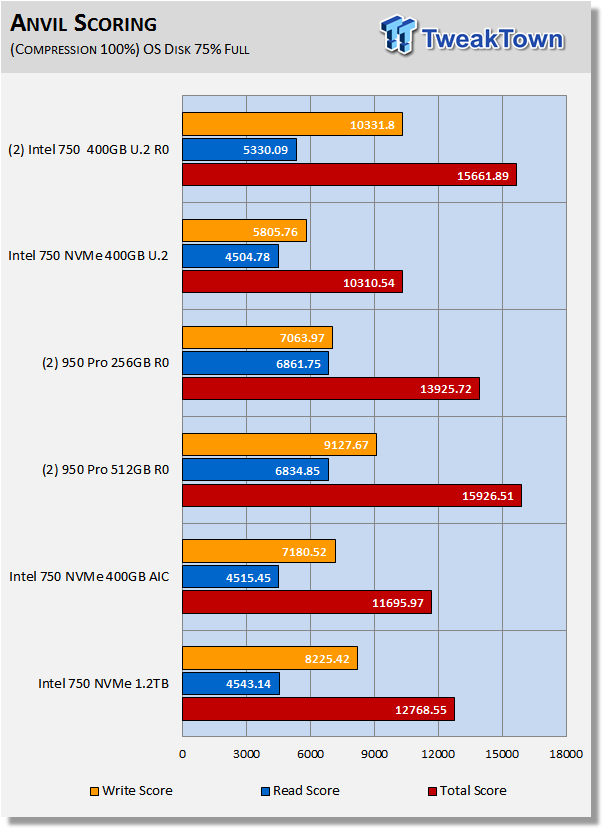

Scoring

We note about a 50% increase in scoring from a single drive to a two-drive array, with the majority coming from increased write performance. Our 750 array dominates the write scoring because it is able to kick out uber write IOPS. The dual 750 array is able to outscore the dual 256GB 950 Pro array, but not the dual 512GB 950 Pro array.

(Anvil) Read IOPS through Queue Depth Scale

The 950 Pro arrays display better performance at consumer queue depths, but the 750 array has an advantage at queue depths of 64 or greater.

(Anvil) Write IOPS through Queue Scale

At queue depths of two and higher, the Intel 750 series has a clear advantage over Samsung's 950 Pro. Our dual 750 array easily outperforms the rest of our test pool. Curiously, the 750 400GB AIC handily outperforms the U.2 variant.

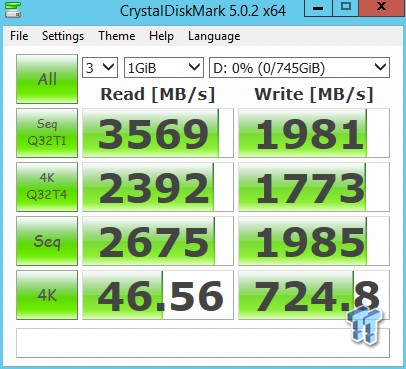

Synthetic Benchmarks - CrystalDiskMark & AS SSD

CrystalDiskMark

Version and / or Patch Used: 3.0 Technical Preview

CrystalDiskMark is disk benchmark software that allows us to benchmark 4k and 4k queue depths with accuracy. Note: Crystal Disk Mark 3.0 Technical Preview was used for these tests since it offers the ability to measure native command queuing at QD4.

Our 750 array has the advantage over a single drive in only one area of this test: sequential performance. In contrast to what we saw with ATTO, the 950 Pro arrays deliver better sequential performance than the 750 array. This is because this version of CDM measures sequential performance at QD1, whereas we use QD4 with ATTO.

Write performance is where an array has a significant advantage over a single drive. In this case, the chipset isn't getting in the way of maximum performance. Our dual 400GB 750 array is delivering 100% better sequential performance, and more importantly, 30% better 4K QD1 random performance than a single drive. The performance of our 750 array is almost identical to our dual 256GB 950 Pro array.

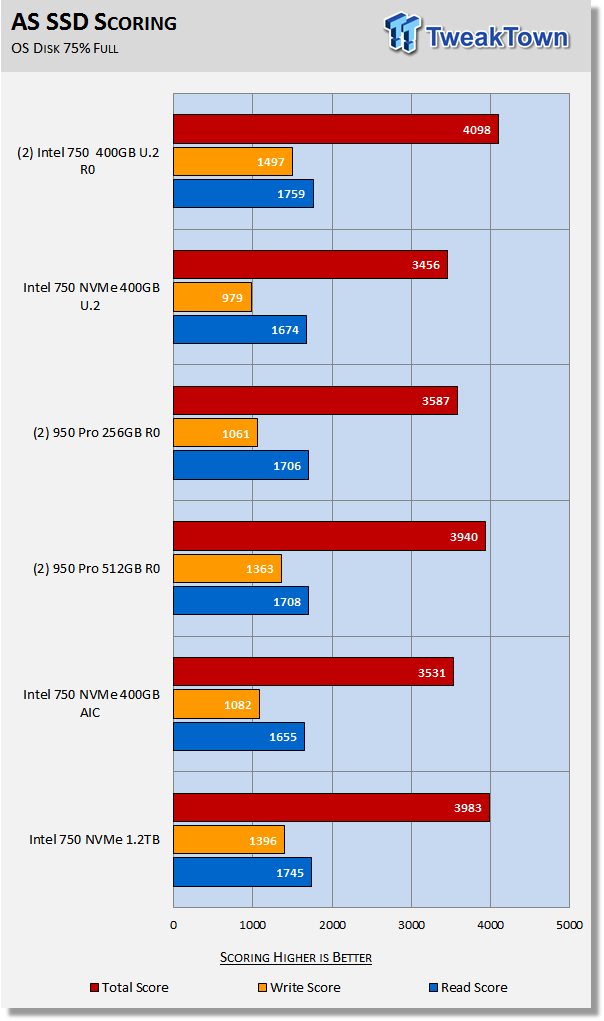

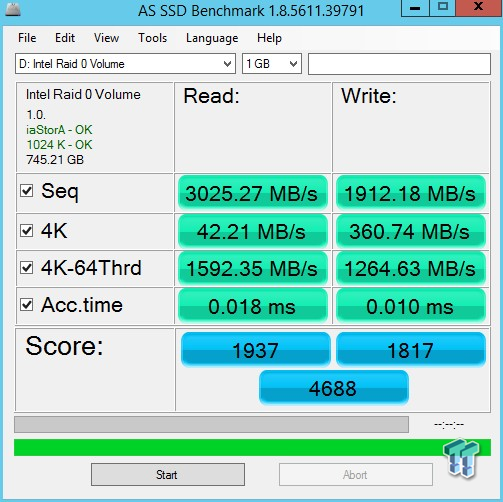

AS SSD

Version and / or Patch Used: 1.7.4739.38088

AS SSD determines the performance of Solid-State Drives (SSD). The tool contains four synthetic as well as three practice tests. The synthetic tests are to determine the sequential and random read and write performance of the SSD.

So much of AS SSD's scoring comes from 4K-64 thread performance, which is nearly irrelevant in a consumer setting, that scoring may not reflect what can be expected in terms of real-world performance. We still use AS SSD because it's a very popular test that many enthusiasts use and scoring aside, it does provide important data. Our 750 array delivers the best score.

With our synthetic testing out of the way, let's take a look at our moderate workload testing.

Benchmarks (Trace-Based OS Volume) - PCMark Vantage, PCMark 7 & PCMark 8

Moderate Workload Model

We categorize these tests as indicative of a moderate workload environment.

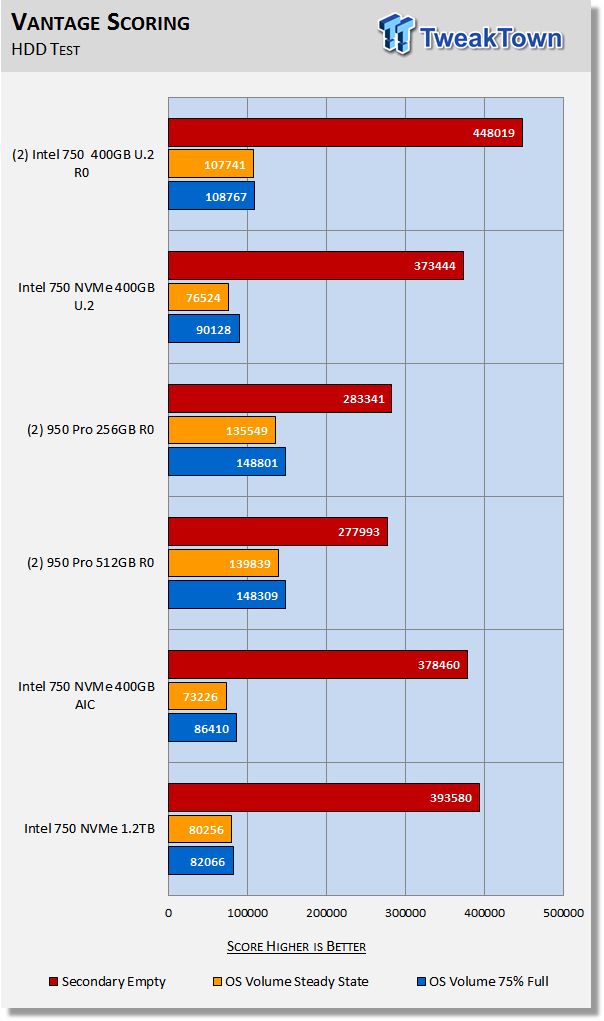

PCMark Vantage - Hard Disk Tests

Version and / or Patch Used: 1.2.0.0

We like PCMark Vantage because the recorded traces are played back without system stops. What we see is the raw performance of the drive. This allows us to see a marked difference in scoring that other trace-based benchmarks do not exhibit. An example of a marked difference in scoring on the same drive would be empty vs. filled vs. steady state.

We run Vantage three ways. The first run is with the OS drive 75% full to simulate a lightly used OS volume filled with data to an amount we feel is common for most users. The second run is with the OS volume written into a "Steady State" utilizing SNIA's guidelines. Steady-state testing simulates the performance of a drive that has been subjected to consumer workloads for extensive amounts of time. The third run is a Vantage HDD test with the test drive attached as an empty secondary storage device.

OS Volume 75% Full - Lightly Used

OS Volume 75% Full - Steady State

Secondary Volume Empty - FOB

There's a big difference between an empty drive, one that's 75% full/used, and one that's in a steady state.

The important scores to pay attention to are "OS Volume Steady State" and "OS Volume 75% full." These two categories are most important because they are indicative of typical consumer usage states. When a drive is in a steady state, it means garbage collection is running at the same time it's reading/writing. This is exactly why we focus on steady-state performance.

We observe a whopping 40% increase in steady-state performance by going from a single 750 to a two drive array. The 950 Pro arrays still win, but the real-world advantage of an NVMe array over a single NVMe SSD is on full display.

PCMark 7 - System Storage

Version and / or Patch Used: 1.4.0

We will look to Raw System Storage scoring for evaluation because it's done without system stops and, therefore, allows us to see significant scoring differences between drives.

OS Volume 75% Full - Lightly Used

PCMark 7 is showing a scoring increase of 62% for a dual 750 array over a single 750. This backs up what we saw from Vantage.

PCMark 8 - Storage Bandwidth

Version and / or Patch Used: 2.5.419

We use PCMark 8 Storage benchmark to test the performance of SSDs, HDDs, and hybrid drives with traces recorded from Adobe Creative Suite, Microsoft Office, and a selection of popular games. You can test the system drive or any other recognized storage device, including local external drives. Unlike synthetic storage tests, the PCMark 8 Storage benchmark highlights real-world performance differences between storage devices.

OS Volume 75% Full - Lightly Used

PCMark 8 is the most intensive moderate workload simulation we run. Although not shown on this chart, a single 950 Pro managed to outperform a dual 950 Pro array with this test which ran contrary to all of our other results. This is not happening with our 750 array. The 750 array outperforms a single drive by a significant margin.

Benchmarks (Secondary) - Max IOPS, Response & Transfer Rates

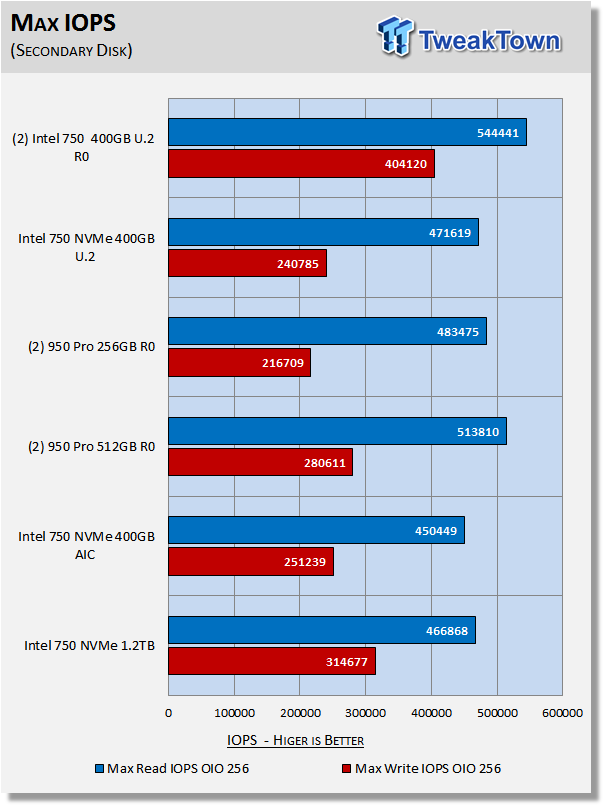

Iometer - Maximum IOPS

Version and / or Patch Used: Iometer 2014

We use Iometer to measure high queue depth performance. (Secondary Volume, No Partition)

Max IOPS Read 8-Workers (FOB) QD 256 8GB LBA

Max IOPS Write 8-Workers (FOB) QD 256 8GB LBA

We run this test at QD256, which is a queue depth that a consumer SSD will never see. We do this just to see what the maximum attainable IOPS from our configuration actually is. Our dual 400GB 750 array is able to attain more read/write IOPS than the 950 Pro arrays.

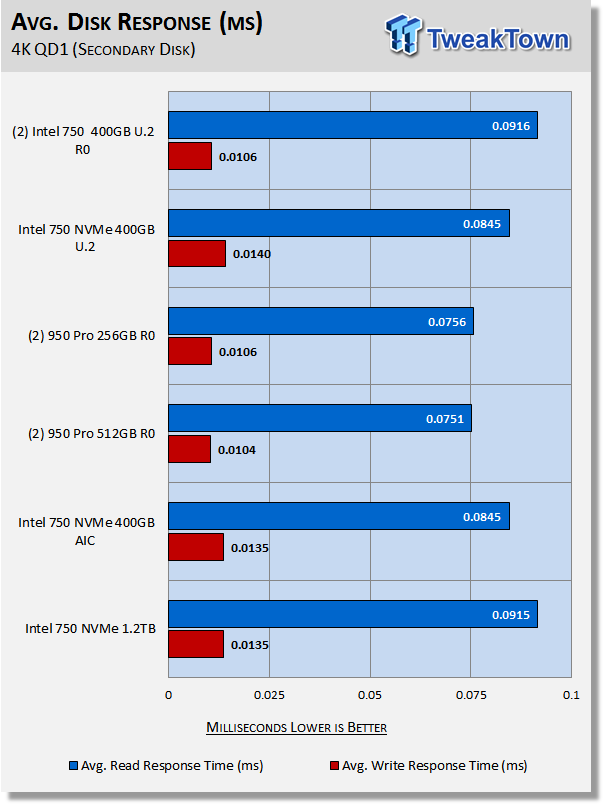

Iometer - Disk Response

Version and / or Patch Used: Iometer 2014

We use Iometer to measure disk response times. Disk response times are measured at an industry accepted standard of 4K QD1 for both write and read. Each test runs twice for 30 seconds consecutively, with a 5-second ramp-up before each test. We partition the drive/array as a secondary device for this testing.

Avg. Write Response

Avg. Read Response

This is exactly what we saw from our synthetic testing. A single 750 has better QD1 read performance than an array. This is common to all arrays we've tested over the years. Write response is another matter though (when write-back caching is enabled). A dual 750 array delivers 25% lower latency than a single 750.
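At a fixed queue depth, latency and IOPS are two views of the same number, so a latency reduction can be converted into an expected IOPS gain. The sketch below does that conversion for QD1. The helper names are illustrative, and the calculation assumes the drive is the only source of latency.

```python
def qd1_iops(latency_us):
    """At queue depth 1, one I/O completes per latency interval."""
    return 1_000_000 / latency_us


def iops_gain(latency_reduction):
    """Fractional IOPS gain at a fixed queue depth for a fractional latency cut."""
    return 1 / (1 - latency_reduction) - 1


# A 25% cut in average write response time, as reported above.
print(f"expected QD1 write IOPS gain: {iops_gain(0.25):.0%}")
```

A 25% cut in latency works out to roughly a one-third gain in QD1 IOPS, which is in the same neighborhood as the 30% 4K QD1 write improvement we saw with CrystalDiskMark.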

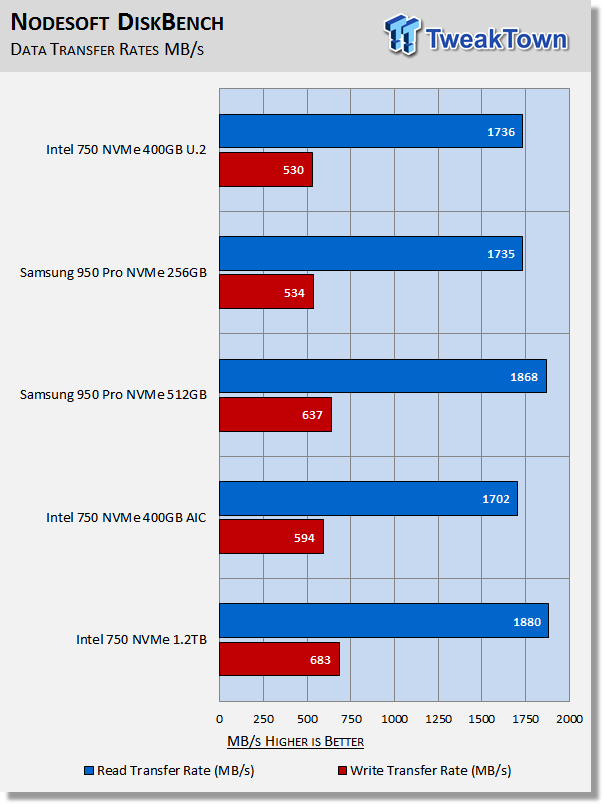

DiskBench - Directory Copy

Version and / or Patch Used: 2.6.2.0

We use DiskBench to time a 28.6GB block (9,882 files in 1,247 folders) composed primarily of incompressible sequential and random data as it's transferred from our DC P3700 PCIe NVMe SSD to our test drive. We then read from a 6GB zip file that's part of our 28.6GB data block to determine the test drive's read transfer rate. Our system is restarted prior to the read test to clear any cached data, ensuring an accurate test result.

Transfer Rates

We only tested single drive transfer rates because we aren't sure that we have anything fast enough to feed our arrays. In a single drive setting, Intel's 1.2 TB 750 delivers the best transfer rates.

Benchmarks (Secondary Volume) - PCMark 8 Extended

Futuremark PCMark 8 Extended

Heavy Workload Model

PCMark 8's consistency test simulates an extended duration heavy workload environment. PCMark 8 has built-in, command line executed storage testing. The PCMark 8 Consistency test measures the performance consistency and the degradation tendency of a storage system.

The Storage test workloads are repeated. Between each repetition, the storage system is bombarded with a usage that causes degraded drive performance. In the first part of the test, the cycle continues until a steady degraded level of performance has been reached. (Steady State)

In the second part, the recovery of the system is tested by allowing the system to idle and measuring the performance after 5-minute long intervals. (Internal drive maintenance: Garbage Collection (GC)) The test reports the performance level at the start, the degraded steady-state, and the recovered state, as well as the number of iterations required to reach the degraded state and the recovered state.

We feel Futuremark's Consistency Test is the best test ever devised to show the true performance of solid state storage in an extended duration heavy workload environment. This test takes on average 13 to 17 hours to complete and writes somewhere between 450GB and 14,000GB of test data depending on the drive. If you want to know what an SSD's steady state performance is going to look like during a heavy workload, this test will show you.

Here's a breakdown of Futuremark's Consistency Test:

Precondition phase:

1. Write to the drive sequentially through up to the reported capacity with random data.

2. Write the drive through a second time (to take care of overprovisioning).

Degradation phase:

1. Run writes of random size between 8*512 and 2048*512 bytes on random offsets for 10 minutes.

2. Run performance test (one pass only).

3. Repeat 1 and 2 for 8 times, and on each pass increase the duration of random writes by 5 minutes.

Steady state phase:

1. Run writes of random size between 8*512 and 2048*512 bytes on random offsets for 50 minutes.

2. Run performance test (one pass only).

3. Repeat 1 and 2 for 5 times.

Recovery phase:

1. Idle for 5 minutes.

2. Run performance test (one pass only).

3. Repeat 1 and 2 for 5 times.
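The phase breakdown above can be tallied with a few lines of arithmetic. This sketch assumes the first degradation pass runs for 10 minutes and each later pass adds 5 minutes. The variable names are ours, not Futuremark's.

```python
SECTOR = 512  # bytes; the write sizes above are given in 512-byte units

degradation = [10 + 5 * i for i in range(8)]   # 10, 15, ... 45 minutes of random writes
steady_state = [50] * 5                        # five 50-minute random-write passes
recovery_idle = [5] * 5                        # five 5-minute idle periods

# Minutes spent writing random data (idle time in recovery is not writing).
write_minutes = sum(degradation) + sum(steady_state)

# One performance-test pass follows every entry in every phase.
iterations = len(degradation) + len(steady_state) + len(recovery_idle)

min_write = 8 * SECTOR       # smallest random write, in bytes
max_write = 2048 * SECTOR    # largest random write, in bytes

print(f"random-write time: {write_minutes} minutes")
print(f"performance-test iterations: {iterations}")
print(f"write sizes: {min_write} to {max_write} bytes")
```

Under that reading, the test performs 18 performance passes and 470 minutes of random writes, with individual writes ranging from 4 KiB to 1 MiB.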

Storage Bandwidth

PCMark 8's Consistency test provides a ton of data output that we use to judge a drive's performance.

We consider steady-state bandwidth (the blue bar) the test that carries the most weight in ranking a drive's or array's heavy workload performance. Performance after Garbage Collection (GC) (the orange and red bars) is what we consider the second most important consideration when ranking a drive's performance. Trace-based steady-state testing is where true high-performing SSDs are separated from the rest of the pack.

We observe a 14% increase in steady-state performance when going from a single 750 to a dual 750 array. This chart is a carry-over from our 950 Pro RAID Report, where we manually over-provisioned both of our 950 Pro arrays by 20%, and that's the main reason they both outperform the 750 array by a significant margin. Without OP, the 950 Pro arrays and our 750 array perform similarly in terms of PCMark 8 extended storage bandwidth.

We chart our test subject's storage bandwidth as reported at each of the test's 18 trace iterations. This gives us a good visual perspective of how our test subjects perform as testing progresses. We notice that an array displays much less performance variability across the 18 phases of this brutal test than does a single drive.

Total Access Time (Latency)

We chart the total time the disk is accessed as reported at each of the test's 18 trace iterations. The total latency of our 750 array is up to 2.5X lower than a single 750. This is another example we can point to as a reason why we believe an NVMe array is currently the ultimate OS disk.

Disk Busy Time

Disk Busy Time is how long the disk is busy working. We chart the total time the disk is working as reported at each of the test's 18 trace iterations.

When latency is low, disk busy time is low as well.

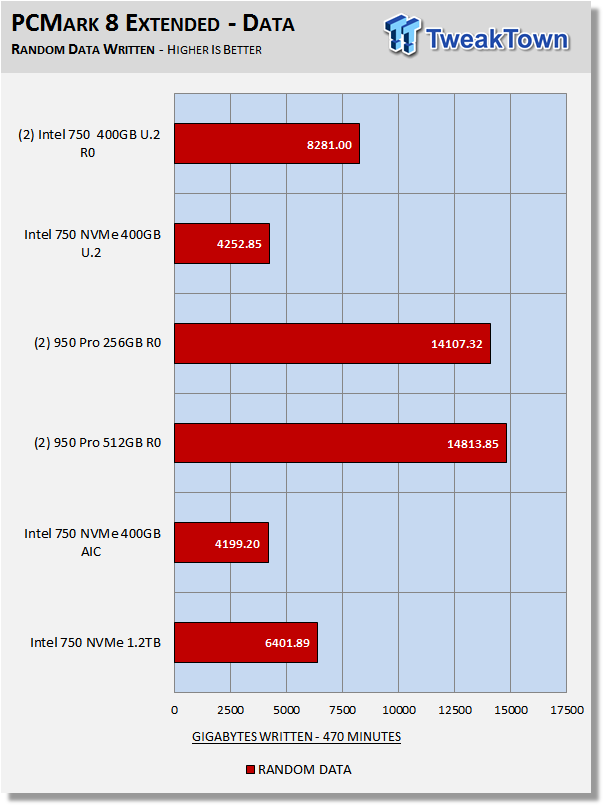

Data Written

We measure the total amount of random data that our test drive/array is capable of writing during the degradation phases of the consistency test. Pre-conditioning data is not included in the total. The total combined time that degradation data is written to the drive/array is 470 minutes. This can be very telling. The better a drive/array can process a continuous stream of random data, the more data will be written.

It is important to keep in mind that the 950 Pro arrays shown on this chart are over-provisioned by 20%, which resulted in up to a 9X increase in the amount of data written in comparison to a single non-overprovisioned 950 Pro. Although not charted, without manual over-provisioning of the 950 Pro arrays, our dual 750 array is able to write double the amount of data of a non-overprovisioned 950 Pro array.

Benchmarks (Secondary Volume) – 70/30 Mixed Workload

70/30 Mixed Workload Test (Sledgehammer)

Version and / or Patch Used: Iometer 2014

Heavy Workload Model

This test hammers a drive so hard we've dubbed it "Sledgehammer". Our 70/30 Mixed Workload test is an enterprise-class test designed to simulate a heavy-duty workstation steady-state environment. We feel that a mix of 70% read/30% write, full random 4K transfers best represents this type of user environment. Our test allows us to see the drive enter into and reach a steady state as the test progresses.

Phase one of the test preconditions the drive for 1 hour with 128K sequential writes. Phase two of the test runs a 70% read/30% write, full random 4K transfer workload on the drive for 1 hour. We log and chart (phase two) IOPS data at 5-second intervals for 1 hour (720 data points). 60 data points = 5 minutes.
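For readers who want a feel for the shape of this workload, here is a minimal sketch that checks the logging arithmetic and generates a 70/30 stream of random 4K-aligned operations. It is not Iometer and issues no real I/O. The `mixed_ops` generator and its parameters are hypothetical, for illustration only.

```python
import random

BLOCK = 4096              # 4K transfers
READ_FRACTION = 0.70      # 70% reads, 30% writes
LOG_INTERVAL_S = 5        # IOPS logged every 5 seconds
PHASE_TWO_S = 60 * 60     # phase two runs for 1 hour

samples_logged = PHASE_TWO_S // LOG_INTERVAL_S       # data points on the chart
samples_per_5_min = (5 * 60) // LOG_INTERVAL_S       # data points per 5 minutes


def mixed_ops(count, span_bytes, seed=0):
    """Yield (op, offset) pairs: random 4K-aligned offsets, 70/30 read/write."""
    rng = random.Random(seed)
    blocks = span_bytes // BLOCK
    for _ in range(count):
        op = "read" if rng.random() < READ_FRACTION else "write"
        yield op, rng.randrange(blocks) * BLOCK


ops = list(mixed_ops(10_000, 1 << 30))   # 10,000 ops over a 1 GiB span
reads = sum(1 for op, _ in ops if op == "read")
print(f"{samples_logged} samples, {samples_per_5_min} per 5 minutes")
print(f"read share: {reads / len(ops):.1%}")
```

The read share will hover around 70% rather than hitting it exactly, since each operation is drawn independently.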

What we like about this test is that it reflects reality. Everything lines up, as it should. Consumer drives don't outperform Enterprise-Class SSDs that were designed for enterprise workloads. Consumer drives based on old technology are not outperforming modern Performance-Class SSDs, etc.

The 750 Series is more or less an enterprise SSD with consumer flash. This testing perfectly illustrates this fact. Enterprise-class SSDs will always outperform consumer-class SSDs in this brutal test. Our 750 array easily leaves the competition in the dust.

Maxed-Out Performance (MOP)

This testing is just to see what the drive is capable of in an FOB (Fresh Out of Box) state under optimal conditions, which is, for the most part, how most reviewers run these tests. We are utilizing Windows Server 2012 R2 64-bit for this testing. Same hardware, just an OS change.

Note the 4K write performance. This is what write-back caching can do. Windows Server delivers vastly superior 4K random performance in comparison to Windows 8-10.

Approaching 18K.

500K Vantage

We used Server 2012 for MOP instead of 2008 for Intel RST PCIe arrays because the system must be UEFI booted for Windows to even see an Intel RST (Rapid Storage Technology) created PCIe array. Server 2008, although it performs better than Server 2012, does not UEFI boot.

Final Thoughts

NVMe is rapidly changing the storage landscape. Simply going solid state is no longer the path to the best-performing storage solution; now it needs to be NVMe for the ultimate in performance. As enthusiasts, we are always on the lookout for a pathway to even more performance than the mainstream has to offer.

Storage enthusiasts such as myself are on a never-ending quest for the ultimate operating system (OS) disk, and this is the driving force that inevitably leads to RAID 0. As NVMe is becoming mainstream, committed enthusiasts are looking for even more storage performance than a single NVMe SSD has to offer, and Intel has delivered a pathway to a bootable NVMe array with its Z170 chipset.

The Z170 chipset is bandwidth limited to about 3.6 gigabytes per second sequential performance. Currently, 3.6 GB/s exceeds the performance of any consumer-based NVMe SSD on the market by quite a bit; especially when you consider that sequential write performance tops out at about 1.5GB/s on the fastest consumer NVMe SSDs.

Throughout our testing, we clearly demonstrated the performance gains that can be achieved with a bootable RAID 0 NVMe array for an OS disk. Intel's 750 SSD is still the leader when it comes to heavy mixed workloads and maximum IOPS.

We are more than satisfied with the SSD experience we were able to extract from our dual 400GB Intel 750 U.2 bootable array. If you are an avid enthusiast that simply has to have the best performing bootable storage solution, then you need an NVMe array. RAID 0 is back, and we encourage you to give it a try. You won't be disappointed.

Intel 750 400GB U.2 x2 Bootable NVMe Array

Pros:

- Five-Year Warranty

- Highest Consumer IOPS

- Superior Heavy Workload Performance

- Appealing Form Factor

Cons:

- Moderate Workload Performance
