We've already tested our SAPPHIRE Radeon R9 290X Tri-X GPUs at 4K, both at stock and overclocked, but the overclocked results were underwhelming considering the mammoth memory bandwidth numbers we saw.

We were not that impressed with the added performance from the overclock, given the increase in audible noise; when those fans spin up, it can get quite loud.

We'd like to thank Corsair, AMD, GIGABYTE, and InWin for making this all happen - without you, we couldn't have done it. AMD and SAPPHIRE really stepped up here, providing the two SAPPHIRE Radeon R9 290X Tri-X GPUs we're testing, so I'd like to take a moment to thank them personally - it means a lot to have your support, and we wouldn't be here today without it.

Now I'm sure you want to know the exact specs of the system, so here we go:

- CPU: Intel Core i7 4770K "Haswell" processor w/Corsair H110i cooler

- Motherboard: GIGABYTE Z87X-OC

- GPUs: SAPPHIRE Radeon R9 290X Tri-X (2x)

- RAM: Corsair Vengeance Pro 16GB kit of 2400MHz DDR3

- Storage: 240GB Corsair Neutron GTX and 480GB Corsair Neutron GTX

- Chassis: InWin X-Frame Limited Edition

- PSU: Corsair AX1200i digital PSU

- Software: Windows 7 Ultimate x64

- Drivers: Catalyst 13.12 WHQL

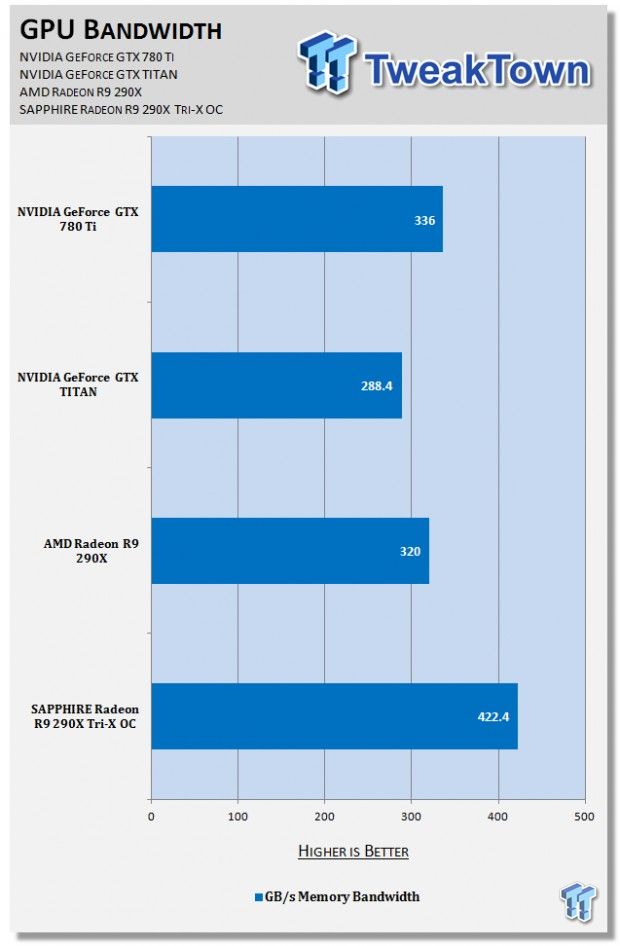

We pushed our already-overclocked R9 290X past its factory Tri-X settings, to an insane 6.6GHz effective memory clock (up from 5.2GHz on the Tri-X and 5GHz on the reference R9 290X). The result is some absolutely benchmark-busting memory bandwidth: from 320GB/sec on the reference R9 290X to a huge 422.4GB/sec on our overclocked Tri-X GPU.

Now, let's compare that 422.4GB/sec of available memory bandwidth against NVIDIA's fastest cards - well, two of them: the GeForce GTX TITAN, and one of the faster non-reference GeForce GTX 780 Tis.

As we can see, NVIDIA's GeForce GTX 780 Ti trumps the GTX TITAN when it comes to memory bandwidth - with 336GB/sec available to the GTX 780 Ti, and only 288.4GB/sec for the TITAN. Things shift up considerably on AMD's Hawaii GPU.

The stock Radeon R9 290X has 320GB/sec of available memory bandwidth, but with our overclock, the SAPPHIRE Radeon R9 290X Tri-X climbs to a massive 422.4GB/sec.
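All of these bandwidth figures come from one simple formula: effective memory data rate multiplied by bus width, divided by eight bits per byte. The sketch below reproduces them. The bus widths (512-bit for the Hawaii-based R9 290X, 384-bit for the GK110-based GTX TITAN and GTX 780 Ti) and the TITAN's 6.008GHz effective memory clock are taken from the cards' published specifications rather than from our testing, and the function name is our own.

```python
# Peak bandwidth (GB/sec) = effective data rate (GT/s) x bus width (bits) / 8.
# Bus widths and the TITAN's memory clock are published specs, not our measurements.


def peak_bandwidth_gbs(effective_gtps: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/sec."""
    return effective_gtps * bus_width_bits / 8


CARDS = {
    "GTX TITAN": (6.008, 384),
    "GTX 780 Ti": (7.0, 384),
    "R9 290X (reference)": (5.0, 512),
    "R9 290X Tri-X (factory)": (5.2, 512),
    "R9 290X Tri-X (our overclock)": (6.6, 512),
}

for name, (rate_gtps, width_bits) in CARDS.items():
    print(f"{name:30s} {peak_bandwidth_gbs(rate_gtps, width_bits):6.1f} GB/sec")
```

The wide 512-bit bus is why Hawaii pulls so far ahead once its memory is overclocked: every extra 1GHz of effective memory clock adds 64GB/sec, versus 48GB/sec on a 384-bit card.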

We're going to try to score some GTX 780 Ti and GTX TITAN cards from NVIDIA, as well as a highly overclockable GTX 780 Ti, for some comparisons, so expect a follow-up article with new numbers soon!

Fan impressions at stock clocks: they're perfect. The annoying fan on the reference Radeon R9 290X is gone; SAPPHIRE has fixed it with its Tri-X cooler. The triple-fan solution keeps the GPU cool at stock clocks without busting your eardrums.

Remember, with two SAPPHIRE Tri-X GPUs in CrossFire, we have six fans spinning at once, not just the three on a single card. Even so, the noise is manageable, and with headphones on while playing BF4, I couldn't hear a thing.

When overclocked: the difference is almost night and day. Those bandwidth numbers do have a flip side, and that is noise. All that extra GPU horsepower requires more power, which turns into heat, and the overclocked Tri-X GPUs end up reaching the temperatures of a stock reference R9 290X.

This is where most people who write about GPUs would dwell on the noise, but most gamers (as opposed to benchmarkers) will have headphones on or their speakers turned up. I'm not going to play Battlefield 4 without sound, but that still leaves the problem of putting that much noise into the room.

If you're sitting 10 feet away, you'll hear these fans. It's not enough to make you think twice about these particular GPUs, but it will definitely give you a touch of buyer's remorse, at least until you look at those bandwidth numbers.

Even with headphones on and in the middle of a game, I could still make out the fan noise during quieter moments. The fans were just audible, and taking the headphones off made an instant difference.

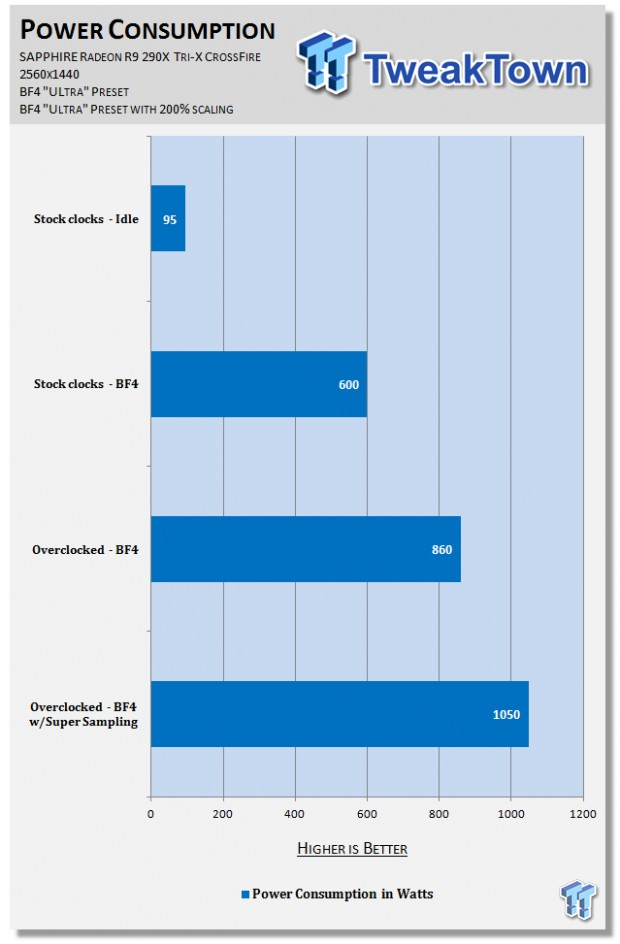

Total power consumption:

Note: These tests were run in Battlefield 4 on a 64-player multiplayer server, which provides real-world results. The numbers are for the entire system.

At stock clocks, a draw of around 600W for the entire machine (sans monitor) is to be expected from a pair of Radeon R9 290Xs in CrossFire. And 2560x1440 is a demanding resolution, a big step up from 720p and 1080p.

Power consumption climbs with our overclock, from around 600W to 860W. That's a large increase of roughly 43%, but it isn't surprising considering how far we pushed the clocks to get that bandwidth - and we're running two of these cards in CrossFire.

Something I didn't expect was the impact of the "200% scaling" option in Battlefield 4, which is just a fancy term for supersampling. This option renders the image at 200% of the display resolution, and considering we're running 2560x1440, that is a huge task for the GPUs.

It is rendering an image much larger than 4K, and sampling it down to fit onto our 2560x1440 display. This gives the GPUs a much tougher workload, something I didn't see coming - not this much, anyway.
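To put "much larger than 4K" into numbers, here is a quick pixel-count sketch. It assumes Battlefield 4's resolution scale applies to each axis, so 200% of 2560x1440 is 5120x2880. That reading is consistent with the claim above: doubling only the total pixel count would give about 7.4 million pixels, which is still short of 4K's roughly 8.3 million. The helper names are our own.

```python
def scaled_resolution(width: int, height: int, scale_percent: int) -> tuple[int, int]:
    """Render resolution for a resolution-scale setting.

    Assumption: the scale is applied to width and height separately.
    """
    return width * scale_percent // 100, height * scale_percent // 100


def pixel_count(resolution: tuple[int, int]) -> int:
    """Total pixels for a (width, height) pair."""
    width, height = resolution
    return width * height


native = (2560, 1440)
rendered = scaled_resolution(*native, 200)
uhd_4k = (3840, 2160)

print(rendered, pixel_count(rendered))                         # (5120, 2880) 14745600
print(pixel_count(rendered) / pixel_count(native))             # 4.0
print(round(pixel_count(rendered) / pixel_count(uhd_4k), 2))   # 1.78
```

Under that assumption, the GPUs are shading four times as many pixels as native 2560x1440, and about 1.78 times as many as 4K, before downsampling.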

We went from 860W on our overclocked Tri-X GPUs to a massive, unexpected 1050W. I couldn't believe my eyes when I saw the power meter ticking at 1050W, as this is something I would've expected from a 3-way CrossFire setup.
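The relative jumps are easy to check from the whole-system wattages we recorded. This small sketch (the function name is ours) works them out: stock to overclocked is about +43%, the 200% scaling adds roughly another 22% on top of that, and the total climb from stock is 75%.

```python
def percent_increase(before_w: float, after_w: float) -> float:
    """Relative change from before_w to after_w, in percent."""
    return (after_w - before_w) / before_w * 100


# Approximate whole-system wall readings from our BF4 64-player sessions.
READINGS = [
    ("stock -> overclocked", 600, 860),
    ("overclocked -> overclocked + 200% scaling", 860, 1050),
    ("stock -> overclocked + 200% scaling", 600, 1050),
]

for label, before_w, after_w in READINGS:
    print(f"{label}: {percent_increase(before_w, after_w):+.1f}%")
```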

Conclusion:

The first thing that popped into my head as the power consumption numbers began to sink in was: I can't wait to test these Tri-X GPUs in 4-way CrossFire. If we're using 1050W with two heavily overclocked SAPPHIRE Radeon R9 290X Tri-X GPUs, how much power would four of the things use?

We could be getting awfully close to a 2000W draw, which would mean rigging up a second PSU just to keep testing, or hunting down something more beastly. Corsair did just unveil its new AX1500i PSU...
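How close to 2000W might four cards get? The sketch below is a naive linear extrapolation, not a measurement. It assumes every added card draws as much as each of our two overclocked cards did, and it needs a figure for everything other than the GPUs (CPU, motherboard, drives, fans, PSU losses) that we did not measure, so `platform_w` is a hypothetical input and the 100-200W values below are placeholders, not data. The function name is ours.

```python
def extrapolate_system_watts(measured_w: float, measured_gpus: int,
                             target_gpus: int, platform_w: float) -> float:
    """Naive linear estimate of whole-system draw at a different GPU count.

    platform_w is a HYPOTHETICAL non-GPU share of the wall reading; we did
    not measure it. Each extra card is assumed to draw the same as the
    measured cards, which a real 4-way CrossFire load may not match.
    """
    per_gpu_w = (measured_w - platform_w) / measured_gpus
    return platform_w + per_gpu_w * target_gpus


# 1050W was measured with two overclocked cards and 200% scaling.
for platform_w in (100, 150, 200):  # placeholder guesses, not measurements
    estimate = extrapolate_system_watts(1050, 2, 4, platform_w)
    print(f"non-GPU share {platform_w}W -> roughly {estimate:.0f}W for 4-way")
```

With those placeholder values the estimate lands between 1900W and 2000W, which is why a single 1200W or even 1500W PSU would be in trouble.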

Last updated: Nov 3, 2020 at 07:12 pm CST