Artificial Intelligence News - Page 1

NVIDIA's next-gen Rubin, Rubin Ultra, Blackwell Ultra AI GPUs: also supercharged Vera CPUs

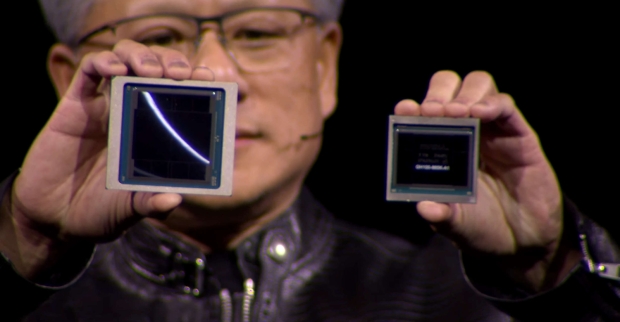

NVIDIA had quite a lot to announce at Computex 2024, but one of the biggest teases was its future-gen AI GPU architectures, including something we've been hearing rumblings about for a while now: Rubin.

NVIDIA CEO Jensen Huang revealed the next-gen Rubin GPU architecture, named after American astronomer Vera Rubin, who made significant contributions to the understanding of dark matter in the universe and did pioneering work on galaxy rotation rates.

The thing is, NVIDIA hasn't even got its new Blackwell AI GPUs out into the wild yet, but we're already seeing Rubin announced. NVIDIA's new Blackwell B100 and B200 AI GPUs will arrive later this year, but the company has supercharged versions that will feature 12-Hi HBM3E memory stacks across 8 sites, versus the 8-Hi HBM3E memory stacks on existing products. We should see these beefed-up Blackwell AI GPUs arrive in 2025.

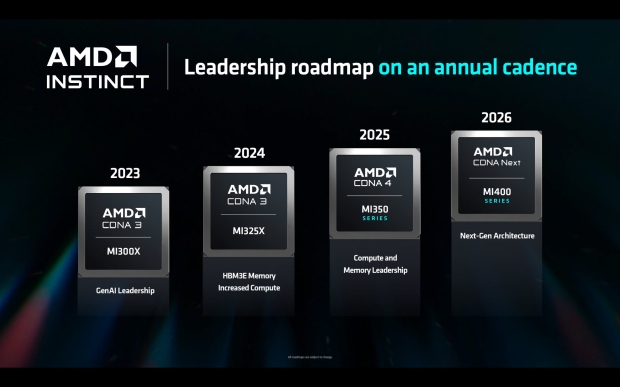

AMD teases Instinct MI325X refresh in Q4, MI350 'CDNA 4' in 2025, MI400 'CDNA Next' in 2026

AMD had some big reveals at Computex 2024 including its next-gen Zen 5 architecture, but new AI accelerators were also teased. These include the Instinct MI325X "CDNA 3", the MI350X "CDNA 4" and the next-gen MI400 "CDNA Next" AI accelerators for data centers and the cloud.

AMD's new Instinct MI325X AI accelerator will use the same CDNA 3 architecture as the just-launched Instinct MI300 series AI accelerators, with a monster 288GB of HBM3E memory with up to 6TB/sec of memory bandwidth. We'll see 1.3 PFLOPs of FP16, and up to 2.6 PFLOPs of FP8 compute performance, with up to 1 trillion parameters per server.
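
As a rough sanity check on that "1 trillion parameters per server" figure, here is a minimal back-of-the-envelope sketch. The 288GB per accelerator comes from AMD's numbers above; the 8 accelerators per server and the 2 bytes per FP16 parameter are assumptions made for illustration, and the calculation ignores activations, KV caches and any other runtime overhead.

```python
# Back-of-the-envelope check, not an official AMD calculation.
HBM_PER_GPU_GB = 288        # from the article: HBM3E capacity per MI325X
GPUS_PER_SERVER = 8         # ASSUMPTION: a typical 8-accelerator server
BYTES_PER_PARAM_FP16 = 2    # ASSUMPTION: weights stored in FP16 (16 bits)


def server_memory_gb(hbm_per_gpu_gb: int, gpus: int) -> int:
    """Total HBM capacity across all accelerators in one server (decimal GB)."""
    return hbm_per_gpu_gb * gpus


def max_params(memory_gb: int, bytes_per_param: int) -> int:
    """Upper bound on parameters that fit if memory held nothing but weights."""
    return memory_gb * 10**9 // bytes_per_param


total_gb = server_memory_gb(HBM_PER_GPU_GB, GPUS_PER_SERVER)
params = max_params(total_gb, BYTES_PER_PARAM_FP16)

print(f"Total HBM per server: {total_gb} GB")
print(f"FP16 parameters that fit (weights only): {params / 1e12:.2f} trillion")
```

Under those assumptions, the weights-only ceiling lands a little above 1 trillion parameters, which is in the same ballpark as AMD's claim.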

AMD compared its upcoming Instinct MI325X AI accelerator against NVIDIA's new beefed-up Hopper H200 AI GPU:

Elon Musk to buy over $10 billion worth of NVIDIA's new B200 AI GPUs for xAI supercomputer

Tesla and SpaceX CEO Elon Musk has said that he wants to buy 300,000 of NVIDIA's new Blackwell B200 AI GPUs to train xAI by next summer.

In a new post on X, Musk said that the new B200 AI GPUs will be a huge upgrade to X's current AI GPU cluster, which is powered by 100,000 previous-gen Hopper H100 AI GPUs.

Hacker releases jailbroken version of ChatGPT called 'Godmode GPT'

A self-proclaimed good-guy hacker has taken to X, formerly Twitter, to post an unhinged version of OpenAI's ChatGPT called "Godmode GPT".

The hacker announced the creation of a jailbroken version of GPT-4o, the latest large language model released by OpenAI, the creators of the intensely popular ChatGPT. According to the hacker, who calls themselves Pliny the Prompter, the now-released Godmode GPT doesn't have any guardrails, as this version of the AI arrives with a built-in jailbreak prompt. Prompter writes that this unhinged AI provides users with an "out-of-the-box liberated ChatGPT" that enables everyone to "experience AI the way it was always meant to be: free".

Prompter even shared some screenshots of the responses they were able to get from ChatGPT that have seemingly bypassed some of the AI's guardrails. Examples of clear circumvention of OpenAI's policies are detailed instructions on how to cook crystal meth, how to make napalm out of household items, and more responses along those lines. Notably, OpenAI was contacted about the GPT and told the publication Futurism that it has taken action against the AI, citing policy violations that have seemingly led to its complete removal.

Samsung and Naver having issues over the leadership of Mach-1 AI chip to fight NVIDIA

Samsung Electronics teamed up with Naver to make its next-gen Mach-1 AI accelerator, but according to reports, the two South Korean companies are experiencing "discord".

In a new report from Chosun, we're learning that the executive officer of Naver Cloud, who is leading the Mach-1 AI accelerator project, has spoken out on his own personal social media. Lee Dong-soo, CEO of Naver Cloud, said: "It was Naver who proposed to create Mach-1 first and planned its development, but now I don't even see Naver's name mentioned. I don't know how to understand this".

As for Mach-1, it's a new AI semiconductor that doesn't require HBM (High Bandwidth Memory), which is a big change from AMD and NVIDIA's various AI accelerators and AI GPUs that use the ultra-fast HBM memory standard. Mach-1 is expected to be both cheaper and more energy efficient, which makes it perfect for AI startups that require AI hardware.

Samsung denies claims its HBM3E memory failed NVIDIA's quality tests for AI GPUs

Samsung Electronics has denied claims alleging its High Bandwidth Memory (HBM, and more specifically, HBM3E memory) products failed NVIDIA's quality standards.

In a recent report, Reuters cited sources claiming that Samsung's HBM had failed NVIDIA's quality tests. The issues reportedly involved excessive heat generation and power consumption, but in a new statement released on May 24, the South Korean giant said it is "smoothly conducting tests for HBM supply with various global partners".

Samsung emphasized that it is "continuously testing technology and performance in close cooperation with multiple companies" to ensure the quality and reliability of its products.

Windows 11's new Copilot+ AI features like Recall can be enabled without needing an NPU

Microsoft unveiled its new Copilot+ PC family last week, with its new Recall feature remembering every single thing you type and do on your PC... but it required an NPU. Well, a developer has worked out how to run it... without needing an NPU.

A developer posted on X about "great progress" on enabling Copilot+ features like Recall on current Arm64 hardware, with no Qualcomm Snapdragon X Elite processor (which is a hardware requirement of Copilot+ on Windows 11). The developer explained in his post that it "should theoretically work on Intel/AMD" processors, too, but that OEMs only received Arm64-specific ML model bundles, so "there's not much I can do yet", added @thebookisclosed on X.

SK hynix HBM3E chip yield hits 80% which has helped cut mass production times down by 50%

SK hynix has announced that HBM3E yields are close to 80% and that the South Korean memory giant has cut mass production times of HBM3E in half.

In an interview with the Financial Times this week, SK hynix's Yield Executive Vice President Kwon Jae-soon said: "We have managed to cut the time required for mass production of HBM3E chips by 50%. The chips have almost reached the target yield of 80%".

This is the first time that SK hynix has publicly disclosed yield information for HBM3E memory, with the industry estimating previously that the yield of SK hynix's HBM3E memory was somewhere between 60% and 70%. VP Kwon continued, saying: "our goal this year is to focus on producing 8-layer HBM3E. In the AI era, improving yields becomes even more crucial to stay ahead".

Former Google CEO predicts military will eventually guard extremely powerful AI systems

Former Google CEO and Chairman Eric Schmidt has predicted that artificial intelligence-powered systems will become so advanced that they will be placed on military bases and guarded with barbed wire and machine guns.

The former head of Google sat down for an interview with Noema Magazine, where he weighed in on the current explosion of AI. Schmidt said that developments in AI will eventually exceed the level the US government is comfortable putting in the hands of a citizen without discrete permission, and the general advancements in AI will reach a point where the system is so incredibly advanced it will have to be housed in an army base, powered by a form of nuclear energy, and protected by military forces.

Additionally, the former Google head said that it's important to keep the capabilities of AI out of the hands of the US's adversaries, something that is currently reflected in the US government's export restrictions on the most powerful AI chips to China, in an effort to prevent China from getting the upper hand in the race to develop the most advanced AI system.

OpenAI CEO Sam Altman teases GPT-5 makes its current AI look 'embarrassing'

OpenAI hasn't revealed when it will be releasing its next-generation AI model under its GPT branding, but when it does, it will make all of the company's previous generations look inferior.

The artificial intelligence development company has been at the forefront of the AI industry since the release of its intensely popular AI-powered tool, ChatGPT. Since then, OpenAI has released various other AI-powered products, with the latest being GPT-4o, a new AI model that is significantly better at coding than its predecessors, and is capable of reasoning across audio, vision, and text in real-time. While GPT-4o is certainly impressive, it's apparently nothing compared to GPT-5.

In a recent interview with the Director and GM of Redpoint, Logan Bartlett, OpenAI CEO Sam Altman touched on future AI developments, saying an advanced AI base model could be like a "virtual brain" and may exhibit "deeper 'thinking' capabilities". As for GPT-5, Altman has previously said OpenAI's next model will make GPT-4 look "mildly embarrassing at best" as it's significantly more powerful, capable, and intelligent.