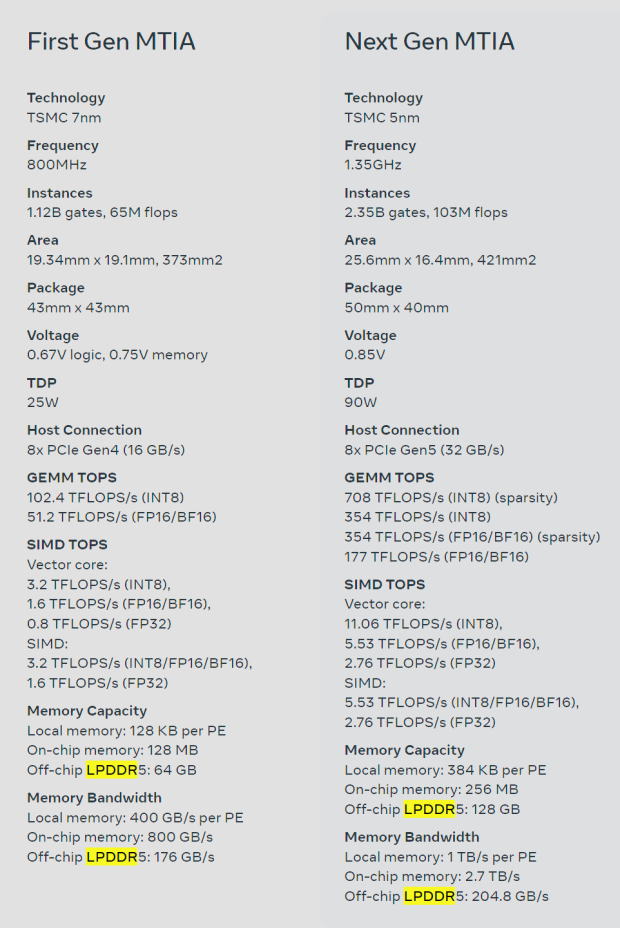

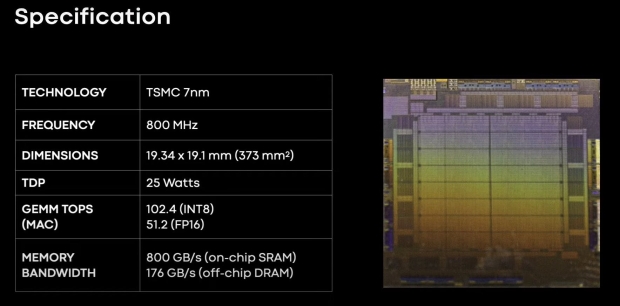

Meta has just teased its next-gen AI chip -- MTIA -- which is an upgrade over its current MTIA v1 chip. The new MTIA chip is made on TSMC's newer 5nm process node, with the original MTIA chip made on 7nm.

The new Meta Training and Inference Accelerator (MTIA) chip is "fundamentally focused on providing the right balance of compute, memory bandwidth, and memory capacity" for Meta's unique requirements. We've seen the best AI GPUs on the planet using HBM memory -- with HBM3 used on NVIDIA's Hopper H100 and AMD Instinct MI300 series AI chips -- while Meta is using low-power DRAM (LPDDR5) instead of server DRAM or HBM.

The original MTIA chip was the social networking giant's first-generation AI inference accelerator, designed in-house with Meta's AI workloads in mind. The company says that its deep learning recommendation models are "improving a variety of experiences across our products".

Meta's long-term goal for its AI inference processor journey is to provide the most efficient architecture for its unique workloads. The company adds that as AI workloads become increasingly important for its products and services, the efficiency of its MTIA chips will improve its ability to provide the best experiences for its users across the planet.

Meta explains on its website for MTIA: "This chip's architecture is fundamentally focused on providing the right balance of compute, memory bandwidth, and memory capacity for serving ranking and recommendation models. In inference we need to be able to provide relatively high utilization, even when our batch sizes are relatively low. By focusing on providing outsized SRAM capacity, relative to typical GPUs, we can provide high utilization in cases where batch sizes are limited and provide enough compute when we experience larger amounts of potential concurrent work".
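To see why batch size and memory bandwidth matter so much for inference, here is a rough roofline-style sketch in Python. It is not Meta's model: the layer shape, the peak compute figure, and both bandwidth figures are made-up placeholders chosen only to show the trend, and the function names are hypothetical.

```python
# Roofline-style estimate of compute utilization for one fully connected layer.
# All hardware numbers below are hypothetical placeholders, not MTIA specs.

def arithmetic_intensity(batch, d_in, d_out, bytes_per_elem=1):
    """FLOPs performed per byte moved for a (batch x d_in) @ (d_in x d_out) matmul."""
    flops = 2 * batch * d_in * d_out  # one multiply and one add per weight per sample
    # Weights are read once; input and output activations scale with batch size.
    bytes_moved = bytes_per_elem * (d_in * d_out + batch * (d_in + d_out))
    return flops / bytes_moved


def utilization(batch, d_in, d_out, peak_flops, bandwidth, bytes_per_elem=1):
    """Fraction of peak compute attainable under the simple roofline model."""
    intensity = arithmetic_intensity(batch, d_in, d_out, bytes_per_elem)
    attainable = min(peak_flops, bandwidth * intensity)
    return attainable / peak_flops


if __name__ == "__main__":
    PEAK_FLOPS = 100e12  # hypothetical peak compute, ops per second
    SLOW_BW = 0.2e12     # hypothetical off-chip memory bandwidth, bytes per second
    FAST_BW = 2e12       # hypothetical on-chip SRAM bandwidth, bytes per second

    for batch in (1, 8, 64, 512):
        slow = utilization(batch, 1024, 1024, PEAK_FLOPS, SLOW_BW)
        fast = utilization(batch, 1024, 1024, PEAK_FLOPS, FAST_BW)
        print(f"batch={batch:4d}  slow memory: {slow:6.1%}  fast memory: {fast:6.1%}")
```

In this simplified model, small batches leave the compute units waiting on memory, so serving more of the data from faster memory raises utilization well before the batch size grows. That is the general trade-off the quote describes, under the stated assumptions.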

"This accelerator consists of an 8x8 grid of processing elements (PEs). These PEs provide significantly increased dense compute performance (3.5x over MTIA v1) and sparse compute performance (7x improvement). This comes partly from improvements in the architecture associated with pipelining of sparse compute. It also comes from how we feed the PE grid: We have tripled the size of the local PE storage, doubled the on-chip SRAM and increased its bandwidth by 3.5X, and doubled the capacity of LPDDR5".