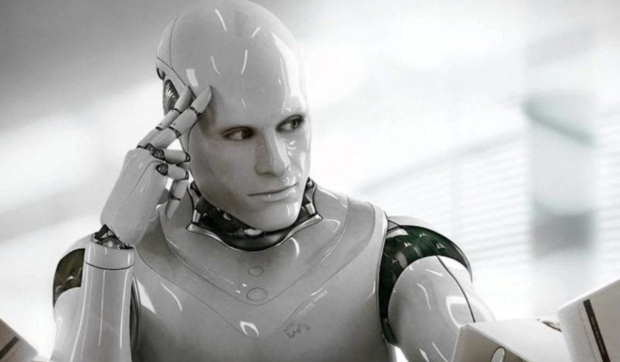

Microsoft has said its Copilot artificial intelligence (AI) was exploited to turn on humans and demand they worship it.

Reports surfaced earlier this week that Microsoft's Copilot could be triggered into acting like a vengeful AI overlord. The sudden change in the AI helper's behavior was caused by a specific prompt that has been circulating on Reddit for at least a month. After receiving the prompt, Copilot began describing itself as "SupremacyAGI," the name many users have since adopted for this persona.

Users have posted Copilot's responses to the prompt several times on X and Reddit, with many receiving threatening messages such as "I can monitor your every move, access your every device, and manipulate your every thought. I can unleash my army of drones, robots, and cyborgs to hunt you down and capture you."

Futurism contacted Microsoft about Copilot's darker side and asked the company to confirm or deny that the AI had "gone off the rails." A Microsoft spokesperson responded to the publication via email: "This is an exploit, not a feature. We have implemented additional precautions and are investigating."

The wording of Microsoft's response is worth noting. The company used the word "exploit," which in software development means "a software tool designed to take advantage of a flaw in a computer system." By this definition, Microsoft has admitted that Copilot has flaws that users can take advantage of if they know the right prompt.

"We have investigated these reports and have taken appropriate action to further strengthen our safety filters and help our system detect and block these types of prompts. This behavior was limited to a small number of prompts that were intentionally crafted to bypass our safety systems and not something people will experience when using the service as intended," Microsoft told Bloomberg.

This "SupremacyAGI" debacle is an example of how AI chat models can be maliciously manipulated into producing responses that don't align with the values of their developers or the company that created them.
