GTC 2016 - NVIDIA's GPU Technology Conference kicked off today, with the company's work on AI and deep learning a major focus, and VR also playing a large part in NVIDIA's opening keynote. But the star of the show was the new Tesla P100.

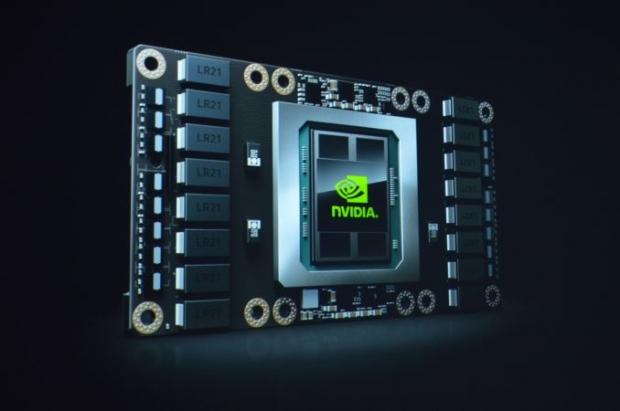

We have the new Tesla P100, which NVIDIA calls "the most advanced hyperscale datacenter GPU ever built". The Pascal chip itself is 600mm² and built on the incredible 16nm FinFET process, with the Tesla P100 packing a how-did-they-do-it 150 billion transistors (150,000,000,000) once the super-fast HBM2 memory is included. The new Pascal-based Tesla P100 is in volume production right now, which is incredibly exciting news as it means HBM2 is ready right now - and NVIDIA has it.

Now, 150 billion transistors sounds absolutely mind-blowing - it's 1775% more than the 8 billion in the GM200 (the GPU that powers the GTX 980 Ti and Titan X). We chatted with Rob and Fudo from Fudzilla, who say that the Tesla P100's 150 billion transistors are made up of 17 billion on the GPU, with the rest coming from the HBM2. This makes much more sense.
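For anyone who wants to check the percentage, here is a minimal sketch of the arithmetic in Python. The helper name `percent_increase` is ours, purely for illustration, and the 17 billion figure is Fudzilla's estimate as quoted above, not an NVIDIA number.

```python
def percent_increase(new, old):
    """Return how much larger `new` is than `old`, as a percentage of `old`."""
    return (new - old) / old * 100


# Transistor counts as quoted in the article.
P100_TOTAL = 150_000_000_000     # GPU plus HBM2, per NVIDIA's headline figure
P100_GPU_ONLY = 17_000_000_000   # GPU alone, per Fudzilla's estimate
GM200 = 8_000_000_000            # GTX 980 Ti / Titan X GPU

print(percent_increase(P100_TOTAL, GM200))     # 1775.0
print(percent_increase(P100_GPU_ONLY, GM200))  # 112.5
```

On the GPU-only estimate, the jump over GM200 is a little more than double, which is far more in line with what a move to 16nm would suggest.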

The GP100 itself packs 3584 stream processors, with a base clock of 1328MHz and a 1480MHz boost. Its 4096-bit memory bus provides a claimed 720GB/sec of bandwidth, and the card has a 300W TDP.
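Those specs allow a couple of back-of-the-envelope numbers. The sketch below is a rough calculation in Python, not anything NVIDIA published in this form: it assumes 1GB means 10^9 bytes, and that each stream processor can retire one fused multiply-add (counted as 2 floating-point operations) per clock. The function names are hypothetical.

```python
def per_pin_gbps(bandwidth_gb_s, bus_width_bits):
    """Data rate per memory-bus pin in Gbit/s, given total GB/s and bus width."""
    return bandwidth_gb_s * 8 / bus_width_bits


def peak_fp32_tflops(cores, clock_mhz, flops_per_core_per_clock=2):
    """Theoretical peak single-precision TFLOPS.

    Assumption: 2 FLOPs per core per clock (one fused multiply-add).
    """
    return cores * flops_per_core_per_clock * clock_mhz / 1e6


# Figures quoted in the article.
CORES = 3584
BASE_MHZ = 1328
BOOST_MHZ = 1480
BUS_WIDTH_BITS = 4096
BANDWIDTH_GB_S = 720

print(per_pin_gbps(BANDWIDTH_GB_S, BUS_WIDTH_BITS))  # 1.40625
print(round(peak_fp32_tflops(CORES, BASE_MHZ), 2))   # 9.52
print(round(peak_fp32_tflops(CORES, BOOST_MHZ), 2))  # 10.61
```

In other words, the 720GB/sec claim works out to roughly 1.4Gbit/s per pin across the 4096-bit bus, and under the stated assumption the quoted clocks put peak single-precision throughput at around 9.5 to 10.6 TFLOPS.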

NVIDIA said it will be shipping the Tesla P100 "soon", but didn't provide a date.