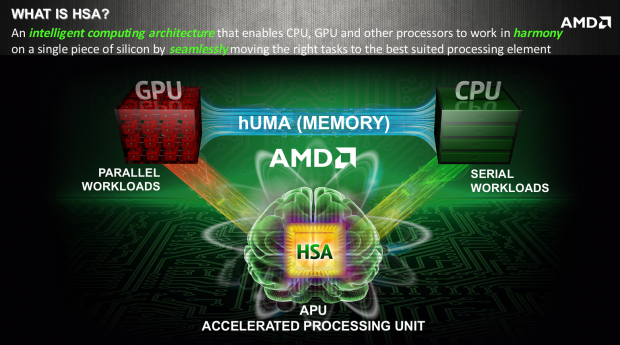

This morning AMD announced the next big advancement in its APU technology. AMD heterogeneous Uniform Memory Access (hUMA) is an intelligent computing architecture that enables the CPU, GPU, and other processors to work in harmony on a single piece of silicon, sharing a single pool of memory, and to seamlessly move tasks to the best-suited processing unit.

This means that within a single application, some calculations can run on the CPU while others run on the GPU, both accessing the same memory through the same addresses without worrying about which software touched the data last. AMD achieved this by first moving the GPU and CPU onto a single die and then giving the GPU direct access to CPU memory within the same address space. Finally, AMD simplified data sharing by updating how the GPU handles memory so that it can follow pointers and traverse complex data structures the same way the CPU does. These advancements allow for better efficiency and lower power consumption.
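To make the pointer-sharing point concrete, here is a toy Python model. It is not AMD's API or a description of the hardware; "memory" is just a dict mapping made-up integer addresses to linked-list nodes, and the function names (`build_list`, `sum_list`, `copy_to_device`) are hypothetical. In the separate-memory model, a pointer-based structure has to be flattened and copied into the GPU's own memory with every pointer rewritten. In the shared model, the "GPU" simply walks the same pointers the CPU built.

```python
# Toy model only (hypothetical names, not AMD's API): "memory" is a dict
# mapping integer addresses to nodes, and a "pointer" is just an address.
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Node:
    value: int
    next_addr: Optional[int]  # address of the next node in the SAME memory


def build_list(mem: Dict[int, Node], values: List[int], base: int = 0x1000) -> Optional[int]:
    """Build a linked list inside `mem` and return the head's address."""
    head = None
    # Build back to front so each node can point at the node after it.
    for i, v in reversed(list(enumerate(values))):
        addr = base + i * 16
        mem[addr] = Node(v, head)
        head = addr
    return head


def sum_list(mem: Dict[int, Node], head: Optional[int]) -> int:
    """Follow pointers through `mem`; stands in for a GPU kernel."""
    total, addr = 0, head
    while addr is not None:
        node = mem[addr]
        total += node.value
        addr = node.next_addr
    return total


def copy_to_device(host_mem: Dict[int, Node], head: Optional[int]):
    """Separate-memory model: flatten the list and rebuild it in a new
    'device' memory, so every pointer gets a new device-side address."""
    values = []
    addr = head
    while addr is not None:
        values.append(host_mem[addr].value)
        addr = host_mem[addr].next_addr
    dev_mem: Dict[int, Node] = {}
    dev_head = build_list(dev_mem, values, base=0x8000)
    return dev_mem, dev_head
```

With a list built by `build_list(host_mem, [1, 2, 3, 4])`, the shared model calls `sum_list(host_mem, head)` directly, and a later CPU write to `host_mem[head].value` is immediately visible to it. The copied model only sees the snapshot taken by `copy_to_device`, and the original `head` address means nothing inside `dev_mem`.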

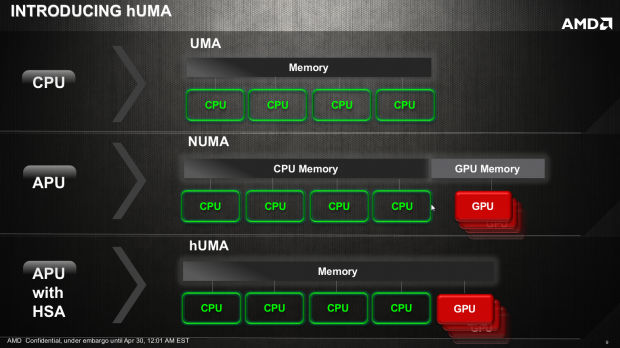

AMD is touting hUMA as restoring the GPU to the world of uniform memory access. As things stand now, the GPU uses non-uniform memory access, which creates a host of coding headaches for developers. With hUMA, application code can be simplified and made more efficient throughout the code base. hUMA will also allow discrete GPUs to access the memory space of other discrete GPUs or APUs.

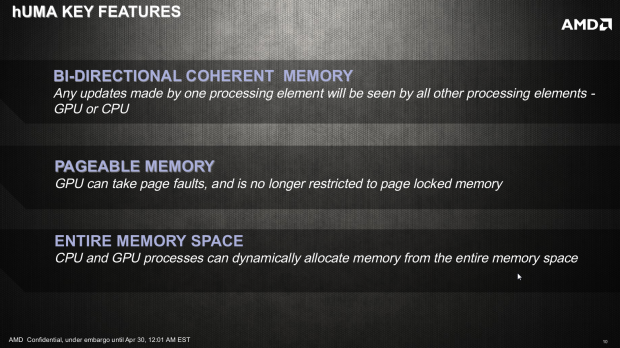

hUMA's key features are as follows:

- Bi-Directional Coherent Memory - This means that any updates made by one processing element will be seen by all other processing elements such as the CPU or GPU.

- Pageable Memory - This allows the GPU to handle page faults the same way the CPU does. It also removes the restriction to page-locked memory.

- Entire Memory Space - Both CPU and GPU processes can dynamically allocate memory from the entire memory space. This means they have access not only to physical memory but also to the entire virtual memory address space.
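The pageable-memory idea can be illustrated with a toy demand-paging model in Python. This is only a sketch under stated assumptions: the `PagedMemory` class, the 4 KiB page size, and the in-memory "backing store" are hypothetical stand-ins, not how AMD's hardware or drivers work. The point is that an access to a page that is not yet resident triggers a fault that loads just that page, instead of requiring the whole buffer to be page-locked up front. The same idea underlies loading only the needed pages of a large texture.

```python
# Toy demand-paging model (hypothetical, not AMD's implementation).
PAGE_SIZE = 4096  # assumed page size for the illustration


class PagedMemory:
    def __init__(self, backing: bytes):
        self.backing = backing   # stands in for disk or swap
        self.resident = {}       # page number -> bytes currently loaded
        self.faults = 0          # number of page faults serviced

    def read(self, addr: int) -> int:
        """Return the byte at `addr`, loading its page on first access."""
        page, offset = divmod(addr, PAGE_SIZE)
        if page not in self.resident:
            # Page fault: load only the page that was touched.
            self.faults += 1
            start = page * PAGE_SIZE
            self.resident[page] = self.backing[start:start + PAGE_SIZE]
        return self.resident[page][offset]
```

For a four-page buffer, reading one byte from page 0 and one from page 1 leaves only those two pages resident; pages 2 and 3 are never loaded.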

hUMA is also said to be "extremely friendly to virtualization" and could become a game changer in the server space. 3D rendering performance is also said to benefit greatly from hUMA, as it will allow partial texture pages to be loaded instead of loading an entire large texture as is done now. AMD says we will see hUMA in practice in its next-generation "Kaveri" APUs, which are set to launch in the next few months. I have included the entire slide show below if you are interested in learning more.