Introduction

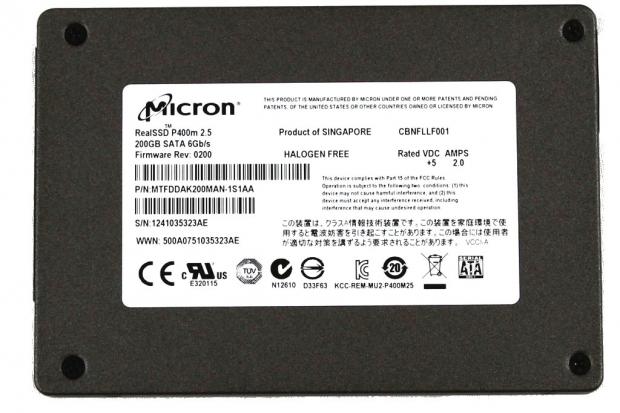

The Micron P400m is the latest addition to Micron's well-rounded enterprise SSD lineup. The P400m fills the mainstream enterprise slot, while the P320h serves as Micron's flagship PCIe solution and the P400e as its entry-level SSD.

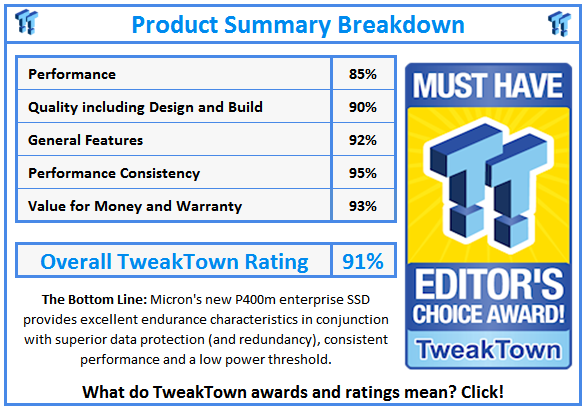

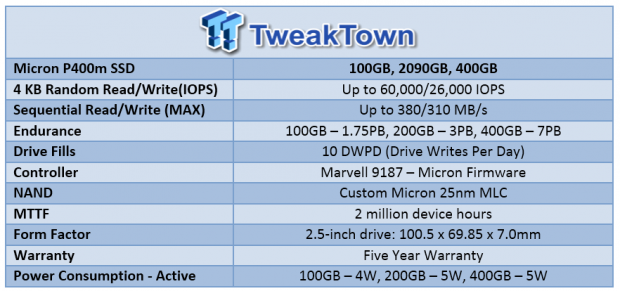

The new P400m enterprise SSD pairs Micron 25nm MLC NAND with the Marvell 9187 controller. One of the P400m's many compelling features is its write endurance of 10 DWPD (Drive Writes per Day), backed by Micron's first five-year warranty on an enterprise SSD.
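Because DWPD is defined relative to the drive's capacity, the rating converts into a total write budget with simple multiplication. The sketch below assumes that one drive write equals the full user capacity, that the rating applies every day of the five-year warranty, and 365-day years; the function name is illustrative only.

```python
def total_writes_tb(capacity_gb: float, dwpd: float, years: float = 5.0) -> float:
    """Total data that can be written over the warranty period, in TB (1 TB = 1,000 GB).

    Assumes one drive write = the full user capacity and 365-day years.
    """
    days = years * 365
    return capacity_gb * dwpd * days / 1000


# Capacity points of the P400m family, rated at 10 DWPD over five years.
for capacity in (100, 200, 400):
    print(f"{capacity}GB: {total_writes_tb(capacity, 10):,.0f} TB")
```

Under these assumptions the 400GB model works out to 7,300 TB, roughly 7.3 PB of writes over the warranty period.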

The P400m delivers sequential read/write speeds of 380/310MB/s and random read/write performance of 60,000/26,000 IOPS. While the random write figure may seem low to the casual observer, it reflects Micron's traditionally conservative specifications: measurements are taken with the SSD in steady state under full-span random writes. This is the most demanding scenario for any storage solution, and many other SSD manufacturers advertise only FOB (Fresh Out of Box) specifications.

Micron's previous enterprise SSD, the P300m, was an SLC SSD that provided the ultimate in write endurance. SLC NAND can withstand 100,000 P/E cycles, making it incredibly resilient under heavy write workloads. Advances in technology have since allowed Micron to extend the same 10 DWPD endurance to the MLC-based P400m, and giving an MLC drive endurance similar to that of an SLC SSD is quite the feat.

Micron's eXtended Performance and Enhanced Reliability Technology (XPERT) makes its debut with the P400m series of SSDs. This suite of hardware and firmware optimizations provides a dramatic increase in the longevity and endurance of the 25nm MLC NAND employed in the P400m. XPERT also includes data redundancy in the form of RAIN (Redundant Array of Independent NAND) functionality. This redundancy is an extra layer of protection that can recover user data should the device suffer an uncorrectable data error, or even the loss of a whole page or block of data. We cover the XPERT suite in more detail in the next section.

Micron uses a custom high-quality MLC NAND from its own foundry as a key building block for the P400m. Because Micron manufactures its own components, it can create custom NAND for its products. This custom NAND carries a designation not found on Micron product sheets, and Micron will not supply resellers with this specific NAND outside of the P400m. Micron also produces its own DRAM and serial NOR flash, which gives it total control of the data media. This inherent knowledge of component design, manufacture, and core characteristics provides Micron with an advantage during the design and integration phases.

Micron pairs the P400m with the proven Marvell 9187 controller. This popular 8-channel controller connects through a 6Gb/s SATA interface and allows Micron to develop its own firmware. This tailor-made firmware is tuned to work efficiently with Micron's NAND, adapting to the NAND's characteristics as it ages to wring more endurance from the flash.


The P400m is intended for mainstream enterprise use and will perform well in a variety of environments. Financial applications, virtualization appliances, logging, VDI, boot storm, data warehouses and VOD (Video on Demand) applications will all benefit from the Micron P400m. One benefit of the SATA connection is that it allows for a wide variety of applications and deployments. Micron already has a SAS alternative in the works for those who need the functionality provided by SAS.

The SATA space is a good fit for an SSD designed and engineered for mainstream use. With the enterprise SATA SSD market projected to jump from two million units in 2013 to three million units in 2016, the time is ripe for manufacturers with their own foundries to begin churning out mainstream enterprise SSDs en masse.

Micron P400m Architecture

XPERT

XPERT (eXtended Performance and Enhanced Reliability Technology) provides enhanced defect and error management. This approach combines hardware-based error correction algorithms with static and dynamic firmware-based wear-leveling algorithms. Micron has implemented data protection and security at every level of the SSD's design, going beyond what many competing solutions offer to preserve user data.

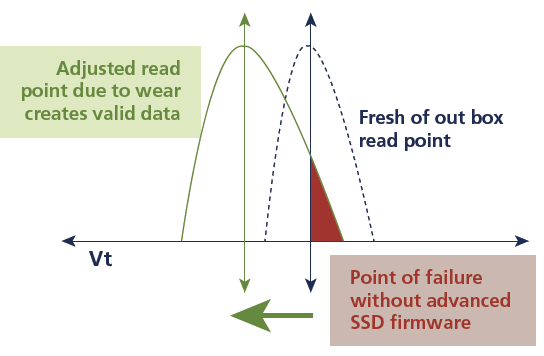

ARM/OR

ARM/OR (Adaptive Read Management/Optimized Read) dynamically tunes how the NAND is read as the SSD ages. Over time, the voltage levels that represent stored data drift, so fixed read points become less accurate and produce more bit errors. Adaptive management algorithms detect these shifts and adjust the read points on the fly to match them. This tuning greatly improves the longevity of the NAND.
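Micron has not published how ARM/OR chooses its read points, but the general idea of adaptive read-point tuning can be sketched as a search: try several candidate read offsets and keep the one that yields the fewest bit errors. Everything here is hypothetical, including the quadratic error model, the offset units, and the function names.

```python
from typing import Callable, Iterable


def calibrate_read_offset(bit_errors_at: Callable[[int], int], candidates: Iterable[int]) -> int:
    """Return the read-point offset (arbitrary units) that yields the fewest bit errors."""
    return min(candidates, key=bit_errors_at)


def make_error_model(drift: int, base_errors: int = 2) -> Callable[[int], int]:
    """Hypothetical cell model: the ideal read point has drifted by `drift` units,
    and bit errors grow with the distance from it."""
    return lambda offset: base_errors + 5 * (offset - drift) ** 2


aged_block = make_error_model(drift=-3)
best = calibrate_read_offset(aged_block, range(-8, 9))
print(best, aged_block(0), aged_block(best))  # -3 47 2
```

Reading the aged block at the original offset (0) produces 47 errors in this toy model, while the recalibrated offset brings it back to the baseline of 2.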

RAIN

Micron has taken error correction and avoidance to the next level with RAIN (Redundant Array of Independent NAND), which calculates and stores parity data. This is in essence a RAID 5 implementation at the device level, providing the ability to recover data in the event of an error or failure. The key advantage of RAIN is that it protects data beyond what the standard ECC approach can correct.

The ability to use parity to recreate data, even when ECC cannot correct it, provides an almost 'bulletproof' method of protecting against the growing number of errors that come with NAND as it ages. This transparent process takes place without any degradation of the SSD's performance, but it does come at the expense of capacity. Storing the parity requires extra NAND, and Micron has compensated for this with a generous 70% overprovisioning on the P400m.
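RAIN's RAID 5-style protection rests on XOR parity: the parity page is the XOR of every data page in a stripe, so any one missing page equals the XOR of the parity and the surviving pages. The sketch below shows that arithmetic only; the stripe width, the tiny page size, and the function names are illustrative assumptions, since the actual RAIN stripe layout is not described here.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equally sized pages."""
    if len(a) != len(b):
        raise ValueError("pages in a stripe must be the same size")
    return bytes(x ^ y for x, y in zip(a, b))


def compute_parity(pages: list[bytes]) -> bytes:
    """Parity page = XOR of every data page in the stripe."""
    return reduce(xor_bytes, pages)


def rebuild_page(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover the one missing page by XOR-ing the parity with all surviving pages."""
    return reduce(xor_bytes, surviving, parity)


# Hypothetical stripe of four data pages, shrunk to 4 bytes each for readability.
stripe = [b"\x01\x02\x03\x04", b"\x10\x20\x30\x40", b"\xaa\xbb\xcc\xdd", b"\x0f\x0e\x0d\x0c"]
parity = compute_parity(stripe)

lost = 2  # pretend this page is uncorrectable by ECC
survivors = stripe[:lost] + stripe[lost + 1:]
print(rebuild_page(survivors, parity) == stripe[lost])  # True
```

As with RAID 5, a single parity page can rebuild only one lost page per stripe, and the parity pages themselves consume NAND that would otherwise be user capacity.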

ReCAL

ReCAL (Reduced Command Access Latency) lowers maximum command access latency by managing internal operations at a granular level. This includes scheduling wear leveling and other housekeeping operations to minimize their impact on host I/O requests. ReCAL also optimizes the logical-to-physical (L2P) internal data structure by breaking it into a series of smaller, more manageable chunks.
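ReCAL's internals are not published, so the following is only a hypothetical illustration of why a chunked L2P table helps: when the map is split into small fixed-size chunks, an update dirties just one chunk, and housekeeping can persist a few small chunks at a time instead of the whole table, leaving more room to service host I/O. The class name, chunk size, and simulated flush are assumptions.

```python
from typing import Optional


class ChunkedL2P:
    """Hypothetical logical-to-physical map split into fixed-size chunks."""

    def __init__(self, num_lbas: int, entries_per_chunk: int = 1024) -> None:
        self.entries_per_chunk = entries_per_chunk
        num_chunks = -(-num_lbas // entries_per_chunk)  # ceiling division
        self.chunks: list[list[Optional[int]]] = [
            [None] * entries_per_chunk for _ in range(num_chunks)
        ]
        self.dirty: set[int] = set()  # chunks modified since the last flush

    def _locate(self, lba: int) -> tuple[int, int]:
        return divmod(lba, self.entries_per_chunk)  # (chunk index, offset in chunk)

    def update(self, lba: int, physical_addr: int) -> None:
        chunk, offset = self._locate(lba)
        self.chunks[chunk][offset] = physical_addr
        self.dirty.add(chunk)  # only this small chunk needs persisting later

    def lookup(self, lba: int) -> Optional[int]:
        chunk, offset = self._locate(lba)
        return self.chunks[chunk][offset]

    def flush(self) -> list[int]:
        """Simulate persisting only the dirty chunks; return their indices."""
        written = sorted(self.dirty)
        self.dirty.clear()
        return written


l2p = ChunkedL2P(num_lbas=10_000)
l2p.update(5, 0xA000)
l2p.update(2_050, 0xB000)
print(l2p.lookup(5) == 0xA000, l2p.flush())  # True [0, 2]
```

Two updates touch only two of the ten chunks, so the simulated flush writes 2 chunks rather than the full table.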

Over the course of its life, NAND passes through three distinct failure regions: early-life, long-term use, and end-of-life. The P400m is pre-cycled at the factory during the manufacturing process, which eliminates the early-life failure region.

DataSAFE

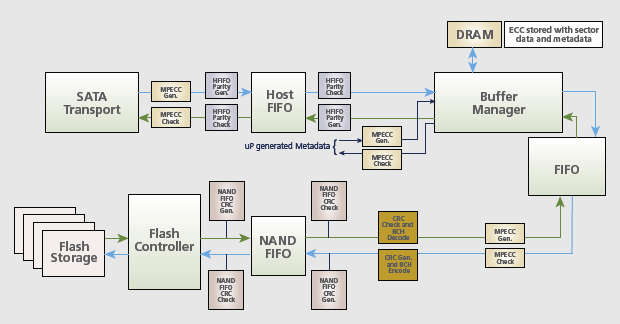

DataSAFE protects user data by embedding LBA (Logical Block Address) metadata alongside the user data, ensuring that the SSD returns the exact data requested.
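The LBA-tagging idea can be sketched as follows: each stored sector carries the LBA it was written for, and a read is rejected if the tag does not match the LBA the host asked for. This catches a misdirected read that ECC alone would treat as valid data, since ECC only checks the data's internal consistency. The data structures and names are hypothetical; the P400m's on-media metadata format is not described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaggedSector:
    lba: int        # logical block address recorded alongside the data
    payload: bytes


class MisdirectedReadError(Exception):
    """Raised when the stored LBA tag does not match the requested LBA."""


def write_sector(media: dict[int, TaggedSector], physical: int, lba: int, data: bytes) -> None:
    media[physical] = TaggedSector(lba, data)


def read_sector(media: dict[int, TaggedSector], physical: int, requested_lba: int) -> bytes:
    sector = media[physical]
    if sector.lba != requested_lba:
        # Data is internally valid but belongs to another LBA: refuse to return it.
        raise MisdirectedReadError(f"wanted LBA {requested_lba}, found LBA {sector.lba}")
    return sector.payload


media: dict[int, TaggedSector] = {}
write_sector(media, physical=7, lba=100, data=b"hello")
write_sector(media, physical=8, lba=101, data=b"world")
print(read_sector(media, 7, 100))  # b'hello'
try:
    read_sector(media, 8, 100)  # simulate a corrupted mapping entry pointing at the wrong page
except MisdirectedReadError as err:
    print("caught:", err)
```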

DataSAFE also protects data as it moves through the SSD. Typical SSD architecture consists of two 'lanes' of traffic through the device. Input and Output operations travel between a series of volatile SRAM, cache and buffer management components. More potential failure points have been introduced as the architecture of SSDs has advanced.

Each of these points in the data traverse can subject the data to corruption and errors. The P400m uses expanded data path protection features that ensure the validity of the data as it travels through these potential failure points. User data is effectively "wrapped" in an envelope as it moves through them: enhanced ECC and CRC parity algorithms within the firmware embed RAID-like parity data before each potential failure point in the data traverse, then validate that parity after the data passes through the component.

This provides end-to-end data path protection for the Micron P400m.
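The envelope approach amounts to attaching a check value before data enters a component and verifying it on the way out. The sketch below uses CRC-32 from Python's standard `zlib` module; the stage names, the simulated bit flip, and the helper functions are hypothetical. It only detects corruption, whereas the P400m also applies ECC.

```python
import zlib
from typing import Optional


def wrap(data: bytes) -> tuple[bytes, int]:
    """Seal data in an 'envelope': the payload plus its CRC-32."""
    return data, zlib.crc32(data)


def unwrap(envelope: tuple[bytes, int], stage: str) -> bytes:
    """Verify the CRC after the data leaves a component."""
    payload, crc = envelope
    if zlib.crc32(payload) != crc:
        raise ValueError(f"corruption detected after {stage}")
    return payload


def traverse(data: bytes, stages: list[str], corrupt_at: Optional[str] = None) -> bytes:
    """Move data through each stage, wrapping before and checking after."""
    for stage in stages:
        payload, crc = wrap(data)
        if stage == corrupt_at and payload:
            payload = bytes([payload[0] ^ 0x01]) + payload[1:]  # simulated bit flip
        data = unwrap((payload, crc), stage)
    return data


# Hypothetical data path through the drive.
path = ["host interface", "SRAM buffer", "DRAM cache", "NAND interface"]
print(traverse(b"user data", path))  # b'user data'
try:
    traverse(b"user data", path, corrupt_at="DRAM cache")
except ValueError as err:
    print(err)  # corruption detected after DRAM cache
```

Checking at every hop pinpoints which component corrupted the data, rather than discovering the problem only at the end of the path.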

Micron P400m Internals

The Micron P400m comes in a 2.5-inch form factor with a 7mm Z-height. This enables deployment into slim applications and blade servers.

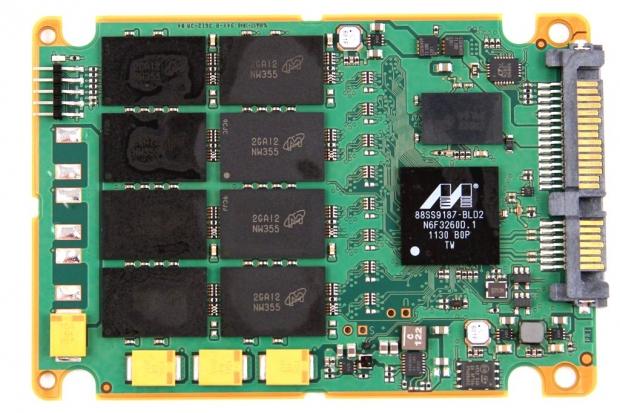

Once the case is opened, we can see that Micron uses thick thermal pads to transfer heat from the controller and NAND into the SSD's case. This creates a heatsink effect that lowers the overall temperature of the SSD, which increases the longevity of the components.

The P400m has a generous helping of overprovisioning, weighing in at 70%. This provides ample space for internal functions and the RAIN implementation.
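Overprovisioning is commonly expressed relative to user capacity, OP = (raw - user) / user. Assuming the quoted 70% follows that convention, the hidden spare area can be estimated as below; the function names are illustrative, and the results are estimates rather than published raw capacities.

```python
def raw_capacity_gb(user_gb: float, op_fraction: float) -> float:
    """Raw NAND implied by a user capacity, with OP = (raw - user) / user."""
    return user_gb * (1 + op_fraction)


def spare_share_of_raw(op_fraction: float) -> float:
    """Fraction of the raw NAND hidden from the host."""
    return op_fraction / (1 + op_fraction)


for user in (100, 200, 400):
    print(f"{user}GB user -> {raw_capacity_gb(user, 0.70):.0f}GB raw")
print(f"{spare_share_of_raw(0.70):.1%} of raw flash is spare")  # 41.2% of raw flash is spare
```

Under this convention, 70% OP means that roughly two-fifths of the physical flash is reserved for parity, garbage collection, and other internal functions.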

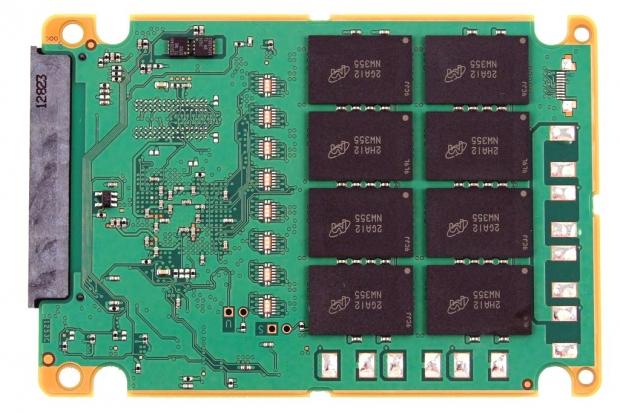

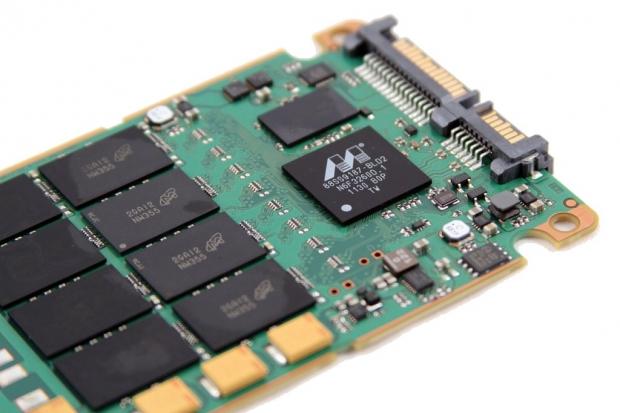

There are 16 packages of custom 25nm MLC NAND on the PCB, eight per side. All three capacity points (100, 200, and 400GB) use the same number of NAND packages with a varying die count per package: the 100GB model uses dual-die packages, the 200GB model quad-die packages, and the 400GB model eight-die packages.

This NAND is an SSD-specific design and will not be available to Micron's customers on the open market. The MLC NAND manages to increase endurance without sacrificing performance, and features an 8KiB user page size with 256 pages per block.
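NAND is programmed a page at a time but erased a whole block at a time, so the page and block figures above set the erase granularity that garbage collection works with. The arithmetic is shown below; the `pages_to_relocate` helper is a hypothetical illustration of how many still-valid pages must be copied out before a partly valid block can be erased.

```python
PAGE_SIZE_KIB = 8      # user page size quoted for the P400m's NAND
PAGES_PER_BLOCK = 256  # pages per erase block

block_kib = PAGE_SIZE_KIB * PAGES_PER_BLOCK
print(f"{block_kib} KiB = {block_kib // 1024} MiB per erase block")  # 2048 KiB = 2 MiB per erase block


def pages_to_relocate(valid_fraction: float) -> int:
    """Hypothetical: valid pages GC must copy elsewhere before erasing a block."""
    return round(PAGES_PER_BLOCK * valid_fraction)


print(pages_to_relocate(0.25))  # 64
```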

There are also four yellow capacitors along the edge of the PCB. In the event of a host power loss, these capacitors provide the power needed to flush data to the NAND. We note several open slots for more capacitors, possibly for higher-capacity versions of the P400m.

The bottom of the PCB holds the remaining eight NAND packages, along with more open mounting points for capacitors.

The Marvell 9187 SSD controller dominates the front of the PCB near the SATA connector. This controller has a long track record of reliability in the enterprise space. It runs Micron's custom-built firmware, which is designed and optimized to interact with Micron's own NAND and DRAM. The controller is flanked by a 50nm 2GB DDR2-800 DRAM module.

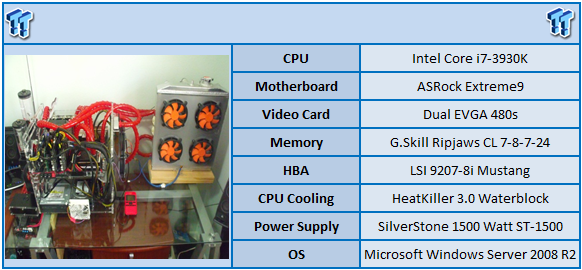

Test System and Methodology

We use a new approach to HDD and SSD testing for our Enterprise Test Bench, designed specifically to target the long-term performance of solid state storage with a high level of granularity.

Many forms of testing rely on peak and average measurements over a given time period. While these average values give a basic understanding of the performance of the storage solution, they fall short of providing the clearest possible view of the QoS (Quality of Service) of the I/O.

The problem with averages is that they do little to indicate the performance variability experienced during actual deployment of the device. The degree of variability is especially pertinent, as many applications can hang or lag while they wait for a single I/O to complete. Our testing illustrates the variability to expect in these scenarios across the measurement window, alongside the average measurements.

In reality, all storage solutions under load deliver variable levels of performance that are subject to constant change. While this fluctuation is normal, its degree is what separates enterprise storage solutions from typical client-side hardware. By reporting measurements from our workloads at one-second intervals, we can illustrate the differences between products in the consistency of their QoS. Scatter charts give readers a basic understanding of the latency distribution of the I/O stream without having to study numerous separate graphs.

Consistent latency is the goal of every storage solution, and measurements such as Maximum Latency only illuminate the single longest I/O recorded during testing. This can be misleading, as a single 'outlying I/O' can skew the view of an otherwise superb solution. Standard deviation captures the average spread of I/O latency, but it does not always illustrate the entire I/O distribution with enough granularity to provide a clear picture of system performance. We use histograms to illuminate the latency of every single I/O issued during our test runs.
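A per-I/O latency histogram is simply a count of every completed request per latency bucket. The sketch below shows the idea on synthetic data; several bucket edges match ranges quoted later in this review, but the full edge list, the sample latencies, and the function names are assumptions rather than the test bench's actual configuration.

```python
import bisect
from collections import Counter

# Bucket edges in ms. The 4-6, 6-8, 8-10, 10-20, 20-40, 80-100 and 100-200 ms
# ranges match those quoted in this review; the remaining edges are assumptions.
EDGES_MS = [0, 2, 4, 6, 8, 10, 20, 40, 60, 80, 100, 200]


def bucket_label(latency_ms: float) -> str:
    """Map one I/O latency to its half-open bucket [low, high)."""
    i = bisect.bisect_right(EDGES_MS, latency_ms) - 1
    if i == len(EDGES_MS) - 1:
        return f">={EDGES_MS[-1]}ms"
    return f"{EDGES_MS[i]}-{EDGES_MS[i + 1]}ms"


def latency_histogram(latencies_ms: list[float]) -> dict[str, tuple[int, float]]:
    """Count every I/O per bucket, along with its share of the total."""
    counts = Counter(bucket_label(x) for x in latencies_ms)
    total = len(latencies_ms)
    return {label: (n, n / total) for label, n in counts.items()}


# Synthetic latencies: two clusters plus one slow outlier.
sample = [4.5, 5.1, 5.8, 12.0, 14.2, 15.0, 16.3, 250.0]
for label, (n, share) in latency_histogram(sample).items():
    print(f"{label:>9}: {n} I/Os ({share:.1%})")
print(f"max latency: {max(sample)} ms")
```

The maximum alone reports 250 ms, while the histogram shows that seven of the eight I/Os completed in under 20 ms.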

Our testing regimen follows SNIA principles to ensure consistent, repeatable testing. We attain steady state convergence through a process that brings the device to a performance level that does not deviate more than 20% from the average speed measured during the measurement window. Forcing the device to perform read-modify-write operations for new I/O triggers all garbage collection and housekeeping algorithms, highlighting the real performance of the solution.
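The 20% convergence rule can be checked mechanically over a sliding measurement window. This sketch reads the rule literally as "every sample within 20% of the window average"; the SNIA PTS itself phrases the criterion in terms of the window's max-min range and also adds a slope check, so treat this as a simplification. The window length and the synthetic IOPS rounds are assumptions.

```python
from typing import Optional


def is_steady_state(window: list[float], tolerance: float = 0.20) -> bool:
    """True if every sample lies within `tolerance` of the window average."""
    if not window:
        return False
    avg = sum(window) / len(window)
    return all(abs(x - avg) <= tolerance * avg for x in window)


def first_steady_window(samples: list[float], window_len: int) -> Optional[int]:
    """Start index of the first window that meets the criterion, or None."""
    for start in range(len(samples) - window_len + 1):
        if is_steady_state(samples[start:start + window_len]):
            return start
    return None


# Synthetic IOPS per measurement round: FOB performance decaying into steady state.
rounds = [60_000, 45_000, 35_000, 28_000, 27_000, 26_500, 27_200, 26_800, 26_900]
print(first_steady_window(rounds, window_len=5))  # 3
```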

We include results below QD32 only to illustrate the scaling of the device. Low QD testing of enterprise-class storage solutions is of little value unless it is presented alongside higher QD results, as the explosion of virtualization in the datacenter has made high QD performance the most important metric for a storage solution.

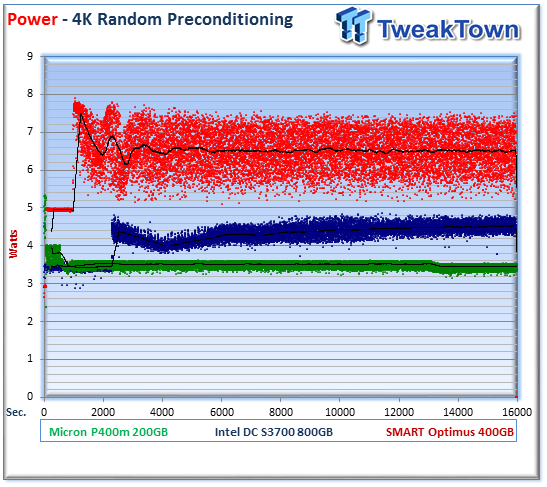

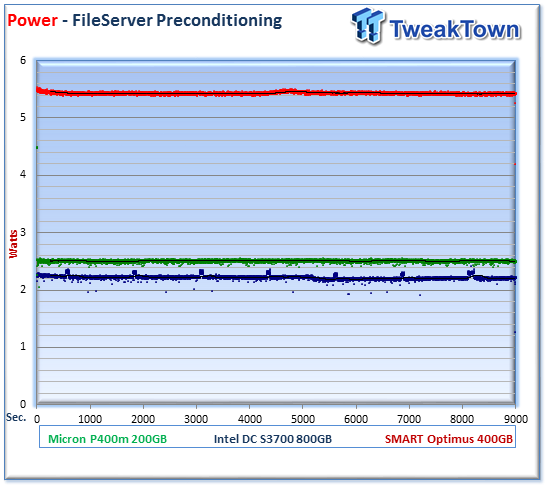

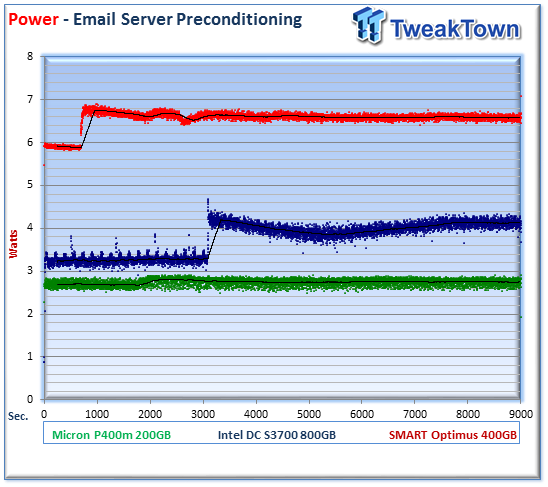

We have also begun expanded power testing, measuring power consumption throughout each of our precondition runs. These time-based measurements, taken every second, illuminate how power consumption behaves under steady state conditions. Over the life of a storage device, its power consumption can cost more than the up-front cost of the drive itself, which significantly affects the TCO of the storage solution.

The first page of results will provide the 'key' to understanding and interpreting our new test methodology.

4K Random Read/Write

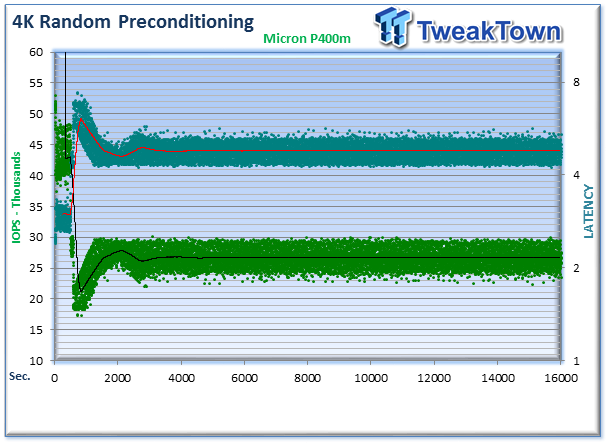

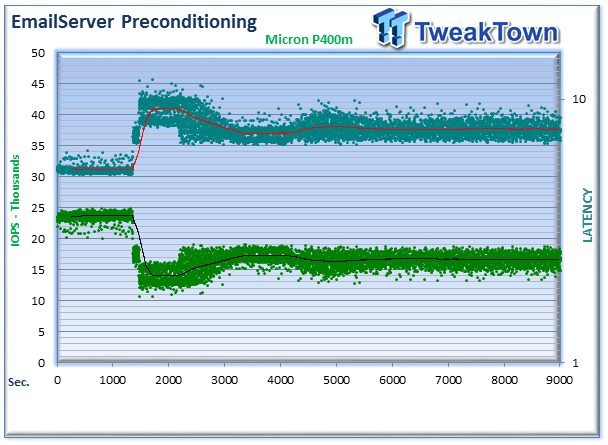

We precondition the Micron P400m for 18,000 seconds, or five hours, receiving reports on several workload performance parameters every second. We then plot this data to illustrate the drive's descent into steady state.

This chart consists of 36,000 data points. The green dots signify the IOPS during the test, and the light blue (teal) dots are the latency encountered during the test period. We place the latency data in a logarithmic scale to bring it into comparison range. This is a dual-axis chart with the IOPS on the left and the latency on the right. The lines through the data scatter are a moving average during the test. This type of testing presents standard deviation and maximum/minimum I/O in a visual manner.
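The moving-average lines can be reproduced from the one-second samples with a trailing window. A minimal sketch follows; the review does not state its window length, so the 30-second default is an assumption.

```python
from collections import deque


def moving_average(samples: list[float], window: int = 30) -> list[float]:
    """Trailing moving average over one-second samples (window length in seconds)."""
    if window < 1:
        raise ValueError("window must be at least one sample")
    buf = deque(maxlen=window)
    total = 0.0
    averages = []
    for x in samples:
        if len(buf) == window:
            total -= buf[0]  # the oldest sample is about to be evicted by append()
        buf.append(x)
        total += x
        averages.append(total / len(buf))
    return averages


print(moving_average([10, 20, 30, 40], window=2))  # [10.0, 15.0, 25.0, 35.0]
```

Keeping a running total makes each update constant-time, which matters when smoothing 18,000 samples per series.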

Note that the IOPS and latency figures are nearly mirror images of each other. This illustrates how high-granularity testing can give readers a good feel for the latency distribution simply by viewing IOPS at one-second intervals. Keep this in mind when viewing our test results below.

The histograms below provide further latency granularity. This preconditioning slope of performance occurs very few times in the lifetime of the device, and we present these results only to confirm that the tested device has reached steady state convergence.

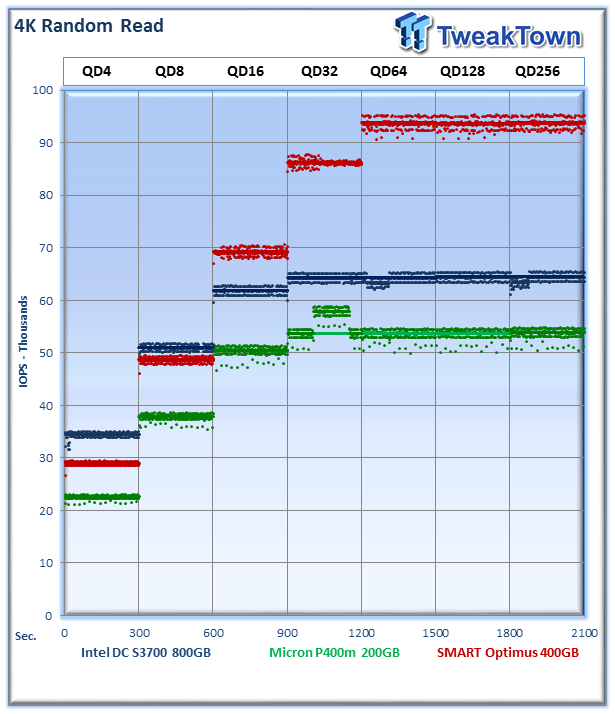

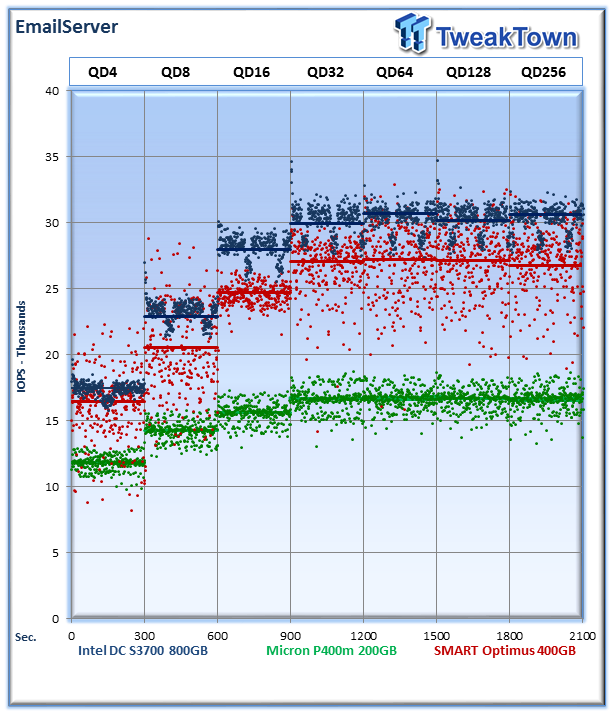

Each QD for every parameter tested includes 300 data points (five minutes of one second reports) to illustrate the degree of performance variability. The line for each QD represents the average speed reported during the five-minute interval.

4K random speed measurements are an important metric when comparing drive performance, as the hardest type of file access for any storage solution to master is small-file random. One of the most sought-after performance specifications, 4K random performance is a heavily marketed figure.

The Micron P400m provides a very tight performance range as it averages 53,847 IOPS at QD256. The Intel DC S3700 delivers an average speed of 64,533 IOPS. The SMART Optimus, with its native SAS connection, provides an average read speed of 93,860 IOPS at QD256.

We observe several dips in performance from the Intel SSD in the QD64 and QD256 range, and one period at QD32 where the P400m jumps appreciably. These events are indicative of housekeeping routines running in the background.

It is worth noting that the Micron P400m returns to its normal level of performance quickly, possibly due to the ReCAL algorithms kicking in and steadying performance. The Intel DC S3700 experiences these dips continuously on a steady cadence, as it appears to run its garbage collection algorithm at predetermined intervals. Micron's approach of scheduling garbage collection around the level of incoming I/O requests provides a more consistent level of performance.

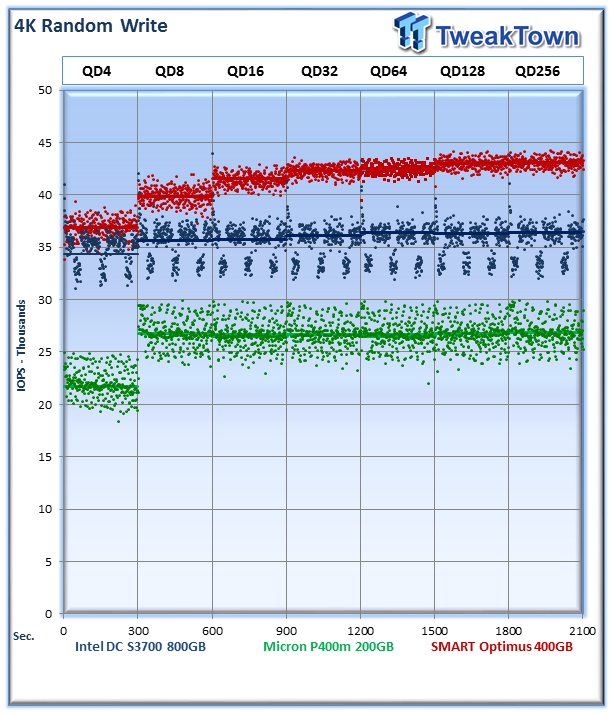

Garbage collection routines are more pronounced in heavy write workloads. The Micron delivers solid performance with very little variability, while the Intel's garbage collection routines appear to trigger at steady intervals of 90-100 seconds. The SMART Optimus provides the least variability of the three.

The scale of this chart should be taken into consideration: the Micron P400m and Intel DC S3700 each vary by only 6,000 IOPS from minimum to maximum, while the Optimus stays within a few thousand IOPS.

The Micron averages 26,815 IOPS, the Intel DC S3700 averages 36,428 IOPS, and the SMART Optimus averages 43,081 IOPS at QD 256.
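The 90-100 second garbage collection cadence noted above can be estimated from a one-second IOPS trace by finding where each dip starts and measuring the gaps between starts. The sketch below is a hypothetical analysis rather than the review's tooling; the 85%-of-median threshold and the synthetic trace are assumptions.

```python
from statistics import median


def dip_onsets(iops: list[float], threshold: float = 0.85) -> list[int]:
    """Seconds at which IOPS first drops below `threshold` x the median (start of each dip)."""
    floor = threshold * median(iops)
    onsets: list[int] = []
    in_dip = False
    for t, x in enumerate(iops):
        below = x < floor
        if below and not in_dip:
            onsets.append(t)
        in_dip = below
    return onsets


def dip_intervals(onsets: list[int]) -> list[int]:
    """Gaps in seconds between consecutive dip onsets."""
    return [b - a for a, b in zip(onsets, onsets[1:])]


# Synthetic one-second trace: ~36,000 IOPS with a 5-second slump every 95 seconds.
trace = [36_000.0] * 400
for start in range(50, 400, 95):
    for t in range(start, min(start + 5, 400)):
        trace[t] = 29_000.0
onsets = dip_onsets(trace)
print(onsets, dip_intervals(onsets))  # [50, 145, 240, 335] [95, 95, 95]
```

Using the median as the reference keeps the brief dips from dragging down the baseline the way a mean would.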

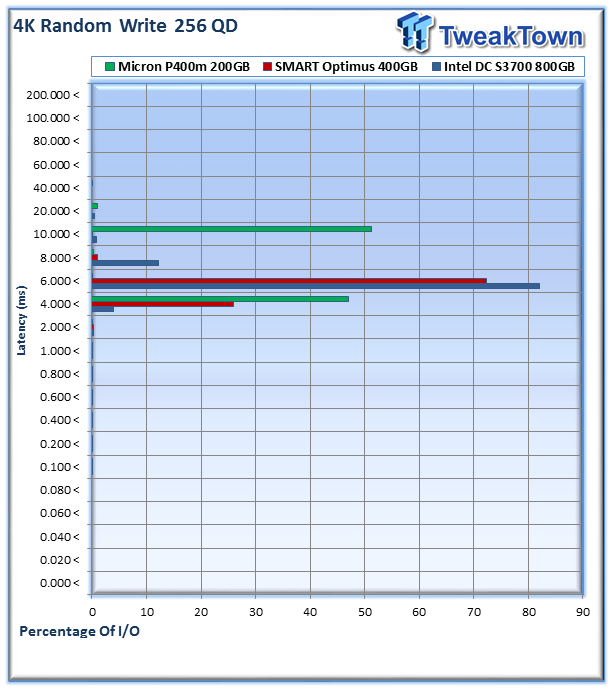

The Micron P400m serves 46.9% of requests (3,784,211 I/Os) within the 4-6ms range and 51% (4,126,898 I/Os) in the 10-20ms range. This higher latency is consistent with the P400m's slower write speed compared to the other two SSDs, but we do not note any large spikes or variances in performance.
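The IOPS and latency results are linked by Little's Law: with a fixed number of outstanding I/Os, average latency equals queue depth divided by throughput. If we assume the queue stays full at QD256 (an approximation), the P400m's 4K random write average of 26,815 IOPS implies a mean latency of about 9.5 ms. That value falls between the 4-6ms and 10-20ms clusters in the histogram above. This is a cross-check, not how the histogram was produced.

```python
def mean_latency_ms(queue_depth: int, iops: float) -> float:
    """Little's Law (L = lambda * W) rearranged: W = QD / IOPS, in milliseconds."""
    return queue_depth / iops * 1000


print(f"{mean_latency_ms(256, 26_815):.2f} ms")  # 9.55 ms
```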

The power consumption of the Micron P400m, measured during our precondition run, is very good. It maintains steady power consumption over the course of the precondition run (4.5 hours). The Micron averages 3.5 Watts, compared to 4.36 Watts for the Intel and 6.51 Watts for the SMART Optimus. The P400m scores 7,624 IOPS per Watt, the Intel scores 8,336 IOPS per Watt, and the Optimus scores 6,617 IOPS per Watt.
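The efficiency figures used throughout this review are throughput divided by average power. The helper below is a minimal sketch with an illustrative name. The same function also covers the MB/s per Watt figures in the sequential tests. Recomputing from the rounded averages quoted above can differ slightly from the published ratios (for example, for the P400m), presumably because the published figures were derived from unrounded data.

```python
def perf_per_watt(throughput: float, watts: float) -> float:
    """Efficiency: IOPS (or MB/s) delivered per Watt of average power."""
    if watts <= 0:
        raise ValueError("average power must be positive")
    return throughput / watts


# 4K random write averages at QD256 and average power, as quoted above.
results = {
    "Micron P400m": (26_815, 3.5),
    "Intel DC S3700": (36_428, 4.36),
    "SMART Optimus": (43_081, 6.51),
}
for drive, (iops, watts) in results.items():
    print(f"{drive}: {perf_per_watt(iops, watts):,.0f} IOPS per Watt")
```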

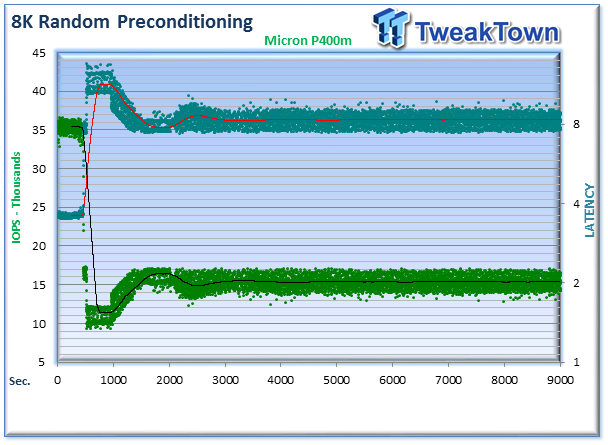

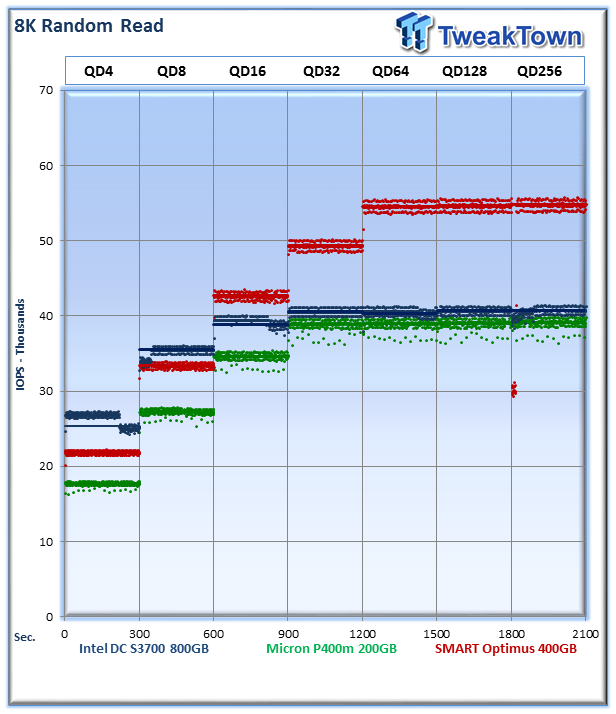

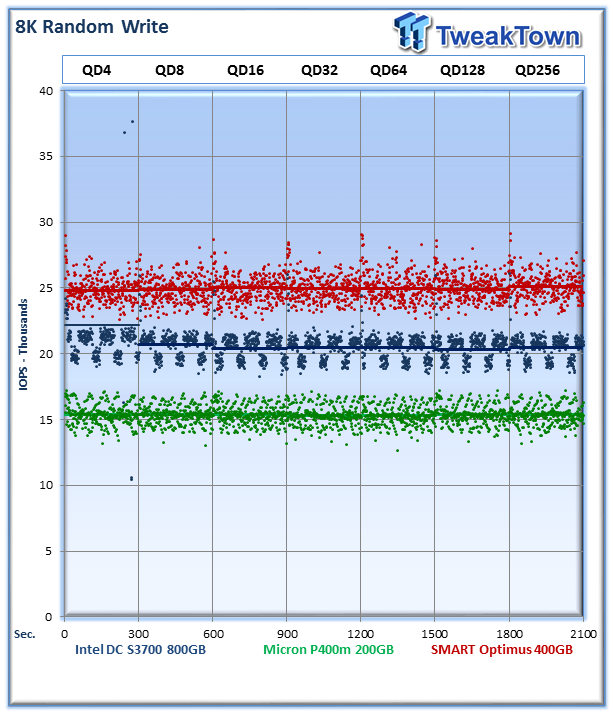

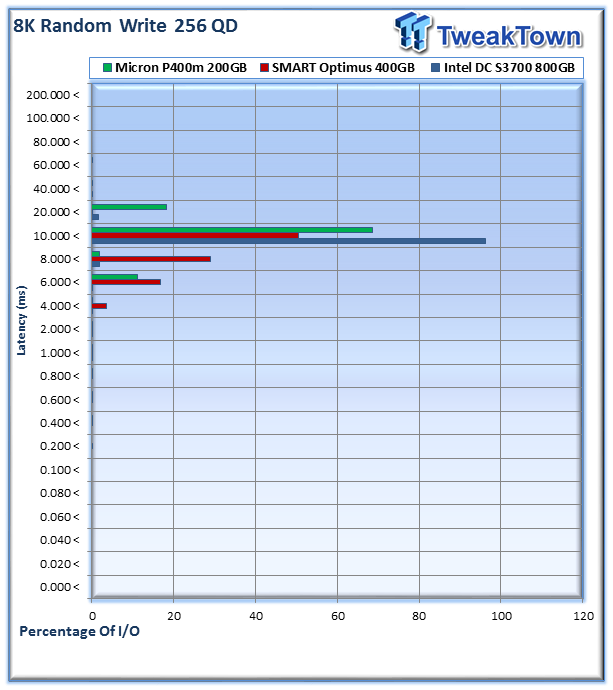

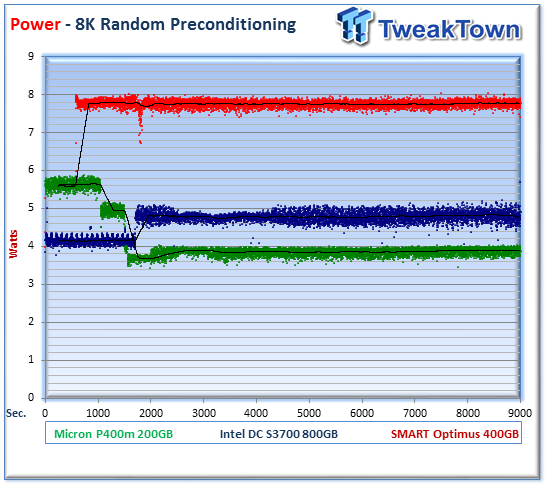

8K Random Read/Write

8K random read and write speed is not a metric typically tested for consumer use, but it is an important aspect of performance in enterprise environments. With several different workloads relying heavily on 8K performance, we include this test as a standard part of each evaluation. Many of our Server Emulations below also test 8K performance with various mixed read/write workloads.

At QD256, the P400m averages 39,122 IOPS in 8K random reads, compared to 40,766 IOPS for the DC S3700 and 54,767 IOPS for the Optimus.

In 8K random writes at QD256, the Micron P400m averages 15,394 IOPS, the DC S3700 20,475 IOPS, and the Optimus 25,096 IOPS.

The Micron P400m serves 68% of I/O requests (3,155,955 I/Os) in the 10-20ms range and 18% (842,946 I/Os) in the 20-40ms range. The SAS-powered Optimus provides the lowest overall latency, dipping as low as 4ms.

The Micron P400m averaged 3.89 Watts during the precondition run, the Intel averaged 4.81 Watts, and the Optimus averaged 7.7 Watts. This gives the Micron 3,954 IOPS per Watt, the Intel 4,252 IOPS per Watt, and the Optimus 3,228 IOPS per Watt.

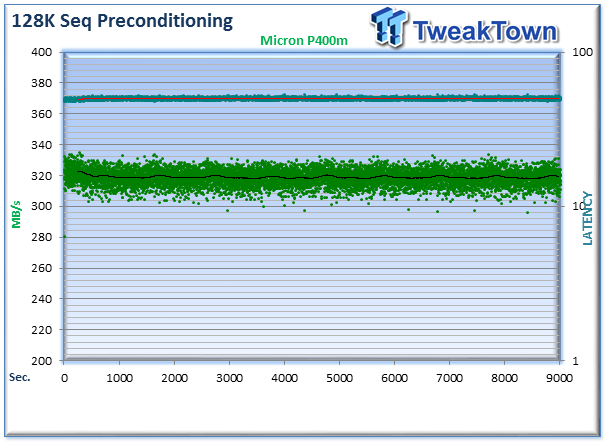

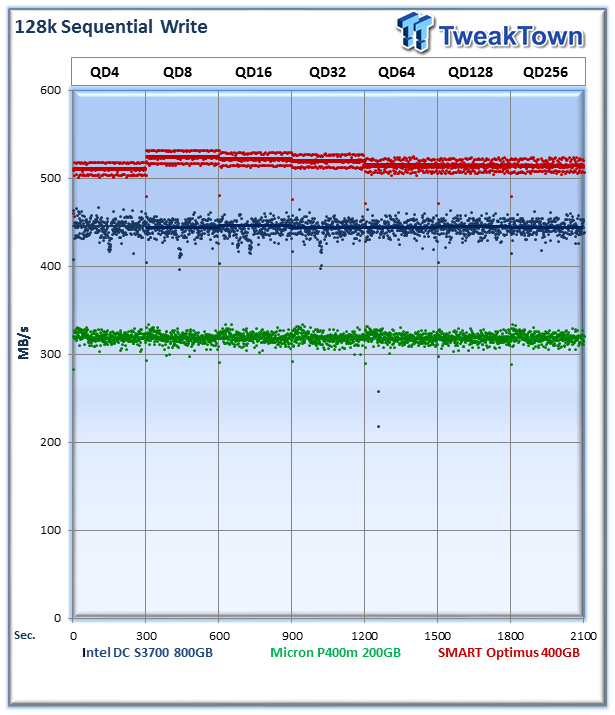

128K Sequential Read/Write

The 128K sequential speeds reflect the maximum sequential throughput of the SSD using a realistic file size actually encountered in an enterprise scenario.

The Micron averaged 413MB/s in sequential reads at QD256, the Intel DC S3700 averaged 473MB/s, and the Optimus averaged 526MB/s. All three SSDs exhibit very consistent read performance, though the Intel tends to slip back into its garbage collection mode intermittently.

The Micron averages 318MB/s in 128K sequential write speed, the DC S3700 averages 444MB/s, and the Optimus weighs in at 514MB/s.

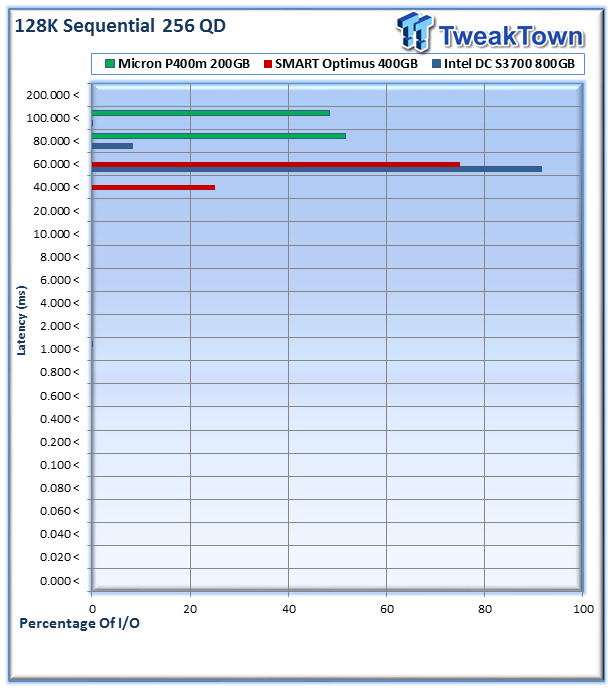

The Micron P400m provides 51.6% (394,224 I/Os) in the 80-100ms range, and 48% (369,021 I/Os) in the 100-200ms range.

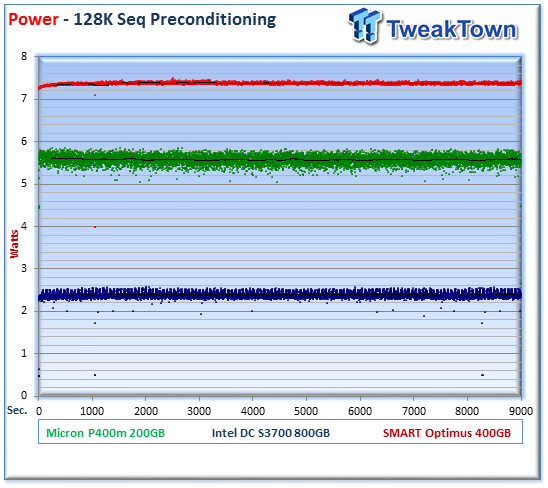

The Micron P400m averages 5.57 Watts during sequential writes, the Intel averages 2.39 Watts, and the Optimus averages 7.37 Watts. The P400m delivers 57MB/s per Watt, the DC S3700 185MB/s per Watt, and the Optimus 69MB/s per Watt.

OLTP and Webserver

OLTP

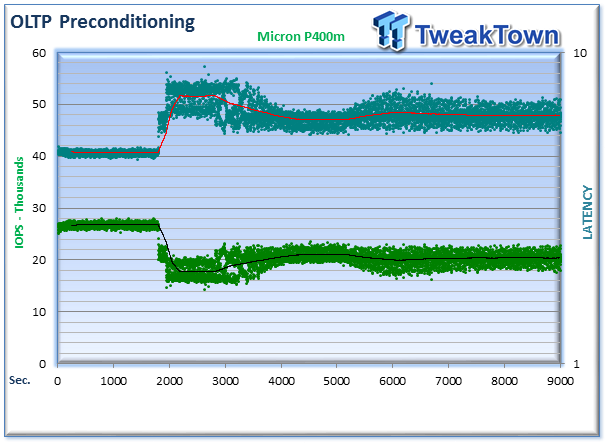

This test emulates Database and On-Line Transaction Processing (OLTP) workloads. OLTP is in essence the processing of transactions, such as credit card payments and high-frequency trading in the financial sector. With their low latency and high random workload performance, enterprise SSDs are uniquely well suited to the financial sector. Databases are the bread and butter of many enterprise deployments. These demanding 8K random workloads, with a 66% read and 33% write distribution, can bring even the highest performing solutions down to earth.
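The OLTP profile boils down to three parameters: block size, random access, and the read/write mix. A hypothetical generator for such a mix is sketched below. It is not the tool used for this review, and the 100GB span, seed, and function name are assumptions. The Emailserver profile's 50/50 split maps onto the same parameters with `read_fraction=0.5`.

```python
import random
from dataclasses import dataclass
from typing import Iterator


@dataclass
class IORequest:
    op: str      # "read" or "write"
    offset: int  # byte offset, aligned to the block size
    size: int    # bytes


def mixed_random_workload(
    n: int,
    read_fraction: float,
    block_size: int = 8192,
    span_bytes: int = 100 * 10**9,
    seed: int = 0,
) -> Iterator[IORequest]:
    """Yield n block-aligned random requests with the given read/write mix."""
    rng = random.Random(seed)
    num_blocks = span_bytes // block_size
    for _ in range(n):
        op = "read" if rng.random() < read_fraction else "write"
        yield IORequest(op, rng.randrange(num_blocks) * block_size, block_size)


requests = list(mixed_random_workload(10_000, read_fraction=0.66))
reads = sum(r.op == "read" for r in requests)
print(f"{reads / len(requests):.1%} reads")
```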

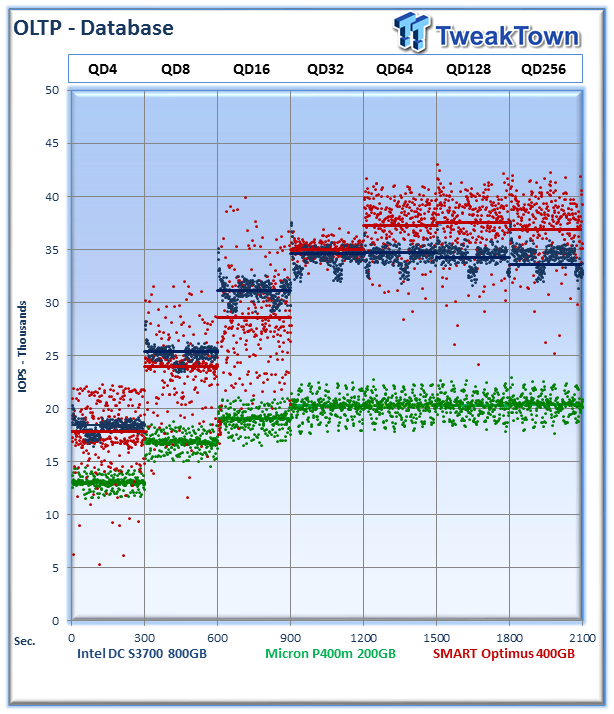

The P400m chugs away with very little variability in this mixed workload, and the Intel also retains its clustered performance. The Optimus does begin to show signs of increased variability under this type of workload. The P400m averages 20,413 IOPS at QD256, the DC S3700 averages 33,546 IOPS, and the Optimus averages 36,837 IOPS.

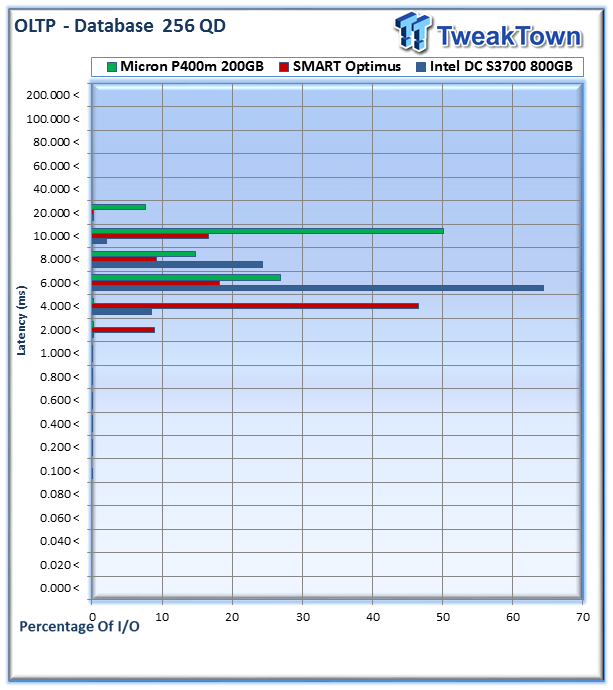

The P400m provides 26% (1,641,970) of requests within the 6-8ms range, 14% (905,642 I/Os) in the 8-10ms range, and 50% (3,065,305 I/Os) in the 10-20ms range.

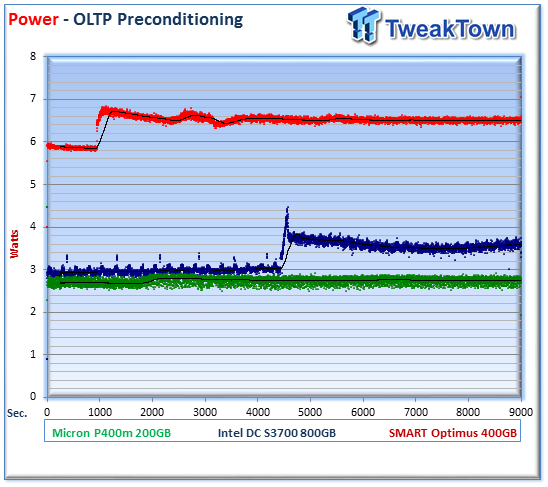

The P400m averages 2.75 Watts, the Intel averages 3.56 Watts and the Optimus averages 6.5 Watts. This gives the Micron P400m 7,422 IOPS per Watt, the Intel 9,418 IOPS per Watt, and the Optimus 5,659 IOPS per Watt.

Webserver

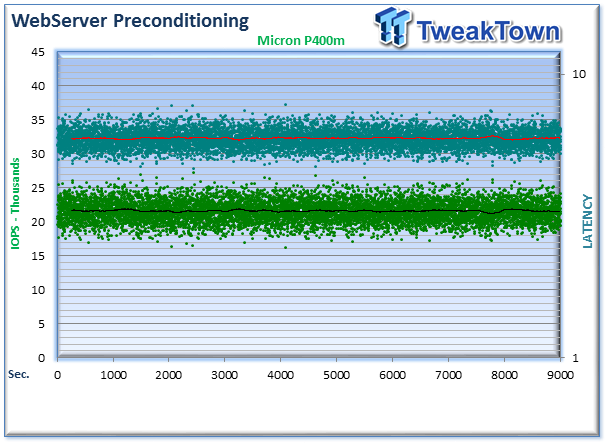

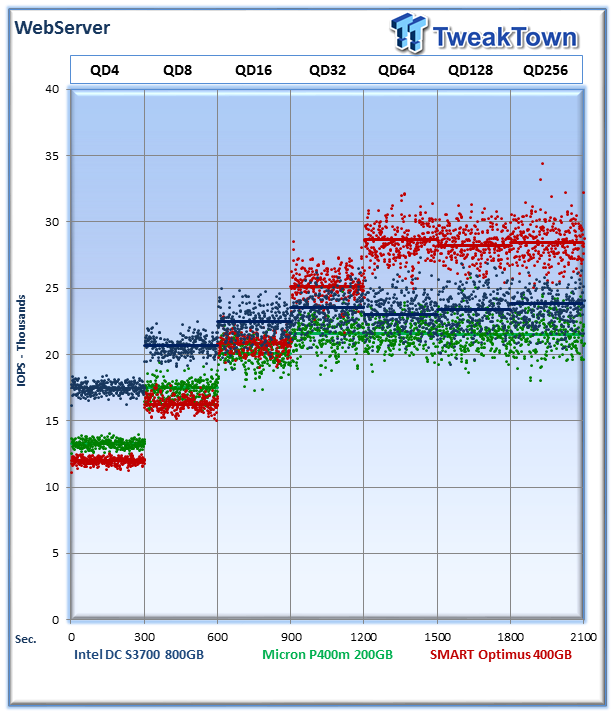

The Webserver profile is a read-only test with a wide range of file sizes. Web servers are responsible for generating content for users to view over the internet, much like the very page you are reading. The speed of the underlying storage system has a massive impact on the speed and responsiveness of the server that is hosting the websites, and thus the end user experience.

The P400m averages 21,533 IOPS at QD256, the Intel averages 33,546 IOPS, and the Optimus averages 36,837 IOPS. All SSDs exhibit some variance, but taking into consideration the scale of the graph it is minimal. The P400m pulls up nearly even with the Intel, which apparently abandons its garbage collection cadence during this test. The Optimus again pulls ahead in overall speed.

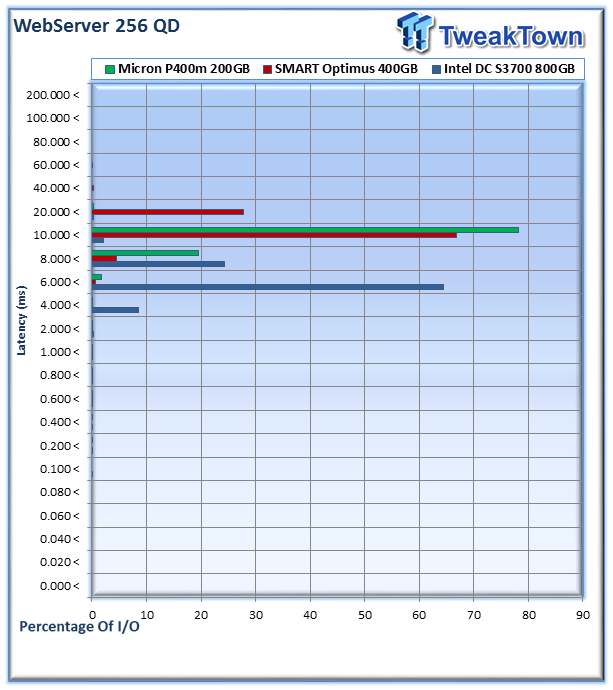

The Micron P400m delivers 78% of I/O in the 10-20ms range, and 19% in the 8-10ms range.

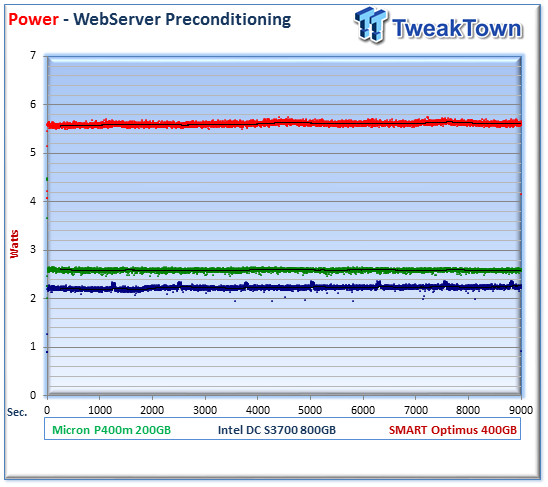

The Micron P400m averages 2.59 Watts, the Intel averages 2.24 Watts, and the Optimus averages 5.61 Watts. This gives the P400m 8,304 IOPS per Watt, the Intel an average of 10,600 IOPS per Watt, and the Optimus 5,071 IOPS per Watt.

Fileserver and Emailserver

Fileserver

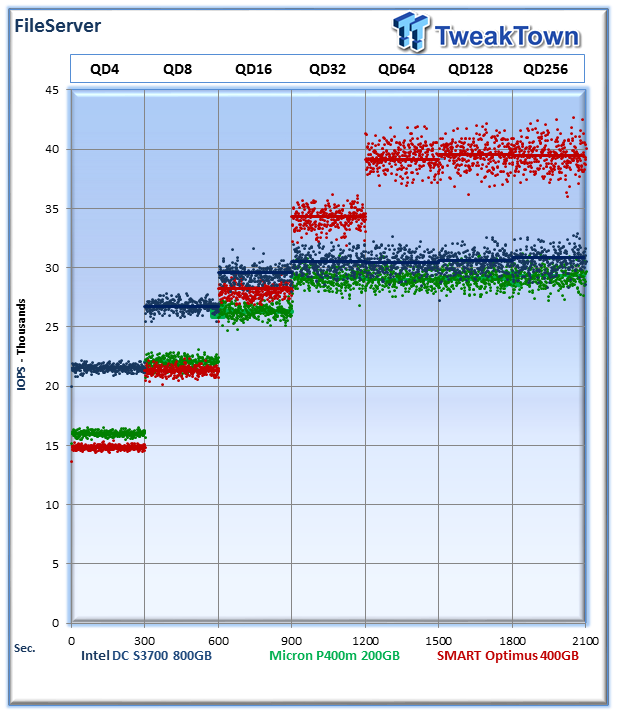

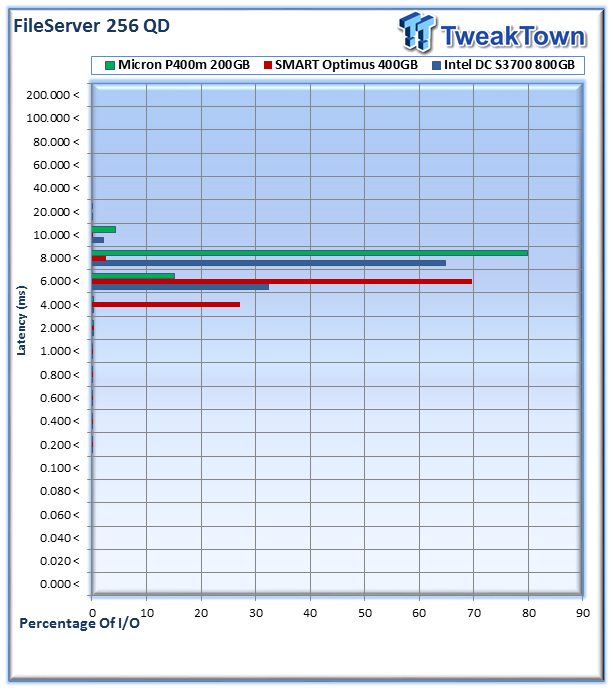

The File Server profile represents typical file server workloads. This profile tests a wide variety of different file sizes simultaneously, with an 80% read and 20% write distribution.

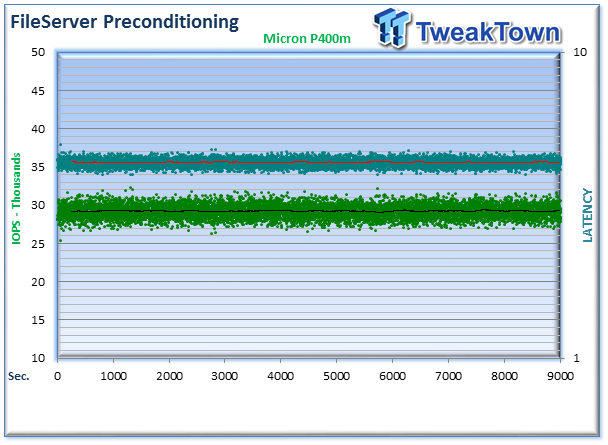

The P400m and the Intel score very closely in this test, while the SAS Optimus pulls ahead in overall speed. The P400m averages 29,311 IOPS at QD256, the DC S3700 averages 30,888 IOPS, and the Optimus averages 39,434 IOPS.

The P400m delivers remarkable consistency in this workload, with 79% of I/O (6,990,715) in the 8-10ms range, and 15% (1,325,555) in the 6-8ms range.

The P400m averages 2.76 Watts, the Intel averages 2.2 Watts, and the Optimus averages 5.41 Watts. The P400m gives 10,593 IOPS per Watt, the Intel provides 14,040 IOPS per Watt, and the Optimus weighs in at 7,276 IOPS per Watt.

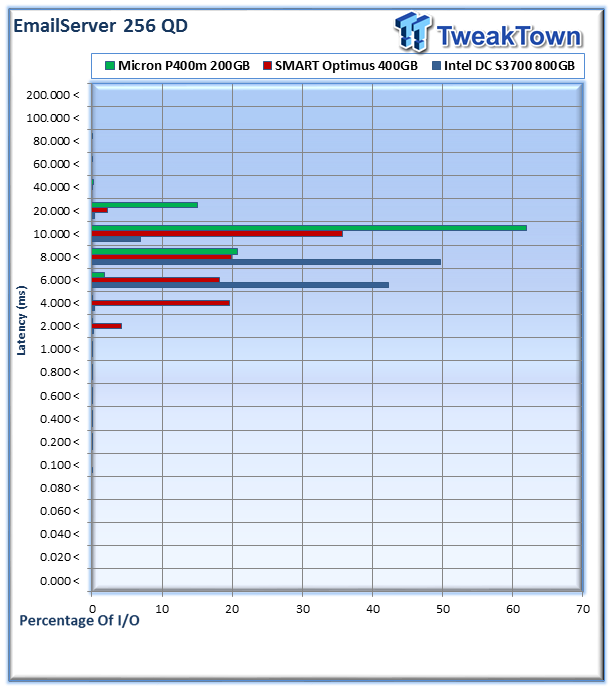

Emailserver

The Emailserver profile is a very demanding 8K test with a 50% read and 50% write distribution. It is indicative of the solution's performance in heavy write workloads.

The Micron averages 16,609 IOPS at QD256, the Intel averages 30,632 IOPS, and the Optimus averages 26,762 IOPS.

The P400m serves 62% of I/O (3,084,683 I/Os) in the 10-20ms range, 20% (1,029,745) in the 8-10ms range, and 15% (750,312) in the 20-40ms range.

The P400m averages 2.74 Watts, the Intel DC S3700 4.12 Watts, and the Optimus 6.58 Watts. This works out to 6,041 IOPS per Watt for the Micron P400m, 7,425 IOPS per Watt for the Intel, and 4,066 IOPS per Watt for the Optimus.

Final Thoughts

Micron is working to diversify beyond being a memory-only company as it moves further into the SSD market. With an increasingly diverse portfolio of consumer and enterprise storage solutions, Micron continues to push this evolution to the next level.

The most critical aspect of any storage solution is the protection of user data. Equipment failures and loss of data can result in array rebuilds and system downtime. In many cases, failures can even lead to excessive use of internal data networks as administrators clamor to restore snapshots. Data loss or equipment failure is always costly in one form or another, no matter the circumstances.

In enterprise environments, reliability is the most sought-after characteristic of any component, trumping overall performance in all but niche applications. These niche deployments, a very small slice of the overall pie, are increasingly moving to PCIe application accelerators for the ultimate in performance. The majority of SSD deployments in the datacenter revolve around traditional 2.5-inch form factor SSDs. For mainstream enterprise applications, the real focus is not king-of-the-hill specifications, but enhanced reliability, consistent performance and endurance.

The Micron P400m exhibited consistent performance, and its 10 DWPD of endurance makes it a durable SSD. The low power requirements of the P400m lend themselves well to managing long-term TCO; its power consumption easily bests any HDD, and many SSDs, on the market.

One area we would like to see improved is toolbox functionality. Providing SMART data and drive management tools in an easy-to-use package would be a value-added proposition for customers. Micron delivered this with the Real SSD Manager for the P320h, but does not yet offer this type of utility for its traditional form factor SSDs.

Micron has provided an almost bulletproof data protection solution with the combination of techniques utilized in its XPERT technology suite. Several internal mechanisms work to provide increased endurance, but Micron takes it a step further by employing their RAIN solution. This provides data redundancy at the device level, providing yet another safety net in the event of an uncorrectable error.

While RAIN does require more space to utilize its parity scheme, Micron has employed a staggering 70% overprovisioning with the P400m. Micron has integrated more techniques and IP into the SSD aimed at providing redundancy and full data path protection than many mainstream enterprise SSDs we have tested.

The Micron P400m is already qualified at major OEMs and available for purchase, and Micron backs it with a five-year warranty. While there are certainly faster options, for SATA deployments where protecting data is the top priority, we expect many to choose the Micron P400m and its brilliant XPERT technology.