Science, Space, Health & Robotics News - Page 52

Pentagon UFO whistleblower claims US government has covered up aliens murdering people

Former intelligence official David Grusch has sounded the alarm on what he describes as secret US military programs that involve officials recovering a crash-landed alien spacecraft that contained non-human intelligent lifeforms.

Grusch has appeared in a few interviews where he outlined his claims to the best of his ability, saying that there are secret government programs being hidden from Congress. Additionally, Grusch claims he has provided proof of these secret programs, which involved the US military recovering a non-human spacecraft and encountering non-human intelligence. This proof was sent to both the Inspector General and Congress.

One of the most shocking claims made by Grusch came during a NewsNation exclusive interview, when he was asked if human beings have been harmed or killed by a non-human intelligence. Grusch said, "While I can't get into the specifics because that would reveal certain US classified operations, I was briefed by a few individuals on the program that there were malevolent events like that."

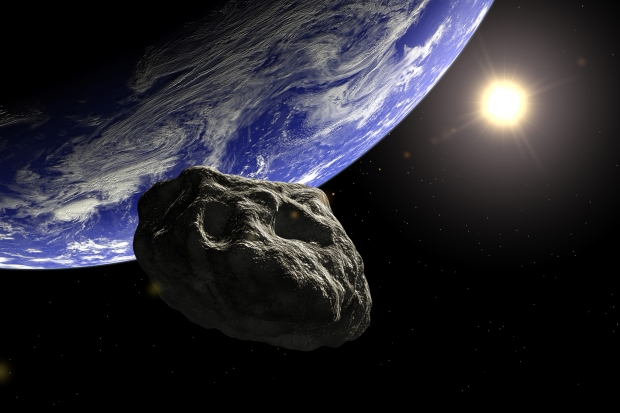

NASA database confirms 'potentially hazardous' asteroid will approach Earth today

NASA's Center for Near Earth Object Studies (CNEOS) has confirmed a space rock that's between 1,214 and 2,727 feet in diameter will approach Earth today.

The asteroid, which was originally identified in 1994 by the Spacewatch group working at Kitt Peak Observatory in Arizona, is now on its way to making its closest approach to Earth, coming within 1.8 million miles of our planet, or approximately eight times the distance between Earth and the moon. According to NASA projections, the asteroid named 1994 XD will be at its closest point to Earth at 9 pm EDT on June 12.
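As a quick sanity check on the distance comparison above, the short sketch below converts the quoted closest approach into multiples of the Earth-moon distance. The mean lunar distance of 238,855 miles and the helper name `miles_to_lunar_distances` are assumptions added for illustration; they do not come from the NASA data.

```python
# Mean Earth-moon distance in miles (an assumed average; the real
# distance varies over the course of the moon's orbit).
MEAN_LUNAR_DISTANCE_MILES = 238_855


def miles_to_lunar_distances(miles: float) -> float:
    """Express a distance in miles as a multiple of the mean Earth-moon distance."""
    return miles / MEAN_LUNAR_DISTANCE_MILES


# Closest approach quoted in the article.
closest_approach_miles = 1_800_000

lunar_distances = miles_to_lunar_distances(closest_approach_miles)
print(f"{lunar_distances:.1f} lunar distances")
```

Under that assumption the result lands between seven and eight lunar distances, in line with the article's "approximately eight times" figure.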

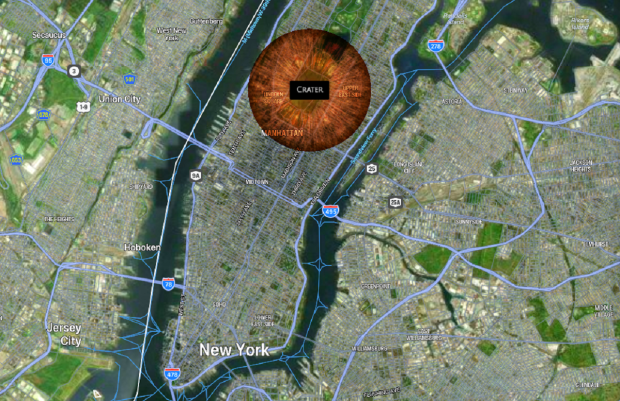

Despite this asteroid's size, the goliath space rock is moving at extreme speeds, with recent measurements indicating a speed of 48,032 mph. Combining the asteroid's speed with an assumed diameter of 1,500 feet, we can predict how much damage such an object would create if it were to collide with the surface of Earth. Using the Asteroid Launcher simulation website and plugging in a 1,500-foot diameter, a speed of 48,000 mph, an impact angle of 45 degrees, and Central Park, New York, as the impact site, it's estimated that the impact would vaporize 222,286 people and carve a 1,390-foot-deep crater.
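For a rough sense of the energy involved, the sketch below estimates the kinetic energy of a spherical asteroid from the diameter and speed quoted above. It is a back-of-the-envelope calculation, not a reproduction of how the Asteroid Launcher site works. The density of 3,000 kg/m³ (a typical stony value), the spherical shape, and the function name `impact_energy_megatons` are all assumptions added for illustration.

```python
import math

FEET_TO_METERS = 0.3048
MPH_TO_METERS_PER_SECOND = 0.44704
JOULES_PER_MEGATON_TNT = 4.184e15


def impact_energy_megatons(diameter_ft: float, speed_mph: float,
                           density_kg_m3: float = 3000.0) -> float:
    """Kinetic energy of a spherical asteroid, in megatons of TNT.

    The default density is an assumed value for a stony body.
    """
    radius_m = diameter_ft * FEET_TO_METERS / 2
    volume_m3 = (4 / 3) * math.pi * radius_m ** 3
    mass_kg = density_kg_m3 * volume_m3
    speed_m_s = speed_mph * MPH_TO_METERS_PER_SECOND
    energy_joules = 0.5 * mass_kg * speed_m_s ** 2  # KE = 1/2 * m * v^2
    return energy_joules / JOULES_PER_MEGATON_TNT


# Inputs quoted in the article: 1,500 ft diameter at 48,000 mph.
energy_mt = impact_energy_megatons(1_500, 48_000)
print(f"Roughly {energy_mt:,.0f} megatons of TNT")
```

With these assumptions the estimate comes out at several thousand megatons of TNT. Because mass scales with the cube of the diameter, using the upper end of the 1,214 to 2,727 foot range would raise the figure considerably.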

Scientists will use AI to create a revolutionary, first-of-its-kind map of the human brain

Researchers are aiming to generate the world's first brain atlas that will hopefully assist clinicians and scientists in determining why diseases such as Alzheimer's occur.

In a new partnership between the Allen Institute for Brain Science and Amazon Web Services (AWS), using funding from the National Institutes of Health, researchers are aiming to create the first map of the brain using artificial intelligence. According to the press release, the team will use AI-powered tools to create a map based on single-cell genomics technologies, which can measure genes within individual brain cells and identify cognitive functions through these genes.

The press release states that through the analysis of these cells, researchers will be able to create a platform that will integrate information on all mammalian biological systems. The brain has approximately 200 billion cells, and getting an AI to scan each of these cells, organize the data, and store it in a server is no easy task.

UFO crash lands in Las Vegas backyard, police release bodycam footage

Multiple news outlets have reported on a family that claimed a UFO crash landed in their backyard, and the spacecraft contained non-human subjects that stood as tall as 10 feet.

Authorities responded to a 911 call made on May 1 by a family in Las Vegas reporting that a spacecraft had crash landed in their backyard. The 911 audio has been released by police, and you can hear the caller recounting the events, starting by saying he was working on his truck in his backyard with his brother and dad when they saw an object fall out of the sky and land in their big lot. The caller recounts the object having lights, and when it crash landed, they all felt a "big impact".

The caller went on to say they began to hear "a lot of footsteps near us", and then they saw a lifeform inside the spacecraft that had "big eyes" and was "looking right at us". Additionally, the caller said that these creatures were massive, measuring anywhere between eight and ten feet in height. One of them was spotted outside of the "equipment," and the other was spotted inside the craft.

NASA responds to claims the government has recovered an alien spaceship with pilots inside

The newest viral story involving aliens comes from whistleblower David Charles Grusch, a 36-year-old decorated former combat officer in Afghanistan, and a veteran of the National Geospatial-Intelligence Agency (NGA) as well as the National Reconnaissance Office (NRO).

As reported yesterday, Grusch told The Debrief that the US government has recovered an alien spacecraft that contained pilots, withheld this information from Congress, and is actively covering up the program. According to Grusch and other intelligence officials, both retired and active, who vouch for his story, these events are still ongoing. The Guardian has also reported the story, with additional information that wasn't included in The Debrief's article.

The first addition is that NASA has gone on record in response to Grusch's story, which is making waves.

Scientists discover a 'fake moon' has been following Earth for 2,000 years

Space is a strange and interesting place, and while scientists can look millions of light-years into the universe's distant past, sometimes they don't have to look very far to start scratching their heads.

In March of this year, a team of astronomers using the Pan-STARRS observatory in Hawaii discovered an object dubbed 2023 FW13. The scientists first thought this object was orbiting Earth, but follow-up observations confirmed that it was actually an asteroid orbiting the Sun. According to the space news website Sky & Telescope, this asteroid is orbiting the Sun at a very similar pace to Earth, with our planet playing no role in the motion of the object or its orbit.

Alan Harris, a scientist specializing in near-Earth objects at the Space Science Institute, spoke to Sky & Telescope and said that considering the newfound characterization of the asteroid, it's technically called a "quasi-moon", or a "fake moon", as it's not associated with Earth other than by chance. So, what makes this fake moon special? Typically, quasi-moons only trail planets for several decades before separating out into other regions of space.

Government whistleblower says US has recovered a 'non-human' spacecraft with alien 'pilots'

A new report by The Debrief has cited a former intelligence official claiming that he's provided Congress with information on a downed "non-human" spacecraft that contained dead "pilots."

Firstly, it should be noted that none of the following information has been confirmed by the US government or any other official body, and these are direct claims from the whistleblower. The whistleblower's name is David Grusch, an Air Force veteran and former member of the National Geospatial-Intelligence Agency, who claims the information he provided Congress is "proof" of the recovery of objects of "exotic origin (non-human intelligence, whether extraterrestrial or unknown origin)."

The descriptions of these objects were based on vehicle morphologies and material science testing, which included atomic arrangements and radiological signatures. Grusch explained that the recovered material "includes intact and partially intact vehicles. These are retrieving non-human origin technical vehicles, call it spacecraft if you will, non-human exotic origin vehicles that have either landed or crashed."

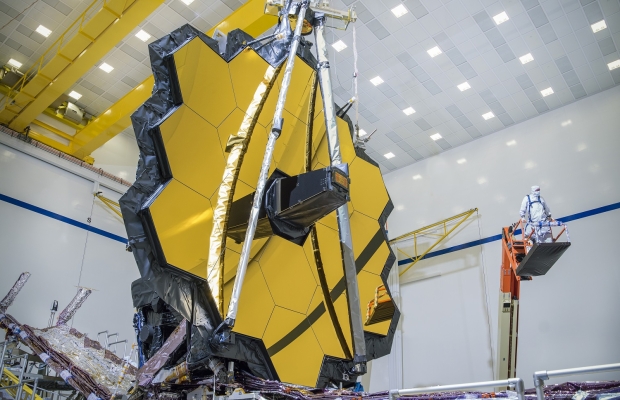

NASA's Webb peers into the heart of distant galaxy revealing its secrets

NASA's James Webb Space Telescope (JWST) continues to shock scientists and astronomers with the incredible science the observatory can conduct from its vantage point of 1 million miles away from Earth at what is called the second Lagrange point.

NASA intentionally sent Webb to Lagrange Point 2 for a few different coinciding reasons. This position in space enables Webb to stay in line with Earth as it orbits the Sun while also giving it the ability to see further into space than any other telescope. Since arriving at its destination, Webb has been conducting cutting-edge science, and according to a newly released image on the European Space Agency (ESA) website, Webb isn't by any means slowing down its scientific escapade as it has recently imaged a barred spiral galaxy dubbed NGC 5068.

The ESA explains on its website that Webb's image showcases the bright long tendrils of gas and stars of NGC 5068, which is located approximately 17 million light-years away from Earth within the constellation Virgo. To give a perspective of just how far 17 million light-years is, the Milky Way's neighborhood of galaxies, called the Local Group, extends roughly 5 million light-years from us, which puts NGC 5068 well out of our galactic neighborhood.

NASA expects it'll spend $6 billion on a rocket that's six years behind schedule

NASA's Inspector General has released a new audit on NASA's management of the Space Launch System rocket, which is slated to be the transportation astronauts will take to the Moon.

The audit states that NASA will spend as much as $93 billion on the Artemis Moon Program by the end of 2025, with $22 billion being spent just in 2022 on the development of the Space Launch System (SLS) rocket. Notably, the audit states that mounting costs can be attributed to development hurdles and missteps, resulting in the SLS rocket being behind schedule by six years. Furthermore, the audit states that the space agency expects both these costs and the SLS schedule delays to grow.

The development of the SLS rocket has already pushed NASA's budget for its Artemis Moon Program over by $6 billion, and according to the audit, these schedule delays and mounting costs can be traced back to poor assumptions about reusing salvaged engines from Space Shuttle launches. More specifically, the SLS rocket uses four RS-25 engines for every launch, which are either destroyed or abandoned entirely after launch.

Scientists say they've found Bible chapter hidden for more than 1,500 years

Scientists using ultraviolet photography have made an intriguing discovery in the field of biblical studies.

They have found an ancient version of a chapter from the Bible that had been hidden beneath another section of text for over 1,500 years. Historian Grigory Kessel from the Austrian Academy of Sciences unveiled this groundbreaking finding in an article published in the prestigious journal New Testament Studies.

According to Kessel, "The manuscript offers a 'unique gateway' for researchers to understand the earliest phases of the Bible's textual evolution. It shows some differences from modern translations of the text." Kessel used ultraviolet photography to reveal the earlier text concealed beneath three layers of words on a palimpsest, an ancient manuscript that was often reused.