Video Cards & GPUs News - Page 133

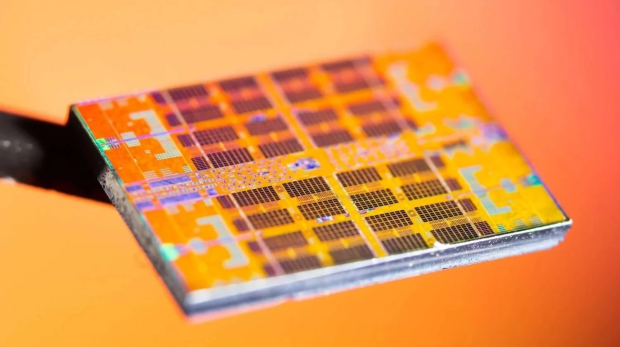

TSMC's next-gen 3nm yields are so good, production is starting earlier

It looks like we'll be enjoying 3nm products sooner than we thought, with Taiwan Semiconductor Manufacturing Company (TSMC) seeing yield improvements with its N3e production.

The news is coming from Retired Engineer, who tweeted that TSMC's new 3nm node is seeing yield improvements, and that volume production on N3e may start in Q2 2023 (April-June). Morgan Stanley has reportedly checked with equipment vendors, which have said TSMC could "freeze" the N3e process flow, meaning the design parameters are locked in -- kinda like TSMC is saying its N3e has 'Gone Gold' and is ready for production -- yeah, that works.

Retired Engineer explained: "N3e production yield improves; schedule being pulled in: Our recent checks with equipment vendors suggest that TSMC may freeze the N3e process flow sooner - by the end of this March. This means that volume production of N3e may start in 2Q23, around a quarter ahead of the original schedule of 3Q23".

NVIDIA closes can of whoop ass in Russia, halts sales over Ukraine

NVIDIA has now halted sales of its products to Russia over the ongoing conflict in Ukraine, joining the likes of its competitors AMD and Intel.

An NVIDIA spokesperson confirmed the news to PCMag, but didn't give any reasoning behind it. It's more of a "yeah, we're not selling our gear to them anymore" kind of thing. I'm not going to get into the politics of it all, as that's not our place to report on these things at TweakTown, but NVIDIA is a big deal -- so too are AMD and Intel -- and now, no more GeForce GPUs for Russian gamers.

The NVIDIA spokesperson said: "We are not selling into Russia".

NVIDIA GeForce RTX 3090 Ti reportedly launches March 29, yeah sure

Man, what an absolute mess the launch of NVIDIA's GeForce RTX 3090 Ti has been so far... the card was meant to be unleashed in late January, and now we're in the first week of March and it's still not here.

The latest on NVIDIA's new flagship GeForce RTX 3090 Ti graphics card is that the company will release it on March 29, just over three weeks from now. The reasons for the delay? Reportedly they've been memory-related... due to the 24GB of single-sided GDDR6X memory at 21Gbps.

There could be more hardware-related or even BIOS-level issues with the card... what with the amount of power driving through the card, Samsung's 8nm process not being the best (up against TSMC and its 7nm and lower nodes), and many other factors (the pandemic, supply chains in South Korea, etc).

NVIDIA GeForce RTX 40 series GPU: specs leak thanks to hackers

NVIDIA has been slammed by a cyberattack that is causing all sorts of issues, one of them being leaks about its next-gen GPU architectures... the next one being Ada Lovelace.

The hacking group has infiltrated NVIDIA's servers, and now more information on Ada Lovelace is here. We have the full specs of NVIDIA's next-gen Ada Lovelace-based GeForce RTX 40 series GPUs including the AD102, AD103, AD104, AD106, and AD107 GPUs.

NVIDIA's next-gen Ada Lovelace GPU architecture is expected to be deployed later this year, with the flagship AD102 GPU packing the full 18432 CUDA cores and a 384-bit memory bus. Next down on the list we have the AD103 GPU which should have 10752 CUDA cores, and the AD104 GPU with 7680 CUDA cores. After that there's the AD106 and AD107 GPUs with 4608 CUDA cores and 3072 CUDA cores respectively.

PCIe 5.0 '12VHPWR' power connector: 150W, 300W, 450W, 600W settings

We might not see a next-gen graphics card released this year that actually uses the PCIe 5.0 power connector, but the PSU market is gearing up for the big launch of next-gen PCIe 5.0-ready GPUs.

Well, now the official specifications of the new PCI-Express 5.0 cable for next-gen GPUs have been leaked, with data approved by Intel, which is part of defining the ATX specs. We now know there should be up to four different power settings, with the PCIe 5.0 power cable supporting 150W, 300W, 450W, and a huge 600W over a single 16-pin PCIe 5.0 power cable.

It looks like the power setting is selected by the binary configuration of the Sense0 and Sense1 sideband signals: when both of the signals are grounded, the GPU will initially have up to 375W of power pumped into it, with a maximum of up to an insane 600W of sustained power.
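To picture how two sideband signals can pick between four power settings, here's a rough Python sketch. Only the both-grounded case (600W sustained) comes from the leak as reported here; which of the other three Sense0/Sense1 combinations maps to 450W, 300W, and 150W is an assumption for illustration, and `SENSE_TABLE` and `max_sustained_power` are made-up names, not part of any spec or driver.

```python
# Two binary sideband signals give 2 x 2 = 4 states, one per power setting.
# Key: (sense0_grounded, sense1_grounded) -> max sustained power in watts.
SENSE_TABLE = {
    (True, True): 600,    # from the article: both grounded -> 600W sustained
    (False, True): 450,   # ASSUMPTION: ordering of the remaining states
    (True, False): 300,   # ASSUMPTION
    (False, False): 150,  # ASSUMPTION
}


def max_sustained_power(sense0_grounded: bool, sense1_grounded: bool) -> int:
    """Return the sustained power limit (watts) for a sideband configuration."""
    return SENSE_TABLE[(sense0_grounded, sense1_grounded)]


if __name__ == "__main__":
    for (s0, s1), watts in SENSE_TABLE.items():
        label0 = "Gnd" if s0 else "Open"
        label1 = "Gnd" if s1 else "Open"
        print(f"Sense0={label0:<4} Sense1={label1:<4} -> {watts}W")
```

The point is simply that a GPU only needs to read two pins to know how much power the cable and PSU are rated to deliver.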

NVIDIA DLSS source code leaked, hackers could force DLSS open source

The ongoing NVIDIA issues are surely causing massive meetings inside of Team Green, with the cyber attack now seeing the DLSS (Deep Learning Super Sampling) source code being leaked... and that's not the worst of it.

NVIDIA has kept its magic DLSS technology close to its chest, and while this is good for the company, its competitors -- AMD and Intel -- have gone open source with their similar upscaling tech. NVIDIA hasn't pushed into the world of open-source with DLSS, but hackers might actually force its hand.

DLSS beats the pants off of FSR (AMD's FidelityFX Super Resolution), and even more so with DLSS 2.2 and DLSS 2.3 in games that support it, because when they do... NVIDIA reigns supreme by a (very) long shot. But now that the DLSS source code is out in the wild, it could end in a ball of flames for NVIDIA as its secret sauce is out in the nude.

NVIDIA GeForce RTX 4090 rumored with 'Infinity Cache' style cache

NVIDIA's next-gen Ada Lovelace GPU architecture could have a big surprise up its sleeve, with new information that continues to flow from the leaked cache of content that hackers gained access to when they cyberattacked NVIDIA.

Some of the leaked files refer to "Ada" GPUs with 16MB of cache per 64 bits of memory bus, up from the 512KB per 32 bits of memory bus on Ampere GPUs. This is a huge change: on a 384-bit memory bus that works out to 96MB of cache for the flagship AD102 GPU, meaning it'll have a whopping 90MB more L2 cache than the GA102 Ampere GPU, which has 6MB.
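For anyone who wants to check the math, here's a quick Python sketch of the cache arithmetic. The per-slice figures come from the leak as reported; the 384-bit bus width for both AD102 and GA102 is an assumption plugged in here, and `total_cache_mb` is just an illustrative helper name.

```python
def total_cache_mb(bus_width_bits: int, slice_width_bits: int,
                   cache_per_slice_mb: float) -> float:
    """Total cache = number of memory-bus slices x cache attached to each slice."""
    slices = bus_width_bits // slice_width_bits
    return slices * cache_per_slice_mb


# ASSUMPTION: both flagship GPUs use a 384-bit memory bus.
ad102_mb = total_cache_mb(384, 64, 16)    # Ada: 16MB per 64 bits of bus
ga102_mb = total_cache_mb(384, 32, 0.5)   # Ampere: 512KB (0.5MB) per 32 bits of bus

if __name__ == "__main__":
    print(f"AD102: {ad102_mb}MB, GA102: {ga102_mb}MB, "
          f"difference: {ad102_mb - ga102_mb}MB")
```

Six 64-bit slices at 16MB each gives AD102 its 96MB, while twelve 32-bit slices at 512KB each gives GA102 its 6MB, which is where the 90MB gap comes from.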

AMD has up to 128MB of Infinity Cache on its RDNA 2-based Radeon RX 6000 series GPUs, with the likes of the Radeon RX 6800 XT and Radeon RX 6900 XT both rocking 128MB Infinity Cache. NVIDIA's next-gen AD102 GPU should power the GeForce RTX 4090, GeForce RTX 4080 Ti, and GeForce RTX 4080 graphics cards if the company keeps its naming system the same.

NVIDIA hackers threaten to leak data: LHR bypass, GPU drivers and more

NVIDIA's systems have reportedly been compromised, with hackers now threatening to leak out confidential NVIDIA data, information, and even crypto gimping unlocks.

Things continue to go from bad to worse, with the hackers now threatening to leak out information -- and unlocks for the LHR-based GeForce RTX 30 series GPUs. They reportedly have an LHR V2 bypass for NVIDIA's gimped GA102 + GA104 GPUs, and those unlocks are now being sold.

This means that the hacking collective has found a way around the algorithm that gimps crypto mining on the RTX 30 series GPUs, so cards that are LHR-gimped should be un-gimped soon enough. NVIDIA has admitted it has been attacked now, but I guess we'll see how much deeper this goes in the coming hours, days, and weeks.

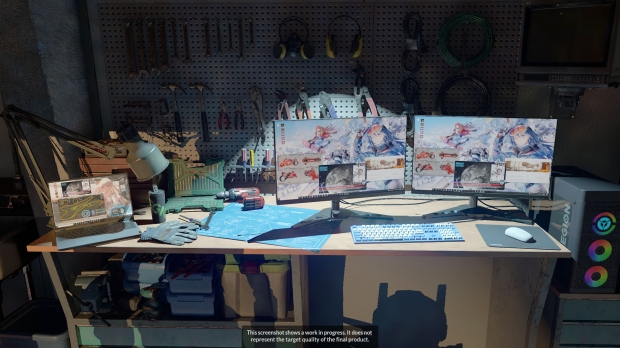

3DMark Speed Way: next-gen benchmark for DirectX 12 Ultimate features

UL has just announced its next-gen 3DMark Speed Way benchmark, which will stress test GPUs with ray tracing, and other DirectX 12 Ultimate features.

The new 3DMark Speed Way benchmark sees UL teaming with Lenovo for branding, stressing out your GPU with ray tracing, and real-time global illumination to render realistic lighting and reflections. Microsoft's new DirectX 12 Ultimate features will be stressed, including mesh shaders, and variable rate shading (VRS) to optimize performance and visual quality.

Lenovo's partnership with UL sees the company's Legion gaming brand having product placements in the Speed Way benchmark, including Legion gaming products (gaming PC + monitors + laptop) as well as merchandise. As for the benchmark, 3DMark Speed Way will be launching on Steam later this year -- but we don't know if it'll be an add-on available for free -- or a separate DLC.

NVIDIA's next-gen GPU after Hopper: Blackwell GPU aka Ampere Next Next

NVIDIA should hopefully be unveiling its next-gen Hopper GPU architecture at its upcoming GTC (GPU Technology Conference) in March 2022 -- but now we're hearing about its successor -- the next-next-gen Blackwell GPU architecture.

Where is this news coming from? Well, that would be from NVIDIA being recently hacked, where one of the files refers to "ARCH_BLACKWELL", which would see NVIDIA's next-gen GPU architecture named after David Harold Blackwell, an American statistician and mathematician who worked in game theory and information theory.

The first mentions of Blackwell turned up in July 2021, with leaker "Kopite7kimi" tweeting out the Blackwell GPU architecture tease. NVIDIA has only mentioned "Ampere Next" and "Ampere Next Next", which we should see as Hopper and Ada Lovelace, but now we have Blackwell thrown into the next-gen GPU mix.
