IT/Datacenter & Super Computing News - Page 6

HP's new supercomputer is 8000x faster than your PC

HP has unveiled its new supercomputer, simply called The Machine, which was first announced back in 2014. HP is aiming to smash all previous technology in existence with The Machine, as the new supercomputer doesn't rely on traditional processors for its brute speed - instead, it puts memory at the center of the design.

HP Enterprise explains that The Machine is up to 8000x faster than traditional machines (you'd freakin' hope so), but it's still years away from being released. HP will be aiming at high-end servers for companies like Google and Facebook, with the architecture itself powered by memory-driven computing. I hope we'll see this type of memory-driven design trickle down to the PC one day.

The Machine uses photonics to transmit data using light, and thanks to its massive, super-fast memory pool, it can really crank through those datasets. Things normally slow down when data needs to be transferred between processors, but HP has planned ahead by using memory to keep the supercomputer fast.

NVIDIA's new supercomputer will design next-gen GPUs

NVIDIA just continues to smash the GPU and supercomputing game, announcing its newest supercomputer, the DGX SATURNV, which is designed from the ground up to help build smarter cars and next-generation GPUs.

The new DGX SATURNV is ranked 28th on the Top500 list of supercomputers, but thanks to the use of the Tesla P100-powered DGX-1 units, it's the most efficient supercomputer in the world. Up until now, the most efficient machine on the Top500 list managed 6.67 GigaFlops/Watt, but the new NVIDIA DGX SATURNV is capable of a massive 9.46 GigaFlops/Watt, a huge 42% improvement.
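The 42% figure follows directly from the two efficiency numbers quoted above. Here's a quick sanity check in Python; the function name `efficiency_gain_percent` is just an illustrative choice, not anything from NVIDIA or the Top500 list.

```python
def efficiency_gain_percent(previous: float, current: float) -> float:
    """Return the relative improvement of `current` over `previous`, in percent."""
    return (current / previous - 1.0) * 100.0


# Figures quoted in the article, both in GigaFlops/Watt.
PREVIOUS_BEST = 6.67
SATURNV = 9.46

gain = efficiency_gain_percent(PREVIOUS_BEST, SATURNV)
print(f"{gain:.1f}% improvement")  # prints "41.8% improvement", which rounds to 42%
```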

Inside of the NVIDIA DGX-1 we have:

Continue reading: NVIDIA's new supercomputer will design next-gen GPUs (full post)

Canadian PM explains quantum computing to a reporter, like a boss

From now on, don't mess with Canadian PM Justin Trudeau - he's a total boss when it comes to quantum computing, smacking down a "sassy reporter" who didn't expect him to know much about it.

Well, he's actually quite knowledgeable on the subject. When the reporter quipped, "I was going to ask you to explain quantum computing, but...", Trudeau was quick off the mark, replying with: "Very simple: normal computers work by...".

The crowd laughed, interrupting him briefly, but he then continued with a short explanation of quantum computing - covering more than the reporter thought he'd know.

IBM and NVIDIA develop new OpenPOWER HPC server with Pascal P100

This week during GTC we saw NVIDIA change its focus from primarily consumer GPUs to professional technology aimed squarely at the evolution of AI. Pascal, a vastly different and incredibly powerful architecture, is perfect for the ever-evolving HPC field. IBM, at the OpenPOWER Summit that went on this week alongside GTC, announced its newest server, which combines Tesla P100 compute accelerators with POWER8 processors.

The big draw is the use of NVLink, the 40GBps data link directly from the CPU to the GPU that allows for quick communication and transfer of data. It's this innovation that might help to fuel faster HPC applications and, even better, more nimble AI that can absorb vast amounts of information more quickly than before. The new server architecture will require applications to be ported over, but IBM and NVIDIA are both willing to assist in that regard to make the transition easier.

IBM's Watson division will also be participating in the design and implementation of the new server platform, and might even end up incorporating the Tesla P100 into its own design for an upgraded Watson supercomputer. The initial specifications call for cramming four of those compute cards into the server along with four POWER8 12-core/96-thread CPUs operating at 3-3.5GHz, combined with up to 1TB of DDR4-2400 RAM in this case. The implications for AI, let alone any other type of compute-heavy load, are tremendous. This could very well put the PPC architecture back on the map in a big way, especially with the assistance from IBM and NVIDIA in porting over your applications. Second-generation POWER8 servers are just a stepping stone to the next-generation POWER9 architecture, which is just around the corner.

Tyan introduces 1U POWER8-based OpenPOWER server for HPC

Tyan announced at the OpenPOWER Summit this past week that it's going to start supporting IBM's OpenPOWER initiative by offering 1U POWER8-based servers for the HPC and in-memory application markets. POWER processors might not be as widespread as Xeon, but Tyan is of the mind that variety is the spice of life, and that there's a market for these processors that could well be untapped.

They're going to offer a total of three different configurations with their new GT75-BP012 server platform. This particular platform is a single-CPU design that allows for a massive amount of memory to be installed, though at slightly slower DDR3L speeds. They're positioning these to compete in niche markets that might not need as much processing power but do need the extra RAM capacity to keep more data resident in memory, so workloads run slightly faster as a result. It'll be difficult to compete with the price-performance ratio of the typical, and even lower-cost, Xeons, but with far more DRAM here, it could be useful in some markets.

The maximum configuration will have a single 10-core/80-thread POWER8 CPU running at 2.095GHz with 1024GB of DDR3L-1600MHz RAM, four 10GbE ports, four GbE ports and one PCIe expansion slot that will actually support NVIDIA's forthcoming Pascal P100 GPU. These also have support for IBM's own Centaur memory buffer chips that allow for even more in-memory buffer capacity at DDR3 speeds. The low-end will have an 8-core/64-thread POWER8 CPU running at 2.328GHz with the same 1TB of DDR3L RAM limit. A 750W PSU will be powering the servers.
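Both quoted core/thread counts work out to eight hardware threads per core, which lines up with POWER8's eight-way simultaneous multithreading (SMT8). Here's a small Python check; the dictionary labels and the `threads_per_core` helper are illustrative names only, not Tyan's.

```python
def threads_per_core(cores: int, threads: int) -> int:
    """Return hardware threads per core, insisting that the division is exact."""
    if threads % cores != 0:
        raise ValueError("thread count is not a whole multiple of core count")
    return threads // cores


# (cores, threads) pairs as quoted in the article for the GT75-BP012.
configs = {
    "maximum": (10, 80),
    "low-end": (8, 64),
}

for label, (cores, threads) in configs.items():
    print(f"{label}: {threads_per_core(cores, threads)} threads per core")
```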

Microsoft wants to put data centers at the bottom of the ocean

We might be running out of room on the Earth for server racks and compute power. Or maybe not, but Microsoft still wants to start putting server farms and small clusters of data centers at the bottom of the ocean. It might even be greener and more cost effective.

Project Natick is the venture that Microsoft is concocting to put our data under the sea, and the logic is actually quite sound. The idea is that containerized data centers can, if properly equipped, be cooled naturally and even use the energy from currents and waves to power them. It's a novel approach to making data, and the cloud, a more environmentally friendly thing - if they don't leak and pollute the ocean, of course.

The researchers plan for their submersibles to have a five-year life cycle, after which they can be retrieved, refitted and upgraded with new hardware. But what if there's a malfunction or problem? Hardware failures happen, it's just a fact of life. So what if there's an HDD that suddenly can't write, and it needs to be replaced and the data restored? Presumably it'll have to be retrieved by boat and attended to, which could cost more money in manpower and equipment than just having a data center easily accessible by humans.

Facebook's custom AI hardware will be open-source, ready for Skynet

It looks like The Matrix and the Terminator movies weren't enough to make us stop trying to create an AI takeover: Facebook has just announced plans to open source its Open Rack-compatible hardware design for AI computing - something that has been codenamed Big Sur.

Facebook's Kevin Lee and Serkan Piantino explained that Big Sur was built to use 8 x high-performance GPUs, consuming 300W each. They were using NVIDIA's Tesla Accelerated Computing Platform, claiming that Big Sur was twice as fast as previous generations, which were using off-the-shelf components and designs.

The increased speed allows Facebook to train neural networks twice as fast, as well as to explore networks that are twice as large as before. In the end, training can be distributed between the 8 x GPUs, with the size and speed of the networks being scaled by another factor of two.

Lenovo displays its NeXtScale solutions at NVIDIA GTC 2015

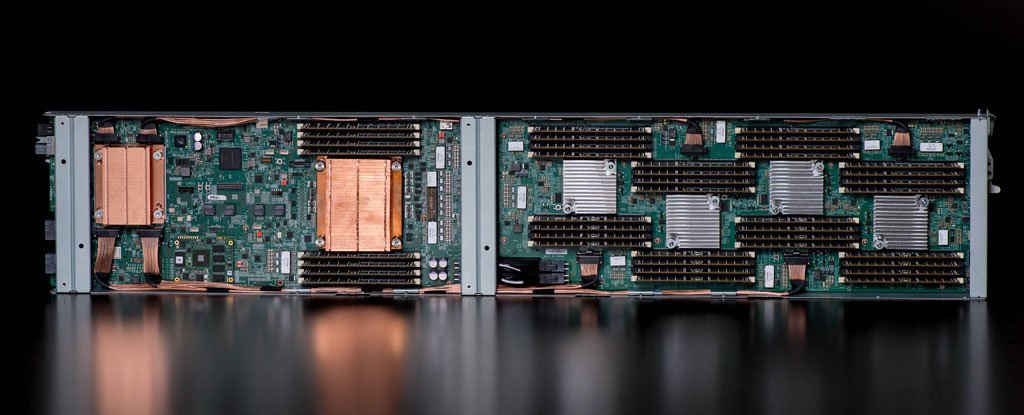

GTC 2015 - At NVIDIA GTC 2015, Lenovo shows its NeXtScale system blades and enclosure. The blades can be configured to house NVIDIA Tesla K80 GPU accelerators, or to act as compute or storage nodes.

Here we see three blade examples with the NVIDIA Tesla K80 blade in the middle. This blade can support two K80s or two Intel Xeon Phi coprocessor cards in a single double-wide blade. Other blades can be configured as compute nodes supporting two Intel Xeon E5-2600 v3 processors, or storage blades housing up to 7x hard drives.

Also on display was Lenovo's N1200 server enclosure outfitted to support NVIDIA GRID servers.

QuantaCool displays its cooling systems at SEMI-THERM 31

SEMI-THERM 31 - We had a chance to visit QuantaCool at SEMI-THERM 31 to see their new cooling systems. The systems use QuantaCool's MHP technology, which provides passive cooling of high-intensity heat sources such as CPUs. There are no moving parts and no water in the loop; the cooling fluids are safe, environmentally benign and electrically nonconductive. These systems do not require a pump; coolant circulation is driven by the heat being removed, with gravity returning the coolant to complete the loop.

This was QuantaCool's first trade show, and the company made a huge impression at SEMI-THERM 31. The systems they demonstrated are in prototype stages now; however, they did have several systems up and running to show cooling potential in several different configurations.

The first system was a workstation running an Intel 4770K @ 4.6GHz. This system was running for several days at heavy stress loads and maintained operation without a glitch.

Tyan shows off its HPC and OpenPOWER servers at NVIDIA GTC 2015

GTC 2015 - At NVIDIA GTC 2015, Tyan displays two of its heavy-duty HPC platforms. While most companies displayed GPU platforms, Tyan was there with its powerful High Performance Computing Platforms.

The first system is an FT77C-B7079 4U platform designed for up to 8x Intel Xeon Phi coprocessors. This is a dual-socket system using Intel Xeon E5-2600 v3 processors and fast DDR4 memory.

Next, we found a real powerhouse and the only quad-CPU system that we saw at NVIDIA GTC 2015. This system is called the FT76-B7922 4U4S, a server platform for both enterprise and HPC applications. The CPUs used in this system are 4x Xeon E7-4800 v3 processors. Recent leaks of data on these CPUs show the E7-4800 v3s can go as high as 14 cores each, which could give this system 56 cores / 112 threads. For memory load-out, it supports a massive 6.144TB of DDR4 across 8x memory risers with 96 memory slots.
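The core, thread and memory totals above can be reproduced with some simple arithmetic. This Python sketch makes two assumptions that aren't stated in the article: two threads per core (Hyper-Threading enabled), and memory spread evenly across all slots, with 6.144TB read as 6144GB. The variable names are illustrative.

```python
SOCKETS = 4
CORES_PER_CPU = 14       # top core count per the leaks mentioned above
THREADS_PER_CORE = 2     # assumption: Hyper-Threading enabled
MAX_MEMORY_GB = 6144     # 6.144TB, reading 1TB as 1000GB
MEMORY_SLOTS = 96
MEMORY_RISERS = 8

total_cores = SOCKETS * CORES_PER_CPU
total_threads = total_cores * THREADS_PER_CORE
gb_per_slot = MAX_MEMORY_GB // MEMORY_SLOTS      # assumption: evenly populated slots
slots_per_riser = MEMORY_SLOTS // MEMORY_RISERS

print(f"{total_cores} cores / {total_threads} threads")
print(f"{gb_per_slot}GB per slot, {slots_per_riser} slots per riser")
```

Under those assumptions, hitting the 6.144TB ceiling would take a 64GB module in every one of the 96 slots.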
