Meta has a nice surprise today: its latest large language model (LLM), Llama 3. The company explains its new Llama 3 8B contains 8 billion parameters, while its Llama 3 70B features 70 billion parameters.

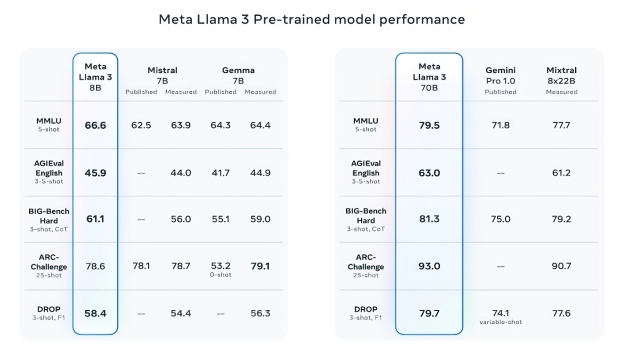

Meta promises a gigantic increase in performance over the previous Llama 2 7B and Llama 2 70B models, claiming that Llama 3 8B and Llama 3 70B, both trained on two custom-built 24,000-GPU clusters, are some of the best-performing generative AI models available today.

The company trained its new Llama 3 models on over 15 trillion tokens, all collected from "publicly available sources." Meta says the training dataset is 7x larger than the one used for Llama 2 and includes 4x more code.

Meta's new Llama 3 takeaways:

- Today, we're introducing Meta Llama 3, the next generation of our state-of-the-art open source large language model.

- Llama 3 models will soon be available on AWS, Databricks, Google Cloud, Hugging Face, Kaggle, IBM WatsonX, Microsoft Azure, NVIDIA NIM, and Snowflake, and with support from hardware platforms offered by AMD, AWS, Dell, Intel, NVIDIA, and Qualcomm.

- We're dedicated to developing Llama 3 in a responsible way, and we're offering various resources to help others use it responsibly as well. This includes introducing new trust and safety tools with Llama Guard 2, Code Shield, and CyberSec Eval 2.

- In the coming months, we expect to introduce new capabilities, longer context windows, additional model sizes, and enhanced performance, and we'll share the Llama 3 research paper.

- Meta AI, built with Llama 3 technology, is now one of the world's leading AI assistants that can boost your intelligence and lighten your load, helping you learn, get things done, create content, and connect to make the most out of every moment. You can try Meta AI here.

You can read all the nerdy details about Meta's new Llama 3 models here.