Phison had something very cool to show off at NVIDIA GTC 2024 last week: a single MAINGEAR PRO AI workstation PC packing 4 x NVIDIA RTX 6000 Ada workstation GPUs and running its in-house aiDaptiv+ software, which uses SSDs and DRAM to make up for the lack of VRAM and handle big AI training workloads on the cheap.

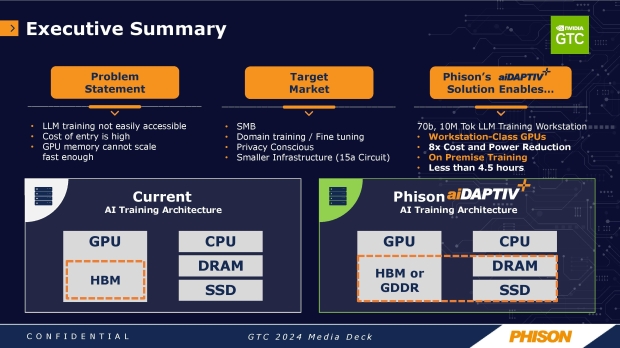

By cheap, we mean cheaper than using full-fledged NVIDIA Hopper H100 or new H200 AI GPUs, let alone the next-gen Blackwell B100 and B200 AI GPUs, which will be very hard to come by this year and well into 2025. Phison's aim with its aiDaptiv+ platform is to lower the cost barrier of LLM training by using a system's existing DRAM and SSDs to augment the amount of GPU VRAM available for AI training.

Phison says that running intense generative AI training workloads at a far lower cost than a standard GPU-only setup comes with a trade-off: reduced AI training performance and longer training times. If you want the best, you'll spend orders of magnitude more money on it, but for more off-the-shelf AI systems like MAINGEAR's PRO AI, putting Phison's new aiDaptiv+ platform in between is a big win.

For customers like small and medium-sized businesses, which don't have hundreds of millions (and sometimes billions) of dollars to spend on high-end DGX AI systems from NVIDIA, AI training augmented by the SSDs and DRAM already in the system is very appealing. There are huge cost savings involved, with aiDaptiv+ helping the process along.

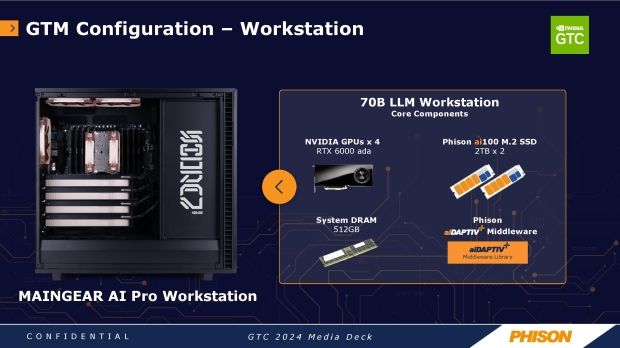

Phison showed off a demo of its aiDaptiv+ platform running on a MAINGEAR PRO AI workstation PC at GTC 2024, with its 4 x NVIDIA RTX 6000 Ada workstation GPUs running a gigantic 70 billion parameter model. Phison estimates that training a model with 70 billion parameters would normally need around 1.4TB of VRAM, spread over a massive 24 x GPU system across 6 x servers in a server rack.
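
To put Phison's estimate in perspective, here is a quick back-of-the-envelope calculation in Python. It only uses the figures quoted above (70 billion parameters, roughly 1.4TB of VRAM, 24 GPUs); the decimal units (1TB = 10^12 bytes) and the function names are our own assumptions, and the result is a rough average rather than anything Phison has published.

```python
# Figures quoted in the article; decimal units are an assumption (1 TB = 10**12 bytes).
PARAMS = 70 * 10**9                  # 70 billion parameters
VRAM_NEEDED_BYTES = 1_400 * 10**9    # Phison's estimate of roughly 1.4TB
GPUS_IN_RACK = 24                    # 24 GPUs across 6 servers


def bytes_per_parameter(total_bytes, params):
    """Average memory footprint per parameter implied by the estimate."""
    return total_bytes / params


def per_gpu_gb(total_bytes, gpus):
    """Even share of the footprint per GPU, in decimal gigabytes."""
    return total_bytes / gpus / 10**9


bpp = bytes_per_parameter(VRAM_NEEDED_BYTES, PARAMS)
share = per_gpu_gb(VRAM_NEEDED_BYTES, GPUS_IN_RACK)

print(f"{bpp:.0f} bytes per parameter")   # 20 bytes per parameter
print(f"{share:.1f} GB per GPU")          # 58.3 GB per GPU
```

Around 20 bytes per parameter is far more than the weights alone would take, which is what you would expect if gradients and optimizer state also have to sit in memory during training.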

That is a lot of hardware, power, and cooling to work out. Phison's new aiDaptiv+ system acts as a middleware software library that the company says "slices" off the layers of the AI model that aren't actively being computed from the VRAM, and shoots them over to the system DRAM.

From there, the data can stay in the DRAM if it will be needed again soon, or be sent over to the SSDs if it has a lower priority. The data is then recalled and shifted back over to the VRAM on the GPU for computation tasks as required, with the newly processed layer flushed to the DRAM and SSD to make room to process the next layer.
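
The flow described above can be illustrated with a toy simulation. This is a minimal sketch and not Phison's implementation: the `TieredLayerStore` class, the idea of counting capacity in whole layers, the single layer held in VRAM, and the priority rule (evict the layer that will be needed furthest in the future, assuming layers are processed in a repeating 0..n-1 order) are all assumptions made for illustration.

```python
class TieredLayerStore:
    """Toy model: one active layer in 'VRAM', the rest in 'DRAM' or on 'SSD'."""

    def __init__(self, n_layers, dram_slots):
        self.n_layers = n_layers
        self.dram_slots = dram_slots       # how many layers fit in DRAM (assumed)
        self.vram = None                   # layer currently being computed
        self.dram = set()
        self.ssd = set(range(n_layers))    # every layer starts out on the SSD
        self.moves = []                    # (source, destination, layer) log

    def _steps_until_needed(self, layer):
        # Assumes layers run in order 0..n-1 and then wrap around.
        distance = (layer - self.vram) % self.n_layers
        return distance or self.n_layers

    def _flush(self, layer):
        # The layer that was just processed leaves VRAM for DRAM.
        self.dram.add(layer)
        self.moves.append(("vram", "dram", layer))
        if len(self.dram) > self.dram_slots:
            # Lowest priority = needed furthest in the future; it goes to SSD.
            victim = max(self.dram, key=self._steps_until_needed)
            self.dram.remove(victim)
            self.ssd.add(victim)
            self.moves.append(("dram", "ssd", victim))

    def load(self, layer):
        """Recall a layer into VRAM, flushing the previous one to make room."""
        if layer == self.vram:
            return
        source = "dram" if layer in self.dram else "ssd"
        (self.dram if source == "dram" else self.ssd).remove(layer)
        previous, self.vram = self.vram, layer
        self.moves.append((source, "vram", layer))
        if previous is not None:
            self._flush(previous)


store = TieredLayerStore(n_layers=4, dram_slots=2)
for layer in range(4):          # one pass over the model
    store.load(layer)

print("VRAM:", store.vram, "DRAM:", sorted(store.dram), "SSD:", sorted(store.ssd))
```

After one pass, the layers that will be needed first on the next pass are the ones sitting in DRAM, while the layer that won't be needed for the longest time has been pushed out to the SSD. A real system would track bytes rather than layer counts and would also have to handle the backward pass, which this sketch ignores.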

MAINGEAR used its new PRO AI workstation PC for Phison's new aiDaptiv+ platform test, powered by an Intel Xeon W7-3435X processor, 512GB of DDR5-5600 memory, and two specialized 2TB Phison SSDs. MAINGEAR starts the pricing of its PRO AI workstation at $28,000 with a single GPU, rising to $60,000 if you equip it with a monster 4-way RTX 6000 Ada workstation GPU setup.
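
Those specs make it easy to see why the SSDs matter. The sketch below fills the memory tiers in order (VRAM, then DRAM, then SSD) against Phison's roughly 1.4TB estimate. The 48GB per RTX 6000 Ada is NVIDIA's published spec rather than a figure from the demo, and the `spill_plan` helper, the decimal units, and the assumption that every byte of each tier is usable are ours, so treat the numbers as a rough guide only.

```python
REQUIRED_GB = 1400  # Phison's estimate for a 70 billion parameter model

# Capacity of each tier in decimal GB, fastest first.
tiers_gb = {
    "vram": 4 * 48,     # 4 x RTX 6000 Ada at 48GB each (NVIDIA spec)
    "dram": 512,        # system memory in the demo machine
    "ssd": 2 * 2000,    # two 2TB Phison SSDs
}


def spill_plan(required_gb, tiers):
    """Naively fill the fastest tier first and spill the remainder downward."""
    remaining = required_gb
    plan = {}
    for name, capacity in tiers.items():
        used = min(remaining, capacity)
        plan[name] = used
        remaining -= used
    return plan, remaining


plan, unplaced = spill_plan(REQUIRED_GB, tiers_gb)
print(plan)       # {'vram': 192, 'dram': 512, 'ssd': 696}
print(unplaced)   # 0
```

Under these assumptions, VRAM and DRAM together cover only about half of the estimated footprint, so close to 700GB has to live on the SSDs at any given time.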

Phison's new aiDaptivCache ai100E SSDs use the regular M.2 form factor, but are specially designed for caching workloads. We don't know much about the new SSDs, but we do know they pack SLC flash for improved performance and endurance, with the drives rated for 100 drive writes per day over 5 years.
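
To get a feel for what that endurance rating means, here is a small worked calculation. It assumes the 2TB capacity of the drives in the demo system applies to the rating, uses decimal units and 365-day years, and the function name is ours; Phison has not published these derived figures.

```python
DWPD = 100           # rated drive writes per day
YEARS = 5            # rating period
CAPACITY_TB = 2      # assumed: the 2TB drives used in the demo system


def lifetime_writes_tb(dwpd, capacity_tb, years, days_per_year=365):
    """Total data written over the rating period, in decimal TB."""
    return dwpd * capacity_tb * days_per_year * years


daily_tb = DWPD * CAPACITY_TB
lifetime_tb = lifetime_writes_tb(DWPD, CAPACITY_TB, YEARS)
avg_gb_per_s = daily_tb * 1000 / 86_400   # sustained rate needed to hit the daily figure

print(f"{daily_tb} TB written per day")                     # 200 TB written per day
print(f"{lifetime_tb / 1000:.0f} PB over {YEARS} years")    # 365 PB over 5 years
print(f"{avg_gb_per_s:.2f} GB/s sustained")                 # 2.31 GB/s sustained
```

In other words, each drive would have to absorb writes at more than 2GB/s around the clock for five years to use up the rating, which is the kind of workload a constantly churning layer cache could plausibly generate.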

The new aiDaptiv+ middleware sits beneath the PyTorch/TensorFlow layer, with the company adding that its middleware is transparent and doesn't require any modifications to the AI applications that owners will use.