NVIDIA might have just announced its next-generation Blackwell B200 AI GPU, but the beefed-up Hopper H200 AI GPU is smashing performance records in the very latest MLPerf 4.0 results.

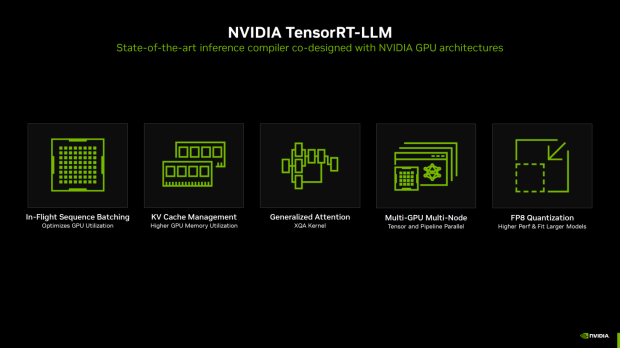

NVIDIA has been optimizing TensorRT-LLM non-stop since the company released the AI software suite last year. The payoff is a major performance increase between the MLPerf 3.1 and MLPerf 4.0 results, with NVIDIA amplifying Hopper's AI performance.

Using these new TensorRT-LLM optimizations, NVIDIA has pulled off a huge 2.4x performance leap with its current H100 AI GPU between MLPerf Inference 3.1 and 4.0 in GPT-J tests using the offline scenario. In the server scenario with GPT-J, the H100 AI GPU saw an even bigger 2.9x increase from MLPerf 3.1 to 4.0.

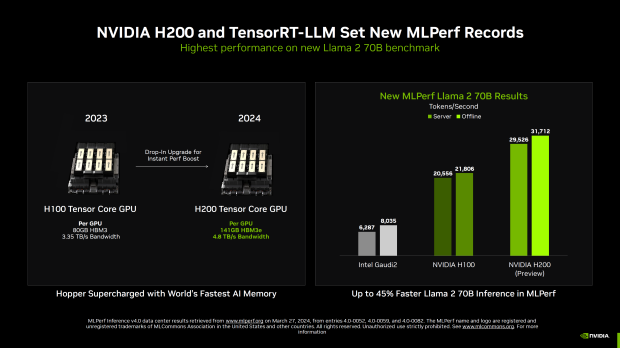

Moving on to the beefed-up H200 AI GPU and the Llama 2 70B benchmark, NVIDIA's new H200 AI GPU and TensorRT-LLM set a new MLPerf record.

NVIDIA's new H200 Tensor Core GPU is a drop-in upgrade for an instant performance boost over H100, with 141GB of HBM3E (versus 80GB of HBM3 on H100) and up to 4.8TB/sec of memory bandwidth (versus 3.35TB/sec on H100). This greatly boosts AI GPU performance, as Hopper H200 gets supercharged with the world's fastest AI memory: HBM3E.
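To put those memory figures in perspective, here is a minimal Python sketch that turns them into ratios. The dictionary names and keys are illustrative choices, not anything from NVIDIA; the numbers are the ones quoted above.

```python
# Memory specs quoted in the article (capacity in GB, bandwidth in TB/sec).
# The dictionary layout and key names are illustrative assumptions.
h100 = {"memory_gb": 80, "bandwidth_tb_s": 3.35}
h200 = {"memory_gb": 141, "bandwidth_tb_s": 4.8}

# Ratio of H200 to H100 for each spec.
ratios = {spec: h200[spec] / h100[spec] for spec in h100}

for spec, ratio in ratios.items():
    print(f"{spec}: H200 has {ratio:.2f}x the H100 figure")
```

By these numbers, H200 has roughly 1.76x the memory capacity and 1.43x the memory bandwidth of H100.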

H200 is up to 45% faster than H100 in Llama 2 70B inference in MLPerf, setting a new world record. NVIDIA also compared its results against the Intel Gaudi2 AI accelerator, which the Hopper H100 AI GPU demolishes, and the beefed-up H200 AI GPU stretches that lead even further.

H100 provides 20,556 tokens per second in server-based performance and 21,806 in offline mode. Meanwhile, the new H200 dominates with 29,526 tokens per second on the server and a huge 31,712 tokens per second offline. This is a gigantic leap above what Intel Gaudi2 pumps out, with just 6,287 and 8,035 tokens per second for server and offline, respectively.
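For readers who want to check the gaps themselves, the sketch below computes the relative throughput from the tokens-per-second figures quoted above. The `results` table and the `speedup` helper are hypothetical names made up for this illustration, not part of any MLPerf tooling.

```python
# Llama 2 70B tokens per second, copied from the figures quoted in the article.
# The table layout and the helper name are illustrative assumptions.
results = {
    "H100": {"server": 20_556, "offline": 21_806},
    "H200": {"server": 29_526, "offline": 31_712},
    "Gaudi2": {"server": 6_287, "offline": 8_035},
}


def speedup(faster: str, slower: str, scenario: str) -> float:
    """Return how many times more tokens/sec `faster` delivers than `slower`."""
    return results[faster][scenario] / results[slower][scenario]


for scenario in ("server", "offline"):
    print(
        f"{scenario}: "
        f"H200 vs H100 = {speedup('H200', 'H100', scenario):.2f}x, "
        f"H200 vs Gaudi2 = {speedup('H200', 'Gaudi2', scenario):.2f}x, "
        f"H100 vs Gaudi2 = {speedup('H100', 'Gaudi2', scenario):.2f}x"
    )
```

On these figures, H200 lands at about 1.44x H100 in the server scenario and 1.45x offline, which lines up with the "up to 45% faster" claim, and at roughly 4.7x and 3.9x Gaudi2, respectively.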

Not only that, but NVIDIA's beast HGX H200 GPU system, with 8 x H200 AI GPUs, demolishes the Stable Diffusion XL benchmark with 13.8 queries per second in the server scenario and 13.7 samples per second offline.

NVIDIA's new Hopper H200 AI GPUs have a 700W base TDP, with custom designs drawing up to 1000W. NVIDIA's new Blackwell B100 is stock at 700W, while B200 AI GPUs come in 1200W and 1000W designs depending on the use.