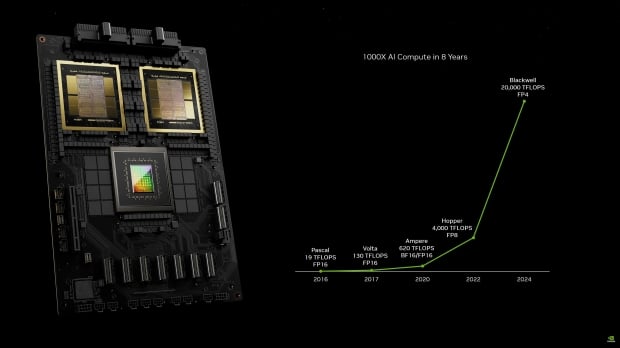

NVIDIA has just revealed its next-gen Blackwell GPU with a few new announcements: B100, B200, and the GB200 Superchip, and they're all mega-exciting.

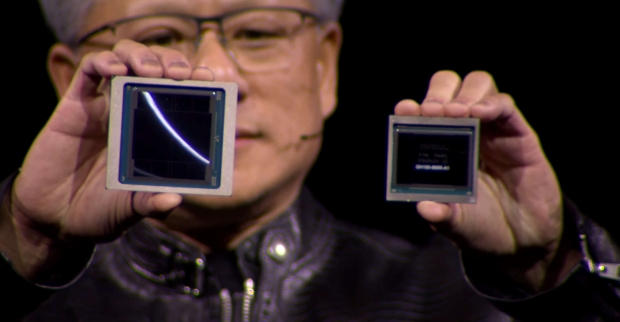

The new NVIDIA B200 AI GPU features a whopping 208 billion transistors made on TSMC's custom 4NP process node. It also has 192GB of ultra-fast HBM3E memory with 8TB/sec of memory bandwidth. NVIDIA is not using a single GPU die here, but a dual-die design, with only a thin line on the package marking where the two dies meet, a first for NVIDIA.

The two GPU dies behave as a single chip, with 10TB/sec of bandwidth between them thanks to NV-HBI (NVIDIA High Bandwidth Interface). There are no memory locality issues and no cache issues... it just thinks it's a single GPU and does its (AI) thing at blistering speeds.

NVIDIA's new B200 AI GPU has 20 petaflops of AI performance from a single GPU, compared to just 4 petaflops of AI performance from the current H100 AI GPU. Impressive. Note: NVIDIA is quoting the B200 figure in the new FP4 number format, while the H100 figure uses FP8, which means that B200 has 2.5x the theoretical FP8 compute of H100. Still, very impressive.
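To see where the 2.5x figure comes from, here is a quick back-of-the-envelope sketch. It assumes that halving the number format width doubles peak throughput (so B200's FP8 rate is half its FP4 rate); that scaling factor and the function name are illustrative assumptions, not figures NVIDIA states in this article.

```python
# Peak figures quoted in the article, in petaflops.
B200_FP4_PFLOPS = 20.0
H100_FP8_PFLOPS = 4.0

# Assumption: FP8 peak throughput is half the FP4 peak on the same chip.
FP4_TO_FP8_FACTOR = 0.5


def fp8_speedup(b200_fp4: float, h100_fp8: float,
                factor: float = FP4_TO_FP8_FACTOR) -> float:
    """Convert the B200 FP4 figure to FP8, then compare against H100 FP8."""
    b200_fp8 = b200_fp4 * factor
    return b200_fp8 / h100_fp8


# Headline comparison mixes formats: B200 at FP4 vs H100 at FP8.
headline_ratio = B200_FP4_PFLOPS / H100_FP8_PFLOPS

# Like-for-like comparison: both chips at FP8.
like_for_like = fp8_speedup(B200_FP4_PFLOPS, H100_FP8_PFLOPS)

print(f"Headline (FP4 vs FP8): {headline_ratio:.1f}x")
print(f"Like-for-like (FP8 vs FP8): {like_for_like:.1f}x")
```

Under that assumption, the headline 5x gap shrinks to 2.5x once both GPUs are measured in the same format.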

Each of the two B200 GPU dies is a full reticle-size chip with 4 x HBM3E stacks of 24GB each, and each stack delivers 1TB/sec of memory bandwidth over a 1024-bit memory interface. The total of 192GB of HBM3E memory, with 8TB/sec of memory bandwidth, is a huge upgrade over the H100 AI GPU, which had 6 x HBM3 stacks of 16GB each (at first; H200 later kicked that up to 24GB per stack).
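The memory totals can be checked with some simple arithmetic. This sketch assumes the 1TB/sec and 1024-bit figures are per HBM3E stack and that the package has two dies with four stacks each; the combined bus width it prints is derived from those assumptions rather than quoted in the article.

```python
# Per-package layout as described in the article.
DIES = 2
STACKS_PER_DIE = 4

# Per-stack figures (assumed to be per stack, not per die).
GB_PER_STACK = 24
TBPS_PER_STACK = 1.0
BUS_BITS_PER_STACK = 1024

total_stacks = DIES * STACKS_PER_DIE

# Capacity and bandwidth scale linearly with the number of stacks.
total_capacity_gb = total_stacks * GB_PER_STACK
total_bandwidth_tbps = total_stacks * TBPS_PER_STACK

# Derived value: combined memory bus width across all stacks.
total_bus_bits = total_stacks * BUS_BITS_PER_STACK

print(f"HBM3E stacks: {total_stacks}")
print(f"Capacity: {total_capacity_gb}GB")
print(f"Bandwidth: {total_bandwidth_tbps:.0f}TB/sec")
print(f"Combined bus width: {total_bus_bits}-bit")
```

Eight stacks at 24GB and 1TB/sec apiece line up with the 192GB and 8TB/sec totals quoted for B200.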

NVIDIA is using an all-new NVLink chip design that has 1.8TB/sec of bi-directional bandwidth and supports a 576-GPU NVLink domain. This NVLink chip itself features 50 billion transistors and is manufactured on the same TSMC 4NP process node.