Child abuse experts have warned of a growing number of cases in which schoolchildren have used AI-powered image generators to create indecent images of fellow students.

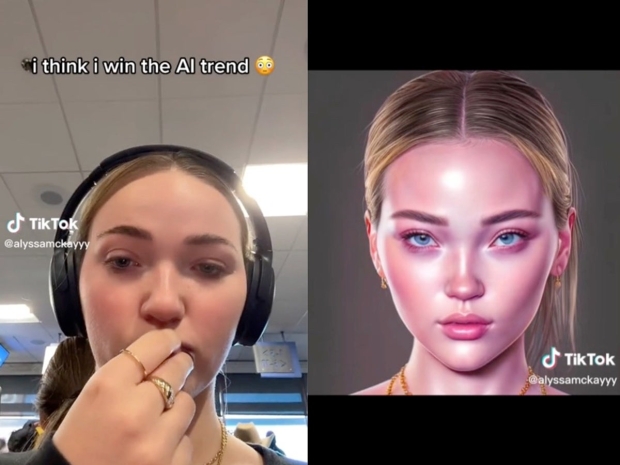

The warning comes from UK Safer Internet Centre (UKSIC) director Emma Hardy, who said that a number of schools have reported catching students using various AI-powered tools to create images of children that would constitute child sexual abuse material. Most disturbing is how realistic these images were. According to Hardy, the images students were generating of other students were "terrifyingly" realistic, and their quality was "comparable to professional photos taken annually of children up and down the country".

The body of experts is calling on schools to deploy better blocking technology so that students cannot access these AI-powered tools on school premises. UKSIC director David Wright added that the reports are hardly a surprise: when new technologies such as AI generators become accessible to the public, some people can be expected to misuse them.

"The photo-realistic nature of AI-generated imagery of children means sometimes the children we see are recognisable as victims of previous sexual abuse. Children must be warned that it can spread across the internet and end up being seen by strangers and sexual predators. The potential for abuse of this technology is terrifying," said Hardy.

"The reports we are seeing of children making these images should not come as a surprise. These types of harmful behaviours should be anticipated when new technologies, like AI generators, become more accessible to the public. Children may be exploring the potential of AI image-generators without fully appreciating the harm they may be causing. Although the case numbers are small, we are in the foothills and need to see steps being taken now - before schools become overwhelmed and the problem grows," said UKSIC director David Wright.