The AMD Instinct MI300X is new high-performance computing hardware that could dent NVIDIA's dominance in the generative AI space by offering an alternative to the popular H100 GPU and the Grace Hopper CPU-and-GPU combo.

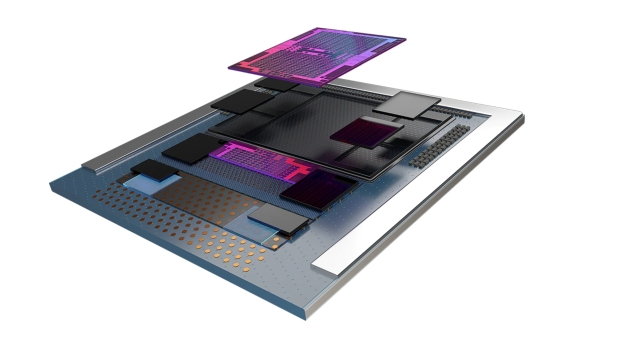

The MI300 family answers the latter, too: its sibling, the MI300A, is an APU that pairs 24 'Zen 4' CPU cores with the CDNA 3 GPU architecture, while the MI300X is a GPU-only design built entirely on CDNA 3. The MI300X makes full use of AMD's groundbreaking chiplet design, with 8 chiplets and 8 memory stacks built on 5nm and 6nm processes. The real kicker is up to a whopping 192 GB of HBM3 memory, which makes it tailor-made for memory-intensive AI workloads.

And it's right around the corner, too. AMD notes that the APU-based MI300A is sampling now, with the MI300X expected to begin sampling next quarter ahead of full production and availability in Q4 2023. The MI300A is currently being deployed in El Capitan, a supercomputer expected to deliver more than 2 exaflops.

AMD calls the new AMD Instinct MI300X "the world's most advanced accelerator for generative AI," thanks in part to the massive amount of memory it offers. "With the large memory of AMD Instinct MI300X, customers can now fit large language models such as Falcon-40, a 40B parameter model on a single MI300X accelerator," AMD adds.
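Why a 40B-parameter model fits on one card comes down to simple arithmetic. The sketch below is a rough estimate under our own assumptions, not AMD's methodology: it assumes 16-bit (FP16/BF16) weights at 2 bytes per parameter and ignores the KV cache, activations, and framework overhead, all of which add to the real footprint. The helper name `weight_footprint_gb` is hypothetical.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Assumption: 16-bit (FP16/BF16) weights, i.e. 2 bytes per parameter.
# KV cache, activations and framework overhead are NOT included.
MI300X_HBM3_GB = 192  # per-accelerator HBM3 capacity quoted by AMD


def weight_footprint_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Return weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


falcon_40b = weight_footprint_gb(40e9)
print(f"Falcon-40B weights: {falcon_40b:.0f} GB")  # Falcon-40B weights: 80 GB
print("Fits on one MI300X:", falcon_40b <= MI300X_HBM3_GB)  # Fits on one MI300X: True
```

At roughly 80 GB for the weights alone, a single 192 GB MI300X leaves headroom for the extra runtime memory that inference needs, which is the point AMD is making.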

That makes it a supercomputer chip, and the AMD Instinct Platform scales it up by combining eight MI300X accelerators into a single solution for AI inference and training, with a combined 1.5TB of HBM3 memory.
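The 1.5TB platform figure follows directly from the per-accelerator capacity. A quick check, assuming every MI300X in the platform carries the full 192 GB (the constant names are our own):

```python
# Aggregate HBM3 across an eight-accelerator AMD Instinct Platform.
# Assumption: each MI300X carries the full 192 GB of HBM3.
ACCELERATORS_PER_PLATFORM = 8
HBM3_PER_MI300X_GB = 192

total_gb = ACCELERATORS_PER_PLATFORM * HBM3_PER_MI300X_GB
print(total_gb, "GB")  # 1536 GB
print(total_gb / 1024, "TB (binary units)")  # 1.5 TB (binary units)
print(total_gb / 1000, "TB (decimal units)")  # 1.536 TB (decimal units)
```

Either way you count it, the total lands at roughly 1.5TB.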

As for performance, AMD states that the MI300X delivers 8X the AI performance of the CDNA 2-based AMD Instinct MI250X accelerator. AMD also claims an impressive 5X increase in performance per watt over CDNA 2, helped by its unified memory architecture.

Make no mistake: the AMD Instinct MI300X isn't simply AMD's take on a massive GPU for AI. It will play a major role in the company's data center-focused future as all things AI, from models to the hardware that runs them, come to dominate the high-performance computing market.

"AI is really the defining technology that's shaping the next generation of computing," AMD CEO Dr. Lisa Su said during the reveal of the AMD Instinct MI300X. "When we try to size it, we think about the data center AI accelerator TAM [total addressable market] growing from something like $30 billion this year, over 50 percent compound annual growth rate to over $150 billion in 2027."
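Su's numbers hang together. The sketch below assumes four years of compounding (2023 to 2027) and uses the standard compound annual growth rate formula; the function names are our own, not AMD's.

```python
# Compound annual growth rate (CAGR) check on AMD's market-size estimate.
# Assumption: "this year" is 2023, so 2023 -> 2027 is 4 years of compounding.


def project(start: float, cagr: float, years: int) -> float:
    """Value after compounding `start` at rate `cagr` for `years` years."""
    return start * (1 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Annual growth rate needed to go from `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


print(f"$30B at 50% for 4 years: ${project(30, 0.50, 4):.1f}B")  # $151.9B
print(f"Rate needed to reach $150B: {implied_cagr(30, 150, 4):.1%}")  # 49.5%
```

Growing $30 billion at 50 percent a year for four years yields about $152 billion, so "over 50 percent" growth and "over $150 billion in 2027" are consistent with each other.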