So this is coming out of nowhere, but the Financial Times is reporting that Apple will push a mandatory new software update called neuralMatch to all US iPhones, and it will act as surveillance on your phone, searching for child porn.

The new program will detect and report any American iPhone owners who have CSAM (child sexual abuse material) on their phones. Apple will reportedly unveil neuralMatch next week, after which it will be pushed to US iPhones in a new software update.

The automated system will alert a team of human reviewers. If they confirm that child sexual abuse material (CSAM) is on your iPhone, they will contact law enforcement.

"Apple's neuralMatch algorithm will continuously scan photos that are stored on a US user's iPhone and have also been uploaded to its iCloud back-up system. Users' photos, converted into a string of numbers through a process known as 'hashing', will be compared with those on a database of known images of child sexual abuse".

"The system has been trained on 200,000 sex abuse images collected by the US non-profit National Center for Missing and Exploited Children".
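To make the "hashing" idea in the quote a bit more concrete, here is a toy sketch in Python. It is not Apple's neuralMatch algorithm, whose details have not been published in the report; it uses a simple "average hash" as a stand-in, and every name in it (`average_hash`, `matches_database`, `max_distance`, the tiny 2x2 "images") is made up for illustration. The point is only to show how a picture can be turned into a number and compared against a list of known numbers, with a small tolerance so that lightly edited copies still match.

```python
# Toy illustration only: a simple "average hash", NOT Apple's actual method.
# Images are represented as small grids of grayscale values (0-255).

def average_hash(pixels):
    """Turn an image into an integer: one bit per pixel, 1 if brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a, b):
    """Count the bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def matches_database(pixels, known_hashes, max_distance=1):
    """Return True if the image's hash is close to any hash in the database.

    max_distance is a hypothetical tolerance chosen for this example.
    """
    h = average_hash(pixels)
    return any(hamming(h, k) <= max_distance for k in known_hashes)


# A hypothetical database holding the hash of one "known" image.
known_image = [[10, 200], [220, 30]]
known_hashes = {average_hash(known_image)}

slightly_edited = [[12, 198], [215, 35]]  # same picture, small brightness changes
unrelated = [[200, 10], [30, 220]]        # a different picture

print(matches_database(slightly_edited, known_hashes))  # True
print(matches_database(unrelated, known_hashes))        # False
```

The tolerance is also where the trade-off sits: the looser the match, the more edited copies get caught, but the more room there is for an innocent photo to land close to a known hash.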

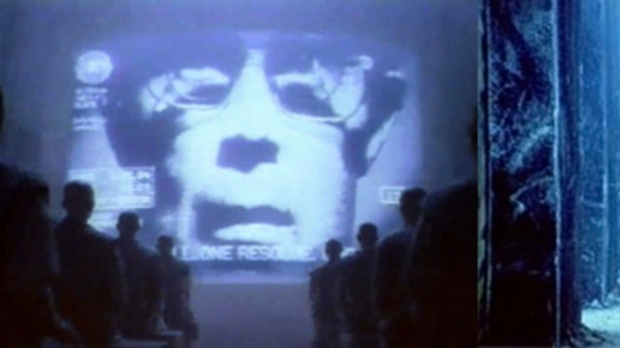

I'm totally down with tracking, detaining, and severely punishing those with CSAM... but this feels very, very Big Brother and Orwellian. I really don't like the idea of piling more super-spying tools on top of the super-spying tools already inside and outside of our smartphones, and this is a giant leap into the unknown.

What if there's an error? Like swatting in gaming? Apple's neuralMatch detects "CSAM" on your iPhone, but it's not... it's a picture of your own son or daughter in the bath, for example. Your door gets kicked in, and you're arrested or worse... feels very Minority Report, too.