AMD has just announced its next-gen Instinct MI100 accelerator, built on its new CDNA GPU architecture. It packs 7680 stream processors and 32GB of HBM2 with an ultra-fast 1.23TB/sec of memory bandwidth. Check it out:

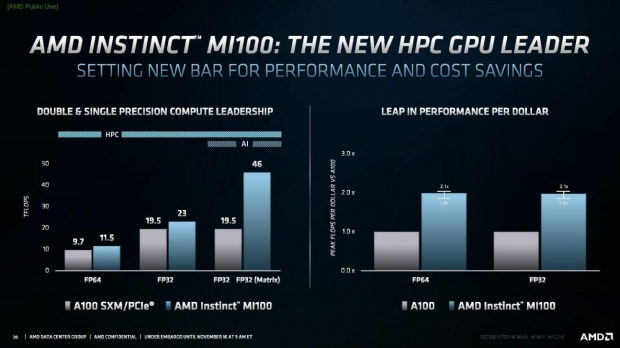

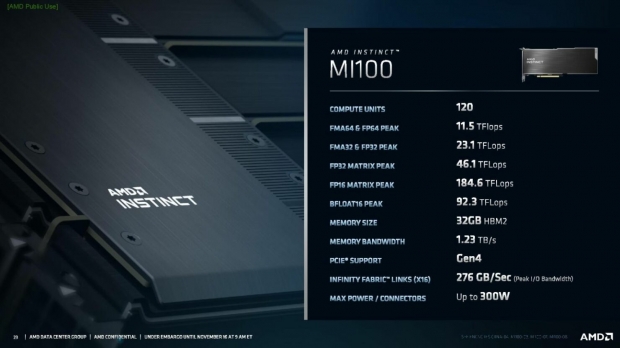

Inside, the new Instinct MI100 accelerator is powered by a 7nm CDNA GPU with 120 Compute Units, or 7680 stream processors. We've heard of this GPU before under its codename, Arcturus. The GPU is clocked at around 1500MHz and delivers 11.5 TFLOPS of FP64 performance, 23.1 TFLOPS of FP32 performance, and a huge 185 TFLOPS of FP16 matrix performance.
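These headline numbers can be roughly reproduced from the CU count and clock. The sketch below is a back-of-the-envelope estimate, not AMD's official method, and rests on assumptions not stated in the article: 64 stream processors per CU (7680 / 120), one fused multiply-add (2 FLOPs) per stream processor per clock for FP32, half-rate FP64, a 1502MHz peak engine clock, and 1024 FP16 matrix FLOPs per CU per clock.

```python
# Rough peak-throughput estimate for the Instinct MI100.
# ASSUMPTIONS (not from the article): 64 SPs per CU, 2 FLOPs (one FMA) per SP
# per clock for FP32, half-rate FP64, 1502 MHz peak clock, and 1024 FP16
# matrix FLOPs per CU per clock.

COMPUTE_UNITS = 120
SP_PER_CU = 64
PEAK_CLOCK_HZ = 1502e6


def tflops(flops_per_clock: float, clock_hz: float = PEAK_CLOCK_HZ) -> float:
    """Convert whole-GPU FLOPs per clock into TFLOPS."""
    return flops_per_clock * clock_hz / 1e12


stream_processors = COMPUTE_UNITS * SP_PER_CU   # 120 CUs x 64 SPs
fp32 = tflops(stream_processors * 2)            # one FMA = 2 FLOPs per SP
fp64 = fp32 / 2                                 # assumed half-rate FP64
fp16_matrix = tflops(COMPUTE_UNITS * 1024)      # assumed matrix-core rate

print(f"Stream processors: {stream_processors}")
print(f"FP32 vector: {fp32:.1f} TFLOPS")
print(f"FP64 vector: {fp64:.1f} TFLOPS")
print(f"FP16 matrix: {fp16_matrix:.1f} TFLOPS")
```

With these assumptions the estimate lands on 23.1 TFLOPS FP32, 11.5 TFLOPS FP64, and about 185 TFLOPS FP16 matrix, matching the quoted figures.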

AMD has equipped the card with 32GB of HBM2 memory capable of up to 1.23TB/sec of memory bandwidth. That is huge, although it falls short of the 1.555TB/sec offered by NVIDIA's 40GB A100. In systems with 4 or 8 GPUs, the cards are linked by the new Infinity Fabric x16 interconnect with 276GB/sec of bandwidth.
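The 1.23TB/sec figure follows from the bus width and memory clock. This is a minimal sketch assuming four HBM2 stacks with a 1024-bit interface each (a 4096-bit bus, not stated in the article) and double-data-rate signalling, so the 1.2GHz memory clock AMD quotes gives 2.4Gbit/s per pin.

```python
# Peak HBM2 bandwidth estimate for the Instinct MI100.
# ASSUMPTIONS: 4 HBM2 stacks x 1024 bits each, double data rate at the
# 1.2 GHz memory clock quoted by AMD.

HBM2_STACKS = 4
BITS_PER_STACK = 1024
MEMORY_CLOCK_HZ = 1.2e9
TRANSFERS_PER_CLOCK = 2  # DDR: data on both clock edges

bus_width_bits = HBM2_STACKS * BITS_PER_STACK
bytes_per_second = bus_width_bits * MEMORY_CLOCK_HZ * TRANSFERS_PER_CLOCK / 8

print(f"Bus width: {bus_width_bits}-bit")
print(f"Peak bandwidth: {bytes_per_second / 1e12:.2f} TB/s")
```

Under these assumptions the result is 1.2288TB/sec, which AMD rounds to 1.23TB/sec.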

- All-New AMD CDNA Architecture - Engineered to power AMD GPUs for the exascale era and at the heart of the MI100 accelerator, the AMD CDNA architecture offers exceptional performance and power efficiency.

- Leading FP64 and FP32 Performance for HPC Workloads - Delivers industry-leading 11.5 TFLOPS peak FP64 performance and 23.1 TFLOPS peak FP32 performance, enabling scientists and researchers across the globe to accelerate discoveries in industries including life sciences, energy, finance, academics, government, defense, and more.

- All-New Matrix Core Technology for HPC and AI - Supercharged performance for a full range of single and mixed-precision matrix operations, such as FP32, FP16, bFloat16, Int8, and Int4, engineered to boost the convergence of HPC and AI.

- 2nd Gen AMD Infinity Fabric Technology - Instinct MI100 provides ~2x the peer-to-peer (P2P) peak I/O bandwidth over PCIe 4.0 with up to 340 GB/s of aggregate bandwidth per card with three AMD Infinity Fabric Links. In a server, MI100 GPUs can be configured with up to two fully-connected quad GPU hives, each providing up to 552 GB/s of P2P I/O bandwidth for fast data sharing.

- Ultra-Fast HBM2 Memory - Features 32GB of high-bandwidth HBM2 memory at a clock rate of 1.2 GHz and delivers an ultra-high 1.23 TB/s of memory bandwidth to support large data sets and help eliminate bottlenecks in moving data in and out of memory.

- Support for Industry's Latest PCIe Gen 4.0 - Designed with the latest PCIe Gen 4.0 technology support providing up to 64GB/s peak theoretical transport data bandwidth from CPU to GPU.
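The interconnect figures above fit together neatly. The sketch below is our own reconciliation, not an AMD breakdown. It assumes the 276GB/sec of Infinity Fabric bandwidth is split evenly across the three links (92GB/sec each), that the 340GB/s per-card aggregate is those links plus the 64GB/s PCIe 4.0 link, and that a "fully-connected quad GPU hive" has one link per GPU pair.

```python
from math import comb

# Figures quoted in the article.
IF_TOTAL_GBPS = 276      # Infinity Fabric bandwidth per card
IF_LINKS_PER_CARD = 3    # three AMD Infinity Fabric Links per card
PCIE4_GBPS = 64          # PCIe 4.0 CPU-to-GPU peak
GPUS_PER_HIVE = 4

# ASSUMPTION: bandwidth is split evenly across the three links.
per_link = IF_TOTAL_GBPS / IF_LINKS_PER_CARD

# ASSUMPTION: per-card aggregate = all Infinity Fabric links + PCIe 4.0.
aggregate_per_card = IF_TOTAL_GBPS + PCIE4_GBPS

# ASSUMPTION: fully connected means one link per GPU pair: C(4, 2) = 6 links,
# which uses exactly three links per GPU.
hive_links = comb(GPUS_PER_HIVE, 2)
hive_p2p = hive_links * per_link

print(f"Per link: {per_link:.0f} GB/s")
print(f"Aggregate per card: {aggregate_per_card} GB/s")
print(f"Hive: {hive_links} links, {hive_p2p:.0f} GB/s P2P")
```

If these assumptions hold, the arithmetic reproduces both the 340GB/s per-card and 552GB/s per-hive figures.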

AMD will have the new Instinct MI100 accelerator available in fully-qualified OEM systems from the likes of Dell, GIGABYTE, HPE, and Lenovo by the end of 2020.