Introduction

Talk about the GTX 400 series over the last few months has been insane; there has never been so much interest in a single video card. For the most part, though, that interest was stirred up by the massive delays. A card that was supposed to launch in November is instead launching today, in the final days of March.

While we've got a review of the GTX 470 online, we don't have one of the GTX 480. That doesn't stop us from telling you the specifications of that model, though. What we'll do is give a quick rundown of the main specifications of both models and then talk about some of the features that NVIDIA offer in the latest series.

I also asked NVIDIA a couple of questions, which they've been kind enough to answer, and we'll look at those too.

In this article you should be able to find all the important pieces of information related to the GTX 400 series, and at the same time see how we squash some of the rumors that have been circulating about it for the past few months.

The GTX 470

Priced at $349, NVIDIA says that this card sits directly in between the HD 5850 and HD 5870. In a sense, it has nothing to compete against, since it's more expensive than one but cheaper than the other. If you had to put something directly against it from a price point of view, you would use something like an HD 5850 OC, which carries a higher price tag than the standard HD 5850.

On the processing side of things, the three big numbers for the card are the CUDA Cores, Texture Units and ROP Units. In that order, the GTX 470 carries 448, 56 and 40, all on a 40nm core.

On the clock front, the core comes in at 607MHz and the CUDA cores (the Shader Clock, as it's sometimes known) come in at 1215MHz. The card carries a 320-bit memory interface, which equates to the odd-looking 1280MB memory amount. NVIDIA has of course opted to use GDDR5 on its new high-end model, which comes in at 837MHz or 3348MHz QDR.
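The link between bus width, memory amount and the QDR figure is simple arithmetic, and a minimal Python sketch makes it easy to check. The 32-bit channel per memory chip and the 128MB chip size are assumptions on our part rather than figures from NVIDIA, and the helper name `memory_summary` is purely illustrative.

```python
# Assumptions (not from NVIDIA's spec sheet): one GDDR5 chip per 32-bit
# channel, 128 MB per chip, and "QDR" meaning four transfers per clock.
CHANNEL_WIDTH_BITS = 32
CHIP_CAPACITY_MB = 128
TRANSFERS_PER_CLOCK = 4


def memory_summary(bus_width_bits, memory_clock_mhz):
    """Derive chip count, capacity, effective rate and peak bandwidth."""
    chips = bus_width_bits // CHANNEL_WIDTH_BITS
    capacity_mb = chips * CHIP_CAPACITY_MB
    effective_mhz = memory_clock_mhz * TRANSFERS_PER_CLOCK
    # Peak bandwidth: transfers per second times bytes moved per transfer.
    bandwidth_gb_s = effective_mhz * 1e6 * (bus_width_bits / 8) / 1e9
    return {
        "chips": chips,
        "capacity_mb": capacity_mb,
        "effective_mhz": effective_mhz,
        "bandwidth_gb_s": bandwidth_gb_s,
    }


# GTX 470 figures from the text: 320-bit bus, 837MHz memory clock.
gtx470 = memory_summary(bus_width_bits=320, memory_clock_mhz=837)
print(gtx470)
```

Under those assumptions a 320-bit bus means ten memory chips, which is where the unusual 1280MB comes from, and the 3348MHz effective rate works out to a little under 134GB/s of peak bandwidth.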

To power the card, two 6-Pin PCI-E connectors are needed. NVIDIA put the Max Board Power (TDP) at 215 Watt and recommend at least a 550 Watt power supply for the model.

As for connectors, NVIDIA have opted for a slightly different configuration to not only what we've seen from them in the past, but also ATI. DisplayPort isn't present, which doesn't come as much of a surprise. What we do have are two Dual-Link DVI connectors and a single mini-HDMI port. Since we don't have a retail version of the card yet, we're not sure if companies will include a mini-HDMI to HDMI adapter, but let's hope so.

NVIDIA say that the GTX 470 is "A New Leader in Price/Performance". Time will tell if this holds true.

The GTX 480

Priced at $499, this is the new bad boy of video cards from NVIDIA. NVIDIA say this doesn't compete against the HD 5870; it's the fastest video card on the market, in a class of its own. Of course, you get the feeling from the really fine print that NVIDIA are only talking about the single GPU market.

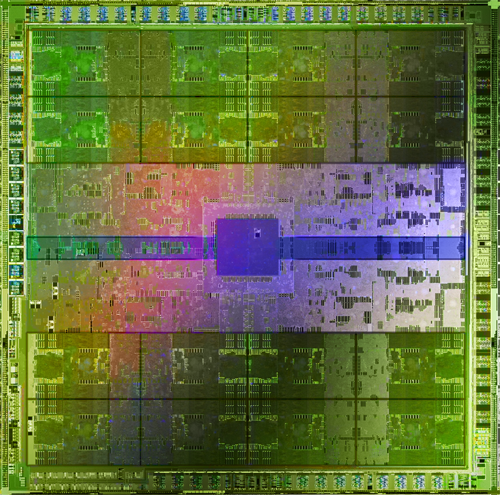

On the processing side of things there are again the three big numbers: CUDA Cores, Texture Units and ROP Units. In the same order, the card carries a 480, 60 and 48 setup. And again, this is all done on a 40nm core.

In the clock department the core comes in at 700MHz and the Shader Clock at 1401MHz. The GTX 480 like the GTX 470 carries with it GDDR5 memory, but instead of a 320-bit bus it has been widened here to 384-bit, resulting in 1536MB of memory coming in at 924MHz or 3696MHz QDR.

While the GTX 480 also requires two power connectors, instead of a dual 6-Pin configuration this model opts for a single 6-Pin and a single 8-Pin connector. NVIDIA puts the Max Board Power (TDP) at 250 Watt and recommends at least a 600 Watt power supply for the model.
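For a rough sense of what those connector choices mean, the sketch below adds up the power each card can draw within PCI Express limits and compares it with NVIDIA's TDP. The 75W slot, 75W 6-Pin and 150W 8-Pin figures come from the PCI Express specification rather than from NVIDIA, and `power_headroom` is a hypothetical helper name.

```python
# Per-source limits from the PCI Express specification (not from NVIDIA).
PCIE_SLOT_W = 75
CONNECTOR_W = {"6-pin": 75, "8-pin": 150}


def power_headroom(connectors, tdp_w):
    """Return (power available in watts, watts of margin above TDP)."""
    available = PCIE_SLOT_W + sum(CONNECTOR_W[c] for c in connectors)
    return available, available - tdp_w


# Connector layouts and TDP figures as quoted in the text.
cards = {
    "GTX 470": (["6-pin", "6-pin"], 215),
    "GTX 480": (["6-pin", "8-pin"], 250),
}

for name, (connectors, tdp) in cards.items():
    available, headroom = power_headroom(connectors, tdp)
    print(f"{name}: {available}W available, {headroom}W above TDP")
```

On these numbers, the dual 6-Pin layout of the GTX 470 leaves only 10W of margin over its 215 Watt TDP, while the 6-Pin plus 8-Pin layout of the GTX 480 leaves 50W over its 250 Watt TDP.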

Connectivity on both GTX 400 cards is the same, which means here we again see two Dual-Link DVI connectors and a single mini-HDMI port.

NVIDIA say the GTX 480 is "The World's Fastest Gaming GPU". I think that because they say GPU and not video card, it covers them against comparisons with the HD 5970, which is essentially just an HD 5870 x2 and in turn not a single GPU.

The 512 CUDA Core Story

This was a big story when it broke: the news that NVIDIA had opted to use 480 CUDA cores on the GTX 480 instead of 512. First, let's just talk about the model name. I asked NVIDIA if the model name had anything to do with the number of CUDA cores it had (GTX 480 = 480 CUDA Cores) and they came back and said "no" in a word.

In a longer response, they said:

"No, the name of the GeForce GTX 480 was 480, just for the sake of consistency of our nomenclature. Remember, the first product using our last generation architecture was the GeForce GTX 280. So it really didn't have anything to do with the number of available CUDA cores in the product."

The thing is, word of it being called the GTX 300 series was only ever just a rumor, and unless they were going to call the GTX 470 the GTX 448, it would be silly to name one model after its CUDA core count and not the other.

The GTX 480 was called the GTX 480 because it had 480 CUDA Cores? - False

So, the next question was: did NVIDIA drop down from 512 CUDA cores to 480 to increase yield rates? - The answer for that one is ultimately "yes" if you read between the lines.

"For the GTX 480, we decided to productize 480 cores in order to support the broadest availability at the time of initial launch. Based on these specs, we expect good availability to meet pent up demand."

No, they're not saying that the card was decreased from 512; instead they're saying that 480 was the number they decided on. When asked about the 512 CUDA Cores being shown in the whitepaper and in slides, NVIDIA responded by saying "That's what FERMI is capable of."

To be honest, it's a bit of a pain in the ass answer. If it's capable of that, then why aren't we using it? - It's clear they need to readjust the CUDA cores to bring numbers up to offer what they are considering good availability.

The GTX 480 was trimmed to 480 CUDA Cores from 512 to increase yield rates aka supply? - Yes and No. Officially there was no final specification, NVIDIA say, so moving from 512 to 480 didn't really happen. Instead they just opted to have 480 in the final specification to make sure supply was available.
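To put the 512 versus 480 numbers in context, here's a small sketch of how the core counts break down. It assumes the layout described in NVIDIA's Fermi whitepaper, with 32 CUDA cores and four texture units per streaming multiprocessor (SM) and 16 SMs on a full chip; those per-SM figures are our assumption here, not something NVIDIA gave us in its answers, and `sm_breakdown` is an illustrative name.

```python
# Assumed Fermi/GF100 layout (from the whitepaper, not NVIDIA's answers):
CORES_PER_SM = 32
TEXTURE_UNITS_PER_SM = 4
FULL_CHIP_SMS = 16


def sm_breakdown(cuda_cores):
    """Work out how many SMs are active for a given CUDA core count."""
    active_sms, remainder = divmod(cuda_cores, CORES_PER_SM)
    if remainder:
        raise ValueError("core count is not a whole number of SMs")
    return {
        "active_sms": active_sms,
        "disabled_sms": FULL_CHIP_SMS - active_sms,
        "texture_units": active_sms * TEXTURE_UNITS_PER_SM,
    }


for name, cores in [("Full Fermi", 512), ("GTX 480", 480), ("GTX 470", 448)]:
    print(name, sm_breakdown(cores))
```

Under those assumptions the GTX 480 is a full chip with one SM switched off and the GTX 470 has two switched off, and the texture unit counts come out at the 60 and 56 listed in the specifications.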

The Yield Rate Story

Poor yield rates for the GTX 400 series are what sparked the delay story. NVIDIA have never really come out and said anything about the yield rates. When I participated in a conference call with them recently, someone asked how the yield rates were.

Now, I thought that I heard them say the yield rates were at an acceptable level, so I asked: since they were now at an acceptable level, does that mean at one point they weren't?

The response is quite interesting.

"I said the yield rates are in-line with our expectations for 40nm. Not that the yield rates weren't acceptable, just that TSMC 40nm fab process has been improved over the last year, so we don't expect supply issues at launch. We expect to be in a far better position with our enthusiast class products than what our competitor experienced with 40nm in the fall."

It's interesting to see NVIDIA clarify that they didn't say the yield rates were acceptable, but instead just in line with their expectations. They also say that the process has improved over the last year and that they expect to be in a far better position at launch than their competitor (ATI) was.

Is yield rate a reason for the delay? - It would really seem that way. The good news is that it doesn't seem to be an issue now, which is what really matters. It's clear that the building phase didn't go as smoothly for NVIDIA as they had hoped, nor was it at a level that they would have considered acceptable.

The Launch Supply Story

First we heard 5,000 pieces at launch; then we heard 50,000 pieces. So what is it? The first? The latter? In between? Even more? Even less?

"Sorry, this information is confidential to NVIDIA."

Well, that doesn't come as much of a surprise; no company is really going to give out its quantities, and unless you know how much every partner is getting, it would be hard to know exactly how much stock is going to be on the market when the card hits.

There's going to be 5k or 50k pieces at launch of GTX 400 series? - We'll probably never really know. Ultimately all we'll know come launch and a few weeks after it is if launch supply is good or not.

I also asked about the rumor that NVIDIA hope to pass the combined sales of Cypress and Hemlock (HD 5800 and HD 5900) by the end of summer and, if so, which market would pick the product up.

"Umm... Doesn't every company hope their products sell more than their competitors? ;) As for which crowd is more likely to be buying the GTX 400 series products, like all of our enthusiast level products, the most common audience who look to buy them are gamers, hardware enthusiasts and also enthusiasts of visual computing (think video/image editing, etc.)."

It comes as no surprise that NVIDIA hope to outsell ATI, but if they have actually set a target date, we're not sure.

NVIDIA want to have sold more FERMI than Cypress and Hemlock combined? - Yes.

By the end of summer? - Well, sooner rather than later would be better, but there doesn't seem to be a time frame on this.

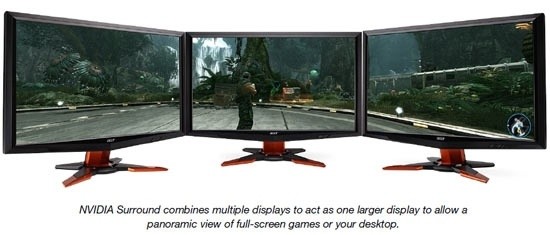

The Surround Gaming Story

Surround Gaming is to NVIDIA as Eyefinity is to ATI. There are clear flaws with Surround Gaming, though. They'll bother some people but not others. I wrote a while ago on my Blog that I think NVIDIA will win the Multi Screen Technology battle because they'll be more aggressive with the marketing. In saying that, though, with the implementation of the technology in the GTX 400 series not being perfect, ATI still do have a leg up.

The first thing I want to get answered is:

Will Surround Gaming support three 2560 x 1600 screens at native resolution? - Yes

I was actually the one to come out and say that it didn't look like Surround Gaming would support monitors above 1920 x 1080. It's clear now that the technology will. It seems that when NVIDIA announced Surround Gaming support on the GF100 page at the NVIDIA site, the whole focus was on the 3D side of things, and at the moment there are no 120Hz screens above 1920 x 1080.
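For a sense of how much more work three 2560 x 1600 screens are than three 1920 x 1080 ones, a quick pixel count helps. This is plain arithmetic rather than an NVIDIA figure, and `surround_pixels` is just an illustrative name.

```python
def surround_pixels(width, height, screens=3):
    """Total pixels rendered per frame across a multi-screen setup."""
    return width * height * screens


full_hd = surround_pixels(1920, 1080)
wqxga = surround_pixels(2560, 1600)

print(f"3 x 1920x1080: {full_hd:,} pixels")
print(f"3 x 2560x1600: {wqxga:,} pixels ({wqxga / full_hd:.2f}x)")
```

That's roughly 12.3 million pixels per frame against 6.2 million, so close to double the rendering load before any 3D is involved.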

You'll need two video cards to run Surround Gaming? - Yes

Just as ATI can't run two DVI connected monitors and an HDMI one from a single card, NVIDIA can't do this either. What this means is that you'll need two video cards, offering four Dual-Link DVI ports between them.

So I don't need a DisplayPort monitor for Surround Gaming? - Correct

And this is what makes it a bit bittersweet. If you're running three monitors already and you get two NVIDIA cards, you'll be able to run all three via Surround Gaming without an expensive adapter.

So, the good news is that you don't have to buy an expensive adapter or a DisplayPort enabled monitor.

The bad news is that you'll need to buy two graphics cards which means a bigger PSU, a motherboard that supports SLI and a few other things.

The bad news might not be an issue for a lot of people; at the end of the day, the adapter needed to get a third DVI monitor working on an ATI based graphics card won't boost performance, unlike a second video card. Of course, the video card will cost more than the adapter. Like I said: a bit bittersweet.

The Reviewers Guide Story

We've all joked that the NVIDIA Reviewers Guide would tell reviewers to test nothing but Unigine Heaven to take advantage of the Tessellation features on offer from the GTX 400 series cards.

Well, it doesn't. The reviewers guide is very similar to ones in the past. Let me give you a quick rundown of what's inside.

Starting from the top, we have the FTP Information and Embargo Date (NDA). Moving down, we've got an Introduction to the GTX 400 series, a rundown on the GTX 480 and then the GTX 470. We continue down to a blurb about Cinematic Image Quality, Revolutionary Compute Architecture for Gaming and some information about 3D Vision Surround.

Once all that's out of the way, NVIDIA then give us an idea on some of the games we should test, what settings they recommend and some notes for particular games. NVIDIA didn't say "We'll only send the model if you test this way and that way" or anything like that.

The information here is really no different to what we get from ATI. To be honest, a number of games in the list we already test anyway, so it didn't really impact us heavily.

Once all that information is out of the way, we have a table with the GTX 480 and GTX 470 side by side showing us the different specifications, which we covered in the GTX 470 and GTX 480 sections.

We've then got another table which gives us a bunch of results NVIDIA have received from the GTX 480, GTX 470 and GTX 480 in SLI against a number of high end ATI cards. Really, though, we take this information with a grain of salt and since we do our own testing, it's not relevant to us anyway.

The reviewers guide finishes off with a bunch of contact information for NVIDIA staff in each region, and that's about it.

There's nothing sinister in the Reviewers Guide nor did NVIDIA tell us that we could only get a sample if we followed a certain method of testing.

The Fancy Tech

NVIDIA cards offer a number of fancy technologies which some people love and others hate; some people want them, others don't care. Whatever your position on the technology is, it's something that has to be taken into consideration when comparing the cards to ATI ones, which don't offer it.

- CUDA

CUDA has really been kicked up a notch with the latest generation of video cards from NVIDIA. Now, I could give you a copy and paste of information from the Fermi and GF100 Whitepaper, but it'll probably go straight over your head, as it does mine. You should see these documents show up online soon enough anyway.

The important thing to know about CUDA is that it's faster than it was on the last generation of cards. Now, while it's not a technology everyone will use, it's certainly handy for a number of people.

If you're right into your folding@home, the extra grunt on offer is going to help not only increase performance over previous generation cards, but also continue to demolish CPUs doing the same job.

Ripping movies to your iPhone or making them compatible with your Xbox 360 with BADABOOM is also going to get a speed boost. While we did run into some problems here running BADABOOM, we're sure that it's only a patch away from being fixed.

These are just a few of the CUDA enabled programs; there's plenty out there and as time goes on more and more developers are making use of the power that is available from GPGPU computing.

While the technology can be used by everyone, not everyone is going to need it. It doesn't make it any less important of a feature, though.

- Ray Tracing

Keeping up with what's happening with video cards is a full time job. Within video cards there's so much more to know and really, it's impossible to keep up with all of the internal tech that games don't make use of, while keeping up with the stuff that does make a difference and also knowing the whole top to bottom line-up of models from companies.

This is the excuse I'm using for my lack of understanding of Ray Tracing. When NVIDIA was asked the other day if Ray Tracing could be used in games, there was a bit of a "Yeah, it could, but it probably never will" kind of response.

Ray Tracing is another technology, much like CUDA, in the sense that you either really want it or you just don't need it. Looking through a list of software that supports Ray Tracing, you see names like 3DS Max, LightWave 3D and Bryce, just to name a few.

If you use programs like this the chances are you'll have a better understanding of Ray Tracing and know if it's something you need or not. Either way, for NVIDIA it's another selling point for the company in a line-up of some pretty fancy technology.

The Fancy Tech - Continued

- PhysX

PhysX continues to be a bit of a mixed bag amongst gamers. Gamers love more eye candy and that is essentially what PhysX does. The problem with PhysX is its limitation to NVIDIA-only cards. Sure, NVIDIA paid for the technology when they bought out AGEIA and it's their right to offer it exclusively on their cards. The problem is this seems to have affected the uptake of the technology. Many companies aren't keen to jump on the technology for this reason, it seems.

With that said, PhysX is still available in a number of games and, so NVIDIA say, it's faster than ever on the GTX 400 series. It's again like CUDA; some people are going to love it and want it, while others aren't going to care about the technology and simply enjoy the games they have and the way they currently play.

- Antialiasing

I know what you're thinking; ATI cards offer AA as well. However, NVIDIA have implemented three new AA technologies with the GF100:

- 32x Coverage Sampling Antialiasing (CSAA)

- Alpha to Coverage from Coverage Samples

- Improved Transparency Supersampling

NVIDIA say that of the three, the third one shows the most distinct improvement in image quality.

AA continues to be a hot topic, though. Some feel it's not necessary with the higher resolution of monitors these days; others can't play a game without it. I find that the performance hit from enabling AA is too great to warrant using it, and when really moving around fast, I don't even notice the "jaggies". Each to their own, though, and AA will no doubt continue to be a hot topic in the future.

Final Thoughts

When it comes to reviewing and scoring the GTX 400 series, the performance numbers in games only tell part of the story. How much of the story they tell really depends on you, the user, though. If gaming is all you care about and PhysX and 32x CSAA don't interest you, then you really just want to see how the new models compare against the ATI ones.

If PhysX and the new AA features are something that interests you, though, you may say: OK, while the card is a tad slower in this area, I can run it with PhysX, which for me is the biggest selling point.

The same goes for CUDA, a technology that ATI don't offer. If folding@home or BADABOOM is your thing and extremely important to you, ATI cards might not even be a factor; the same goes for Ray Tracing.

At the same time, we've been able to give you a bit more insight into the rumors that have been floating around over the past six months, and to debunk some of them.

The GTX 400 series no doubt offers a lot of bells and whistles. The question you need to ask yourself, though, is do you want or need them?

It's easy to get excited about what the GTX 400 series can do for us. You simply have to decide if these are important features for you. Over the coming weeks and months we'll no doubt be looking at a number of GTX 400 variations from companies, and let's hope that the model can really shine, if for no other reason than to make sure competition stays strong in the market.
