Introduction

The HDD has been a work in progress since its inception in 1956. Its evolution has been remarkable in many respects, with the same basic spinning-platter design continually refined to deliver more storage capacity and greater efficiency. Shrinking the size of the HDD has been a principal goal, and we have gone from massive drives weighing hundreds of pounds to devices in 2.5" and 3.5" form factors.

The evolution of the HDD has focused on increases in areal density, and a whole slew of technologies has managed to pack more data onto the platter. SMR, along with helium-filled drives, has made its way to the forefront of the density war. The near-term future might hold TDMR, while the longer-term HAMR and MAMR technologies will likely provide tremendous increases in density.

There is no doubt that density has a clear path forward due to intense focus from manufacturers, but the real problem lies on the performance front. The spindle speed of the enterprise HDD has settled at a top speed of 15,000 RPM, and there are no plans to increase it. This stagnation leaves only incremental speed increases, borne of increased density, on the horizon. SSDs, on the other hand, have brought massive increases in speed and efficiency. Even the fastest HDDs cannot hold a candle to the sheer speed of an enterprise SSD. This has led to a continuing assault on the performance segment of the enterprise HDD market, with SSDs gaining market share at a rapid pace.

Even as the evolution of the SSD leads to lower prices, SSDs are still far more expensive per gigabyte to deploy than HDDs. For years, the primary method to increase HDD performance was short-stroking the drive. This sacrifices a large portion of available capacity to force an HDD to operate on the outer tracks, where speed is highest, which in turn increases the price of the storage solution considerably.

Seagate's answer to the performance problem is the SSHD (Solid State Hybrid Drive). SSHDs cost only a fraction of what an SSD costs, yet provide some of the same workload acceleration benefits by storing hot data in a NAND cache. Seagate's first foray into caching HDDs was in the client market, and the lessons learned there were applied to the new Enterprise Turbo SSHD.

The Seagate Enterprise Turbo SSHD is geared for use in a multitude of mission-critical environments, such as OLTP, VDI and SAP HANA. The drive comes in capacities of 300GB, 450GB and 600GB in a 2.5" form factor with a 15mm z-height. The Turbo SSHD features a 6Gb/s SAS connection and 128MB of multi-segmented DRAM cache, though this is only the first level of caching.

The Turbo SSHD is built upon the same 15,000 RPM platform used in the Enterprise Performance 15K v4 HDDs, and sports the same design with three platters and six heads. The truly ingenious aspect of the drive lies in the NAND caching, handled by 32GB of eMLC and an additional 8MB of NVC (Non-Volatile Cache). Hot data is held in the NAND and served to the host system at a much faster rate than the platters can provide, in many cases yielding up to a threefold improvement in application performance. The drive itself delivers up to 800 IOPS, an SDR (Sustained Data Rate) of 247 MB/s, and an average latency of 2.0ms.
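The 2.0ms average latency figure lines up with the rotational latency of a 15,000 RPM spindle: on average, the head waits half a revolution for the target sector to arrive. A minimal sketch of that arithmetic follows; it covers rotation only (seek time is not included), and the function name is ours.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average rotational latency: half of one revolution, in milliseconds."""
    seconds_per_rev = 60.0 / rpm
    return 0.5 * seconds_per_rev * 1000.0


for rpm in (7_200, 10_000, 15_000):
    # prints 4.17 ms, 3.00 ms and 2.00 ms respectively
    print(f"{rpm:>6} RPM -> {avg_rotational_latency_ms(rpm):.2f} ms")
```

This also shows why spindle speed caps HDD random performance: halving rotational latency would require doubling RPM.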

The hardest part of testing an SSHD is quantifying the massive speed increases delivered by the cache. We have developed specialized tests that isolate and explore the performance of the NAND components, but first, we examine how an SSHD operates.

Update: Unless otherwise noted, performance results for the Turbo SSHD were obtained over 33% of the LBA range to highlight caching performance.

Seagate Turbo SSHD Architecture and Specifications

Seagate Turbo SSHD Architecture

The enterprise space has seen numerous caching and tiering approaches spring up to help marry the performance of SSDs with the capacity of HDDs. Unfortunately, these approaches typically involve the purchase of expensive software and/or hardware appliances, which add the ongoing cost of administering the caching/tiering solution.

Seagate's SSHD cache management is entirely transparent to the user. Seagate's proprietary AMT (Adaptive Memory Technology) algorithms intelligently identify hot data at the block level, assess it, and act accordingly. Caching at the block level, as opposed to caching files, is important: in many cases, the same data blocks can accelerate several applications simultaneously.
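AMT itself is proprietary, so the following is only a toy illustration of block-level hot-data identification, not Seagate's algorithm. It assumes a simple policy of our own choosing: count reads per logical block address (LBA), skip reads that continue a sequential run (mirroring the drive's stated avoidance of sequential data), promote a block after a hypothetical `promote_after` threshold, and evict the least recently used block when the cache is full. AMT reportedly promotes based on access times from the platter; read counts are a substitute used here for simplicity.

```python
from collections import Counter, OrderedDict


class HotBlockCache:
    """Toy block-level read cache (hypothetical policy, NOT Seagate's AMT)."""

    def __init__(self, capacity_blocks: int, promote_after: int = 2):
        self.capacity = capacity_blocks
        self.promote_after = promote_after   # reads needed before promotion
        self.hits = Counter()                # per-block read counts (unbounded in this toy)
        self.cached = OrderedDict()          # LBA -> None, kept in LRU order
        self.last_lba = None

    def read(self, lba: int) -> bool:
        """Record a read of one block; return True if it was served from cache."""
        sequential = self.last_lba is not None and lba == self.last_lba + 1
        self.last_lba = lba
        if lba in self.cached:
            self.cached.move_to_end(lba)     # refresh LRU position
            return True
        if sequential:
            return False                     # leave streaming reads to the platters
        self.hits[lba] += 1
        if self.hits[lba] >= self.promote_after:
            self._promote(lba)
        return False

    def _promote(self, lba: int) -> None:
        if len(self.cached) >= self.capacity:
            self.cached.popitem(last=False)  # evict least recently used block
        self.cached[lba] = None
        del self.hits[lba]


cache = HotBlockCache(capacity_blocks=2)
print([cache.read(lba) for lba in (10, 500, 10, 10)])  # [False, False, False, True]
```

Because the cache tracks blocks rather than files, any application that touches block 10 benefits once it is promoted.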

Hot data is placed into the NAND cache, with a copy retained on the platters to ensure data integrity in the event of NAND failure. The AMT algorithm is adaptive; it constantly studies changing hot data patterns, then evicts stale data and promotes new hot data to the cache based upon access times from the platter. Data promotion is handled judiciously to reduce undue wear on the NAND and ensure a long lifecycle. The drive avoids caching sequential data, which plays to the strength of the HDD, and caches only the hardest-to-read random data, which further reduces wear on the NAND. Writing random data to the cache sequentially also helps extend the longevity of the NAND. The use of eMLC rated for 15K P/E cycles, in lieu of standard consumer-grade NAND with 3K P/E cycles, also ensures plenty of endurance over the warranty period.
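To put the P/E ratings in perspective, a rough upper bound on total NAND writes is capacity multiplied by rated cycles. The sketch below assumes the 32GB cache figure is the entire NAND pool (the raw NAND may be larger, and any over-provisioning is not disclosed) and ignores write amplification, so real-world endurance would be lower than these numbers.

```python
def raw_write_budget_tb(capacity_gb: float, pe_cycles: int) -> float:
    """Total NAND writes (TB) if every cell is cycled to its rating.

    Ignores write amplification, over-provisioning and wear-leveling overhead,
    so real-world endurance is lower than this figure.
    """
    return capacity_gb * pe_cycles / 1000.0


emlc = raw_write_budget_tb(32, 15_000)   # eMLC rating cited in the review
mlc = raw_write_budget_tb(32, 3_000)     # consumer-grade MLC for comparison
per_day_gb = emlc * 1000 / (5 * 365)     # spread over a five-year warranty
# prints: eMLC: 480 TB, MLC: 96 TB, ~263 GB/day over 5 years
print(f"eMLC: {emlc:.0f} TB, MLC: {mlc:.0f} TB, ~{per_day_gb:.0f} GB/day over 5 years")
```

The fivefold gap between the two ratings is why caching only random reads, and writing them sequentially, matters less for eMLC than it would for consumer NAND, though it still stretches the budget further.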

Caching random read data has numerous other positive side effects. Eliminating much of the head movement during intense mixed read/write workloads boosts random write performance and reduces sequential access latency. One would also assume that caching leads to less wear and tear on the heads and actuators, though Seagate rates the drive with standard AFR and MTBF specifications.

The NAND cache of the SSHD is also multi-segmented, with a small 8MB layer of SLC designated as NVC (Non-Volatile Cache). While the cached data in the eMLC is lost when the SSHD is powered down (not a concern with 24/7 duty cycles in the enterprise), the data in the SLC layer is retained for up to 90 days when powered down. The NVC layer is also used to save data held in DRAM in the event of an unsafe power loss, creating an extra layer of power-loss protection not provided by standard HDDs.

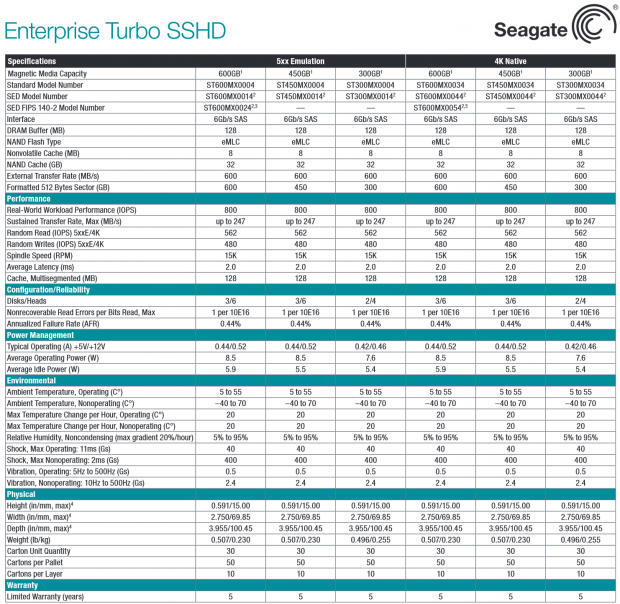

Seagate Turbo SSHD Specifications

The SSHD uses conventional perpendicular recording technology and comes in several versions, with both 4K native and 5xx emulation. There is further segmentation depending upon the level of encryption required: Standard, Secure Encryption, and FIPS 140-2 encryption models are available.

Cache comes in three tiers, with 128MB of multi-segmented DRAM, 32GB of eMLC and 8MB of NVC on every model. Power consumption is unchanged at 8.5 Watts during typical operation, and 5.9 Watts at Idle.

The Turbo SSHD features a nonrecoverable read error rate of 1 per 10^16 bits read and an AFR of 0.44%. The drive also carries an enterprise-standard five-year limited warranty.
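The AFR and the two-million-hour MTBF cited in the conclusion are two views of the same reliability figure. A minimal sketch, assuming the common exponential (constant failure rate) model and 8,760 powered-on hours per year, shows that 2,000,000 hours works out to roughly the quoted 0.44% AFR. The second function estimates how many nonrecoverable read errors to expect from reading the full 600GB once at 1 error per 10^16 bits; both function names are ours.

```python
import math


def afr_from_mtbf(mtbf_hours: float, hours_per_year: float = 8760.0) -> float:
    """Annualized failure rate under an exponential (constant-rate) failure model."""
    return 1.0 - math.exp(-hours_per_year / mtbf_hours)


def expected_read_errors(bytes_read: float, bits_per_error: float = 1e16) -> float:
    """Expected nonrecoverable read errors for a given volume of data read."""
    return bytes_read * 8 / bits_per_error


print(f"AFR at 2,000,000 h MTBF: {afr_from_mtbf(2_000_000):.2%}")       # 0.44%
print(f"Errors per full read of 600 GB: {expected_read_errors(600e9):.1e}")  # 4.8e-04
```

In other words, a single full-drive read has well under a 0.1% chance of encountering an unrecoverable sector under this rating.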

Seagate Turbo SSHD Internals

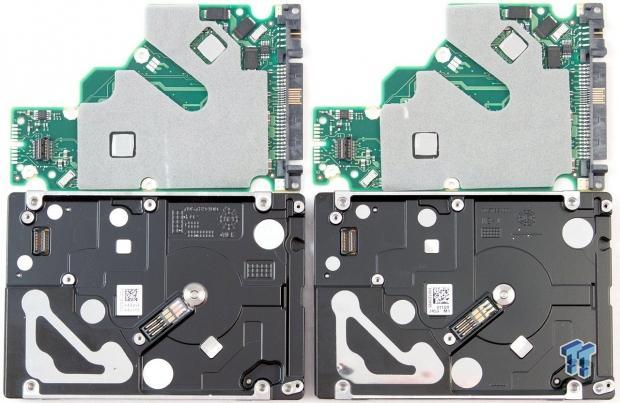

The Turbo SSHD is based upon the same platform as the Enterprise Performance 15K v4 HDD, pictured here with the SSHD.

When placed next to each other, the drives and their PCBs are virtually identical. For the record, the drive on the right is the SSHD; it would be nearly impossible to tell from casual observation.

Both drives feature the same thermal pads over the drive and motor controllers. These allow the drives to shed heat into the case for effective thermal management. The SSHD will also alert the system via SMART when the drive exceeds the acceptable temperature range.

Both drives also have foam padding to reduce vibration. Virtually the only visible difference between the two PCBs is the inclusion of a Samsung NAND package on the SSHD PCB to the right.

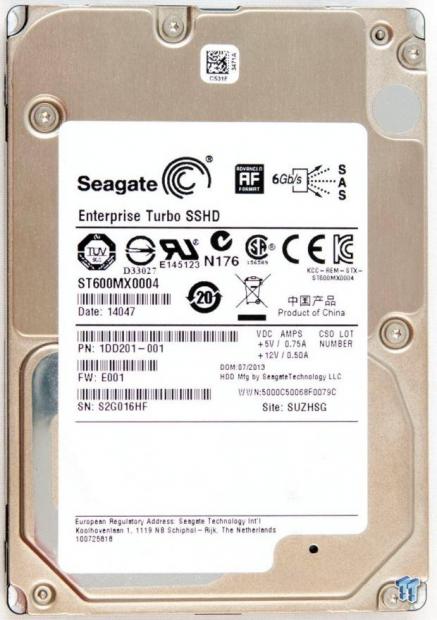

The Turbo SSHD comes in the typical 2.5" form factor with a 15mm z-height. The branding on the top of the drive indicates that our sample uses the 4K Advanced Format. The drive is also available with 5xx emulation.

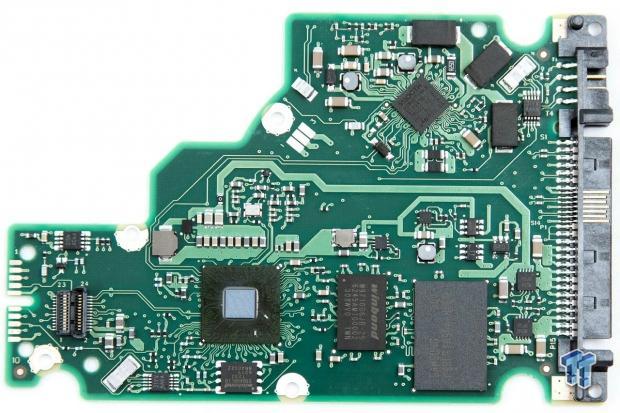

A closer examination reveals a single BGA-mounted Samsung eMLC 21nm NAND package. A Winbond 128MB multi-segmented cache chip provides the DRAM cache layer, and a SMOOTH drive controller handles the spindle motor.

The SSHD's controller is a Marvell I 1063-B0, the same one used on the Enterprise Performance 15K v4 HDD. Surprisingly, there is no separate NAND controller on the SSHD; NAND management is handled by Seagate's firmware on the Marvell controller. Pulling enough computational power from the low-wattage controller to detect and manage the hot data, while simultaneously handling the drive and NAND controller functions, is simply amazing.

The Turbo SSHD features a 6Gb/s dual-port SAS connection, which provides the enhanced functionality of the SCSI command set.

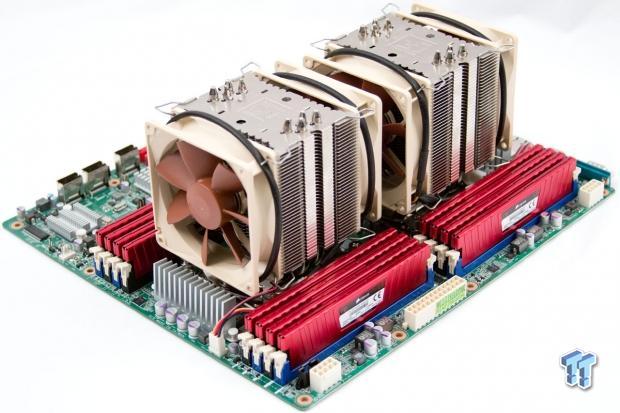

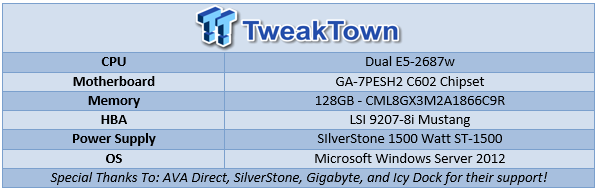

Test System and Methodology

Our Enterprise Test Bench is designed specifically to target long-term performance with a high level of granularity. Many testing methods record peak and average measurements during the test period. These average values give a basic understanding of performance, but fall short in providing the clearest view possible of I/O QoS (Quality of Service).

'Average' results do little to indicate the performance variability experienced during actual deployment. The degree of variability is especially pertinent, as many applications can hang or lag as they wait for I/O requests to complete. Our testing methodology illustrates performance variability throughout the measurement window, and also includes average measurements.

While under load, all storage solutions deliver variable levels of performance. While this fluctuation is normal, the degree of variability is what separates enterprise storage solutions from typical client-side hardware. Providing ongoing measurements from our workloads with one-second reporting intervals illustrates product differentiation in relation to I/O QoS. Scatter charts give readers a basic understanding of I/O latency distribution without having to sift through numerous graphs.

Consistent latency is the goal of every storage solution, and measurements such as Maximum Latency only illuminate the single longest I/O received during testing. This can be misleading, as a single 'outlying I/O' can skew the view of an otherwise superb solution. Standard Deviation measurements consider latency distribution, but do not always effectively illustrate I/O distribution with enough granularity to provide a clear picture of system performance. We use histograms to illuminate the latency of every I/O issued during our test runs.
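To illustrate why a histogram tells more than a maximum or an average, here is a minimal sketch that buckets every I/O's latency and prints the summary statistics alongside it. The bucket edges and the sample latencies are hypothetical; the review does not publish its own bucket boundaries or tooling.

```python
import bisect
import statistics

# Hypothetical bucket upper edges in milliseconds; the review does not publish its own.
BUCKET_EDGES_MS = [1, 2, 5, 10, 20, 50, 100, 200]


def latency_histogram(latencies_ms, edges=BUCKET_EDGES_MS):
    """Count I/Os per bucket (lat <= edge); the final bucket holds everything above the top edge."""
    counts = [0] * (len(edges) + 1)
    for lat in latencies_ms:
        counts[bisect.bisect_left(edges, lat)] += 1
    return counts


# One slow outlier among otherwise fast I/Os.
sample = [0.8, 1.5, 3.0, 4.0, 250.0]
print("mean:", statistics.mean(sample), "max:", max(sample))
print("stdev:", round(statistics.stdev(sample), 1))
print("histogram:", latency_histogram(sample))  # [1, 1, 2, 0, 0, 0, 0, 0, 1]
```

The single 250ms outlier dominates the maximum and inflates both the mean and the standard deviation, while the histogram shows that four of the five I/Os completed in 5ms or less.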

We also measure power consumption during test runs, recording results every second to illustrate the behavior of power consumption under typical workloads. Power consumption can cost more over the life of the device than the initial acquisition price of the hardware itself, which significantly affects the TCO of the storage solution. We also present IOPS-to-Watts measurements to highlight the efficiency of the storage solution.
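One plausible way to derive an IOPS-to-Watts figure from paired one-second samples is to divide total I/Os by total energy over the window. This is a sketch under that assumption, not the review's documented method, and the sample values are hypothetical. With one-second intervals, the ratio of totals equals mean IOPS divided by mean Watts.

```python
def iops_per_watt(iops_samples, watt_samples):
    """Efficiency over a run from paired one-second IOPS and power readings."""
    if len(iops_samples) != len(watt_samples) or not iops_samples:
        raise ValueError("need equal, non-empty sample lists")
    total_ios = sum(iops_samples)        # one-second samples: IOPS x 1 s = I/Os
    total_joules = sum(watt_samples)     # watts x 1 s = joules
    return total_ios / total_joules      # I/Os per joule == IOPS per Watt


# Hypothetical three-second window
print(round(iops_per_watt([600, 620, 610], [6.5, 6.7, 6.6]), 1))
```

Dividing totals rather than averaging per-second ratios keeps a brief low-power stall from skewing the result.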

We use tests specifically tailored to SSHDs to explore caching behavior, and conduct the remainder of our tests over the full LBA range to allow each HDD to highlight its average performance. Both Seagate HDDs are 600GB, while the MK1401GRRB from Toshiba is only 147GB; this should be taken into consideration when viewing test results. The first page of standard test results provides the key to understanding our testing methodology.


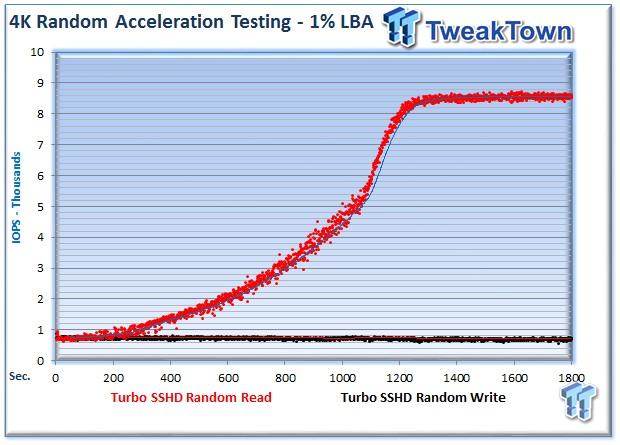

Exploring Maximum Cache Performance

With this being the first enterprise-class SSHD in the wild, we step through several tests to explore caching characteristics.

Our first task is to determine the maximum speed of the AMT caching engine under the best of circumstances. We test with 4K random read and write data on only 1% of the drive. Roughly 5% of the SSHD's capacity (32GB of 600GB) is mirrored in the NAND cache to provide acceleration, and accessing only a small percentage of the NAND allows us to tease out the maximum performance of the Marvell controller and the Samsung NAND.

The massive read acceleration nearly jumps off the chart in this test, from 900 IOPS to a staggering 8,800 IOPS. Using a small amount of data ensures that these results come almost entirely from the cache. This highlights that the controller effectively caches requested data and, under favorable conditions, can pick out hot data rather quickly. It took only three minutes for the algorithms to identify the hot data, and another 16 minutes to transfer the hot data into the NAND cache.
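IOPS figures translate directly into throughput once the transfer size is fixed. This short sketch converts the before and after 4K random read results into MB/s (decimal megabytes) and computes the speedup; the function name is ours.

```python
def iops_to_mb_per_s(iops: float, block_bytes: int = 4096) -> float:
    """Throughput in decimal MB/s for a fixed transfer size."""
    return iops * block_bytes / 1e6


before, after = 900, 8_800   # 4K random read IOPS from the test above
# prints: 3.7 MB/s -> 36.0 MB/s (9.8x)
print(f"{iops_to_mb_per_s(before):.1f} MB/s -> {iops_to_mb_per_s(after):.1f} MB/s "
      f"({after / before:.1f}x)")
```

Even at nearly 10x the uncached rate, 4K random reads from the cache amount to only about 36 MB/s, which is why IOPS rather than MB/s is the meaningful metric here.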

Operating under the assumption that all of the data is transferred to the NAND, we can estimate the rate at which the cache fills. We are reading from 1% of the 556GB of user-addressable space (5.56GB). With 5.56GB of data requiring 16 minutes (960 seconds) to transfer into cache, we come up with an average fill rate of roughly 5.8 MB/s. This figure reflects how quickly the workload presents hot blocks for promotion rather than the peak write speed of the single NAND package; the 400 MB/s ceiling of Toggle 2.0 leaves plenty of headroom for fast adjustments as hot data changes.

We also observe a lack of acceleration for 4K random write data. Even with extended periods of writing, we were unable to trigger acceleration; the data appeared to pass straight to the platters. These results do not indicate a complete lack of caching. It is possible that the data is being partially cached and sequentialized in the DRAM buffer, but we are confident that the drive isn't committing the writes to the NAND. While write caching in NAND would be beneficial, it would also lead to excess wear and longevity concerns with the eMLC. It is important to bear in mind that random writes incur more wear on NAND than sequential writes.

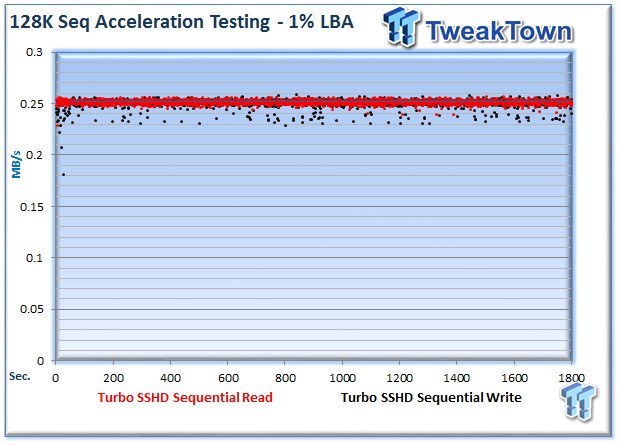

The same tests with sequential data yield no acceleration for read or write data; the results of both tests overlap. These are expected results, as HDDs perform very well with sequential data, and caching it would merely add undue wear on the NAND. Sequential writes are better served going to the platters, which have no endurance concerns.

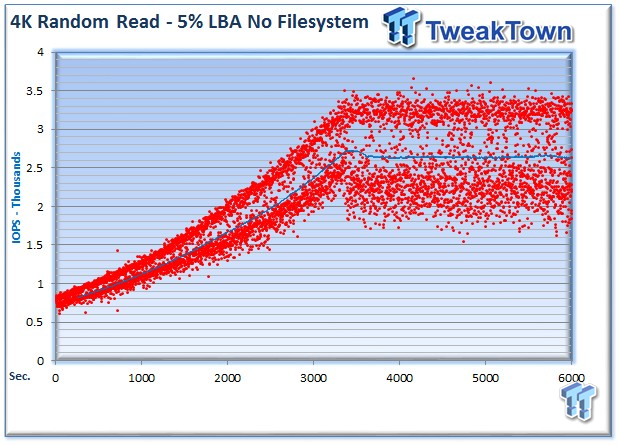

Our exploration revealed that only random read data is actively cached to the NAND buffer, which allows us to focus on the performance of the SSHD in various random read scenarios. Here we read from 5% of the user-addressable space, which would fill the entire cache buffer. In this test, the algorithms begin promoting data to the cache immediately. This results in acceleration that averages 2,700 IOPS, though we record interspersed periods of much higher performance. The lower result could stem from the data not being entirely cached, or from slower speeds when the NAND is entirely full.

We experience a drastic increase in speed when we test with small LBA ranges and a single stream of data, so the task falls to us to try to trick the AMT algorithms by simulating a more distributed workload with several data streams.

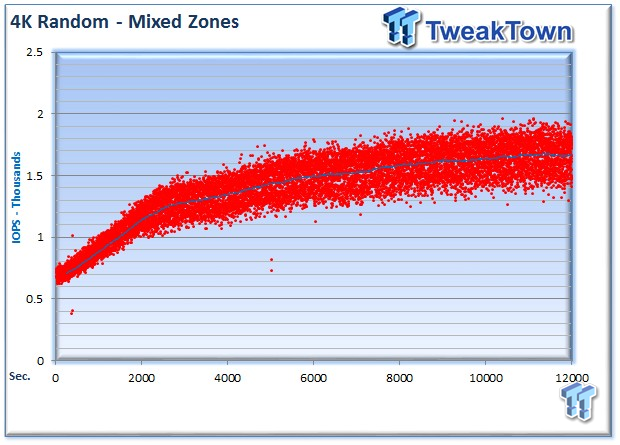

We created a complex multi-segmented test pattern with multiple data streams to test the efficacy of the AMT algorithms. We test 4K read data with three data streams. The first addresses the same 5% of the drive we tested above, but only receives 70% of the workload. The second data stream reads from a larger 30% chunk of the LBA range with 15% of the workload, and finally the third data stream reads from the entire capacity of the drive with the remaining 15% of the workload.
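The weighted three-stream pattern can be sketched as an offset generator. This is a hypothetical reconstruction, not the tool used for the review: it assumes every stream starts at LBA 0 (so the 5% and 30% regions nest inside the full span), which the text does not specify, and it picks a stream per I/O according to the 70/15/15 split.

```python
import random

BLOCK = 4096  # 4K transfers

# (fraction of the LBA range, share of the I/Os) for each stream, as described above.
# Assumption: every stream starts at LBA 0, so the smaller regions nest inside the larger ones.
STREAMS = [(0.05, 0.70), (0.30, 0.15), (1.00, 0.15)]


def weighted_offsets(capacity_bytes, n, streams=STREAMS, seed=0):
    """Yield (stream index, byte offset) pairs for n 4K random reads."""
    rng = random.Random(seed)
    total_blocks = capacity_bytes // BLOCK
    spans = [max(1, int(total_blocks * frac)) for frac, _ in streams]
    weights = [share for _, share in streams]
    for _ in range(n):
        i = rng.choices(range(len(streams)), weights=weights)[0]
        yield i, rng.randrange(spans[i]) * BLOCK


ios = list(weighted_offsets(600 * 10**9, 10_000))
print(sum(1 for i, _ in ios if i == 0) / len(ios))  # close to 0.70
```

Because the hot 5% region also overlaps the larger streams, it receives slightly more than 70% of all reads, which is exactly the skew a hot-data algorithm should latch onto.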

This is designed to force the drive to ignore the 'less desirable' read accesses and cache only the most relevant data. The results show that the SSHD still performs admirably, with an increase from 600 IOPS to 1,700 IOPS. While this lower level of performance may seem disappointing to some, the real challenge would be to draw anything even remotely near 1,700 IOPS from a standard HDD; it simply is not possible. We also note a sprinkling of much higher results, up to 1,900 IOPS, which is unheard-of performance from an HDD.

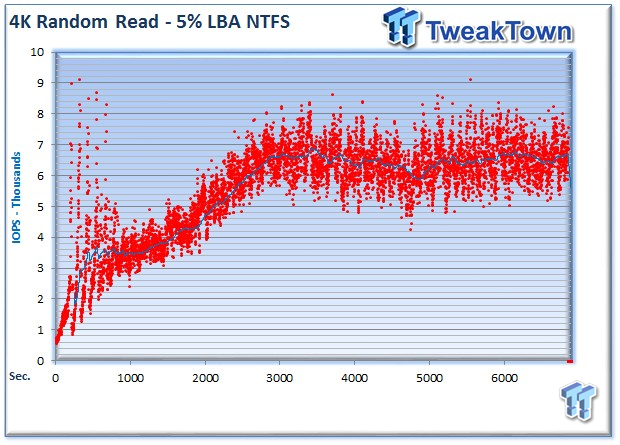

We conduct our testing outside of the file system for numerous reasons. File systems add overhead and bring factors beyond our control into the equation, such as metadata, buffers and caches. For the purposes of the SSHD review, however, the file system also introduces locality through its metadata. In many cases, metadata is a primary bottleneck during typical use.

With the same 5% test as above, we topped out at 2,700 IOPS without a file system. With a file system, we top out at 6,800 IOPS. This is an impressive result, but we can also observe some of the negative effects of testing through a file system at this granularity: the anomalies at the beginning of the test are a reminder of the system caching and buffering brought on by NTFS. These results should be taken with a grain of salt, but accelerating metadata does result in a big boost in performance.

4K Random Read/Write

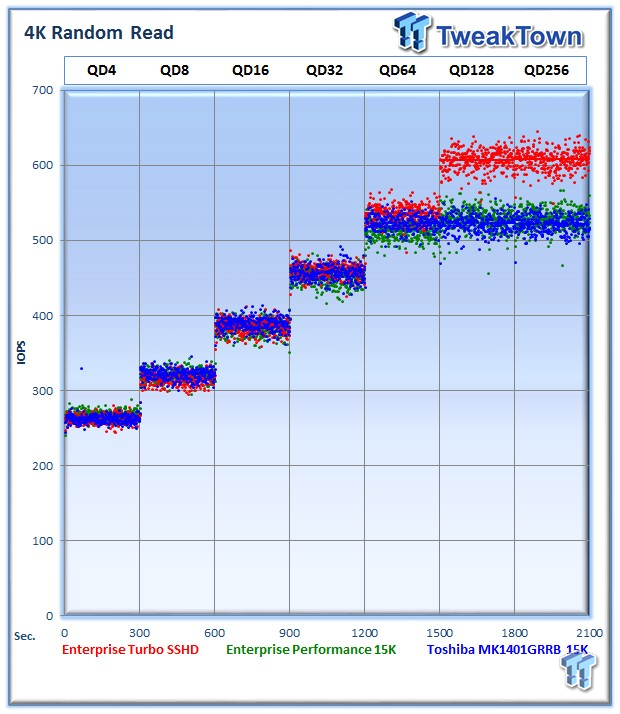

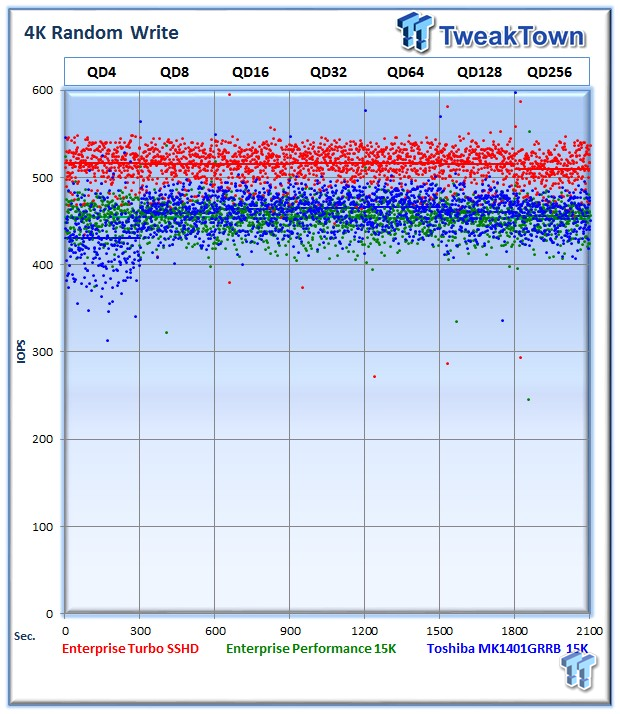

Each QD for every parameter tested includes 300 data points (five minutes of one second reports) to illustrate the degree of performance variability. The line for each QD represents the average speed reported during the five-minute interval.

4K random speed measurements are an important metric when comparing drive performance, as the hardest type of file access for any storage solution to master is small-file random. One of the most sought-after performance specifications, 4K random performance is a heavily marketed figure.

The Seagate Enterprise Turbo SSHD outpaces both of its competitors with an average of 608 IOPS at QD256. The Enterprise Performance 15K lags behind with an average of 529 IOPS, while the Toshiba MK1401 averages 522 IOPS. The SSHD's increase in speed is impressive given that these tests span the entire LBA range, which leaves little opportunity for the clusters of I/O the SSHD thrives on. The NAND acceleration still provides a benefit even in widely distributed workloads.

In 4K random write testing, the Turbo SSHD averages 509 IOPS, the Enterprise Performance 452 IOPS, and the MK1401 456 IOPS at QD256.

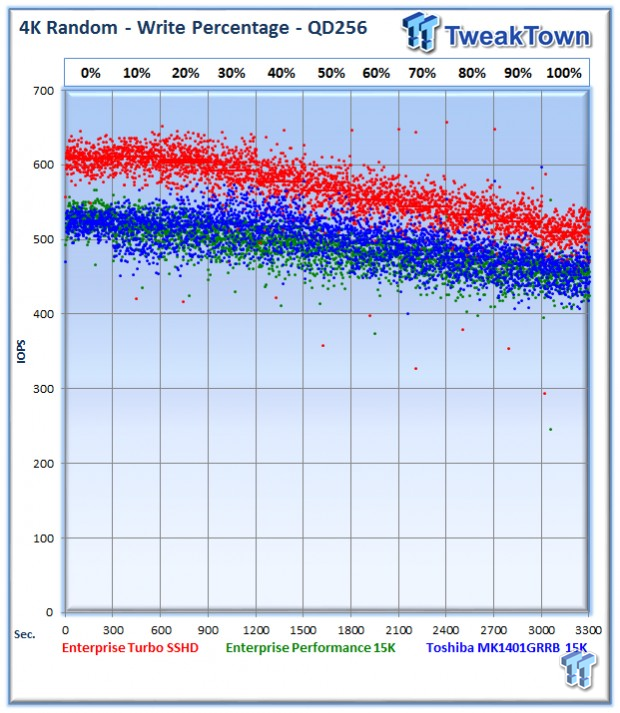

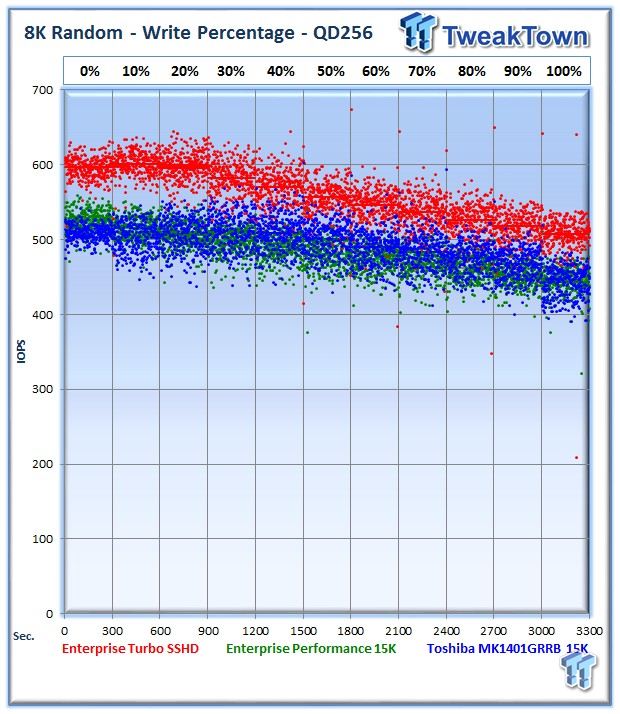

Our write percentage testing illustrates the varying performance of each solution with mixed workloads. The 100% column to the right is a pure write workload of the 4K file size, and 0% represents a pure 4K read workload.

As we move across our full-span mixed read/write workloads, we witness the Turbo SSHD enjoying a big lead in every category.

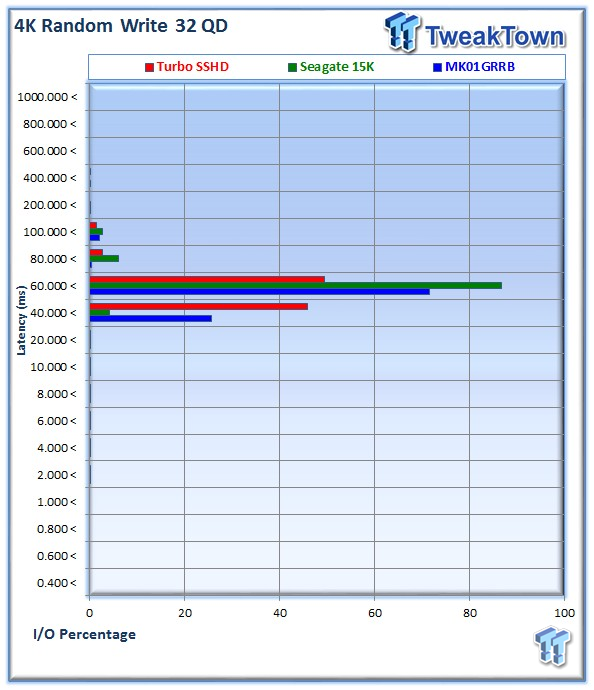

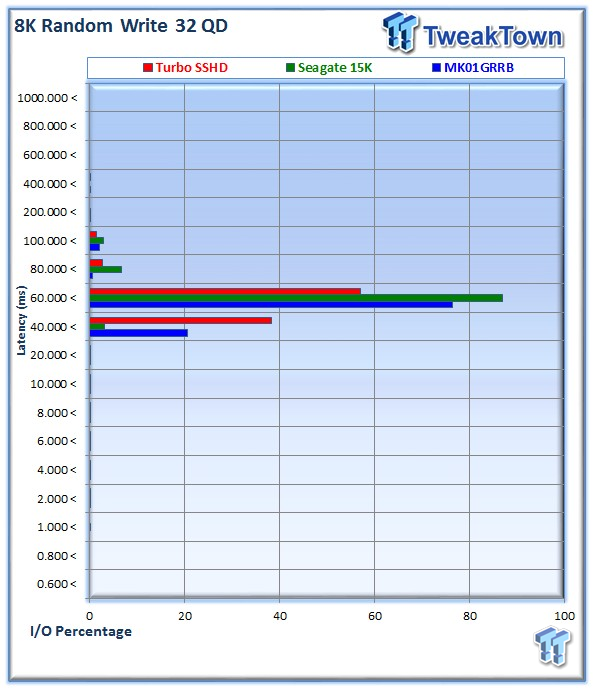

The Turbo SSHD provides more accesses in the lower latency ranges.

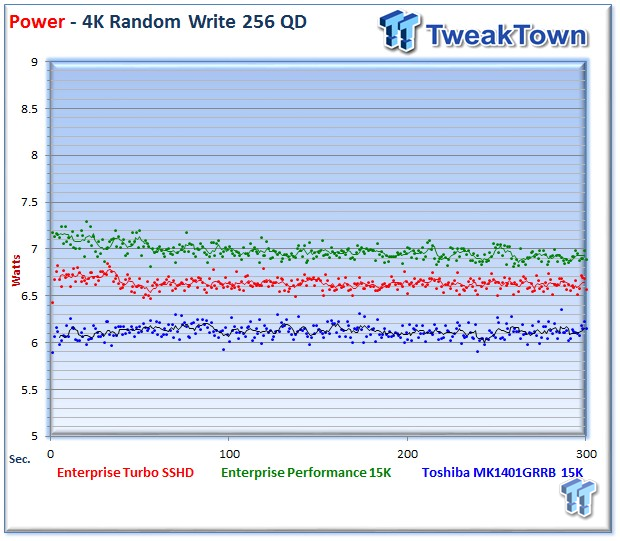

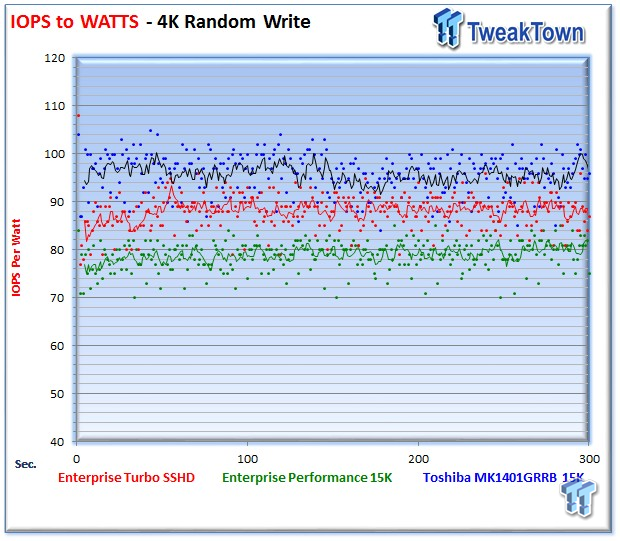

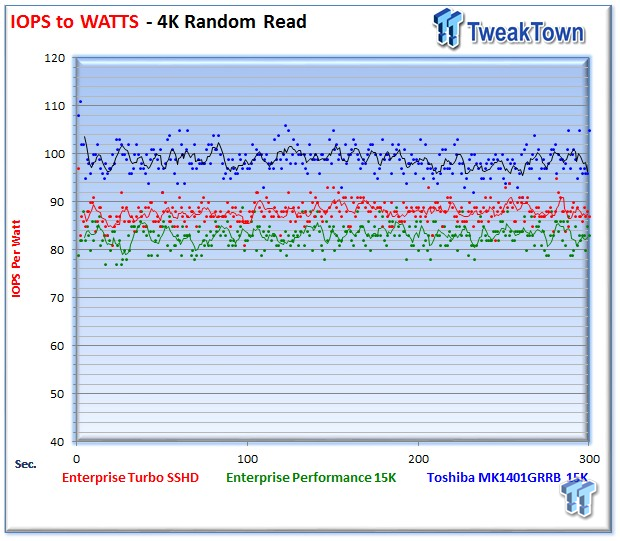

We record the power consumption measurements during our test run at QD256. The Turbo SSHD pulls fewer watts than its 15K counterpart, averaging only 6.63 Watts. The Enterprise Performance averages 6.97 Watts, and the MK1401 averages the lowest with 6.12 Watts. The MK1401 has fewer platters, which leads to low power draw across the board.

IOPS to Watts measurements are generated from data recorded during our test. The Turbo SSHD beats its sibling, yet falls behind the diminutive MK1401.

8K Random Read/Write

8K random read and write speed is not a metric typically tested for consumer use, but it is an important aspect of performance in enterprise environments. Several workloads rely heavily upon 8K performance, and we include it with each evaluation. Many of our Server Emulations below also test 8K performance with various mixed read/write workloads.

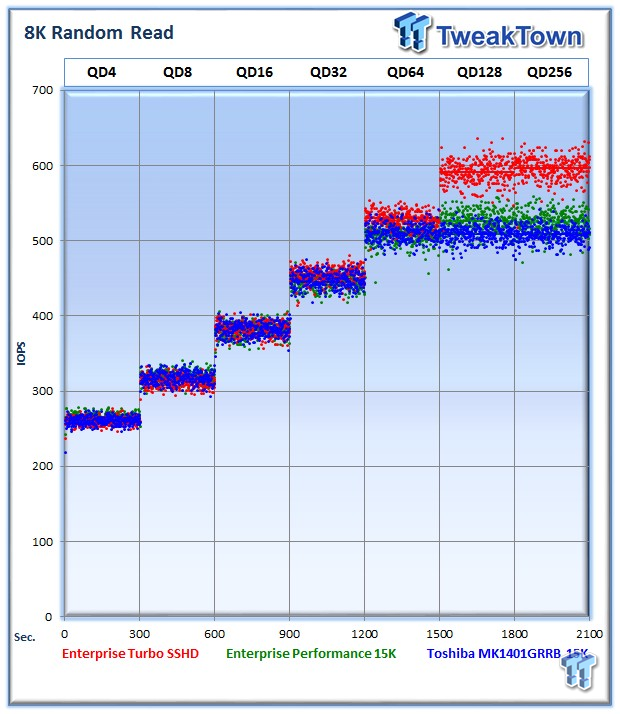

The average 8K random read speed of the Turbo SSHD weighs in at 596 IOPS. The Enterprise Performance and MK1401 trail with 525 and 522 IOPS, respectively.

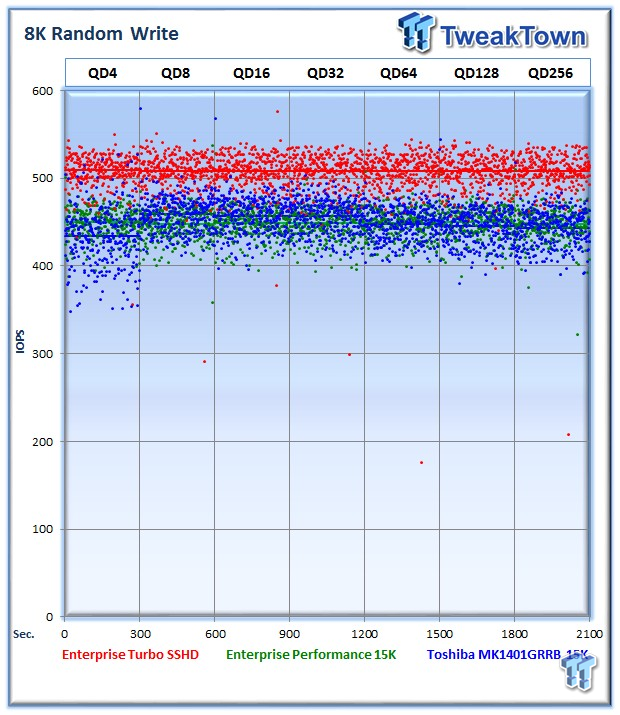

The Turbo SSHD dominates 8K random writes with an average of 508 IOPS. The Enterprise Performance delivers 447 IOPS, and the MK1401 averages 443 IOPS.

The Seagate Enterprise Turbo SSHD leads the pack again in our mixed read/write testing, enjoying a large lead across the various percentages.

Once again, the SSHD exhibits a lower latency distribution than the other HDDs.

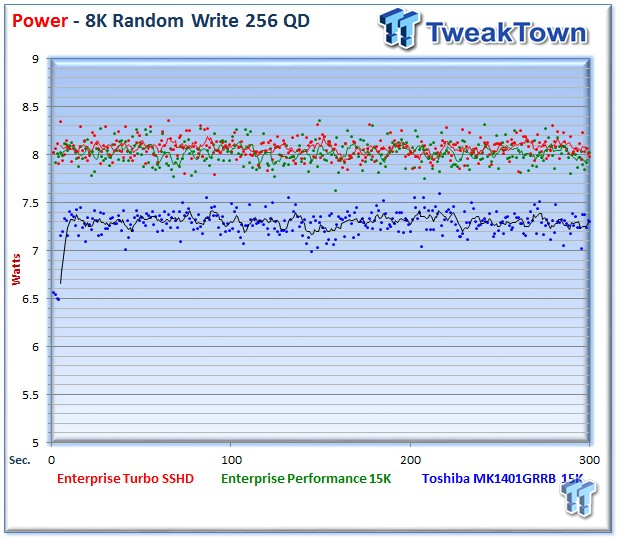

Power consumption for the Turbo SSHD and the Enterprise Performance are very close, with 8.07 and 8.02 Watts, respectively. The MK1401 averages a miserly 7.29 Watts during the measurement window.

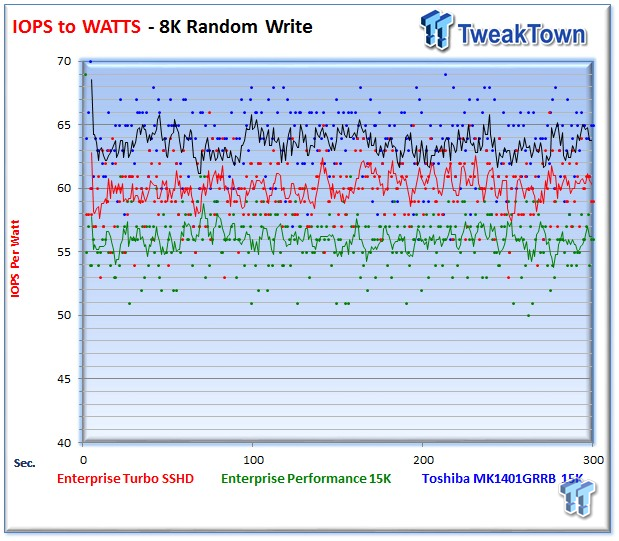

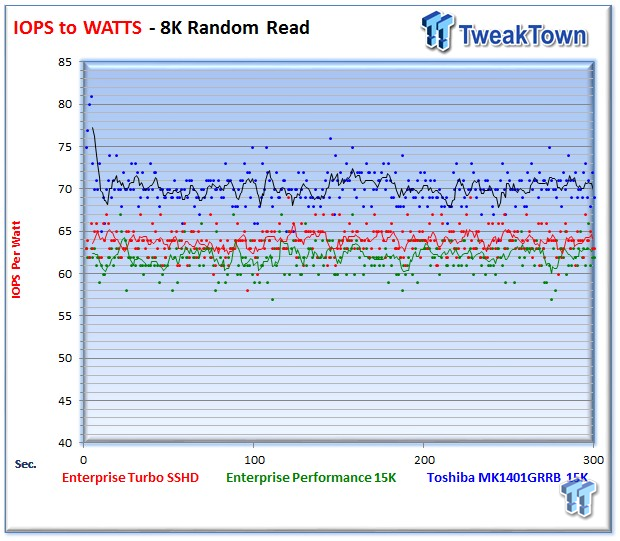

The Turbo SSHD delivers an incremental increase in IOPS-per-Watt performance over the Enterprise Performance 15K HDD.

128K Sequential Read/Write

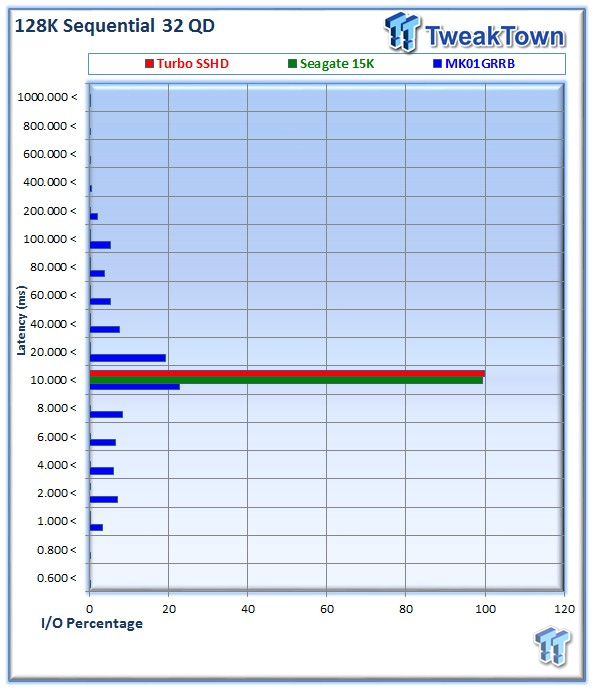

The 128K sequential speeds reflect the maximum sequential throughput of the HDD using a transfer size encountered in normal usage.

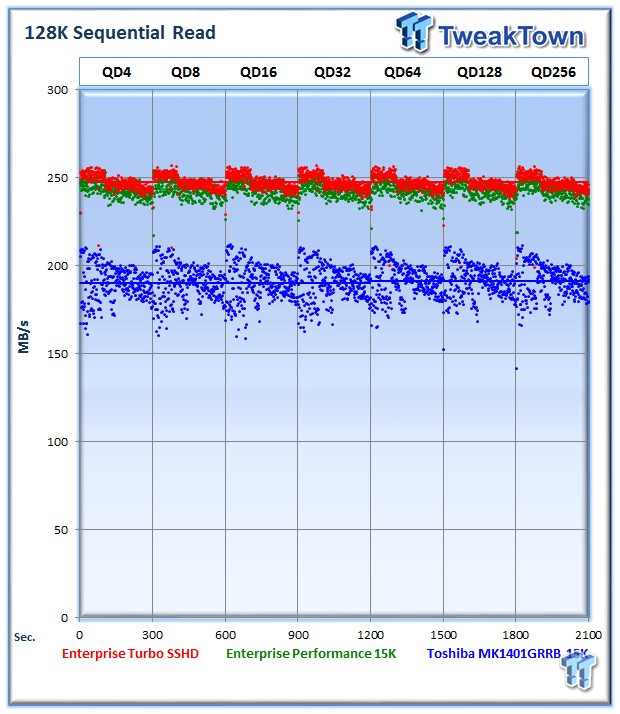

The Turbo SSHD leads with a blistering 243 MB/s of sequential read speed, while the Enterprise Performance trails slightly at 242 MB/s. The MK1401 trails by a large margin at 191 MB/s. The odd performance profile of the MK1401GRRB results from the quick travel of the head across the small 147GB platter.

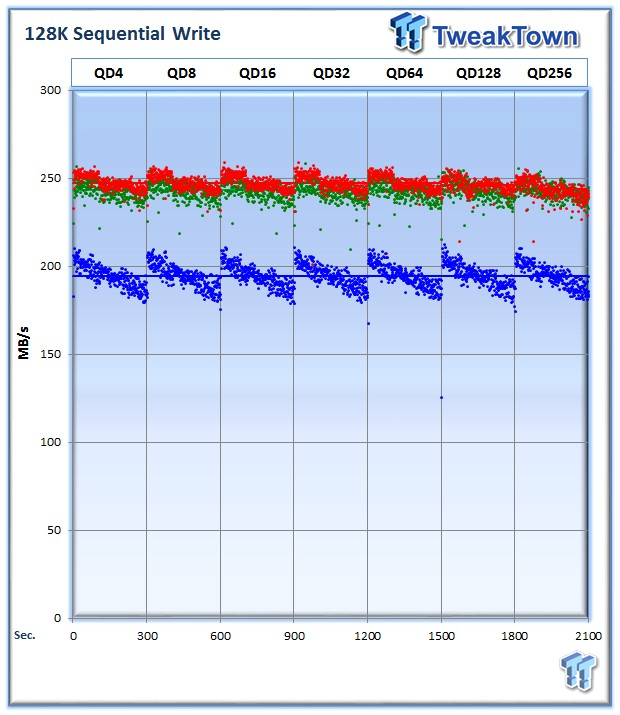

In 128K sequential write testing, the Turbo SSHD averages 194 MB/s, the Enterprise Performance weighs in at 242 MB/s, and the MK1401 averages 194 MB/s.

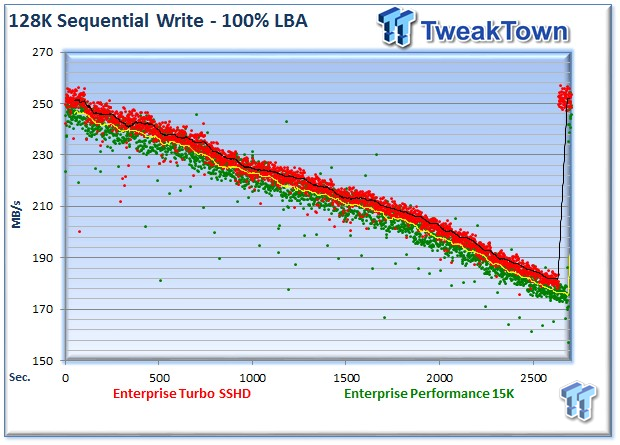

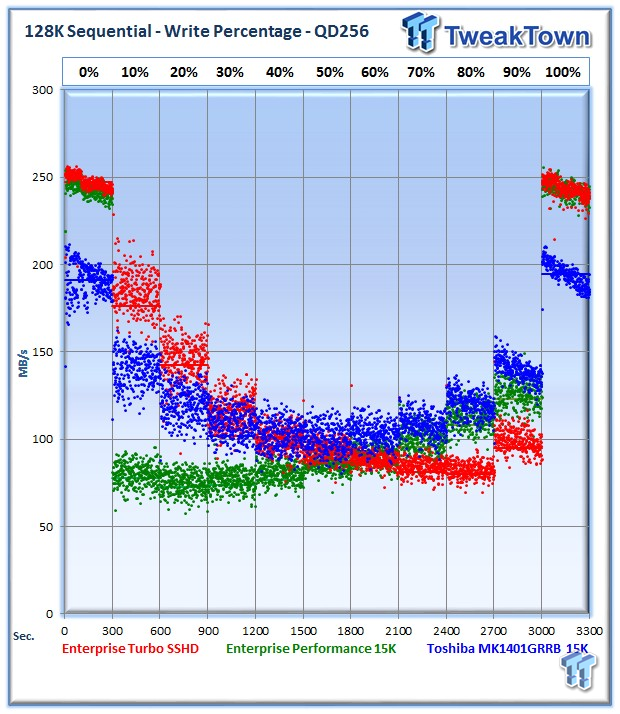

In this test, we write sequentially to the entire LBA range to explore the performance of each HDD under sustained sequential activity. The MK1401GRRB is excluded from this test due to its lower capacity of only 147GB. The Turbo SSHD provides a faster average speed (black line) than the Enterprise Performance (yellow line). As a result, the Turbo SSHD completes its pass and returns to the fast outer edge of the platter sooner, evidenced by the jump in performance at the end of the test.

The Turbo SSHD performs well in the mixed test, with the exception of the 60-90% range, where it falls behind the competition. The NAND cache is not used for sequential access, so this isn't a surprising result.

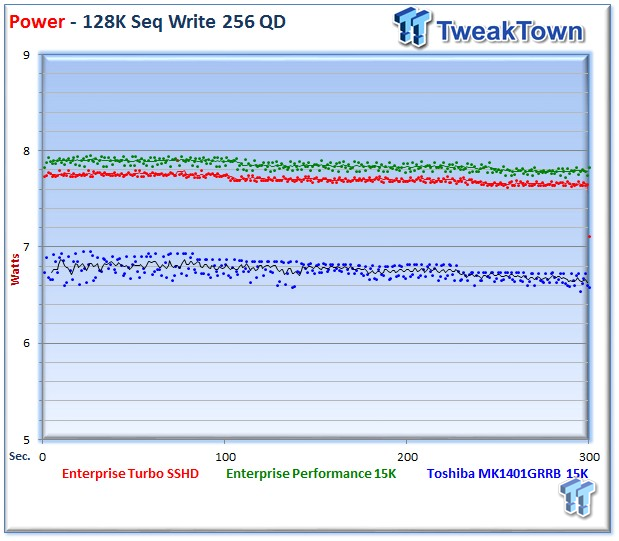

The Turbo SSHD averages 7.71 Watts, below the 7.85 of the Enterprise Performance HDD.

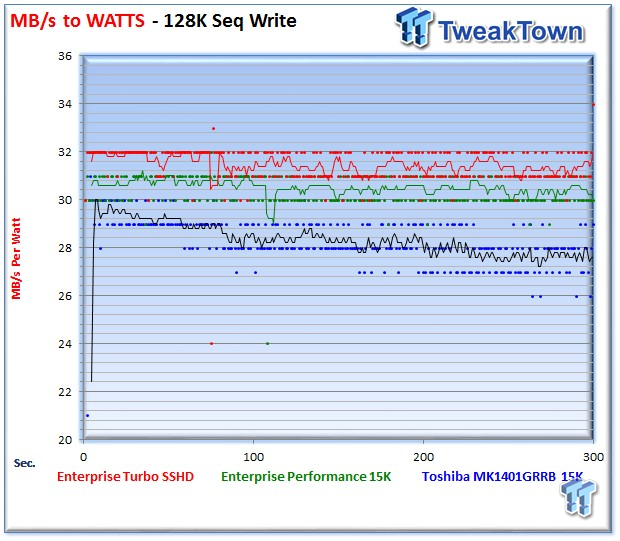

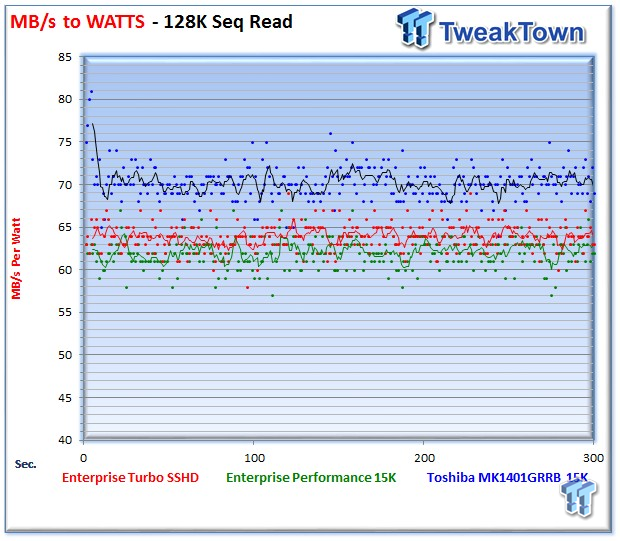

The Turbo SSHD leads in MB/s-per-Watt with sequential write access, but falls to second place in the sequential read testing.

Database/OLTP and Fileserver

Database/OLTP

This test emulates Database and On-Line Transaction Processing (OLTP) workloads. OLTP is, in essence, the processing of transactions such as credit card payments and high-frequency trades in the financial sector. Databases are the bread and butter of many deployments. These are demanding 8K random workloads with a 66% read and 33% write distribution that can bring even the highest-performing solutions down to earth.

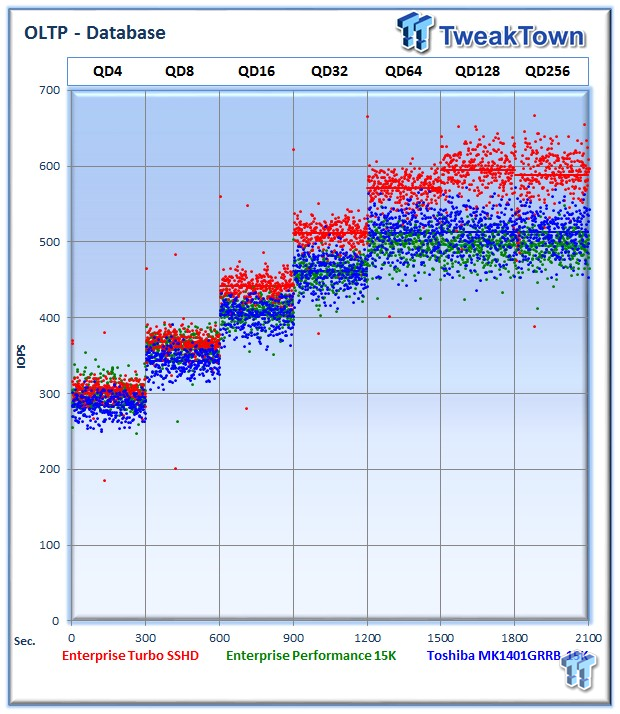

The Turbo SSHD averages 587 IOPS at QD256, while the Enterprise Performance averages 469 IOPS. The MK1401 pulls off 513 IOPS at QD256.

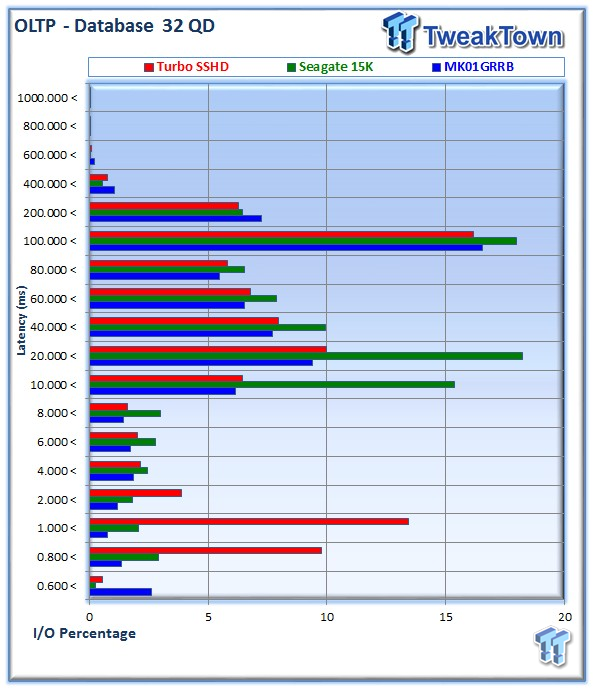

The Seagate Turbo SSHD delivers a lower latency distribution than the competing drives.

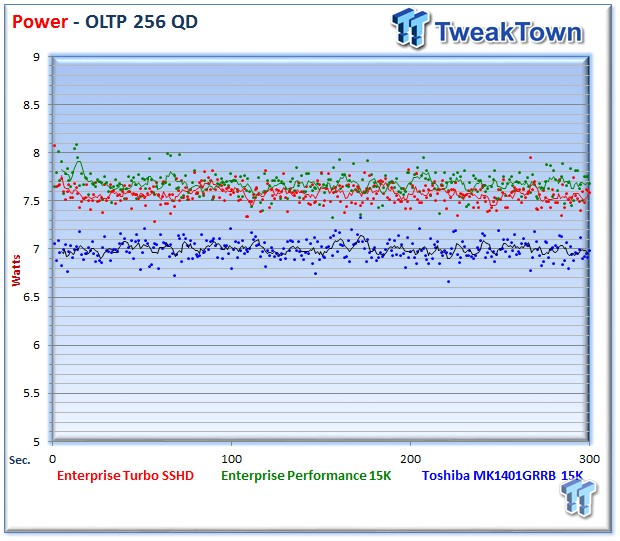

The Turbo SSHD averages 7.57 Watts, closely matched to the Enterprise Performance, but above the MK1401.

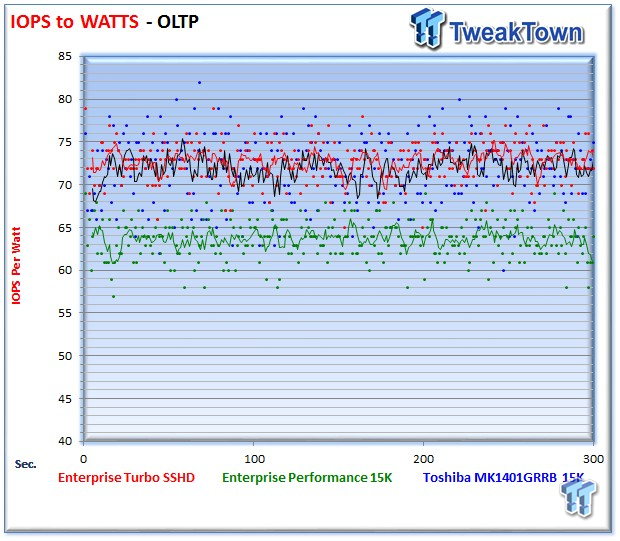

The Seagate Turbo SSHD matches the MK1401 in IOPS-per-Watt during this test.

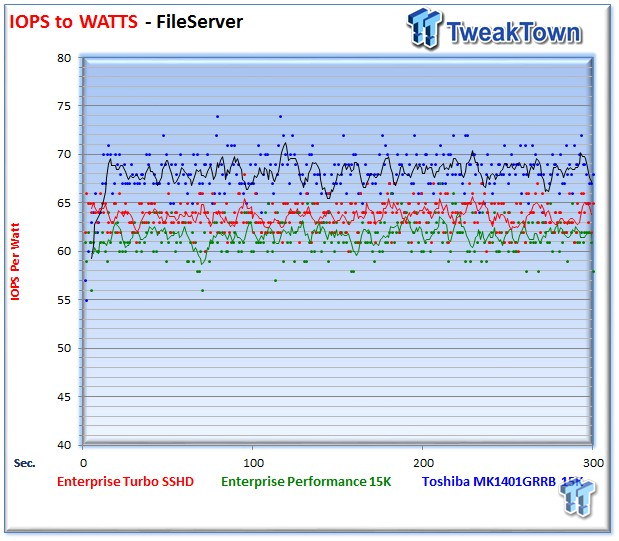

Fileserver

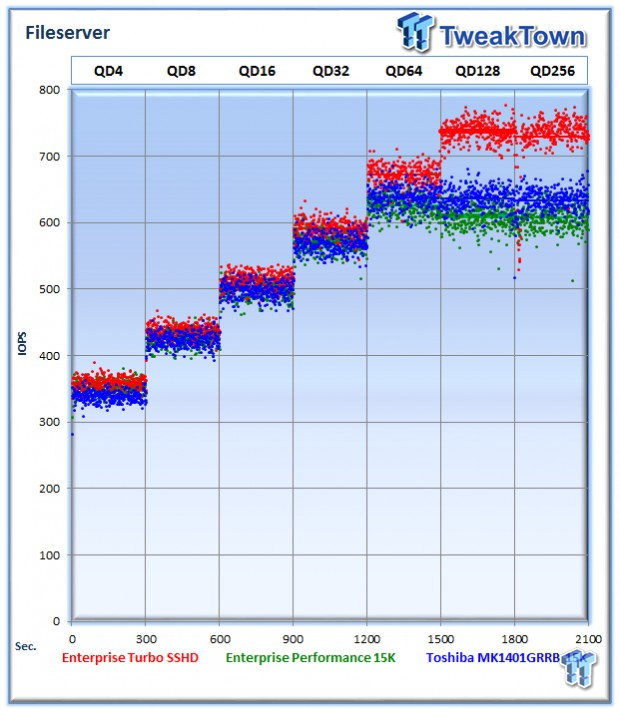

The File Server profile represents typical file server workloads. This profile tests a wide variety of different file sizes simultaneously to simulate multiple users with an 80% read and 20% write distribution.

The Turbo SSHD averages 131 IOPS, while the Red averages 182 IOPS at QD256.

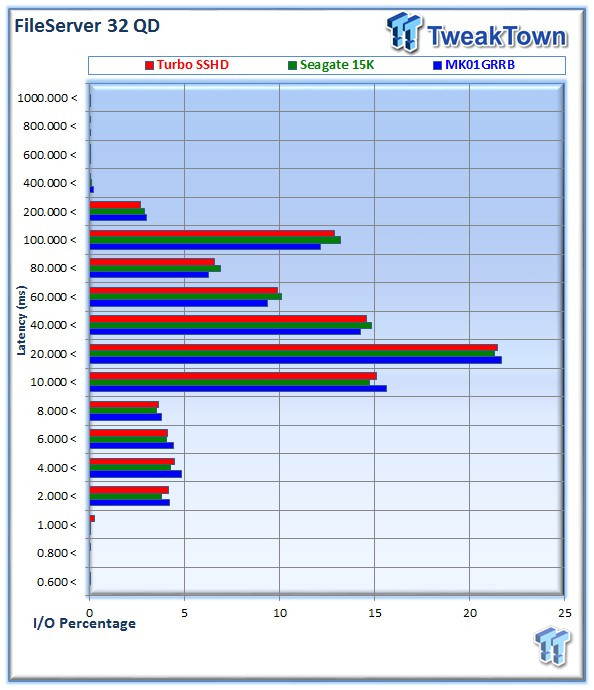

All of the drives are closely matched during this test, with the MK1401 providing the best latency.

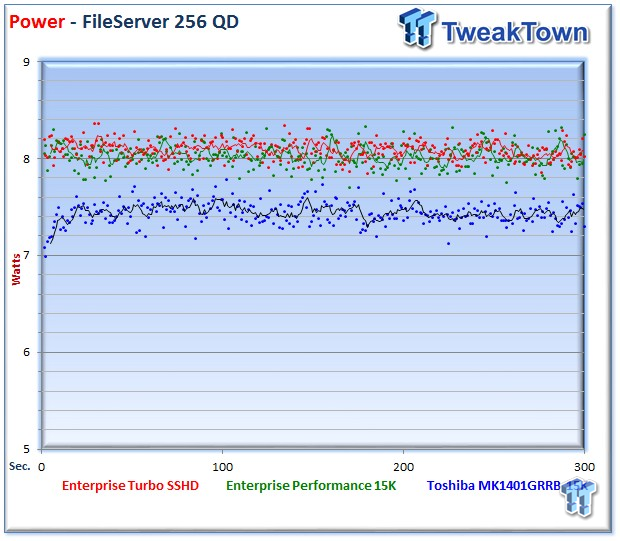

The Turbo SSHD and Enterprise Performance 15K are closely matched in power consumption for this workload.

The MK1401 again pulls off the win in the IOPS-to-Watts testing, due to its single platter design.

Emailserver and Webserver

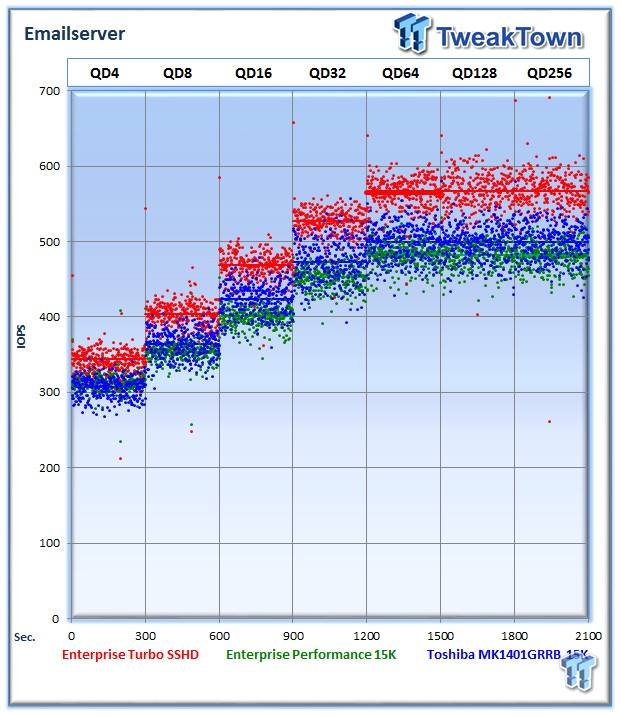

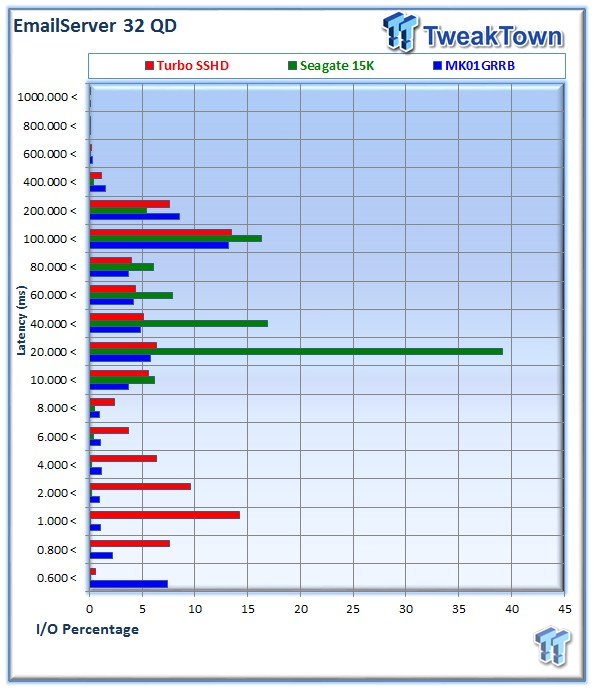

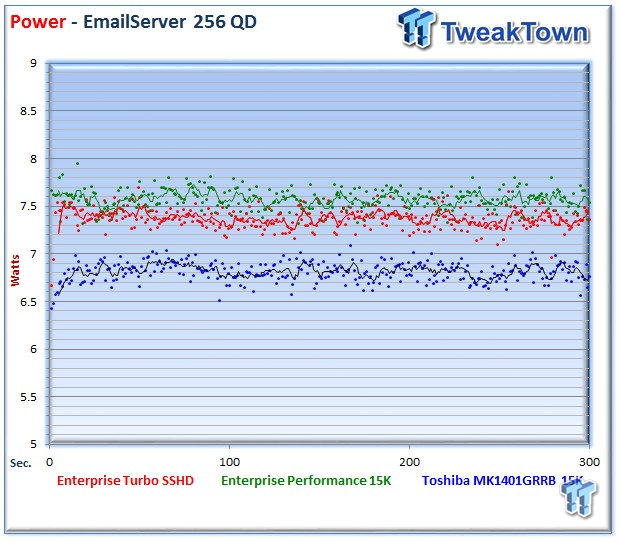

Emailserver

The Emailserver profile is a very demanding 8K test with a 50% read and 50% write distribution. This application is indicative of the performance of the solution in heavy write workloads.

The Turbo SSHD averages 566 IOPS at QD256, in comparison to 481 IOPS from the Enterprise Performance and 499 IOPS from the MK1401.

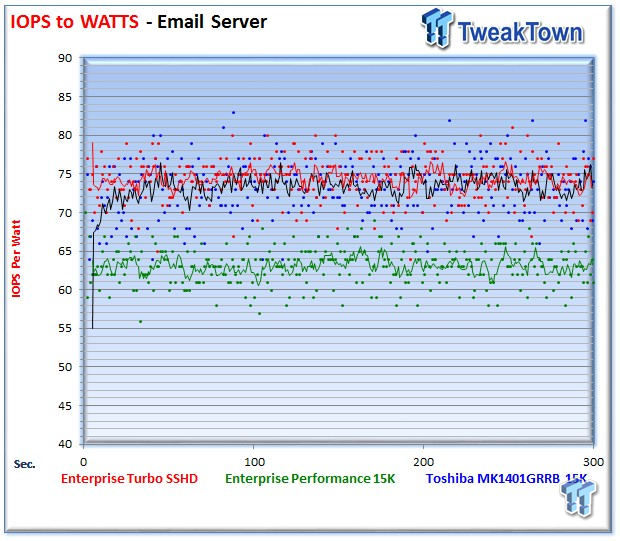

The Seagate Turbo SSHD averages 7.37 Watts during the measurement window.

The Seagate Turbo SSHD again matches the MK1401 in IOPS-to-Watts testing.

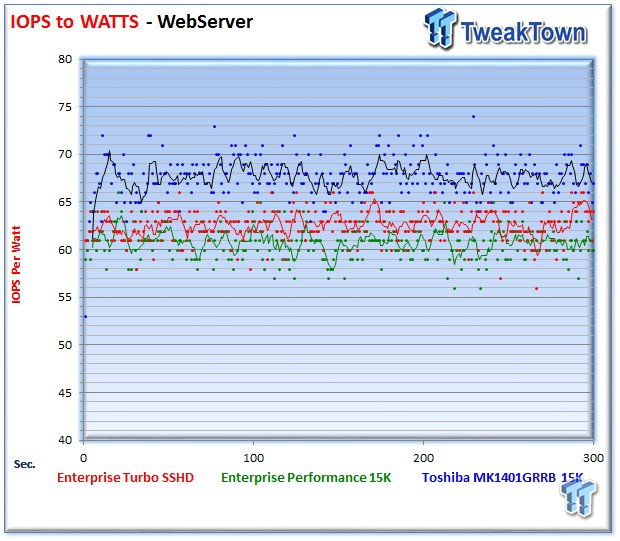

Webserver

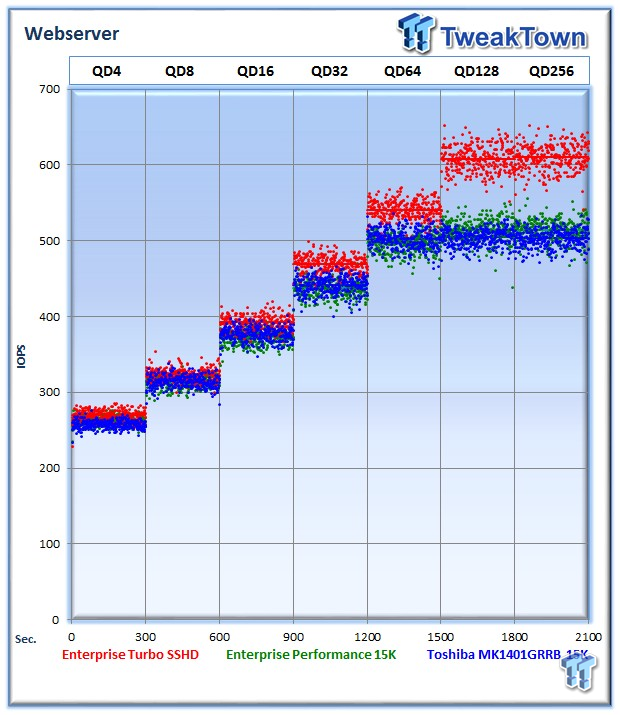

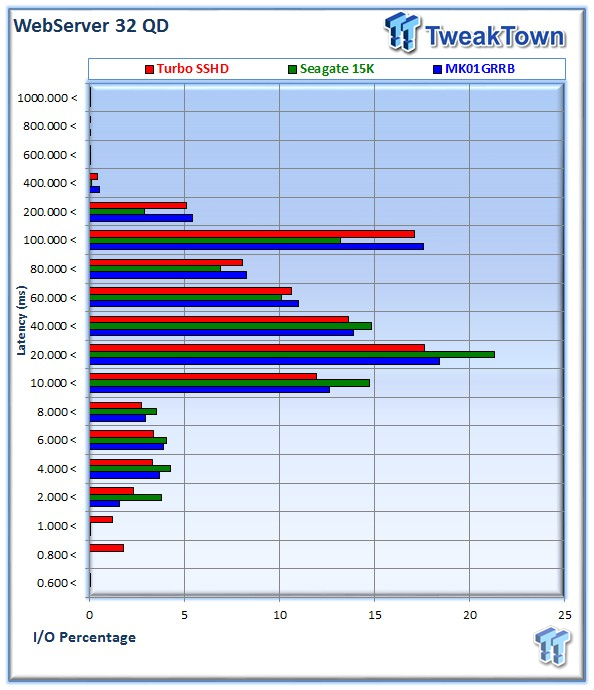

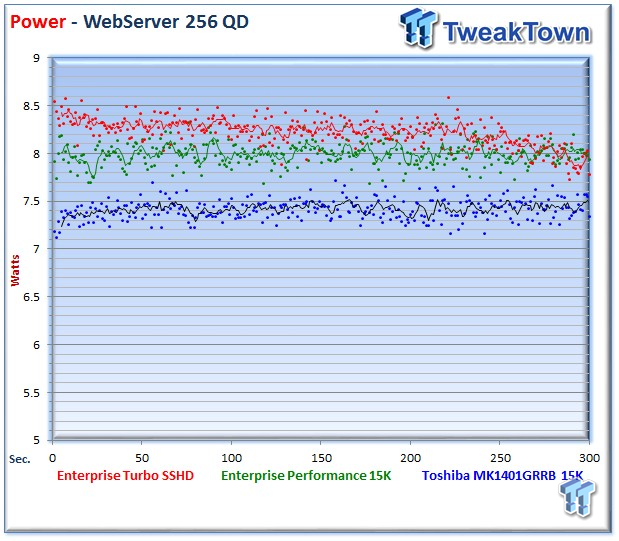

The Webserver profile is a read-only test with a wide range of file sizes. Web servers are responsible for generating content for users to view over the internet, much like the very page you are reading. The speed of the underlying storage system has a massive impact on the speed and responsiveness of the system, and thus the end-user experience.

The Seagate Turbo SSHD averages a beastly 610 IOPS, well above the competition, which hovers around 500 IOPS.

The Turbo SSHD averages 7.37 Watts during the test.

Final Thoughts

At the end of a long and complex product evaluation, it is best to bring things back to simplicity. One of the major motivations for deploying Seagate Turbo SSHDs is the hassle-free installation of the drives into standard equipment. No special drivers or management of any type are required, which makes the drives easy to drop into existing infrastructure. Installing 10 SSHDs adds a 320GB shot of flash acceleration to the server with absolutely no hassle; you simply install the drives. The SSHD occupies the middle ground between SSDs and HDDs, providing a single device that handles caching and tiering automatically.

It's that sort of 'problem solved' mentality that led Seagate down the path to SSHDs in the first place. With lackluster performance increases from standard approaches, and SSDs encroaching upon the performance segment in client and enterprise environments, Seagate decided to marry the speed and efficiency of the SSD with the capacity of the HDD. The initial foray into the client space has been so successful that Seagate announced it would stop producing standard 7,200 RPM 2.5" client HDDs in favor of SSHDs.

In our standard testing, we witnessed appreciable gains with full-span random read workloads, and these gains carried over to our mixed workloads. The combination of partially cached read data and faster random write speeds, resulting from reduced head movement, delivered a large advantage over the standard HDD. The partially cached results we received, even when testing with full-span reads and writes, were outstanding. Applications often rely upon tight groupings of data, which will result in significantly better performance than we have demonstrated with our full-span testing.

In our targeted testing, we witnessed just how fast the SSHD can be when data is cached in its entirety. It is possible to attain results well above any existing HDD, with a top speed of 8,800 IOPS from the NAND cache layer. Short-stroking typical HDDs can deliver performance gains, but it also adds to the cost of deployment. Not only are SSHDs much faster than short-stroked drives, they deliver a lower TCO. The dollar-per-IOPS ratio of the SSHD will easily rival that of short-stroked HDDs.

The reliability of the SSHD is also within expected norms, with a two-million-hour MTBF and a 0.44% AFR. The extra layer of NVC cache also adds expanded power-loss protection not offered with typical enterprise HDDs. FIPS 140-2 functionality and secure encryption round out the enterprise-class features, while the five-year warranty should allay any fears of utilizing this relatively 'new' technology.

While the 32GB of eMLC may seem small compared to the overall size of the SSHD, the fact is that most hot data, and metadata, occupies a small portion of the entire storage space. By intelligently caching the most relevant and hard-to-access blocks, and allowing the platters to handle the data access they excel at, the Turbo SSHD can deliver big gains in application performance.

We expect the maturation of the SSHD platform to accelerate rapidly, with larger portions of flash making their way into numerous types of enterprise-class HDD's. Seagate's AMT technology is truly revolutionary and looks to help redefine the limits of enterprise HDD performance in a cost-conscious manner.

