Introduction

The advent of the Intel DC S3700 series of enterprise SSDs brought about a new focus on performance consistency. For many unaccustomed to the inner workings of enterprise storage solutions, this triggered the revelation that SSDs do not deliver a steady level of performance, even when under a steady workload.

Intel has worked hard to illustrate the need for performance consistency in everyday applications, and rightly so. There is a plethora of cheap consumer SSDs on the market, and in an attempt to lower overall TCO, many administrators have been experimenting with these SSDs in actual production environments. Many of these same administrators come away from the experience unsatisfied with the inconsistent performance trends and poor endurance of these consumer solutions.

The problem lies in the variable performance delivered by these solutions, one Chris Ramseyer and I highlighted in our article and video Consumer vs. Enterprise SSD Performance Analysis. In our testing, we found that some client SSDs suffer from I/O 'outliers' that were in the same latency range as those delivered by a 15,000 RPM HDD. This highlights the fact that storage performance is not always defined by the 'average' results posted in typical test results, and choosing the wrong solution can have disastrous results.

By monitoring the performance of the storage with greater granularity, we can expose the flaws that plague client-side and less robust enterprise storage solutions. The Intel DC S3700 strives to provide a level of consistency unmatched by many other SSDs, and the challenge for Intel became how to spread that message. One of the best ways to highlight performance variability is to measure performance in one-second intervals. At TweakTown we had already begun testing enterprise storage products in this manner, and the Intel DC S3700 spawned very similar testing by other hardware review sites.

The focus on performance consistency from Intel has brought one of the crucial aspects of storage performance into the limelight: predictable performance. One of the major motivators behind creating an SSD with predictable performance is the need to feed I/O to applications in a consistent manner. Performance variability can rob applications of performance. Individual 'hangs' and lags from outlying I/O can significantly affect application performance, simply because applications are forced to wait for the next I/O to complete.

The greater the variability, the more performance these outliers take from applications. In enterprise scenarios, these poorly performing applications can result in the need for more resources to make up the difference. When placed into massive datacenters, the extra servers needed to compensate for poor performance can create tremendous cost.

Another strength of the Intel DC S3700 is that its performance consistency should equate to large performance gains in RAID applications. A RAID array can only read and write as fast as the slowest device in the array. If any single SSD in the array experiences significant variability, it will slow down the entire array. Since the array only operates as fast as its slowest I/O, several SSDs with poor performance consistency magnify the problem. In effect, the number of poorly performing SSDs in the RAID array multiplies the amount of outlying I/O.
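The multiplying effect can be sketched with a simple probability model. This is an illustration under stated assumptions, not something we measured: it assumes each SSD independently services a fraction `p` of its I/O slowly, and that a request touches every drive in the array, so the request is slow whenever any member is slow. The function name and the 1% figure are hypothetical.

```python
def stripe_outlier_probability(p: float, drives: int) -> float:
    """Chance that a request touching every drive hits at least one slow I/O.

    Assumes each drive is slow independently with probability p
    (a hypothetical model, not a measured property of any SSD).
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if drives < 1:
        raise ValueError("drives must be at least 1")
    # The request is fast only if every member drive is fast.
    return 1.0 - (1.0 - p) ** drives


if __name__ == "__main__":
    for n in (1, 4, 8):
        share = stripe_outlier_probability(0.01, n)
        print(f"{n} drive(s): {share:.2%} of requests see an outlier")
```

Under these assumptions, a drive that is slow on 1% of its I/O affects close to 4% of requests in a 4-drive array and close to 8% in an 8-drive array.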

We are accustomed to reading over-the-top RAID reviews with insane numbers of SSDs, and have been guilty of writing them in the past. Expect us to write more of them in the future as well; we have some very exciting new RAID testing coming up.

However, in typical deployments, SSDs are used as high-performance tiers for hot data or in caching applications. Not every application requires millions of IOPS, and today we are focusing on the performance of a realistic deployment of four and eight Intel DC S3700 SSDs in an enterprise environment.

Intel DC S3700 Architecture

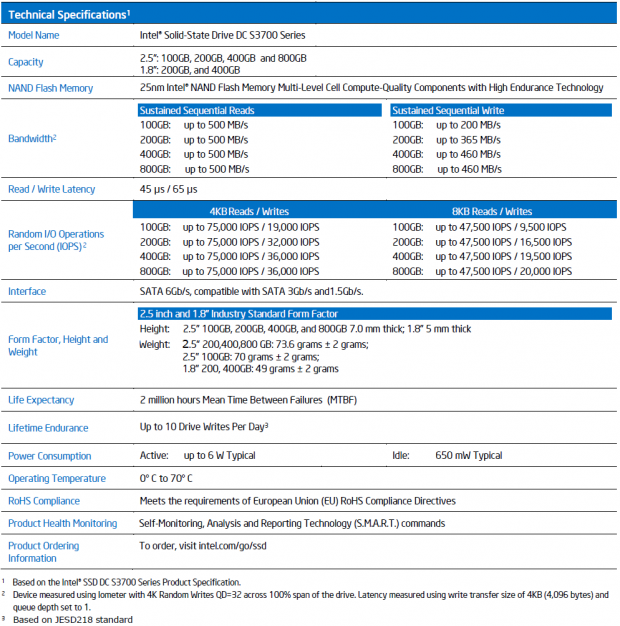

The consistent performance of the DC S3700 is due to the new Intel controller, the PC29AS21CA (an 8-Channel ASIC), and an optimized firmware designed for low latency operation.

The improved firmware performance results from employing a new type of indirection table. Intel switched from utilizing a compressed binary tree system to a fully uncompressed 1:1 mapping of the NAND flash. This eliminates the need for defragmentation of the mapping table and reduces associated I/O latency concerns. In order to access such a large indirection table quickly, Intel keeps this 'map' located in the 1GB of ECC DDR3-1333 DRAM (on the 800GB model). The large tables necessitate more cache for the SSD, with varying capacities of DRAM on each model.
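A rough calculation shows why a flat 1:1 map calls for this much DRAM. Neither the mapping granularity nor the entry width is stated here, so the 4KiB page size and 4-byte entry width below are assumptions chosen purely for illustration, and `flat_map_size_bytes` is a hypothetical helper.

```python
def flat_map_size_bytes(capacity_bytes: int,
                        page_bytes: int = 4096,   # assumed mapping granularity
                        entry_bytes: int = 4) -> int:  # assumed entry width
    """Size of an uncompressed table with one entry per mapped page."""
    entries = capacity_bytes // page_bytes
    return entries * entry_bytes


if __name__ == "__main__":
    size = flat_map_size_bytes(800 * 10**9)  # 800GB model
    print(f"{size / 2**20:.0f} MiB of map for an 800GB drive")
```

With those assumed values, the table for the 800GB model comes to roughly 745 MiB, which would fit in 1GB of DRAM with room to spare.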

The 6Gb/s controller provides sequential read and write speeds of 500/365 MB/s, respectively, for the 200GB model. The 200GB SSD also features 75,000 random read IOPS and 32,000 random write IOPS. Write performance scales with various capacity points and the larger models provide more performance.

HET-MLC is a key component in the architecture, delivering 10 DWPD (Drive Writes Per Day) of endurance for five years. This doubling of endurance from the previous generation SSD equates to 14.6 Petabytes of endurance for the 800GB model and 7.3PB at the 400GB capacity point. This endurance, and a 2 million hour MTBF, is backed up by the Intel five year warranty.
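The petabyte figures follow directly from the DWPD rating. A minimal sketch of the arithmetic, assuming decimal units (1 PB = 1,000,000 GB) and 365-day years; the function name is ours:

```python
def rated_endurance_pb(capacity_gb: float, dwpd: float = 10, years: float = 5) -> float:
    """Total rated writes in petabytes for a given drive-writes-per-day rating."""
    total_gb_written = capacity_gb * dwpd * 365 * years
    return total_gb_written / 1_000_000  # decimal units: 1 PB = 1,000,000 GB


if __name__ == "__main__":
    for capacity in (400, 800):
        print(f"{capacity}GB: {rated_endurance_pb(capacity):.1f} PB")
```

This reproduces 14.6PB for the 800GB model and 7.3PB for the 400GB model.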

The SSD comes in both 1.8" and 2.5" 7mm form factors, with the 1.8" devices intended for high-density blade and micro-server applications. Power consumption is rated at up to 6W (typical) under load and 650mW at idle. The 2.5" SSDs can pull power from either the 5V or the 12V rail, or both simultaneously. The 1.8" model only utilizes the 3.3V rail.

Enhanced power protection comes in the form of two radial electrolytic capacitors (rated for 105C at 3.5V/47uF). These capacitors flush in-transit data to the NAND in the event of a host power loss. The SSD runs self-diagnostics on the capacitors, and upon a capacitor failure will automatically switch into write-through mode. Users can also monitor the capacitors via SMART data.

Intel has taken several steps to protect user data, with CRC (Cyclic Redundancy Check) protection plus firmware and logical block address verification built in. CRC uses a checksum to validate data and identify corruption. This protects the data from its original issuance, through the various levels of internal cache (SRAM and DRAM), and down to the NAND. Support for 256-bit AES encryption rounds out the feature set.

Adaptec ASR-72405

The ASR-72405 utilized in this evaluation sports an unmatched 24 native SAS/SATA ports in a full-height half-length form factor.

The ASR-72405 features 24 x 6Gb/s SAS native ports combined with the x8 PCIe Gen3 interface. 16 ports alone can saturate the bus by providing 9.8 GB/s, well above the 8 GB/s supplied by PCIe Gen3. This allows Adaptec to pack as many lanes onboard the controller as possible.

The heart of the Series 7 RAID controllers is the PMC8015 processor. This very small chip packs quite the punch, delivering 6,600 MB/s in sequential speed and 450,000 IOPS in random read speed. Developed by PMC-Sierra, this cornerstone of the Series 7 controller provides Adaptec with a 24-port SAS 2.0 native solution that easily outstrips an x8 PCIe Gen3 connection.

Adaptec paired this powerful controller with the new Mini-SAS HD (High Density) cables. These new RAID controller interconnects are the only solution that can provide the required number of connections for taking advantage of the ROC's 24 available ports within the space limitations of the full height controller.

Combining a revolutionary new controller with advanced cabling provides extreme performance, a slim form factor, and enhanced density that no other product currently on the market can match.

Adaptec RAID Code (ARC) delivers RAID levels 0, 1, 1E, 5, 6, 10, 50 and 60 (71605E supports 0, 1, 1E, and 10, only). ARC also offers RAID Level Migration (the ability to easily migrate RAID levels), Online Capacity Expansion (expand capacity without powering down the server), and Copyback Hot Spare (when a failed drive has been replaced, data is automatically copied from the hot spare back to the restored drive).

Adaptec's Intelligent Power Management techniques can purportedly reduce storage power consumption, and thus cost, by up to 70%. This is enabled by shifting the power for the connected devices into one of three modes: full on, low power, and spin down.

The ASR-72405 is backed by a three year warranty.

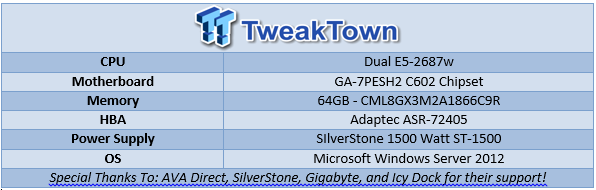

Test System and Methodology

We utilize a new approach to RAID storage testing with our Enterprise Test Bench, designed specifically to target long-term performance with a high level of granularity.

Many testing methods record peak and average measurements during the test period. These average values give a basic understanding of performance, but fall short in providing the clearest view possible of I/O QoS (Quality of Service).

'Average' results do little to indicate the performance variability experienced during actual deployment. The degree of variability is especially pertinent, as many applications can hang or lag as they wait for I/O requests to complete. This testing methodology illustrates performance variability, and includes average measurements, during the measurement window.

While executing a workload, all storage solutions deliver variable levels of performance. While this fluctuation is normal, the degree of variability is what separates enterprise storage solutions from typical client-side hardware. Providing ongoing measurements from our workloads with one-second reporting intervals illustrates product differentiation in relation to I/O QoS. Scatter charts give readers a basic understanding of I/O latency distribution without directly observing numerous graphs.

Consistent latency is the goal of every storage solution, and measurements such as Maximum Latency only illuminate the single longest I/O received during testing. This can be misleading, as a single 'outlying I/O' can skew the view of an otherwise superb solution. Standard Deviation measurements consider latency distribution, but do not always effectively illustrate I/O distribution with enough granularity to provide a clear picture of system performance. We also use latency plots to illustrate latency scaling under various workloads.
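To show why a single number can mislead, here is a small sketch that summarizes one set of latencies several ways. The sample data is synthetic (999 fast I/Os and one slow one), and `latency_summary` is a hypothetical helper rather than part of our test tooling.

```python
import statistics


def latency_summary(latencies_ms):
    """Summarize latencies with mean, standard deviation, 99th percentile and maximum."""
    ordered = sorted(latencies_ms)
    # Nearest-rank 99th percentile, computed with integer arithmetic.
    rank = max(1, (99 * len(ordered) + 99) // 100)
    return {
        "mean": statistics.fmean(ordered),
        "stdev": statistics.pstdev(ordered),
        "p99": ordered[rank - 1],
        "max": ordered[-1],
    }


if __name__ == "__main__":
    # Synthetic example: one 50ms outlier among 999 I/Os at 0.5ms.
    sample = [0.5] * 999 + [50.0]
    for name, value in latency_summary(sample).items():
        print(f"{name}: {value:.4f} ms")
```

In this synthetic case the maximum reports 50ms even though 99% of the I/O completes in 0.5ms, which is why we plot the full distribution rather than rely on any one figure.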

Our testing regimen follows SNIA principles to ensure consistent, repeatable testing. We attain steady state through a process that brings the device to a performance level that varies by no more than 20% during the measurement window. Forcing the underlying SSDs to perform a read-modify-write procedure for new I/O triggers all garbage collection and housekeeping algorithms, highlighting the real performance of the solution.
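One way to read the 20% rule is as a check on the spread of the most recent one-second samples. The sketch below is our own simplified interpretation, not the SNIA procedure itself: it compares the range (maximum minus minimum) against 20% of the window average, and the 300-sample window is an assumed value.

```python
def within_steady_state(samples, window=300, tolerance=0.20):
    """Return True if the last `window` samples range no more than
    `tolerance` of their own average (a simplified, assumed criterion)."""
    if len(samples) < window:
        return False  # not enough data to judge yet
    recent = samples[-window:]
    average = sum(recent) / len(recent)
    if average <= 0:
        return False
    return (max(recent) - min(recent)) <= tolerance * average


if __name__ == "__main__":
    # Synthetic run: performance decays, then settles around 400 units.
    decaying = list(range(1000, 400, -1))
    settled = decaying + [400] * 300
    print(within_steady_state(decaying), within_steady_state(settled))
```

A fresh drive that is still sliding toward steady state fails the check, while the same run passes once the trailing window has flattened out.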

The first page of results will provide the 'key' to understanding and interpreting our new test methodology. In replicated environments, RAID 0 can be a compelling choice for bleeding edge performance. RAID 5 provides a layer of data security that protects from the loss of a drive. We are testing both RAID 0 and RAID 5 for this evaluation.

RAID 0 4K Random Read/Write

We precondition both 64K stripe RAID 0 arrays, with 8 and 4 x 200GB Intel DC S3700 SSDs, for 18,000 seconds, or five hours, receiving reports on several parameters of workload performance every second. We plot this data to illustrate the drives' descent into steady state.

This chart consists of 16,000 data points. The dots signify IOPS performance every second during the test. The lines through the data scatter represent the average performance during the test. This type of testing presents standard deviation and maximum/minimum I/O in a visual manner.

High-granularity testing gives our readers a good feel for latency distribution by showing IOPS at one-second intervals. Keep this in mind when viewing our test results below, where we also provide latency charts for further granularity.

This downward slope of performance happens very few times in the lifetime of the device, typically during the first few hours of use, and we present the precondition results only to confirm steady state convergence.

Each QD for every parameter tested includes 300 data points (five minutes of one-second reports) to illustrate the degree of performance variability. The line for each QD represents the average speed reported during the five-minute interval.

4K random speed measurements are an important metric when comparing drive performance, as the hardest type of file access for any storage solution to master is small-file random. One of the most sought-after performance specifications, 4K random performance is a heavily marketed figure.

The 8-drive array peaks at an average of 480,269 IOPS at QD256, while the 4-drive array reaches 248,753 IOPS. The 8-drive array falls shy of linear scaling, but averages an output of 60,000 IOPS per SSD.

Our read latency chart reveals that the 4-drive array's latency nearly doubles in the jump from QD128 (2.06ms) to QD256 (4.05ms). This doubling of latency reaps only a minor gain in IOPS, as shown in the IOPS chart. This indicates that limiting the array to QD128 would provide the best balance of IOPS and latency for a read-centric 4K workload in latency-sensitive environments.

Garbage collection routines are more pronounced in heavy write workloads. This leads to more variability in performance.

The Intel DC S3700 8-drive array produces an average of 252,177 IOPS at QD256, or roughly 31,522 per SSD. This is near-perfect write scaling, with the specifications for the 200GB SSDs at 32,000 IOPS each. The 4-drive array averages 130,703 IOPS at QD256, or 32,675 IOPS per SSD, slightly above the rated specifications.
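The per-SSD figures are simple division, and comparing them with the rated 32,000 IOPS gives a scaling efficiency for each array. A minimal sketch of that arithmetic, with function names of our own choosing:

```python
def per_drive(total_iops: float, drives: int) -> float:
    """Average IOPS contributed by each drive in the array."""
    return total_iops / drives


def scaling_efficiency(total_iops: float, drives: int, rated_iops: float) -> float:
    """Array output as a fraction of (drive count x rated per-drive IOPS)."""
    return total_iops / (drives * rated_iops)


if __name__ == "__main__":
    rated = 32_000  # rated 4K random write IOPS for the 200GB model
    for drives, total in ((8, 252_177), (4, 130_703)):
        print(f"{drives} drives: {per_drive(total, drives):,.0f} IOPS per SSD, "
              f"{scaling_efficiency(total, drives, rated):.1%} of rated")
```

By this measure the 8-drive array delivers about 98.5% of the combined rating and the 4-drive array about 102%.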

The write latency charts illustrate that the best balance of IOPS and latency falls at QD128 for the 8-drive array and at QD64 for the 4-drive array.

RAID 0 8K Random Read/Write

In this preconditioning chart, we note the telltale garbage collection cadence of the DC S3700. The garbage collection routines run on a strict schedule on each SSD, and amazingly, this schedule is precise enough that all of the drives are entering the lower performance state at the same time. With the array only able to go as fast as the slowest member, any one member falling out of sync would lower the performance of the entire array.

We noticed this in several of our workloads, and with the extended time frame of our tests, which spans several days with no reboots, one wonders how long it would take before the drives fall out of sync and the array falls into one steady line of lower performance. We will run extended tests in the future on this same array to satisfy our curiosity, and note the results in the comments below.

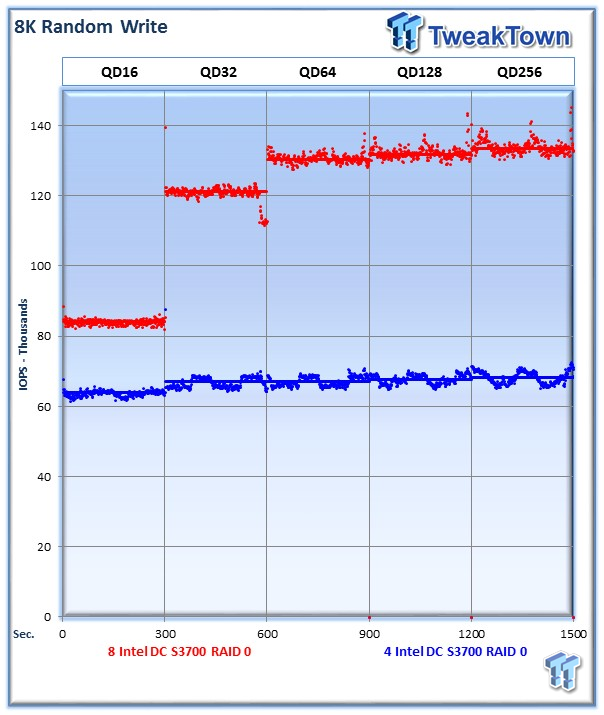

8K random read and write speed is a metric that is not tested for consumer use, but for enterprise environments this is an important aspect of performance. With several different workloads relying heavily upon 8K performance, we include this as a standard with each evaluation. Many of our Server Emulations below will also test 8K performance with various mixed read/write workloads.

The average 8K random read speed of the 8-drive array comes in at 304,667 IOPS at QD256, and the 4-drive array peaks at QD256 with 155,713 IOPS.

The 8K read latency results indicate that the best performance in latency-sensitive applications for the 8-drive array falls at QD128, and the 4-drive array again performs best at QD64.

The average 8K random write speed of the 8-drive array is 133,529 IOPS at QD256, and 68,026 IOPS for the 4-drive array.

With 8K random writes, the larger array performs best at QD64, while the smaller array excels at very low QD workloads in a pure write environment.

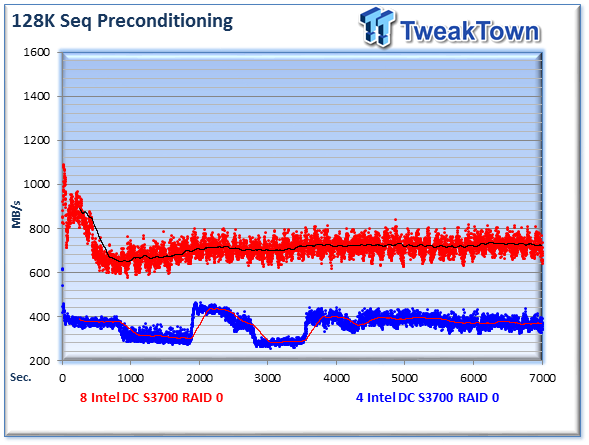

RAID 0 128K Sequential Read/Write

One interesting caveat of RAID testing is the performance impact of different RAID settings. This is very clear with read caching enabled and disabled on the ASR-72405 RAID controller. We observe that the top precondition chart, with read caching disabled, performs with a typical I/O pattern, though the scaling is not very impressive with direct writes to the SSDs.

Enabling read caching provides a huge boost in performance, as shown in the second graph. It also brings out an interesting lattice-like pattern of performance. In our testing for the article we are focusing on the performance of the Intel SSDs, so we conduct all testing, except this small example, without read caching. We will take a deeper look at the varying performance with different caching settings in future RAID controller articles.

The 128K sequential read speed reflects the maximum sequential throughput of the SSD using a realistic file size encountered in an enterprise scenario. The large array tops out just shy of 3,000 MB/s, while the smaller array peaks at 1,675 MB/s.

The 128K read latency results indicate that the smaller array is more nimble at much lower Queue Depths, where its latency is low and any additional load does not yield a tangible increase in bandwidth. The larger array reaches the best IOPS/latency ratio at QD64.

For sequential writes, the 8-drive array peaks at 1,678 MB/s at QD256, while the 4-drive array provides 857 MB/s at the same Queue Depth. While this is near-linear scaling, there is quite a bit of speed left on the table, with each drive in the larger array only providing about 209 MB/s against a rating of up to 365 MB/s.

RAID 0 Database/OLTP and Webserver

RAID 0 Database/OLTP

This test emulates Database and On-Line Transaction Processing (OLTP) workloads. OLTP is in essence the processing of transactions such as credit cards and high frequency trading in the financial sector. Enterprise SSDs are uniquely well suited for the financial sector with their low latency and high random workload performance. Databases are the bread and butter of many enterprise deployments. These are demanding 8K random workloads with a 66% read and 33% write distribution that can bring even the highest performing solutions down to earth.

The larger RAID 0 array tops out at 190,885 IOPS, and the smaller reaches speeds of 87,889 IOPS.

Webserver

The Webserver profile is a read-only test with a wide range of file sizes. Web servers are responsible for generating content for users to view over the internet, much like the very page you are reading. The speed of the underlying storage system has a massive impact on the speed and responsiveness of the server that is hosting the website, and thus the end-user experience.

The 8-drive array reaches a speed of 169,537 IOPS, while the smaller array tops out at 87,889 IOPS at QD256.

Tailoring the workload for the environment would leave a few choices with this type of file access, with the larger array offering tradeoffs between IOPS or latency, though the smaller array clearly scales best at QD64.

RAID 5 4K Random Read/Write

We precondition both 64K stripe RAID 5 arrays, with 8 and 4 x 200GB Intel DC S3700 SSDs, for 18,000 seconds, or five hours, receiving reports on several parameters of workload performance every second. We plot this data to illustrate the drives' descent into steady state.

4K random speed measurements are an important metric when comparing drive performance, as the hardest type of file access for any storage solution to master is small-file random. One of the most sought-after performance specifications, 4K random performance is a heavily marketed figure.

The 8-drive array peaks at an average of 479,810 IOPS at QD256, while the 4-drive array reaches 249,151 IOPS.

The sweet spot for the 4-drive array occurs at QD128, while the larger array performs best with QD256.

Garbage collection routines are more pronounced in heavy write workloads. This leads to more variability in performance.

The Intel DC S3700 8-drive array exhibits performance that mirrors that of the smaller array, with performance peaking at QD64 and falling at higher workloads. This limitation is likely the result of the RAID 5 algorithms. Performance peaks at QD64 with 167,255 IOPS for the large array, and 24,109 for the 4-drive array.

The write latency gets considerably worse for both arrays after we cross the QD64 threshold. There is no doubt that QD64 provides optimum results in these configurations.

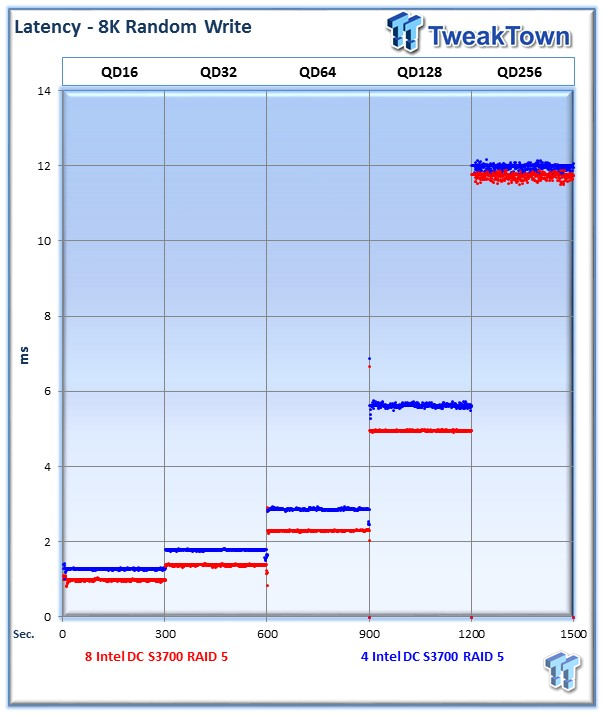

RAID 5 8K Random Read/Write

8K random read and write speed is a metric that is not tested for consumer use, but for enterprise environments this is an important aspect of performance. With several different workloads relying heavily upon 8K performance, we include this as a standard with each evaluation. Many of our Server Emulations below will also test 8K performance with various mixed read/write workloads.

The average 8K random read speed of the 8-drive array comes in at 304,344 IOPS at QD256, while the 4-drive array peaks at 155,960 IOPS.

The 8K random write results from the RAID 5 arrays closely mirror the results we received with the 4K random write workloads, with the peak occurring at QD64 for the 8-drive array and QD128 for the 4-drive array.

The latency jumps quickly for both arrays after QD64.

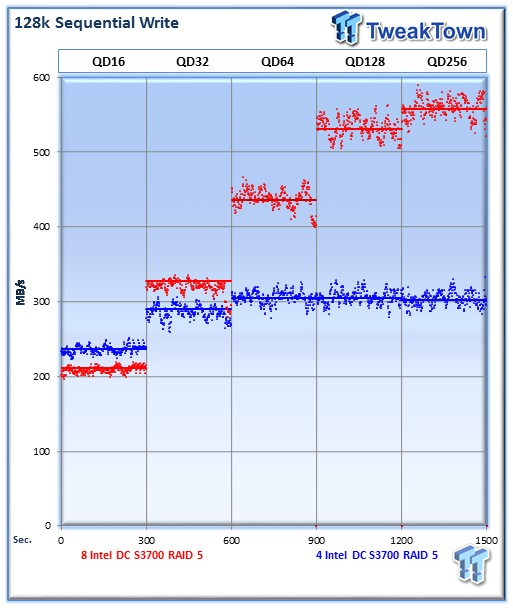

RAID 5 128K Sequential Read/Write

The 128K read sequential speeds reflect the maximum sequential throughput of the SSD using a realistic file size encountered in an enterprise scenario. The 8-drive array reaches a sequential read speed of 3,000 MB/s at QD128, while the 4-drive array peaks at 1,704 MB/s.

128K read latency reaches above 18ms under a heavy workload for the 8-drive array, and tops out at 10.75ms for the 4-drive array.

Without read caching enabled, the 128K sequential write performance is a bit disappointing, topping out at only 539 MB/s for the 8-drive array and 300 MB/s for the 4-drive array.

RAID 5 Database/OLTP and Webserver

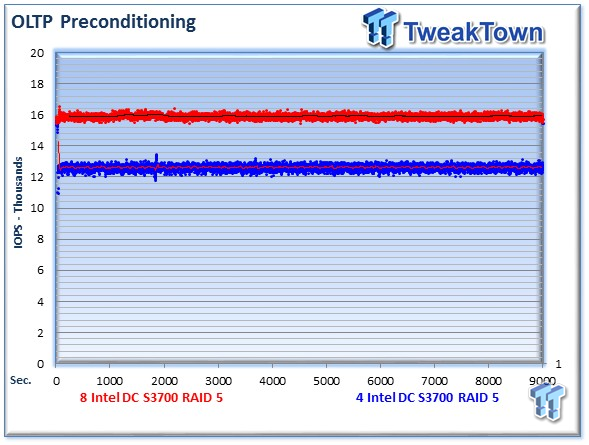

RAID 5 Database/OLTP

This test emulates Database and On-Line Transaction Processing (OLTP) workloads. OLTP is in essence the processing of transactions such as credit cards and high frequency trading in the financial sector. Enterprise SSDs are uniquely well suited for the financial sector with their low latency and high random workload performance. Databases are the bread and butter of many enterprise deployments. These are demanding 8K random workloads with a 66% read and 33% write distribution that can bring even the highest performing solutions down to earth.

The 8-drive array tops out at 63,622 IOPS at QD128, while the smaller 4-drive array tops out slightly lower at 59,303 IOPS.

Webserver

The Webserver profile is a read-only test with a wide range of file sizes. Web servers are responsible for generating content for users to view over the internet, much like the very page you are reading. The speed of the underlying storage system has a massive impact on the speed and responsiveness of the server that is hosting the website, and thus the end-user experience.

The 8-drive Intel DC S3700 array tops out at an impressive 167,769 IOPS at QD256, while the 4-drive array reaches 88,013 IOPS.

Final Thoughts

Large SSD arrays are pressed into service in some bleeding edge applications, but the majority of deployments center around small SSD arrays utilized to accelerate existing infrastructure. SSDs are one of the premium tiers of storage in today's datacenter, and the higher cost per GB necessitates intelligent use of the available capacity through caching and tiering models.

Flash products can be used as dedicated storage volumes, but to maximize the benefits of SSDs, many deployments consist of small SSD arrays used as front-ends for large HDD storage pools and SANs. This can be an easy solution to accelerate the underlying storage and increase CPU utilization in the host system. Deploying the SSDs can be a simple slip-in solution, especially when used in tandem with integrated caching solutions from the leading RAID manufacturers.

The flexibility of smaller arrays also allows their utilization as a replacement for caching data in RAM, providing a higher level of data security due to the non-volatile nature of NAND. All of these deployment models require a solid platform that scales well, providing the best return on investment for administrators.

The Intel DC S3700 series of SSDs came with a renewed focus on latency consistency in enterprise SSDs. While many lower-class SSDs can suffer from significant performance variability, this can be an acceptable trade-off for cost-sensitive applications that do not require maximum performance or RAID usage. For use in RAID environments, these lower quality SSDs can result in significant loss of available performance due to poor scaling.

For those in the market for an upper-tier SSD with superb latency performance, the Intel DC S3700 is a good fit. For the price, it delivers superb latency consistency that equates to a boost in real-life applications. The unique architecture of the LBA mapping tables allows the Intel SSD to operate in a very efficient manner, and the regular cadence of the garbage collection routines keeps the SSD within a very tightly defined performance envelope. Surprisingly, these remarkably precise garbage collection routines are even visible when used with eight SSDs in a RAID configuration.

Providing a consistent level of performance allows the Intel DC S3700 Series to provide excellent scaling in RAID configurations. In write-intensive applications, the importance of good scaling is especially pertinent. SSDs with significant variability do not scale well in RAID configurations, especially in heavy write workloads. Poor scaling brings the point of diminishing returns forward, a frustrating experience for users making significant investments.

The Intel DC S3700 performed well in our testing, and in write-intensive and RAID environments will prove to be a good fit for those in need of a simple means of workload acceleration.
