Introduction

It is funny how many things in the computer industry start out as rumours, and how often people presume there is no truth behind them. RyderMark had a rougher start than most: it became the subject of an open argument between the Inquirer and DailyTech. Now that it is finally about to launch, we will see who was right and who was wrong.

But what is RyderMark, and what makes it different from, say, 3DMark06? Let us try to break things down. We will start with what it is not, and that is a DirectX 10 benchmark, although a DirectX 10 version should be coming out at a later stage. What it is, on the other hand, is rather interesting: Candella Software, the company behind RyderMark, claims that it is based on a real game engine. Now that sounds familiar. Does anyone want to give the guys over at Futuremark a call and see what games use their engine?

Anyhow, what we have got here is a very advanced benchmark that allows you to test a whole range of new features that have so far not been available. It can test features such as Parallax Occlusion Mapping, a very advanced form of Bump Mapping, and it supports 32- and 64-bit HDR Lighting with Anti-Aliasing. Furthermore, it does Shader Model 2.0 and 3.0, Soft Shadows, Normal Mapping, Soft Particles, Full Scene Motion Blur, Depth-of-Field, Heat Haze, Volumetric Fire and Realistic Water Physics, although we have to say that some of those features do not look that impressive, at least not on the graphics card we tested with. If you have played Supreme Commander you will think the water and fire are poor, but we guess the engine used is already getting outdated. The smoke effects did not look that amazing either.

Nonetheless, it does have a vast range of features that can be enabled and disabled. There is also support for stereo or 5.1-channel sound, but you cannot turn it off, which we found strange, since it means that you are always going to lose some performance to the audio processing. It is also one of the first benchmarks that supports multi-threading. Intel already has a benchmark that supports dual- or quad-core CPUs, but this is the first independent benchmark that does so.

For this preview, we did not have the chance to test RyderMark on a quad-core CPU, but hopefully there will be a performance difference here compared to a dual-core. Let us move on and see exactly what we have here!

Benchmark Results

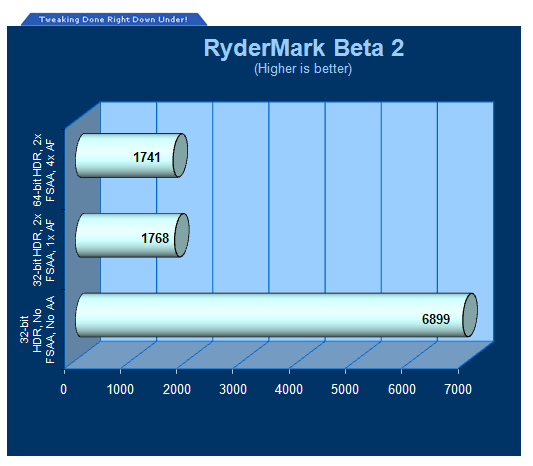

The system used for this preview consists of an Intel Core 2 Duo E6700 CPU running on a 1,333MHz quad-pumped bus, an ASUS P5B Deluxe motherboard, 2GB of DDR2 RAM clocked at 800MHz, and a now rather old Radeon X1800 Pro graphics card. This card works pretty well in most games, but once RyderMark was fired up the card seemed like something out of the history books. The benchmark will work under Windows Vista, but it is only certified for Windows XP since "it does not work well in Vista due to poor GPU drivers."

There are three settings depending on how much graphics memory you have: 128MB, 256MB, and 512MB or more. This is due to the size of the textures used, as a graphics card that does not have sufficient graphics memory will not be able to use the higher resolution textures. Interestingly, we had some issues with tearing and missing textures at higher resolutions, even though the 256MB option was selected. It seems like Candella Software still has some work to do before the final release of RyderMark.
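To illustrate how the three memory settings map onto a card, here is a minimal sketch in Python. It is not taken from RyderMark itself: the function name `pick_texture_tier` and the constant `TEXTURE_TIERS_MB` are hypothetical, and the only facts used are the three tiers mentioned above.

```python
# Hypothetical helper, not part of RyderMark: it only encodes the three
# graphics memory settings described in this preview.
TEXTURE_TIERS_MB = (128, 256, 512)


def pick_texture_tier(vram_mb: int) -> int:
    """Return the largest texture setting (in MB) that fits the card's memory."""
    if vram_mb < TEXTURE_TIERS_MB[0]:
        raise ValueError("card has less than 128MB of graphics memory")
    # Cards with more than 512MB fall into the top "512MB or more" setting.
    return max(tier for tier in TEXTURE_TIERS_MB if tier <= vram_mb)


if __name__ == "__main__":
    for vram in (128, 256, 768):
        print(f"{vram}MB card -> {pick_texture_tier(vram)}MB texture setting")
```

Picking a setting above what the card actually has would mean the higher resolution textures do not fit in graphics memory, which is the situation the three options are meant to avoid.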

One interesting thing is that there seems to be a huge difference between 32-bit and 64-bit HDR lighting, as the 64-bit option looks a lot more vivid than the 32-bit setting. Enabling features such as motion blur, shadowing and dynamic reflections really killed the performance, as you will see in the graph. The first test is with no Anti-Aliasing or Anisotropic Filtering, and with those three features also disabled, while in the other two benchmark results those settings are enabled.

The two screenshots below show the difference between 32-bit and 64-bit HDR. The full-resolution screenshots are at 1680x1050 for those who want to take a closer look at the details.

Final Thoughts

It is hard to draw any conclusions on RyderMark at this stage. It is an interesting benchmark in terms of what it can do, but it is not visually impressive, and some of the effects such as the water, fire and smoke are disappointing. The surrounding landscape, which seems to be set in Venice, is quite attractive, but the boats do not look that great, and the one that explodes towards the end of the demo breaks up in a way that looks very dated, even compared to a year-old title with minimal physics usage. There is also the matter of how the boats travel in the water, and the very poor looking foam, which looks more like a bunch of white pixels.

Now, we are not knocking the people at Candella Software, as it is not easy to program a 3D benchmark and get everything right the first time around. However, the buzz around RyderMark seems to have given it more credit than might be due. We will be looking into RyderMark again once the final version appears in about two weeks' time. For now, RyderMark is an interesting way of testing specific features of a graphics card. It is not going to replace any current benchmarks, but you will probably start to see more and more of it appearing in our VGA reviews.

From what you have seen so far, leave your opinion below or in our forums!
